AI Models Trained on Sex-Biased Data Perform Worse at Diagnosing Disease

A recent study published in the journal PNAS by researchers from Argentina found that sex-skewed training data leads to worse model performance when diagnosing diseases and other medical issues. As reported by STAT News, the researchers trained models on data in which female patients were notably underrepresented or excluded altogether, and found that the models performed substantially worse when diagnosing female patients. The same held true when male patients were excluded or underrepresented.

Over the past half-decade, as AI models and machine learning have become increasingly ubiquitous, more attention has been paid to the problems of biased datasets and the biased machine learning models that result from them. Data bias in machine learning can lead to awkward, socially damaging, and exclusionary AI applications, but when it comes to medical applications, lives can be on the line. Despite awareness of the problem, few studies have attempted to quantify just how damaging biased datasets can be. The study carried out by the research team found that data bias could have more extreme effects than many experts previously estimated.

One of the most popular uses for AI in medical contexts over the past few years has been diagnosing patients from medical images. The research team analyzed models used to detect the presence of various medical conditions, such as pneumonia, cardiomegaly, or hernias, from X-rays. The team studied three open-source model architectures: Inception-v3, ResNet, and DenseNet-121. The models were trained on chest X-rays pulled from two open-source datasets originating from Stanford University and the National Institutes of Health. Although the datasets themselves are fairly balanced when it comes to sex representation, the researchers artificially skewed the data by breaking it into subsets with a sex imbalance.

The research team created five different training datasets, each composed of different ratios of male/female patient scans. The five training sets were broken down as follows:

- All images were of male patients

- All images were of female patients

- 25% male patients and 75% female patients

- 75% male patients and 25% female patients

- Half male patients and half female patients
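
A split like the one listed above could be built roughly as follows. This is a minimal sketch, not the researchers' code: the `make_skewed_subset` helper, the `sex` field, the toy records, and the fixed subset size of 400 are all assumptions made for illustration.

```python
import random


def make_skewed_subset(records, female_fraction, size, seed=0):
    """Sample `size` records with the requested share of female patients.

    `records` is a list of dicts with a hypothetical "sex" key ("F" or "M").
    """
    if not 0.0 <= female_fraction <= 1.0:
        raise ValueError("female_fraction must be between 0 and 1")

    rng = random.Random(seed)  # seeded so the split is reproducible
    females = [r for r in records if r["sex"] == "F"]
    males = [r for r in records if r["sex"] == "M"]

    n_female = round(size * female_fraction)
    n_male = size - n_female
    if n_female > len(females) or n_male > len(males):
        raise ValueError("not enough records of one sex for this split")

    subset = rng.sample(females, n_female) + rng.sample(males, n_male)
    rng.shuffle(subset)
    return subset


# Toy stand-in for a roughly balanced collection of X-ray metadata.
records = [{"id": i, "sex": "F" if i % 2 == 0 else "M"} for i in range(2000)]

# Five training sets: all male, 25% female, balanced, 75% female, all female.
female_fractions = [0.0, 0.25, 0.5, 0.75, 1.0]
training_sets = {
    f: make_skewed_subset(records, f, size=400) for f in female_fractions
}
```

Holding the subset size constant in this sketch means that only the male/female ratio changes from one training set to the next, so a difference in performance cannot simply be attributed to one set being larger.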

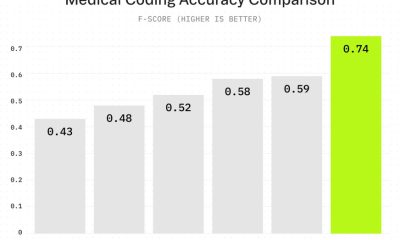

After a model was trained on one of the subsets, it was tested on a collection of scans from both male and female patients. A notable trend held across the various medical conditions: the accuracy of the models was much worse when the training data was significantly sex-skewed. Interestingly, when one sex was overrepresented in the training data, that sex didn’t seem to benefit from the overrepresentation. Regardless of which sex the training data was skewed toward, the model didn’t perform better on that sex than it did when trained on an inclusive dataset.
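
Comparing performance per sex on a mixed test set comes down to grouping predictions by the patient's sex before scoring them. The sketch below illustrates the idea with plain accuracy; the `accuracy_by_sex` helper and the labels, predictions, and sex values are made up for illustration and are not results from the study.

```python
from collections import defaultdict


def accuracy_by_sex(labels, predictions, sexes):
    """Return the fraction of correct predictions for each sex."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for label, prediction, sex in zip(labels, predictions, sexes):
        total[sex] += 1
        correct[sex] += int(label == prediction)
    return {sex: correct[sex] / total[sex] for sex in total}


# Hypothetical test set: 1 = condition present, 0 = condition absent.
labels = [1, 0, 1, 1, 0, 1, 0, 0]
predictions = [1, 0, 0, 1, 0, 0, 1, 0]
sexes = ["M", "M", "M", "M", "F", "F", "F", "F"]

per_sex = accuracy_by_sex(labels, predictions, sexes)
print(per_sex)
```

Repeating this for a model trained on each subset would show how the score for each sex moves as the training ratio shifts.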

The senior author of the study, Enzo Ferrante, was quoted by STAT News as explaining that the study underlines how important it is for training data to be diverse and representative of all the populations in which you intend to test the model.

It isn’t entirely clear why models trained on one sex tend to perform worse when applied to the other. Some of the discrepancies might be due to physiological differences, but various social and cultural factors could also account for some of the difference. For instance, women may tend to receive X-rays at a different stage of disease progression than men. If so, this could affect the features found within the training images, and therefore the patterns learned by the model. It would also make it much more difficult for researchers to de-bias their datasets, as the bias would be baked into the dataset through the mechanisms of data collection.

Even researchers who pay close attention to data diversity sometimes have no choice but to work with data that is skewed or biased. Disparities in how often a medical condition is diagnosed in each sex will often lead to imbalanced data. For example, data on breast cancer patients is almost entirely collected from women. Similarly, autism manifests differently in women and men, and as a result, the condition is diagnosed at a much higher rate in boys than in girls.

Nonetheless, it’s extremely important for researchers to control for skewed data and data bias in any way that they can. To that end, future studies will help researchers quantify the impact of biased data.