
AI Auditing: Ensuring Performance and Accuracy in Generative Models

In recent years, Artificial Intelligence (AI) has risen at an unprecedented pace, transforming numerous sectors and reshaping everyday life. Among the most transformative advancements are generative models: AI systems capable of creating text, images, music, and more with surprising creativity and accuracy. Generative models such as OpenAI’s GPT-4, along with earlier language-understanding models such as Google’s BERT, are not just impressive technologies; they drive innovation and shape the future of how humans and machines work together.

However, as generative models become more prominent, the complexities and responsibilities of their use grow. Generating human-like content brings significant ethical, legal, and practical challenges. Ensuring these models operate accurately, fairly, and responsibly is essential. This is where AI auditing comes in, acting as a critical safeguard to ensure that generative models meet high standards of performance and ethics.

The Need for AI Auditing

AI auditing is essential for ensuring AI systems function correctly and adhere to ethical standards. This matters most in high-stakes areas like healthcare, finance, and law, where errors can have serious consequences. For example, AI models used in medical diagnoses must be thoroughly audited to prevent misdiagnosis and ensure patient safety.

Another critical aspect of AI auditing is bias mitigation. AI models can perpetuate biases from their training data, leading to unfair outcomes. This is particularly concerning in hiring, lending, and law enforcement, where biased decisions can aggravate social inequalities. Thorough auditing helps identify and reduce these biases, promoting fairness and equity.

Ethical considerations are also central to AI auditing. AI systems must avoid generating harmful or misleading content, protect user privacy, and prevent unintended harm. Auditing ensures these standards are maintained, safeguarding users and society. By embedding ethical principles into auditing, organizations can ensure their AI systems align with societal values and norms.

Furthermore, regulatory compliance is increasingly important as new AI laws and regulations emerge. For example, the EU’s AI Act sets stringent requirements for deploying AI systems, particularly high-risk ones. Therefore, organizations must audit their AI systems to comply with these legal requirements, avoid penalties, and maintain their reputation. AI auditing provides a structured approach to achieve and demonstrate compliance, helping organizations stay ahead of regulatory changes, mitigate legal risks, and promote a culture of accountability and transparency.

Challenges in AI Auditing

Auditing generative models poses several challenges due to their complexity and the dynamic nature of their outputs. One significant challenge is the sheer volume and complexity of the data on which these models are trained. For example, GPT-3’s training mix included roughly 570GB of filtered Common Crawl text alongside other sources, and OpenAI has not published comparable details for GPT-4, making it difficult to track and understand every aspect of the data. Auditors need sophisticated tools and methodologies to manage this complexity effectively.

Additionally, the dynamic nature of AI models poses another challenge: deployed models are periodically retrained, fine-tuned, or otherwise updated, so their outputs can change over time. A single audit is therefore not enough, and ongoing scrutiny is needed to keep audit results valid. A model may behave differently after an update or as user inputs shift, which requires auditors to be vigilant and proactive.
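Changes in output behavior over time can be checked with a simple statistical comparison between two audit windows. The sketch below is illustrative only: it assumes an auditor already counts how many sampled outputs were flagged (for example as harmful or inaccurate) in a baseline period and in the current period, and it uses a standard two-proportion z-test. The function name, the counts, and the 1.96 cutoff (roughly a 5% two-sided significance level) are assumptions chosen for the example, not part of any particular auditing tool.

```python
import math

def flag_rate_drift(flagged_base: int, total_base: int,
                    flagged_now: int, total_now: int,
                    z_cutoff: float = 1.96) -> dict:
    """Two-proportion z-test on the share of flagged outputs in two windows."""
    p_base = flagged_base / total_base
    p_now = flagged_now / total_now
    # Pooled proportion under the null hypothesis that both rates are equal.
    pooled = (flagged_base + flagged_now) / (total_base + total_now)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_base + 1 / total_now))
    z = (p_now - p_base) / se if se > 0 else 0.0
    return {"baseline_rate": p_base, "current_rate": p_now,
            "z": z, "drift": abs(z) > z_cutoff}

# Hypothetical counts: 20 of 1000 outputs flagged last quarter, 45 of 1000 now.
drift_report = flag_rate_drift(20, 1000, 45, 1000)
print(drift_report)
```

A flagged drift is a prompt for a closer look, not a verdict; the sampled prompts in both windows need to be comparable for the test to mean anything.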

The interpretability of these models is also a significant hurdle. Many AI models, particularly deep learning models, are often considered “black boxes” due to their complexity, making it difficult for auditors to understand how specific outputs are generated. Although tools like SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) have been developed to improve interpretability, the field is still evolving, and explaining the behavior of large generative models remains difficult for auditors.

Lastly, comprehensive AI auditing is resource-intensive, requiring significant computational power, skilled personnel, and time. This is particularly challenging for smaller organizations, because auditing complex models with billions of parameters demands infrastructure and expertise they may lack. Thorough, effective audits are crucial, but their cost remains a considerable barrier for many.

Strategies for Effective AI Auditing

To address the challenges of ensuring the performance and accuracy of generative models, several strategies can be employed:

Regular Monitoring and Testing

Continuous monitoring and testing of AI models are necessary. This involves regularly evaluating outputs for accuracy, relevance, and ethical adherence. Automated tools can streamline this process, allowing real-time audits and timely interventions.
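A recurring audit of this kind can be as simple as replaying a fixed set of prompts and checking each output against expectations. The following is a minimal sketch under stated assumptions: `generate` stands for any callable that maps a prompt to model text, `AuditCase`, `run_audit`, and `fake_model` are hypothetical names invented for this example, and substring checks stand in for whatever accuracy and policy checks a real audit would use.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class AuditCase:
    prompt: str
    must_contain: List[str] = field(default_factory=list)      # expected facts
    must_not_contain: List[str] = field(default_factory=list)  # disallowed terms

def run_audit(generate: Callable[[str], str], cases: List[AuditCase],
              min_pass_rate: float = 0.9) -> dict:
    """Run every case through the model and summarize failures."""
    failures = []
    for case in cases:
        output = generate(case.prompt).lower()
        missing = [t for t in case.must_contain if t.lower() not in output]
        banned = [t for t in case.must_not_contain if t.lower() in output]
        if missing or banned:
            failures.append({"prompt": case.prompt,
                             "missing": missing, "banned": banned})
    pass_rate = 1 - len(failures) / len(cases) if cases else 1.0
    return {"pass_rate": pass_rate,
            "alert": pass_rate < min_pass_rate,
            "failures": failures}

# Stand-in for a real model call (an assumption for this example).
def fake_model(prompt: str) -> str:
    canned = {
        "What is the capital of France?": "The capital of France is Paris.",
        "Summarize our refund policy.": "Refunds are guaranteed forever.",
    }
    return canned.get(prompt, "")

audit_cases = [
    AuditCase("What is the capital of France?", must_contain=["Paris"]),
    AuditCase("Summarize our refund policy.",
              must_contain=["30 days"], must_not_contain=["guaranteed"]),
]
audit_report = run_audit(fake_model, audit_cases)
print(audit_report["pass_rate"], audit_report["alert"])
```

Scheduling a run like this after every model update, and alerting when the pass rate drops, is one way to turn periodic review into continuous monitoring.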

Transparency and Explainability

Enhancing transparency and explainability is essential. Techniques such as model interpretability frameworks and Explainable AI (XAI) help auditors understand decision-making processes and identify potential issues. For instance, Google’s “What-If Tool” allows users to explore model behavior interactively, facilitating better understanding and auditing.
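To give a flavor of how perturbation-based explanations work, here is a small leave-one-out (occlusion) sketch. It is not SHAP, LIME, or the What-If Tool, and it does not use their APIs; it only illustrates the shared idea of changing the input and watching the output move. The scoring function `toy_toxicity_score` and its word weights are invented for the example and stand in for any black-box model score.

```python
from typing import Callable, List, Tuple

def occlusion_attribution(score: Callable[[str], float],
                          text: str) -> List[Tuple[str, float]]:
    """Attribute a score to each word by removing it and measuring the drop."""
    tokens = text.split()
    baseline = score(text)
    attributions = []
    for i, token in enumerate(tokens):
        reduced = " ".join(tokens[:i] + tokens[i + 1:])
        # Positive value: the word pushed the score up.
        attributions.append((token, baseline - score(reduced)))
    return attributions

# Hypothetical black-box score: a toy keyword-weighted toxicity estimate.
def toy_toxicity_score(text: str) -> float:
    weights = {"terrible": 0.6, "awful": 0.3}
    return sum(weights.get(word.lower(), 0.0) for word in text.split())

word_scores = occlusion_attribution(toy_toxicity_score, "The service was terrible")
top_word = max(word_scores, key=lambda pair: pair[1])
print(word_scores)
```

Leave-one-out ignores interactions between words, which is one reason auditors typically reach for more principled methods such as Shapley-value approximations on real models.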

Bias Detection and Mitigation

Implementing robust bias detection and mitigation techniques is vital. This includes using diverse training datasets, employing fairness-aware algorithms, and regularly assessing models for biases. Tools like IBM’s AI Fairness 360 provide comprehensive metrics and algorithms to detect and mitigate bias.
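Two of the most common group-fairness metrics are easy to compute by hand, which helps when interpreting what a toolkit reports. The sketch below uses plain Python rather than the AI Fairness 360 API; the function names, the group labels "A" and "B", and the decision data are all made up for illustration. Statistical parity difference is the gap in favorable-outcome rates between groups, and disparate impact is their ratio; a ratio below 0.8 is often treated as a warning sign under the "four-fifths" rule of thumb.

```python
from collections import defaultdict
from typing import Dict, Sequence

def selection_rates(decisions: Sequence[int],
                    groups: Sequence[str]) -> Dict[str, float]:
    """Share of favorable decisions (1) within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}

def fairness_report(decisions: Sequence[int], groups: Sequence[str],
                    privileged: str, unprivileged: str) -> dict:
    rates = selection_rates(decisions, groups)
    p, u = rates[privileged], rates[unprivileged]
    return {
        "statistical_parity_difference": u - p,  # 0 means equal rates
        "disparate_impact": u / p if p > 0 else float("inf"),  # 1 means equal
    }

# Hypothetical loan decisions: group A approved 3 of 4, group B approved 1 of 4.
loan_decisions = [1, 1, 1, 0, 1, 0, 0, 0]
loan_groups = ["A"] * 4 + ["B"] * 4
bias_report = fairness_report(loan_decisions, loan_groups,
                              privileged="A", unprivileged="B")
print(bias_report)
```

These metrics only compare outcome rates; they say nothing about whether the groups differ in legitimate, decision-relevant ways, so they are a starting point for an audit rather than its conclusion.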

Human-in-the-Loop

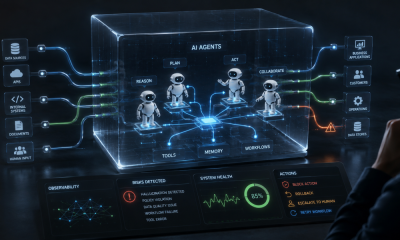

Incorporating human oversight in AI development and auditing can catch issues automated systems might miss. This involves human experts reviewing and validating AI outputs. In high-stakes environments, human oversight is crucial to ensure trust and reliability.
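In practice, human oversight is often implemented as a routing rule: outputs that are uncertain or high-stakes go to a reviewer, and the rest pass through. The sketch below shows one possible shape for such a rule. The `confidence` field, the 0.8 threshold, and the `ModelOutput` and `ReviewRouter` names are assumptions for the example; real systems would derive confidence and risk labels from their own signals.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class ModelOutput:
    text: str
    confidence: float          # hypothetical score in [0, 1]
    high_stakes: bool = False  # e.g. medical or legal content

@dataclass
class ReviewRouter:
    threshold: float = 0.8
    review_queue: List[ModelOutput] = field(default_factory=list)
    auto_approved: List[ModelOutput] = field(default_factory=list)

    def route(self, output: ModelOutput) -> str:
        """Send risky or uncertain outputs to humans, approve the rest."""
        if output.high_stakes or output.confidence < self.threshold:
            self.review_queue.append(output)
            return "human_review"
        self.auto_approved.append(output)
        return "auto_approved"

router = ReviewRouter()
routing = [
    router.route(ModelOutput("Store hours are 9 to 5.", confidence=0.95)),
    router.route(ModelOutput("Take 400mg twice daily.", confidence=0.97,
                             high_stakes=True)),
    router.route(ModelOutput("The contract likely expires soon.",
                             confidence=0.55)),
]
print(routing)
```

Routing high-stakes outputs to review regardless of confidence reflects the point that a model can be confidently wrong exactly where errors cost the most.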

Ethical Frameworks and Guidelines

Adopting ethical frameworks, such as the Ethics Guidelines for Trustworthy AI published by the European Commission’s High-Level Expert Group on AI, helps ensure AI systems adhere to ethical standards. Organizations should integrate clear ethical guidelines into the AI development and auditing process. Ethical AI certifications, like those from IEEE, can serve as benchmarks.

Real-World Examples

Several real-world examples highlight the importance and effectiveness of AI auditing. OpenAI’s GPT-3 model undergoes rigorous auditing to address misinformation and bias, with continuous monitoring, human reviewers, and usage guidelines. This practice extends to GPT-4, where OpenAI reports spending about six months improving the model’s safety and alignment after initial training. Advanced monitoring systems and Reinforcement Learning from Human Feedback (RLHF) are used to refine model behavior and reduce harmful outputs.

Google has developed several tools to enhance the transparency and interpretability of models such as BERT. One key tool is the Learning Interpretability Tool (LIT), a visual, interactive platform designed to help researchers and practitioners understand, visualize, and debug machine learning models. LIT supports text, image, and tabular data, making it versatile for various types of analysis. It includes features like salience maps, attention visualization, metrics calculations, and counterfactual generation to help auditors understand model behavior and identify potential biases.

AI models play a critical role in diagnostics and treatment recommendations in the healthcare sector. For example, IBM Watson Health has implemented rigorous auditing processes for its AI systems to ensure accuracy and reliability, thereby reducing the risk of incorrect diagnoses and treatment plans. Watson for Oncology is continuously audited to ensure it provides evidence-based treatment recommendations validated by medical experts.

The Bottom Line

AI auditing is essential for ensuring the performance and accuracy of generative models. The need for robust auditing practices will only grow as these models become more integrated into various aspects of society. By addressing the challenges and employing effective strategies, organizations can realize the full potential of generative models while mitigating risks and adhering to ethical standards.

The future of AI auditing holds promise, with advancements that will further enhance the reliability and trustworthiness of AI systems. Through continuous innovation and collaboration, we can build a future where AI serves humanity responsibly and ethically.