
Popular COVIDx Dataset Criticized by UK Researchers

A research consortium from the UK has leveled criticism at the extent of scientific confidence vested in open source datasets used for computer vision-based analysis of COVID-19 patients’ chest X-rays, centering on the popular open source dataset COVIDx.

The researchers, having tested COVIDx in various AI training models, claim that it is ‘not representative of the real clinical problem’, that the results obtained by using it are ‘inflated’, and that the models ‘do not generalise well’ to real world data.

The authors also note the inconsistency of the contributed data that makes up COVIDx: the original images come in a variety of resolutions, which the deep learning workflow automatically resizes to the consistent dimensions necessary for training. They observe that this process can introduce deceptive artifacts that stem from the image resizing algorithm rather than from the clinical content of the data.

The paper is titled ‘The pitfalls of using open data to develop deep learning solutions for COVID-19 detection in chest X-rays’, and is a collaboration between the Centre for Computational Imaging and Simulation Technologies in Biomedicine (CISTIB) at the University of Leeds and researchers from five other organizations in the same city, including the Leeds Teaching Hospitals NHS Trust.

The research details, among other negative practices, the ‘misuse of labels’ in the COVIDx dataset, as well as a ‘high risk of bias and confounding’. The researchers’ own experiments in putting the dataset through its paces across three viable deep learning models prompted them to conclude that ‘the exceptional performance reported widely across the problem domain is inflated, that model performance results are misrepresented, and that models do not generalise well to clinically-realistic data.’

Five Contrasting Datasets in One

The report* notes that the majority of current AI-based methodologies in this field depend on a ‘heterogeneous’ assortment of data from disparate open source repositories, observing that five datasets with notably different characteristics have been agglomerated into the COVIDx dataset despite (in the researchers’ consideration) inadequate parity of data quality and type.

The COVIDx dataset was released in May 2020 as a consortium effort led by the Department of Systems Design Engineering at the University of Waterloo in Canada, with the data made available as part of the COVID-Net Open Source Initiative.

The five collections that constitute COVIDx are: the COVID-19 Image Data Collection (an open sourced set from Montreal researchers); the COVID-19 Chest X-ray Dataset initiative; the Actualmed COVID-19 Chest X-ray dataset; the COVID-19 Radiography Database; and the RSNA Pneumonia Detection Challenge dataset, one of the many pre-COVID sets that have been pressed into service for the pandemic crisis.

(RICORD – see below – has since been added to COVIDx. Because it was added after the models of interest in the study were developed, it was excluded from the COVIDx test data; in any case, its inclusion would only have made COVIDx more heterogeneous, which is the central complaint of the study's authors.)

The researchers contend that COVIDx is the ‘largest and most widely used’ dataset of its kind within the scientific community related to COVID research, and that data imported into COVIDx from the constituent external datasets does not adequately conform to the tripartite schema of the COVIDx dataset (i.e., ‘normal’, ‘pneumonia’, and ‘COVID-19’).

Near Enough..?

In examining the provenance and suitability of the contributing datasets for COVIDx at the time of the study, the researchers found ‘misuse’ of the RSNA data, where data of one type has, the researchers claim, been herded into a different category:

‘The RSNA repository, which uses publicly available chest X-ray data from NIH Chestx-ray8 [**], was designed for a segmentation task and as such contains three classes of images, ‘Lung Opacity’, ‘No Lung Opacity/Not Normal’, and ‘Normal’, with bounding boxes available for ‘Lung Opacity’ cases.

‘In its compilation into COVIDx all chest X-rays from the ‘Lung Opacity’ class are included in the pneumonia class.’

Effectively, the paper claims, the COVIDx methodology expands the definition of ‘pneumonia’ to include ‘all pneumonia-like lung opacities’. Consequently, the like-for-like value of comparative data types is (presumably) threatened. The researchers state:

‘ […] the pneumonia class within the COVIDx dataset contains chest X-rays with an assortment of many other pathologies, including, pleural effusion, infiltration, consolidation, emphysema and masses. Consolidation is a radiological feature of possible pneumonia, not a clinical diagnosis. To use consolidation as a substitute for pneumonia without documenting this is potentially misleading.’

Alternative pathologies (besides COVID-19) associated with COVIDx. Source: https://arxiv.org/ftp/arxiv/papers/2109/2109.08020.pdf

The report finds that only 6.13% of the 4,305 pneumonia cases sourced from RSNA were accurately labeled, representing a mere 265 genuine pneumonia cases.

Further, many of the non-pneumonia cases included in COVIDx represented co-morbidities – complications of other diseases, or otherwise secondary medical issues in conditions that are not necessarily related to pneumonia.

Not ‘Normal’

The report further suggests that the influence of the RSNA challenge dataset in COVIDx has skewed the empirical stability of the data. The researchers observe that COVIDx draws only on the ‘normal’ class of the RSNA data for its own ‘normal’ label, effectively excluding every ‘no lung opacity/not normal’ image in the wider dataset. The paper says:

‘While this is in keeping with what is expected within the ‘normal’ label, expanding the pneumonia class and using only ‘normal’ chest X-rays, rather than pneumonia-negative cases greatly simplifies the classification task.

‘The end result of this is dataset that reflects a task that is removed from the true clinical problem.’
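The relabeling described in this section and the previous one can be sketched as a simple lookup. This is a minimal illustration based only on the paper's description as reported here, not the actual COVIDx build script. The names `RSNA_TO_COVIDX` and `relabel` are hypothetical, as are the toy input labels.

```python
from collections import Counter
from typing import Iterable, Optional

# RSNA class -> COVIDx class, as the paper describes the compilation.
# None marks a class that does not make it into COVIDx at all.
# (Hypothetical names; not taken from the COVIDx code base.)
RSNA_TO_COVIDX = {
    "Lung Opacity": "pneumonia",          # every opacity is treated as pneumonia
    "Normal": "normal",
    "No Lung Opacity/Not Normal": None,   # abnormal but pneumonia-negative: dropped
}


def relabel(rsna_labels: Iterable[str]) -> Counter:
    """Count the COVIDx labels produced from a sequence of RSNA labels."""
    mapped: list[Optional[str]] = [RSNA_TO_COVIDX[label] for label in rsna_labels]
    return Counter(label for label in mapped if label is not None)


# Toy input, invented purely for illustration.
toy = ["Lung Opacity", "Normal", "No Lung Opacity/Not Normal", "Lung Opacity"]
print(relabel(toy))
```

The sketch makes the researchers' objection concrete: the harder ‘abnormal but not pneumonia’ cases never reach the classifier, while anything with an opacity is counted as pneumonia.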

Potential Biases From Incompatible Data Standards

The paper discerns a number of other types of bias in COVIDx, noting that some of the contributing data mixes pediatric chest X-ray images with the X-rays of adult patients, and further observes that this data is the only ‘significant’ source of pediatric images in COVIDx.

Also, images from the RSNA dataset have a 1024×1024 resolution, while another contributing dataset provides images only at 299×299 resolution. Since machine learning models typically resize images to a single fixed input size, the 299×299 images will be upscaled in a training workflow (potentially leading to artifacts related to the scaling algorithm rather than to pathology), and the larger images downscaled. Again, this militates against the homogeneous data standards necessary for AI-based computer vision analysis.
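To illustrate why mixed source resolutions matter, the sketch below counts what happens along one image axis when both source sizes are resized to a common target. Two things here are assumptions rather than details from the paper: the target size of 480 pixels is hypothetical, and nearest-neighbour sampling is used only because it is easy to reason about (interpolating resizers smooth rather than duplicate, but the asymmetry between upscaled and downscaled sources remains).

```python
from typing import Dict, List


def nearest_indices(src_size: int, dst_size: int) -> List[int]:
    """Source pixel index sampled for each destination pixel (nearest-neighbour)."""
    return [min(src_size - 1, (i * src_size) // dst_size) for i in range(dst_size)]


def resize_summary(src_size: int, dst_size: int) -> Dict[str, float]:
    """Summarise how one axis of an image is altered by resizing."""
    used = len(set(nearest_indices(src_size, dst_size)))
    return {
        "scale": dst_size / src_size,
        "source_pixels_dropped": src_size - used,   # detail lost when downscaling
        "duplicated_outputs": dst_size - used,      # pixels invented when upscaling
    }


TARGET = 480  # hypothetical model input size, chosen for illustration only

for src in (299, 1024):
    print(src, resize_summary(src, TARGET))
```

Under these assumptions the 299-pixel axis gains repeated pixels while the 1024-pixel axis loses more than half of its samples, so images from the two sources carry different resampling signatures that a model could learn instead of pathology.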

Further, the Actualmed data ingested into COVIDx contains ‘disk-shaped markers’ in COVID-19 chest X-rays, a recurrent feature that’s inconsistent with the wider dataset, and which would need to be handled as a ‘repetitive outlier’.

This is the kind of issue that is usually addressed by either cleaning or omitting the data, since the markers recur often enough to register as a ‘feature’ in training, but not often enough to generalize usefully across the wider dataset. Without a mechanism for discounting the influence of the artificial markers, a machine learning system could potentially treat them as pathological phenomena.

Training and Testing

The researchers tested COVIDx against two comparative datasets across three models. The two additional datasets were RICORD, which contains 1,096 COVID-19 chest X-rays across 361 patients, sourced from four countries; and CheXpert, a public dataset.

The three models used were COVID-Net, CoroNet and DarkCovidNet. All three models employ Convolutional Neural Networks (CNNs), though CoroNet consists of a two-stage image classification process, with autoencoders passing output to a CNN classifier.

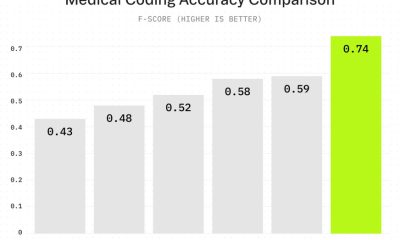

Testing showed a ‘steep drop’ in the performance of all models on non-COVIDx datasets, compared with the 86% accuracy obtained when using COVIDx data. However, if the COVIDx data is mislabeled or misgrouped, that headline figure is effectively a false result. The researchers noted greatly decreased accuracy on the comparable external datasets, which the paper proposes as more realistic and correctly classified data.
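The kind of comparison described above, scoring one trained model separately on an internal test split and on an external dataset, can be sketched as follows. This is a minimal illustration, not the paper's evaluation code: the function names are hypothetical, and the labels and predictions are made-up placeholders rather than results from the study.

```python
from typing import Dict, List, Sequence, Tuple


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Fraction of predictions that match the reference labels."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and equally long")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def evaluate(splits: Dict[str, Tuple[List[str], List[str]]]) -> Dict[str, float]:
    """Accuracy per named test set, so internal and external scores sit side by side."""
    return {name: accuracy(y_true, y_pred) for name, (y_true, y_pred) in splits.items()}


# Placeholder data for illustration only; NOT figures from the paper.
splits = {
    "internal": (["covid", "normal", "pneumonia", "covid"],
                 ["covid", "normal", "pneumonia", "normal"]),
    "external": (["covid", "covid", "normal", "pneumonia"],
                 ["normal", "covid", "normal", "covid"]),
}
scores = evaluate(splits)
print(scores)
```

Reporting the two figures together, rather than the internal score alone, is what exposes the generalization gap the researchers describe.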

Further, the paper observes:

‘A clinical review of 500 grad-CAM saliency maps generated by prediction on COVIDx test data showed a trend of significance in clinically irrelevant features. This commonly included a focus on bony-structures and soft tissues instead of diffuse bilateral opacification of the lung fields that are typical of COVID-19 infection.’

This is an X-ray of a confirmed COVID-19 case, assigned a prediction probability of 0.938 by DarkCovidNet trained on COVIDx.

Conclusions

The researchers criticize the lack of demographic or clinical data related to the X-ray images in COVIDx, arguing that without these, it is impossible to account for ‘confounding factors’ such as age.

They also observe that the problems found in the COVIDx dataset may be applicable to other datasets that were similarly sourced (i.e. by mixing pre-COVID radiological image databases with recent COVID X-ray image data without adequate data architecture, variance compensation, and clear scope of the limitations of this approach).

In summarizing the shortfalls of COVIDx, the researchers emphasize the lopsided inclusion of ‘clear’ pediatric X-rays, as well as their perception of the misuse of labels and high risk of bias and confounding in COVIDx, contending that ‘the exceptional performance [of models trained on COVIDx] reported widely across the problem domain is inflated, that model performance results are misrepresented, and that models do not generalise well to clinically-realistic data.’

The report concludes:

‘A lack of available hospital data combined with inadequate model evaluation across the problem domain has allowed the use of open-source data to mislead the research community. Continued publication of inflated model performance metrics risks damaging the trustworthiness of AI research in medical diagnostics, particularly where the disease is of great public interest. The quality of research in this domain must improve to prevent this from happening, this must start with the data.’

*Though the researchers of the study claim to have made the data, files and code for the new paper available online, access requires login, and, at the time of writing, no general public access to the files is available.

** ChestX-ray8: Hospital-scale Chest X-ray Database and Benchmarks on Weakly-Supervised Classification and Localization of Common Thorax Diseases – https://arxiv.org/pdf/1705.02315.pdf