Researchers Develop New Techniques for Improving Degraded Images

A team of researchers at Yale-NUS College has developed new computer vision and deep learning approaches to low-level vision that extract more accurate data from videos degraded by environmental factors such as rain and night-time conditions. The team also improved the accuracy of 3D human pose estimation in videos.

Computer vision technology, which is used in applications such as automatic surveillance systems, autonomous vehicles, and healthcare and social distancing tools, is often affected by environmental factors that degrade the quality of the extracted data.

The new research was presented at the 2021 Conference on Computer Vision and Pattern Recognition (CVPR).

Environmental Impact on Images

Night-time images are affected by low light and by human-made light effects such as glare, glow, and floodlights. Images captured in rain are degraded by rain streaks and rain accumulation.

Yale-NUS College Associate Professor of Science Robby Tan led the research team.

“Many computer vision systems, like automatic surveillance and self-driving cars, rely on clear visibility of the input videos to work well. For instance, self-driving cars cannot work robustly in heavy rain and CCTV automatic surveillance systems often fail at night, particularly if the scenes are dark or there is significant glare or floodlights,” said Assoc. Prof Tan.

The team presented two separate studies that introduced deep learning algorithms to enhance the quality of night-time videos and rain videos.

The first study focused on boosting brightness while simultaneously suppressing noise and light effects, such as glare, glow, and floodlights, to produce clear night-time images. The technique aims to improve the clarity of night-time images and videos in the presence of unavoidable glare, something existing methods have yet to achieve.

In countries where heavy rain is common, rain accumulation reduces visibility in videos. The second study addressed this problem with a method based on frame alignment, which lets the system gather better visual information across frames without being affected by rain streaks, since the streaks often appear at random positions in different frames. The team also used a moving camera to perform depth estimation, which helped remove the rain veiling effect. While existing methods focus on removing rain streaks, the new method can remove both rain streaks and the rain veiling effect simultaneously.
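The principle that randomly placed streaks can be filtered out once frames are aligned can be illustrated with a much simpler classical technique: a per-pixel temporal median. This is a minimal sketch, not the team's deep learning method. It assumes the frames have already been aligned to a common view (the hard part the study addresses), and it represents each frame as a hypothetical grayscale grid of floats. Because a streak covers any given pixel in only a minority of frames, the median at that pixel tends to recover the background value.

```python
from statistics import median

# Hypothetical frame representation: rows x cols grid of grayscale intensities.
Frame = list[list[float]]


def temporal_median(aligned_frames: list[Frame]) -> Frame:
    """Return the per-pixel median over frames assumed to be already aligned.

    A transient streak that brightens a pixel in fewer than half of the
    frames is outvoted by the background values at that pixel.
    """
    if not aligned_frames:
        raise ValueError("need at least one frame")
    rows = len(aligned_frames[0])
    cols = len(aligned_frames[0][0])
    return [
        [median(frame[r][c] for frame in aligned_frames) for c in range(cols)]
        for r in range(rows)
    ]


# Three aligned 2x2 frames; a bright "streak" lands on a different pixel each time.
frames = [
    [[0.2, 0.2], [0.2, 0.2]],
    [[0.9, 0.2], [0.2, 0.2]],  # streak at (0, 0)
    [[0.2, 0.2], [0.2, 0.9]],  # streak at (1, 1)
]
print(temporal_median(frames))  # [[0.2, 0.2], [0.2, 0.2]]
```

A temporal median only helps with effects that change from frame to frame. The rain veiling effect degrades every frame in a similar way, so it survives this kind of filtering, which is consistent with the study handling it separately through depth estimation.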

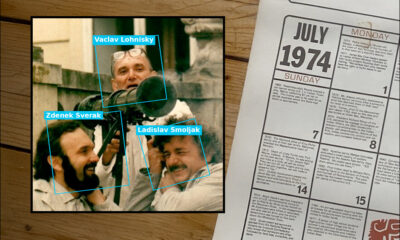

Image: Yale-NUS College

3D Human Pose Estimation

Alongside the visibility enhancement techniques, the team presented its research on 3D human pose estimation, which can be used in video surveillance, video gaming, and sports broadcasting.

3D multi-person pose estimation from monocular video, meaning video captured by a single camera, has attracted growing research interest over the last few years. Compared with multi-camera setups, monocular video is more flexible, since it can be recorded with a single device such as a mobile phone.

However, scenes containing multiple people reduce the accuracy of human detection. This is especially true when individuals interact closely or overlap with each other in the monocular video.

The team’s third study estimated 3D human poses from video by combining two existing approaches: top-down and bottom-up. Compared with either approach alone, the combined method produces more reliable pose estimates in multi-person settings and better handles the distance between individuals.

“As a next step in our 3D human pose estimation research, which is supported by the National Research Foundation, we will be looking at how to protect the privacy information of the videos. For the visibility enhancement methods, we strive to contribute to advancements in the field of computer vision, as they are critical to many applications that can affect our daily lives, such as enabling self-driving cars to work better in adverse weather conditions,” said Assoc. Prof Tan.