Navrina Singh, CEO and Founder of Credo AI – Interview Series

Navrina Singh is CEO and Founder of Credo AI, a company designing the first comprehensive AI governance SaaS platform that enables companies and individuals to better understand and deliver trustworthy and ethical AI, stay ahead of new regulations and policies and create an AI record that is auditable and transparent throughout the organization. The company has been running in stealth and quietly building an impressive customer portfolio that includes one of the largest government contractors and financial services companies in the world, among other Fortune 100 brands.

You grew up in a small town in India with humble beginnings. How did you find yourself attracted to engineering and the field of AI?

I come from a middle-class family; my dad was in the military for four decades and my mom was a teacher turned fashion designer. From an early age, they encouraged my sense of curiosity and my love for scientific discoveries, and provided me a safe place to explore the “what ifs.”

When I was growing up in India, women weren’t afforded as many opportunities as men. Lots of decisions were already made for a girl, based on what was acceptable to society. The only way to break away and afford a good life for your family was through education.

After graduating high school, I owned my first computer, which was my window into the world. I was really into hardware, and spent hours online learning how to disassemble motherboards, build robotics applications, write code, redo the electrical wiring in my house (yikes!) and much more. Engineering became my safe space, where I could experiment, explore, build, and experience a world that I wanted to be a part of through my inventions. I was inspired and excited about how technology could be a powerful tool to effect change, especially for women.

Growing up, my means were limited, but the possibilities were not. I always dreamed of coming to the United States to build a stronger foundation in technology to solve the biggest challenges facing humanity. I immigrated here in 2001 to continue my engineering journey at the University of Wisconsin–Madison.

Over the course of my nearly two decades in engineering, I had the privilege to work across multiple frontier technologies, from mobile to augmented reality.

In 2011, I started exploring Artificial Intelligence to build new emerging businesses at Qualcomm. I have also spent time building businesses and applications in AI across speech, vision and NLP at Microsoft and Qualcomm, over the last decade. As I learned more about AI and machine learning, it became evident that this frontier technology was going to massively disrupt our lives beyond what we can imagine.

Building enterprise-scale Artificial Intelligence also made me realize how the technology can do a disservice and harm our people and communities, if left unchecked. We, as leaders, must take extreme responsibility for the technologies we are building. We must be proactive in maximizing its benefits to humanity and minimizing its risk. After all, “What we make, makes us.” So building responsibly is critical, which has inspired the next phase of my career building AI governance.

When did you first begin seriously thinking about AI governance?

While I was the head of innovation at Qualcomm, we were exploring the possibilities that AI could bring to our emerging businesses. Our initial work was focused on collaborative robots that were used in the supply chain. This was my first exposure to the “real” risks of AI, especially with respect to the physical safety of the humans who were operating alongside these robotic systems.

During this time, we spent significant time with startups, such as Soul Machines, learning new use cases and finding ways to partner with them to accelerate our work. Soul Machines had released a new version of their baby avatar, “Baby X”. Watching this neural-net-powered baby, actively learning from the world around her, made me pause and reflect on the unintended consequences of this technology. My daughter Ziya was born around the same time, and we fondly nicknamed her Baby “Z” after Soul Machines’ Baby “X”. I have spoken about this quite a lot, including at the Women In Product Keynote in 2019.

The learning journeys of Baby X and Baby Z were eerily similar! As Ziya was growing up, we were providing her the initial vocabulary and tools to learn. Over time, she started to understand the world and put things together herself. Baby X, the neural net baby, was similarly learning through vision sensors and speech recognition, and quickly building a deep understanding of the human world.

These early AI moments made it clear to me that it was critical to find ways to manage risk and put effective guardrails around this powerful technology to ensure it is deployed for good.

Could you share the genesis story behind Credo AI?

After Qualcomm, I was recruited to lead the commercialization of AI technologies at Microsoft in the business development group and, subsequently, in the product group. During my tenure, I was fortunate to work on a spectrum of AI technologies with applications in use cases from conversational AI to contract intelligence, and from visual inspections to speech-powered product discovery.

However, the seed of AI governance planted at Qualcomm kept growing as I continued witnessing the increasing “oversight deficit” in the development of Artificial Intelligence technologies. I was a product leader working with the data science and engineering team, and we were motivated to build a high-performing AI application powered by machine learning and deploy it quickly in the market.

We viewed compliance and governance as a gate check slowing down the pace of innovation. However, my growing concerns about the governance deficit between the technical professionals and the oversight professionals in risk, compliance, and audit motivated me to continue exploring the challenges in realizing AI governance.

All the unanswered questions about AI governance prompted me to seek answers. So I started laying the foundation for a nonprofit called MERAT (Marketplace for Ethical and Responsible AI Tools) with the goal of creating an ecosystem for startups and Global 2000 companies focused on the responsible development of AI.

Through early experimentation at MERAT, it became clear that there weren’t startups enabling multi-stakeholder tools to power comprehensive governance of AI and ML applications – until Credo AI. This gap was my opportunity to realize the mission of guiding AI intentionally. My hypothesis that the next frontier of the AI revolution will be guided by governance kept getting validated. Enterprises that embrace governance will emerge as the leading trusted brands.

I was approached by the AI Fund, an incubator founded by Andrew Ng, a renowned AI expert. AI Fund had been focused on the same topics of AI auditability and governance that I had been consumed with for the past decade.

Credo AI was incorporated on February 28th, 2020, with funding from the AI Fund.

Credo means “a set of values that guides your actions.” With Credo AI, our vision is to empower enterprises to create AI with the highest ethical standards.

In a previous interview, you discussed how brands in the future will be trusted not “only” based on how they build and deploy AI, but on how they scale AI so that it is in the service of humanity. Could you elaborate on this?

Artificial Intelligence is one of the game-changing technologies of this century and offers transformative opportunities for the economy and society. It’s on track to contribute nearly $16 trillion to the global economy by 2030.

However, the past several years have put a spotlight on major AI threats (and no, it’s not the robot overlords!) already here. For example, judicial algorithms optimizing for minimal recidivism (a relapse into criminal behavior) can introduce bias and deny parole to those who deserve it.

Social algorithms optimizing for engagement can divide a nation.

Unfair algorithms can be the reason you don’t get a job or admission to the university.

The more autonomy we give our tools, the wider the range of unintended consequences. Extraordinary scale generates extraordinary impact, but not always the impact we intend (I write about this in the Credo AI Manifesto).

I predict that enterprises that invest in AI governance to help them build technology responsibly, and that commit to the ethical use of data, will be able to engender trust across stakeholders – customers, consumers, investors, employees, and others.

How does Credo AI help keep companies accountable?

Accountability means an obligation to accept responsibility for one’s actions. In the context of AI development and deployment, this means that enterprises need to not only align on what “good” looks like to them, but also take intentional steps to demonstrate action on those aligned goals and assume responsibility for the outcomes.

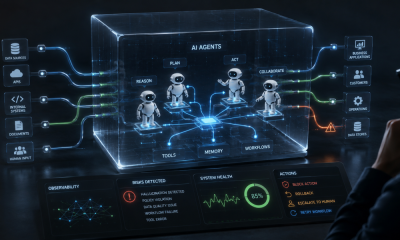

Credo AI aims to be a sherpa for the enterprises in their ethical and responsible AI initiatives to hold them accountable to the diverse stakeholders: users, employees, regulators, auditors, investors, executives and others. Hence Credo AI has pioneered a real-time, context-driven, comprehensive, and continuous solution to deliver ethical and responsible AI at scale.

Building accountability and trust in AI is a dynamic process. Organizations must align on enterprise values, codify those values, build them into their organizational infrastructure, observe the impact, and repeat the process with diverse voices providing input at every stage. Credo AI enables this accountability via a comprehensive, real-time solution for managing compliance and measuring risk for AI deployments at scale.

Using the Credo AI governance solution, enterprises are able to:

- Align on governance requirements for their AI use cases and models, with customizable policy packs and decision support tools for choosing model evaluation metrics.

- Assess and interrogate the data and ML models against those aligned goals.

- Analyze technical specs into actionable risk impact for the business at every stage of model development and deployment, so you can catch and fix governance issues before they become massive problems.

By recording the decisions and actions at every point, Credo AI captures the origin of decisions that went into the creation of the models as evidence. This evidence will be a record shared internally with enterprise stakeholders and externally with auditors that builds trust in the intentions of AI.

This evidence will help development teams predict and prevent unintended consequences and will standardize the way we assess the efficacy and trustworthiness of AI models.

We are in the very early innings of enabling AI governance through comprehensive assessments and assurance and are excited to work with more enterprises to promote accountability.

How does Credo AI help companies to shift away from AI governance being an afterthought?

In today’s world, there is a lack of clarity around what “responsible,” “ethical,” and “trustworthy” AI really is, and how enterprises can deliver on it. We help reframe this key question for enterprises by using the Credo AI SaaS platform to demonstrate the “economics of ethics.”

With Credo AI, enterprises are able not only to deliver fair, compliant and auditable AI, but also to build customer trust.

Building consumer trust can widen a company’s customer base and unlock more sales. For example, companies can provide the best possible customer experience by using ethical AI in their customer service chatbots, or responsibly use AI to understand user activity and suggest additional content or products for them. Extremely satisfied customers then offer the best advertising by sharing their experience with friends and family.

In addition, enterprises can see how collaboration across technical and oversight functions can help them save costs through faster time to compliance, informed risk management, and lowered brand and regulatory risks. We help organizations deploy ethical AI by building a bridge between technical metrics and tangible business outcomes. As more and more people are realizing, championing ethical AI involves much more than checking a “regulatory compliance” box. It also has the power to deliver even more than a contribution to a more equitable world, which would be a worthwhile result by itself.

When a company upholds high ethical standards, it can expect real-life business outcomes, such as increased revenue. Shifting the perspective from an abstract “soft” concept to an objective business decision has been a key way for us to help position AI governance as a critical part of the economic and social engine for the company.

Recently, the Biden Administration created the National Artificial Intelligence Advisory Committee. In your view, what are the implications of this for AI businesses?

Governments globally are going full force on regulating AI, and enterprises need to get ready. The Biden administration’s thoughtful creation of the National AI Advisory Committee and its investments in the White House OSTP are a good first step to bring action to the discussions around the bill of rights to govern AI systems. However, much work needs to happen to realize what Responsible AI would mean for our country and economy.

I believe that 2022 is positioned to be the year of action for responsible AI. It’s a wake-up call for enterprises looking to implement or strengthen their AI ethical framework. Now is the time for companies to assess their existing framework for gaps, or, for companies lacking an approach, to implement a solid framework for trustworthy AI to set them up for success to lead in the age of AI.

We should expect more hard and soft regulations to emerge. In addition to the White House actions, there’s a lot of momentum coming from other policymakers and agencies, such as the Senate’s draft Algorithmic Accountability Act, the Federal Trade Commission (FTC) guidance around AI bias, and more.

If we fast forward to the future, how important will AI ethics and AI governance be in five years?

We are in the early innings of AI governance. To underscore how critical AI governance is, here are some of my predictions for the next few years:

- Ethical AI governance will become a top organization priority

Enterprises continue to invest in AI and reap the benefits of using it in their businesses. IDC has projected a $340B spend on AI in 2022. Without governance, enterprises will be reluctant to invest in AI because of concerns over the bias, security, accuracy, and compliance of these systems.

Without trust, consumers will also be reluctant to accept AI-based products or to share data to build these systems. Over the next five years, ethical AI governance will become a boardroom priority and an important metric that companies will have to disclose and report on to build trust across their stakeholders.

- New sociotechnical job categories will emerge within an enterprise

We are already seeing ethics-forward organizations investing significantly in future-proofing their development to ensure fair, compliant, and auditable AI applications.

To lead in AI, enterprises will need to further expedite learning about the AI regulatory landscape and the implementation of accountability structures at scale. As the emerging field of ethical AI implementation evolves, a new sociotechnical job category could arise to facilitate these conversations and take charge in this area. This new component of the workforce could function as an intermediary between the technical and oversight functions, helping develop common standards and goals.

- New AI-powered experiences will demand stricter governance

Managing the intended and unintended consequences of artificial intelligence is critical, especially as we enter the Metaverse and web3 world. The risks of AI exist in the real world and the virtual world, but are further amplified in the latter.

By diving into the Metaverse head first with a lack of AI oversight, enterprises put their customers at risk for challenges like identity theft and fraud. It is paramount that AI and governance issues are solved in the real world now in order to keep consumers safe in the Metaverse.

- New ecosystems in ethical AI governance will emerge

The world has awakened to the idea that AI governance represents the basic building block to operationalizing responsible AI. Over the next several years, we will see a competitive and dynamic market of service providers emerge to provide tools, services, education and other capabilities to support this massive need.

In addition, we will see consolidation and augmentation of existing MLOps and GRC platforms to bring governance and assessment capabilities to AI.

Is there anything else that you would like to share about Credo AI?

Ethical AI governance is a future-proofed investment that you can’t afford not to make, and today is the best day to begin.

Thank you for the detailed interview and for sharing all of your insights regarding AI governance and ethics. Readers who wish to learn more should visit Credo AI.