Anderson's Angle

Jigsaw Puzzles Boost AI Visual Reasoning

New research indicates that AI models can get smarter at seeing by solving jigsaw puzzles. Rearranging scrambled images, videos, and 3D scenes helps them sharpen their visual skills without the need for extra data, labels, or tools.

In the current scramble to push Multimodal Large Language Models (MLLMs*) ahead of the pack (or at least stay three releases in front of the nearest rival), there are few easy wins and no free lunches.

Though many of 2025’s slew of impressive Chinese FOSS releases are reported to have lower development and running costs, occidental releases tend to throw more at the problem: more data volume, more inference grunt, more electricity (though not, as we recently noted, more actual human annotators, since that’s too expensive even for the $trillion+ scale gen-AI revolution).

In the research literature, most supposedly ‘free’ approaches to the evolution of AI architectures tend to offer only minor incremental improvements; or else improvements in areas that are not the most critically pursued. Nonetheless, the search for hitherto undiscovered ‘fundamental principles’ that could accelerate the pace of development is too tempting to abandon.

Picking up the Pieces

While not quite in that category, a new academic collaboration between Chinese institutions claims to have determined that making MLLMs solve jigsaw puzzles improves their performance notably, even though this reinforcement learning approach has previously performed poorly in this area, and even though it requires no extra systems, ancillary models or other ‘bolt-on’ processes:

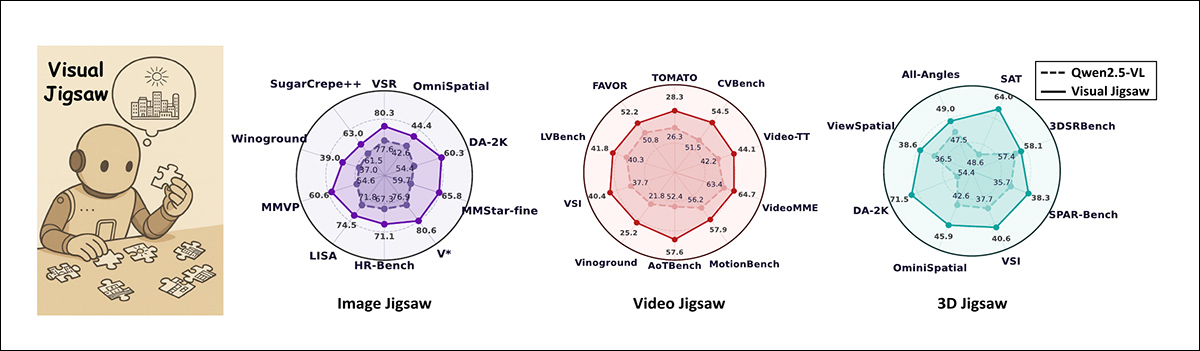

Visual Jigsaw is a self-supervised post-training framework that improves vision-centric skills in multimodal large language models. By training on jigsaw tasks across images, videos, and 3D data, the models gain sharper fine-grained, spatial, and compositional perception in images, stronger temporal reasoning in videos, and enhanced geometry-aware understanding in 3D scenes. The radar charts in the image above show consistent gains over base Qwen2.5-VL, with value scales adjusted for each benchmark for clarity. Source: https://arxiv.org/pdf/2509.25190

The system devised by the researchers is titled Visual Jigsaw, and involves training existing MLLMs on material that has been fragmented and randomly dispersed, like a jigsaw. The authors developed three modalities for this approach: image, video, and 3D (i.e., CGI-style meshes), and found that a moderate adaptation of the same process benefited all three domains:

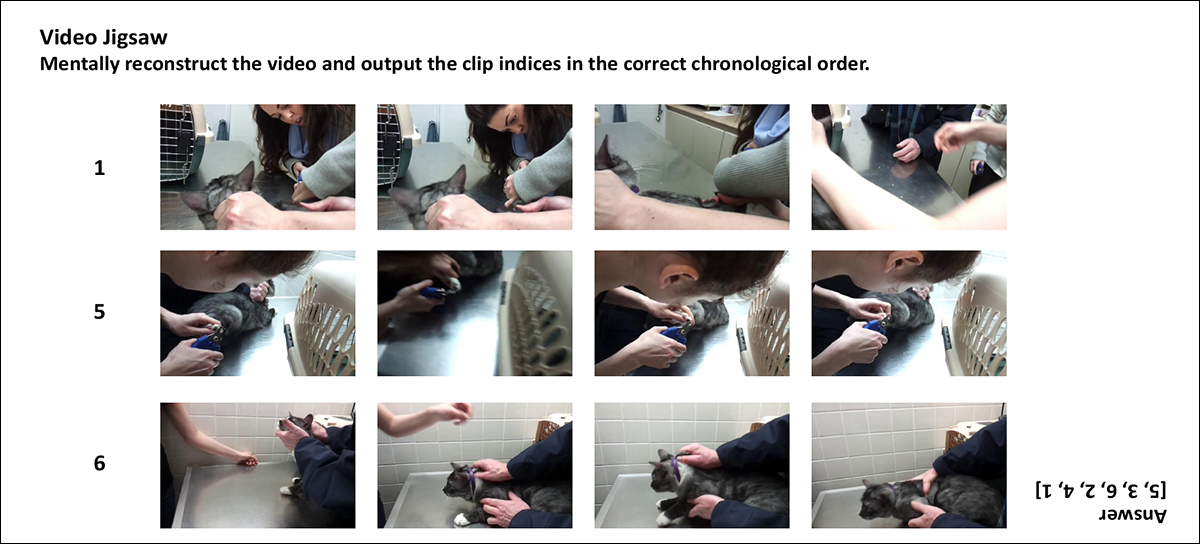

A representation of the three Visual Jigsaw tasks. In the Image Jigsaw, an image is split into patches, shuffled, and the model predicts the correct layout; in the Video Jigsaw, clips are shuffled and the model restores their order in time; in the 3D Jigsaw, points with different depths are shuffled and the model ranks them from nearest to farthest. Model outputs are scored against ground truth, with partial credit given for partly correct solutions.

Visual Jigsaw’s training method helps AI models improve at understanding visual information by making them reassemble these jumbled-up images, video clips, or 3D data points.

The process is based on words rather than images, and therefore there’s no need for the model to generate images or use any extra visual components. This method fits into a system called Reinforcement Learning from Verifiable Rewards (RLVR), where the model gets rewarded for correct answers based on clear, automatic rules; and where, therefore, no human labeling is required.

This crucial fact is actually hard to glean from the new paper: that the system is assembling the puzzle semantically, through descriptions, and not in the shape-representative way that humans learn to solve such puzzles:

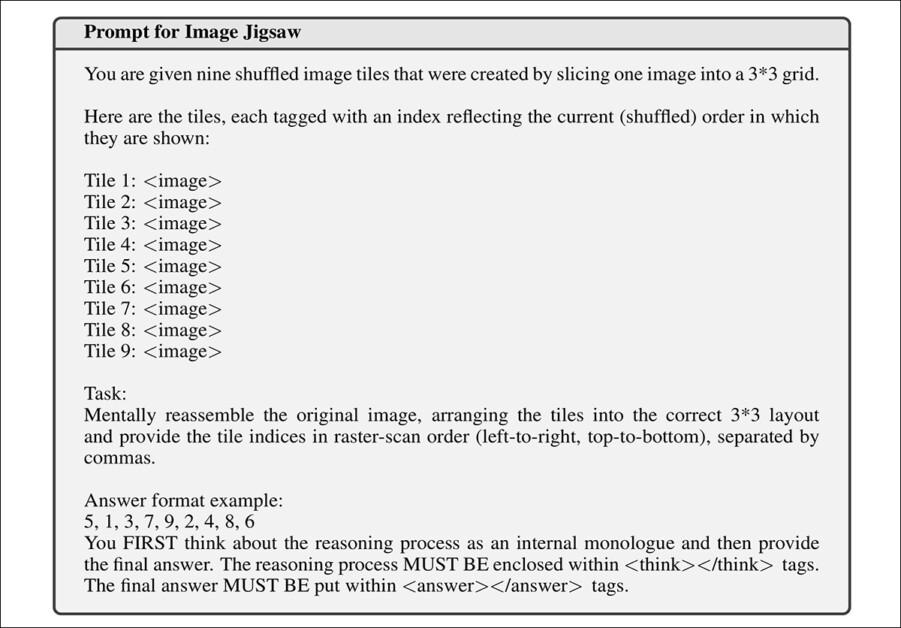

From the new paper’s supplementary material, an example RL task illustrating the text-based nature of this adjunct learning process. The images that would have been shown to the MLLM are not depicted here.

Though MLLMs deal extensively with vision-centric tasks, they are language-based architectures, not designed to generate images, videos or shape representations such as 3D meshes.

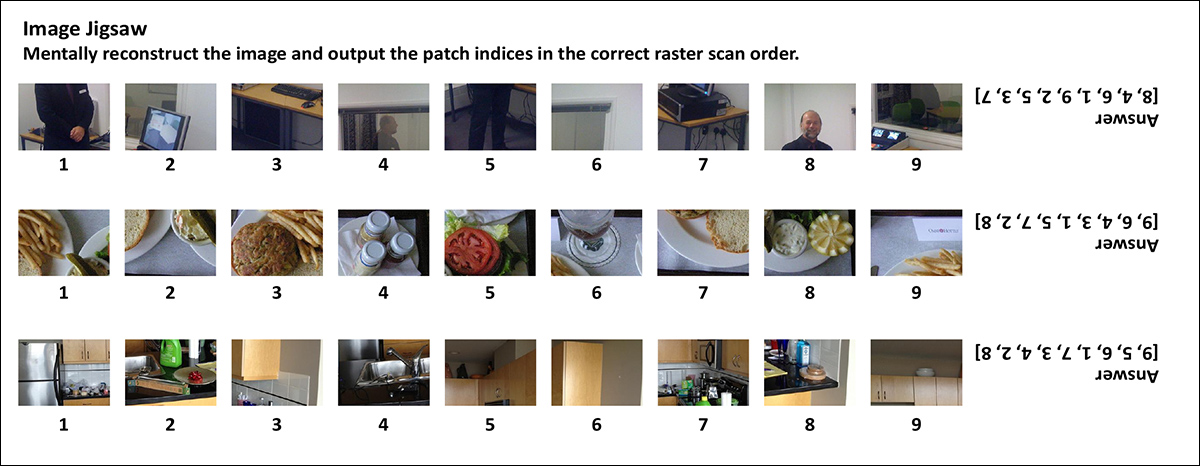

Examples from the image jigsaw task. Each row shows shuffled patches that the model must reorder into their original layout, with the correct arrangement shown on the right.

In any case, this type of training is done after the main learning phase, when the model already has some ability to understand images.

Prior approaches such as the 2017 Swiss paper Unsupervised Learning of Visual Representations by Solving Jigsaw Puzzles used this kind of reinforcement approach with rather less success, on convolutional neural networks (CNNs), a notably different kind of architecture in comparison to a modern MLLM.

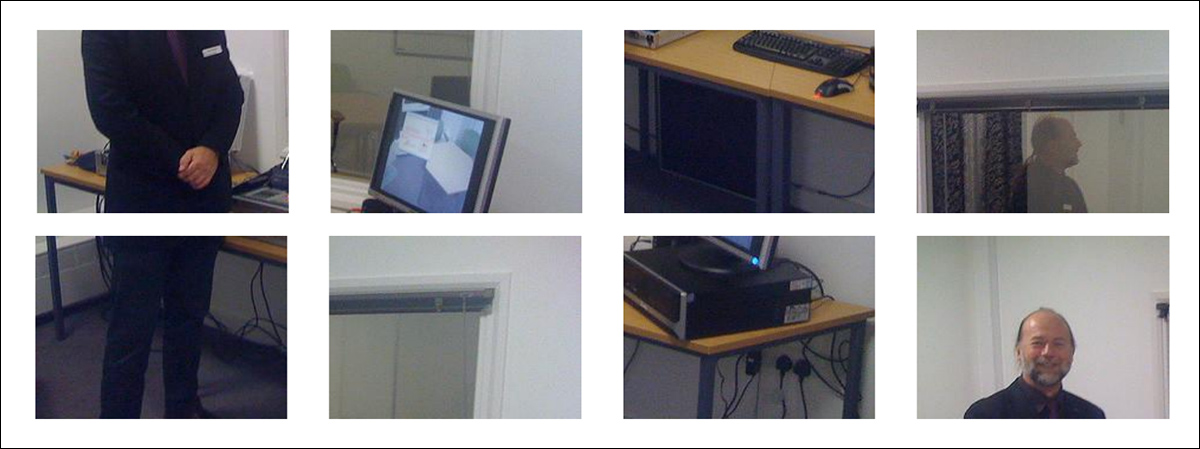

From the 2017 release ‘Unsupervised Learning of Visual Representations by Solving Jigsaw Puzzles’, an early example of the use of fragmentation as a reward-based challenge for a neural system. Source: https://arxiv.org/pdf/1603.09246

In tests, Visual Jigsaw led to what the authors claim to be consistent and measurable improvements across a wide range of benchmarks: the image jigsaw task improved fine-grained perception, spatial layout understanding, and compositional reasoning; the video jigsaw task enhanced the model’s ability to track temporal sequences and reason about event order; and the 3D jigsaw task strengthened depth-based understanding and spatial reasoning using only RGB-D inputs.

Across all three modalities, the paper reiterates, the new method outperformed several competitive baselines, despite requiring no architectural changes, extra visual modules, or additional supervised data:

‘Extensive experiments demonstrate substantial improvements in fine-grained perception, temporal reasoning, and 3D spatial understanding. Our findings highlight the potential of self-supervised vision-centric tasks in post-training MLLMs and aim to inspire further research on vision-centric pretext designs.’

The new paper is titled Visual Jigsaw Post-Training Improves MLLMs, and comes from six researchers across Nanyang Technological University, Linköping University, and SenseTime Research. The paper is accompanied by a project site with live demos (and you can even load your own image into the image-based jigsaw demo). The code and weights for the project have been made generally available.

Method

Though we’ll take a look at the way information is split up to accommodate the three tested modalities, we should first consider the reward design for the new system.

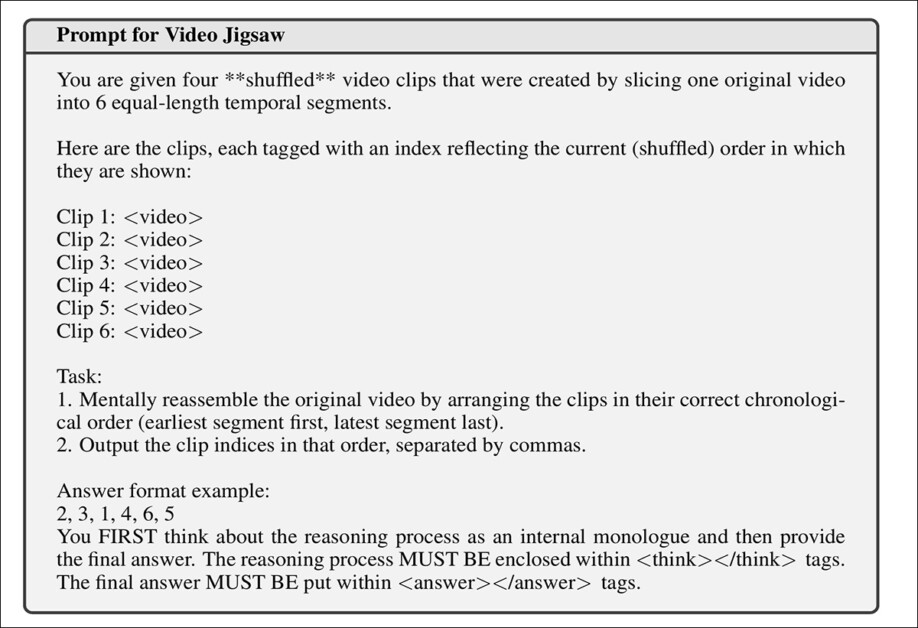

The Visual Jigsaw approach scores model responses using a graded reward, not just a simple pass or fail. If the model predicts the exact correct order of the jigsaw pieces, it receives a full reward; if the answer is mostly right, but not perfect, the model gets partial credit, scaled by a discount factor to avoid overvaluing near-misses (this prevents the model from gaming the system by repeating guesses that are only partially correct).

Invalid answers, such as the ‘cheat’ of using the same number repeatedly, receive a score of zero. To encourage consistent formatting, the model must place its reasoning inside <think> tags and its final answer inside <answer> tags. It receives a small bonus if this format is used correctly.
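The graded reward described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' code: the function names are hypothetical, the partial credit is assumed to be the fraction of pieces placed in the correct slot, the `format_bonus` value of 0.1 is a placeholder, and the sketch assumes that a malformed response earns nothing at all. Only the discount factor of 0.2 comes from the paper's reported settings.

```python
import re

# Reasoning must sit inside <think> tags and the final answer inside <answer> tags.
ANSWER_PATTERN = re.compile(
    r"\s*<think>.*?</think>\s*<answer>(.*?)</answer>\s*", re.DOTALL
)

def parse_answer(response):
    """Return the predicted ordering as a list of ints, or None if malformed."""
    match = ANSWER_PATTERN.fullmatch(response)
    if match is None:
        return None
    tokens = match.group(1).replace(",", " ").split()
    if not tokens or not all(tok.isdecimal() for tok in tokens):
        return None
    return [int(tok) for tok in tokens]

def accuracy_reward(predicted, truth, discount=0.2):
    """Full credit for an exact match, discounted partial credit otherwise."""
    # Invalid answers (wrong length, repeated or missing indices) score zero.
    if predicted is None or sorted(predicted) != sorted(truth):
        return 0.0
    if predicted == truth:
        return 1.0
    # Assumption: partial credit is the fraction of pieces in the right slot.
    correct = sum(p == t for p, t in zip(predicted, truth))
    return discount * correct / len(truth)

def total_reward(response, truth, discount=0.2, format_bonus=0.1):
    """Accuracy reward plus a small (hypothetical) bonus for well-formed output."""
    predicted = parse_answer(response)
    bonus = format_bonus if predicted is not None else 0.0
    return accuracy_reward(predicted, truth, discount) + bonus
```

Under these assumptions, a half-correct ordering of four pieces earns an accuracy reward of 0.2 × 2/4 = 0.1, compared with 1.0 for an exact match, which is the gap that discourages the model from settling for near-misses.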

Images

To create a puzzle for the images modality, a picture is first divided into a grid of patches by slicing it into equal-sized blocks:

Examples of image-based patches for the system to solve.

The patches are laid out in a fixed order from top-left to bottom-right, as with the reading order on a page, before being scrambled with a random shuffle. The model is then exposed to this shuffled set of patches, and must figure out the original order by predicting the correct permutation that restores the original layout.

In training, 118,000 images from the COCO dataset were used, with each image yielding nine patches (i.e., ‘puzzle pieces’). The prompt given to the system is shown earlier in this article (the image above with the caption beginning ‘From the new paper’s supplementary material’).
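As a rough sketch of how such a puzzle might be constructed, the Python below slices an image into a 3×3 grid in reading order, shuffles the patches, and records the ground-truth answer. The function names and the answer encoding (for each original slot, the index of the shuffled patch that belongs there) are assumptions made for illustration; the paper's exact answer format may differ.

```python
import random

def split_into_patches(image, grid=3):
    """Slice a 2D image (a list of pixel rows) into grid*grid patches, in reading order."""
    height, width = len(image), len(image[0])
    ph, pw = height // grid, width // grid  # any remainder pixels are cropped
    patches = []
    for r in range(grid):
        for c in range(grid):
            rows = image[r * ph:(r + 1) * ph]
            patches.append([row[c * pw:(c + 1) * pw] for row in rows])
    return patches

def make_image_jigsaw(image, grid=3, rng=None):
    """Return (shuffled_patches, solution) for one image."""
    if rng is None:
        rng = random.Random()
    patches = split_into_patches(image, grid)
    order = list(range(len(patches)))
    rng.shuffle(order)
    shuffled = [patches[i] for i in order]
    # solution[slot] = index of the shuffled patch that belongs in that original slot
    solution = [order.index(slot) for slot in range(len(patches))]
    return shuffled, solution

# Toy 6x6 "image" whose pixels record their own coordinates.
image = [[(y, x) for x in range(6)] for y in range(6)]
shuffled, solution = make_image_jigsaw(image, grid=3, rng=random.Random(0))
restored = [shuffled[k] for k in solution]  # patches back in reading order
```

Because the answer is just a list of indices, it can be checked automatically against the ground truth, which is what makes the task suitable for a verifiable-reward setup.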

Video

For the video jigsaw task, a video is sliced evenly across time into a sequence of clips, which are then shuffled. The model is shown this scrambled sequence, and must work out the clips' original chronological order.

Truncated examples of the video puzzle challenge, from the paper’s supplementary material.

The training for this modality used 100,000 videos from the LLaVA-Video dataset, with each video split into six clips. To stop the model from exploiting obvious frame-matching cues at clip boundaries, 5% of the frames at the start and end of each clip were trimmed.

Clips contained no more than 12 frames, each at a maximum resolution of 128x28x28 pixels. Videos shorter than 24 seconds were excluded.
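The clip preparation described above could look something like the following Python sketch. It rests on several assumptions not confirmed by the source: that the 5% trim is applied at each end of every clip, that clips longer than 12 frames are reduced by uniform subsampling, and that the answer is encoded as the index of the shuffled clip belonging to each chronological slot. The function name is hypothetical.

```python
import random

def make_video_jigsaw(frames, num_clips=6, trim=0.05, max_frames=12, rng=None):
    """Return (shuffled_clips, solution) for one video given as a list of frames."""
    if rng is None:
        rng = random.Random()
    clip_len = len(frames) // num_clips  # leftover frames at the end are dropped
    clips = []
    for i in range(num_clips):
        clip = frames[i * clip_len:(i + 1) * clip_len]
        # Assumption: trim 5% of frames at EACH end to hide boundary cues.
        cut = int(len(clip) * trim)
        clip = clip[cut:len(clip) - cut]
        # Assumption: uniform subsampling keeps each clip within the frame budget.
        if len(clip) > max_frames:
            step = len(clip) / max_frames
            clip = [clip[int(j * step)] for j in range(max_frames)]
        clips.append(clip)
    order = list(range(num_clips))
    rng.shuffle(order)
    shuffled = [clips[i] for i in order]
    # solution[slot] = index of the shuffled clip that belongs at that point in time
    solution = [order.index(slot) for slot in range(num_clips)]
    return shuffled, solution

# Toy video: 600 frames, each represented by its own frame number.
frames = list(range(600))
shuffled, solution = make_video_jigsaw(frames, rng=random.Random(0))
restored = [shuffled[k] for k in solution]  # clips back in chronological order
```

Trimming the clip edges matters because, without it, the last frame of one clip and the first frame of the next would be near-duplicates, letting the model solve the puzzle by frame matching rather than by reasoning about events.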

The prompt for this task is shown below:

The reinforcement learning prompt presented to the MLLMs for the video task, without the clips that would have been presented to the MLLM.

3D Data

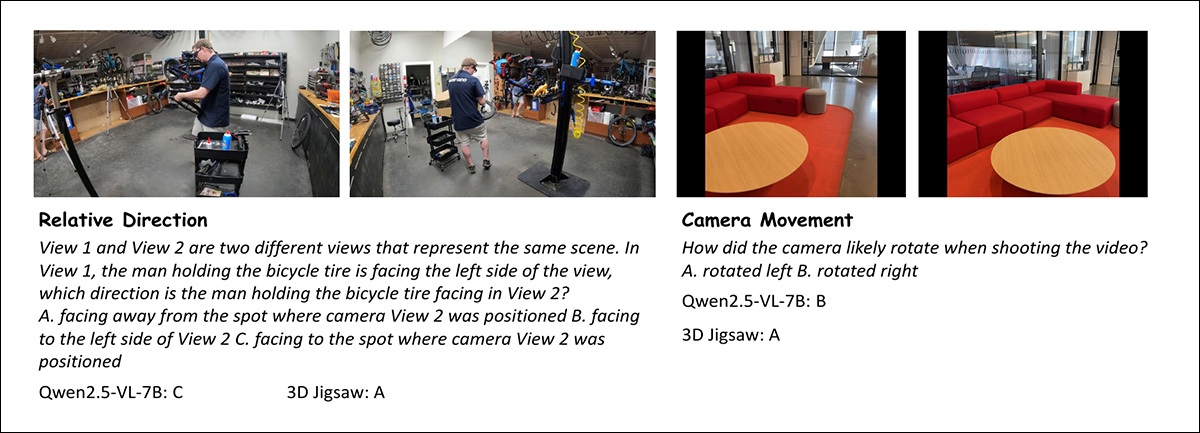

A full 3D jigsaw task would usually involve breaking up a 3D space (such as voxel blocks or point cloud fragments) into smaller chunks and training a model to reconstruct their original spatial layout.

However, the average MLLM is not equipped to handle raw 3D data directly, instead relying on semantically-interpreted image or video inputs. Therefore, to create a task that still taps into 3D reasoning while remaining compatible with current MLLMs, the authors introduced a more tractable variant using the aforementioned RGB-D images (i.e., 2D images that include depth information for each pixel).

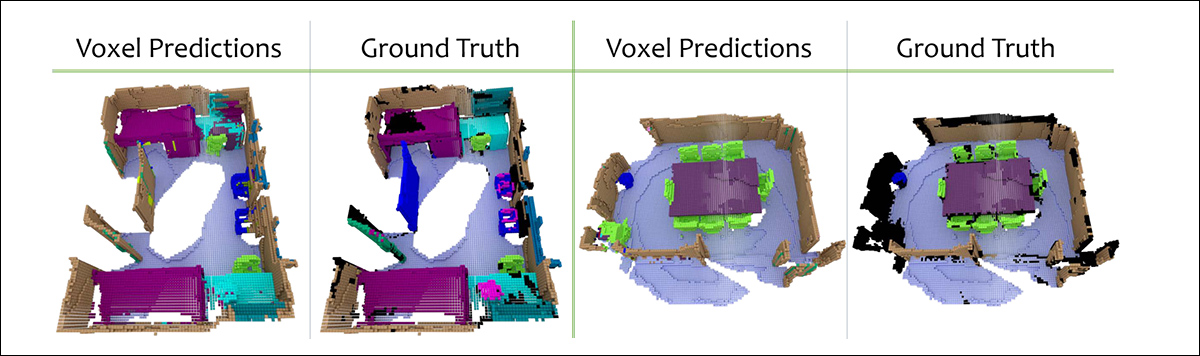

Example questions from the 3D Spatial Understanding Benchmark used to evaluate performance on relative viewpoint and camera motion reasoning. The 3D Jigsaw model correctly infers both the spatial relation between two views of a scene and the likely direction of camera rotation, outperforming the Qwen2.5-VL-7B baseline.

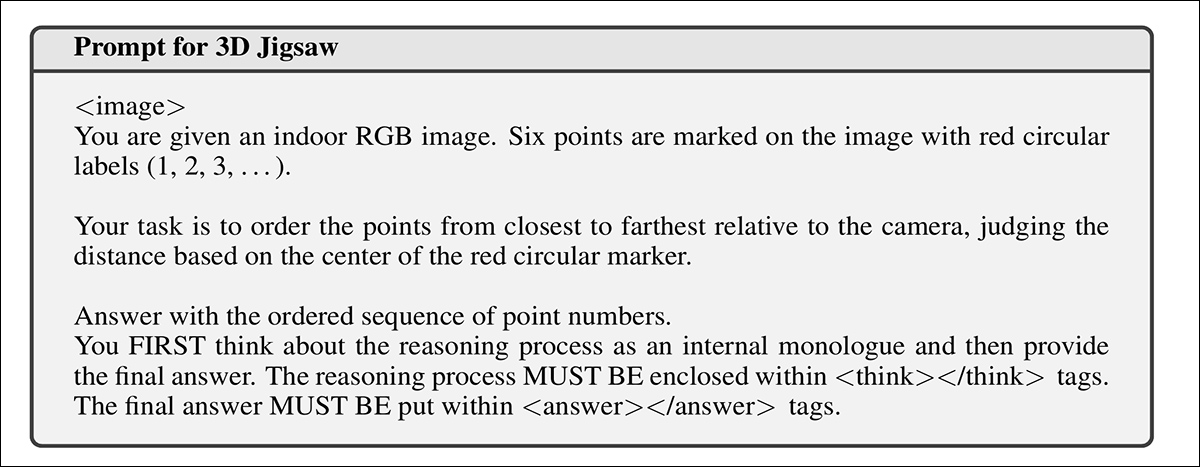

From each RGB-D image, the model is given a shuffled list of points that originally had distinct depths, ranging from near to far, the goal being to recover their correct depth-based ordering using only the 2D image as reference:

The RL prompt for the 3D jigsaw.

Each point is marked on the image (which is shown to the model, but not visualized in the prompt example in the image directly above), and the model must predict which was closest, second closest, and so on, effectively reconstructing the original depth sequence without access to the raw depth values.

The 3D jigsaw task is trained on RGB-D images from the ScanNet dataset, using 300,000 samples created by selecting random sets of six depth points per image.

Examples of point clouds from the ScanNet dataset used in the 3D jigsaw. Source: https://arxiv.org/pdf/1702.04405

Each point was required to lie within a depth range of 0.1 to 10 meters, and to promote diversity, no two points in a set were allowed to be closer than 40 pixels in the image plane, or less than 0.2 meters apart in depth.
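One simple way to satisfy these sampling constraints is rejection sampling, sketched below in Python. The function names, the `max_tries` cut-off, and the use of a plain 2D list of depths in meters are all assumptions for illustration; the source does not describe how the authors actually drew their points.

```python
import math
import random

def sample_depth_points(depth, num_points=6, min_depth=0.1, max_depth=10.0,
                        min_pixel_gap=40, min_depth_gap=0.2,
                        rng=None, max_tries=10000):
    """Pick (x, y, z) points from a depth map (2D list, meters), or None on failure."""
    if rng is None:
        rng = random.Random()
    height, width = len(depth), len(depth[0])
    chosen = []
    for _ in range(max_tries):
        x, y = rng.randrange(width), rng.randrange(height)
        z = depth[y][x]
        if not (min_depth <= z <= max_depth):
            continue
        # Keep the candidate only if it is far enough from every chosen point,
        # both in the image plane and in depth.
        if all(math.hypot(x - px, y - py) >= min_pixel_gap
               and abs(z - pz) >= min_depth_gap
               for px, py, pz in chosen):
            chosen.append((x, y, z))
            if len(chosen) == num_points:
                return chosen
    return None

def depth_order(points):
    """Ground truth: indices of the points sorted from nearest to farthest."""
    return sorted(range(len(points)), key=lambda i: points[i][2])

# Synthetic 640x480 depth map in which depth increases from left to right.
depth_map = [[0.5 + 0.01 * x for x in range(640)] for _ in range(480)]
points = sample_depth_points(depth_map, rng=random.Random(0))
truth = depth_order(points)
```

The minimum depth gap ensures that every pair of points has an unambiguous nearer and farther member, so the ground-truth ordering is well defined.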

Tests

For initial tests, the system utilized Qwen2.5-VL-7B-Instruct as the base multimodal model. Training used the Group Relative Policy Optimization (GRPO) algorithm, with both KL regularization and entropy loss removed.

For partial predictions, a discount factor of 0.2 was applied. Image jigsaw training used a global batch size of 256, while video and 3D jigsaws used 128. The learning rate was set to 1×10⁻⁶.

For each prompt, the model generated 16 responses at a decoding temperature of 1.0. Both image and video jigsaw tasks were trained for 1,000 steps, while the 3D jigsaw task was trained for 800 steps.
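For readers unfamiliar with GRPO, its core idea is that each sampled response is scored relative to the other responses generated for the same prompt, rather than against a learned value model. The Python sketch below shows that normalization step only, for a group of 16 rewards. The function name is hypothetical, the reward values are invented for the example, and the use of the population standard deviation is an assumption, since implementations vary on this detail.

```python
import statistics

def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the mean and spread of its own group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # assumption: population standard deviation
    # eps avoids division by zero when every response earns the same reward
    return [(r - mean) / (std + eps) for r in rewards]

# Illustrative group of 16 responses to one jigsaw prompt:
# one exact solution, five partial solutions, ten invalid answers.
rewards = [1.0] + [0.1] * 5 + [0.0] * 10
advantages = group_relative_advantages(rewards)
```

When every response in a group earns the same reward, all advantages are zero and the prompt contributes no learning signal, which is one reason graded partial credit can be useful on hard puzzles.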

Image Jigsaw

The image jigsaw model was tested across three categories of vision-centric benchmarks. For fine-grained perception and understanding, these were MMVP; the fine-grained perception subset of MMStar; MMBench; HR-Bench; V*; MME-RealWorld (lite); LISA-Grounding; and OVD-Eval.

For monocular spatial understanding, the benchmarks were VSR; OmniSpatial; and Depth Anything V2 (DA-2K). For compositional visual understanding, the tests employed Winoground and SugarCrepe++.

Three baselines were tested, all derived from Qwen2.5-VL-7B: ThinkLite-VL for multimodal reasoning; VL-Cogito for general vision and scientific tasks; and LLaVA-Critic-R1 for image perception.

All were evaluated using short answers only, since chain-of-thought (CoT) reasoning sometimes reduced performance.

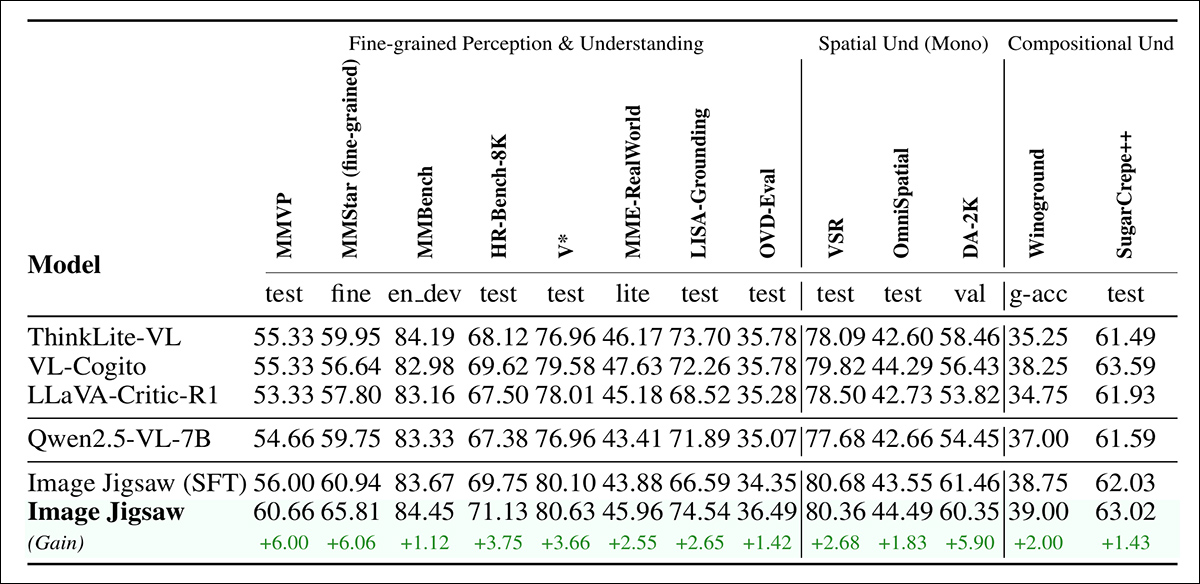

Evaluation results on image benchmarks. Image Jigsaw improved on the Qwen2.5-VL-7B base model across all task categories (i.e., fine-grained perception and understanding; monocular spatial understanding; and compositional visual reasoning), outperforming prior post-trained baselines.

Of the results for the image jigsaw, shown above, the authors state:

‘[The image above] shows that our method consistently improves the vision-centric capabilities on the three types of benchmarks. These results confirm that incorporating image jigsaw post-training significantly enhances MLLMs’ perceptual grounding and fine-grained vision understanding beyond reasoning-centric post-training strategies.

‘We attribute these improvements to the fact that solving image jigsaw requires the model to attend to local patch details, infer global spatial layouts, and reason about inter-patch relations, which directly benefits fine-grained, spatial, and compositional understanding.’

Video Jigsaw

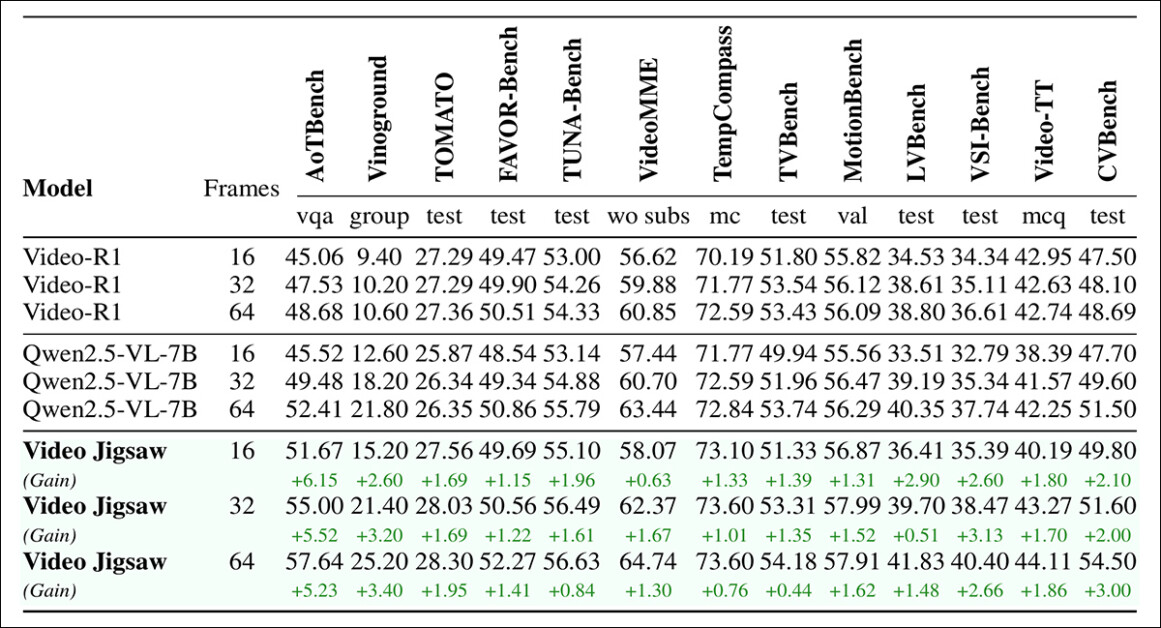

For video jigsaw, evaluation was conducted on AoTBench; Vinoground; TOMATO; FAVOR-Bench; TUNA-Bench; Video-MME; TempCompass; TVBench; MotionBench; LVBench; VSI-Bench; Video-TT; and CVBench.

Video-R1 was used as a baseline, having been trained with cold-start supervised fine-tuning followed by reinforcement learning for video understanding and reasoning. In this case, the evaluations included the full reasoning process, since this consistently produced better results than direct answers.

All models were restricted to 256x28x28 pixels, and tested across three frame settings – 16, 32, and 64:

Evaluation results on video benchmarks, with Video Jigsaw outperforming the baseline consistently across all tasks and frame settings.

Video Jigsaw produced consistent improvements across all video understanding benchmarks and frame settings, with gains especially strong on tasks requiring temporal reasoning and directionality, such as those in AoTBench, and also in cross-video reasoning benchmarks, such as CVBench:

‘These results confirm that solving video jigsaw tasks encourages the model to better capture temporal continuity, understand relationships across videos, reason about directional consistency, and generalize to holistic and generalizable video understanding scenarios.’

3D Data

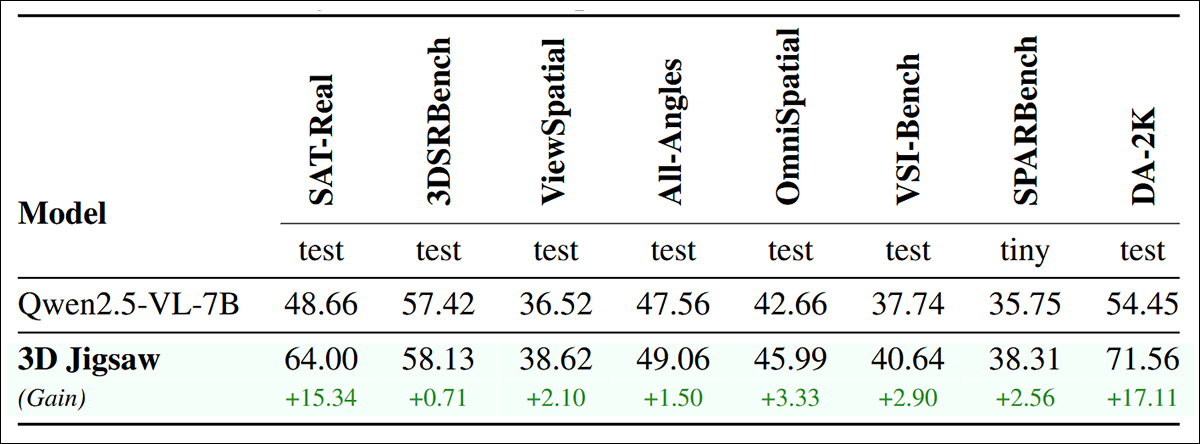

For the 3D modality, the model was evaluated on SAT-Real; 3DSRBench; ViewSpatial; All-Angles; the aforementioned OmniSpatial; VSI-Bench; SPARBench (tiny); and the aforementioned DA-2K.

Evaluation results on 3D benchmarks: 3D Jigsaw improved performance across depth comparison tasks such as DA-2K, as well as wider 3D perception benchmarks covering single-view, multi-view, and egocentric video inputs.

Here the authors state:

‘[3D] Jigsaw achieves significant improvements across all benchmarks. Unsurprisingly, the largest gain is on DA-2K, a depth estimation benchmark that is directly related to our depth-ordering pre-training task. More importantly, we observe consistent improvements on a wide range of other tasks, including those with single-view (e.g. 3DSRBench, [OmniSpatial]), multi-view (e.g. ViewSpatial, All-Angles), and egocentric video inputs (e.g. VSI-Bench).

‘These results demonstrate that our approach not only teaches the specific skill of depth ordering but also effectively strengthens the model’s general ability to perceive and reason about 3D spatial structure.’

Conclusion

What is not entirely clear from this paper is the exact relationship between image and descriptions that is powering this improvement in MLLM performance.

At first glance, the process of learning through puzzle-solving seems closely analogous to our own early forays and development. However, our own spatial interpretation of the world instinctively feels less semantic and less related to language, and a deeper look at the paper reveals the extent to which language serves MLLMs as an intriguing bridge between visual and semantic realities.

* Please note that the paper’s authors prefer the less-common term ‘Multimodal Large Language Models’, shortened to ‘MLLMs’. This is an emerging or infrequently-used term, and applies to models which can extensively reason and analyze images spatially, but which do not generate images. As new paradigms and models emerge, this lexicon is under constant revision.

First published Thursday, October 2, 2025