Anderson's Angle

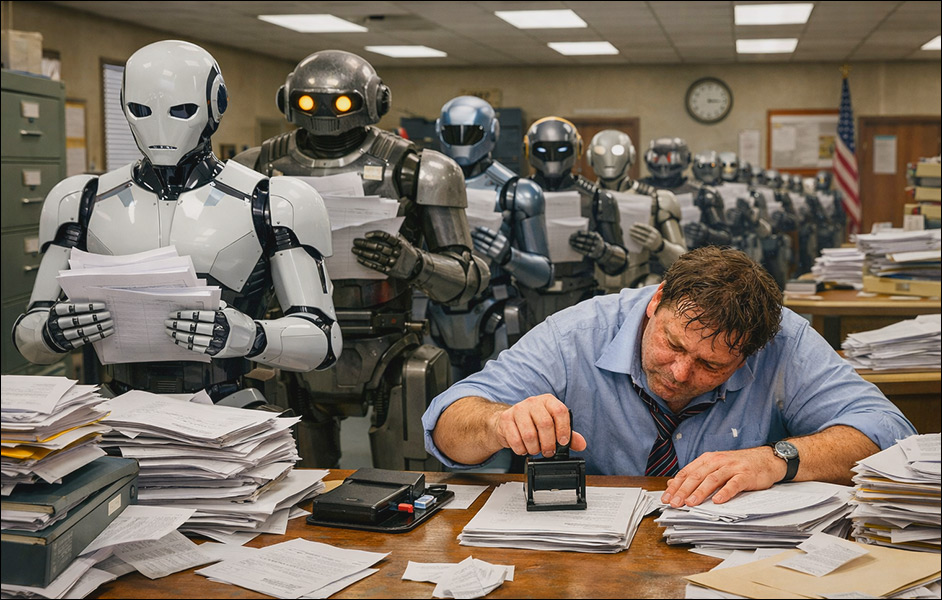

Transitioning to the Verification Economy

Checking AI’s work may become a major sector of the new machine learning economy: one that will need to scale considerably, and that cannot be automated. But as the years pass, the quality of human ‘experts’ is likely to deteriorate.

Opinion. My wife is an architect in one of the most log-jammed and intense bureaucracies in Europe. A significant part of the value of her education lies in obtaining and maintaining her right of signature: an expensive credential that must be renewed each year, and which allows her literally to ‘sign off’ on proposals whose implementation may cost hundreds of thousands, even millions, of euros.

She tells me that this is not the hardest part of her work, since it only formalizes her own calculations or those of others, and that the work of others is not usually difficult to check for this purpose.

Essentially, as is so often the case when appointing CEOs, this stamp (it is literally a stamp) mainly provides stakeholders with an ass to kick if things go wrong. In assuring accountability, it also facilitates insurance coverage and investor confidence, which would not be obtainable without such guarantees.

This is the second time in my life that I have seen this process directly in action: 25 years ago I was affianced to an oncologist in Italy, another notoriously glutinous EU bureaucracy, and saw the extent to which her expert signature was the last stage in a chain of trust to which many others besides herself had to contribute their expertise.

I heard from both my then-fiancée and, more recently, my wife that their professions were (and are) riddled with qualified hacks who sell their stamp and eschew more original or useful work as less profitable. Such cynical practitioners can charge high fees because they represent relatively scarce and essential resources.

Check It Out

This topic came to mind as I stumbled across a new and sprawling paper today, titled Some Simple Economics of AGI. In it, three researchers from MIT, Washington University in St. Louis, and UCLA depict a near future in which the terrifying, job-destroying impetus towards AI-driven automation collides with the need for real-world asses to kick in high-stakes scenarios, leading to a new economy of human verification, ratification and responsibility*.

The paper contrasts with the media’s current vision of shrunken business sectors, in which extensive offices are reduced to single-person ‘overseers’ whose decisions are being used as training data to (hopefully) fire even this last shred of meatware†.

Rather, the authors believe that practical considerations and compliance requirements will focus enormous attention on the ‘rubber-stamping’ humans who placate a company’s (AI/human/AI-aided) legal department:

‘For companies, the core strategic insight is that verification is no longer a mere compliance function, but a primary production technology—and increasingly, their most defensible one. This dictates a structural shift: investing heavily in observability, expanding verification-grade ground truth, and reorganizing around a “sandwich” topology (human intent → machine execution → human verification and underwriting).

‘In an economy where raw output is commoditized, competitive advantage migrates to the scarce talent and data capable of reliably steering and certifying agentic systems—generating network effects not in sheer output, but in trusted outcomes.’

The authors hypothesize that the defining constraint on growth may not be intelligence – which AI has now ‘decoupled from biology’ – but verification bandwidth.

Value Shifts Towards Human Verification

The paper describes the move toward AGI as a widening divergence between the expense of producing machine output and the expense of checking that output – the latter of which remains tied to finite human time and lived experience.

Generating plans, reports, designs, and recommendations would in this scenario become cheap and abundant, while determining which of them are sound, aligned, and safe enough to act on would become the ‘scarce function’. The effective limit on deployment would therefore not be how much output systems can produce, but how much of that output can be credibly verified.

Thus, the authors predict, instead of rewarding ever more specialized skill in measurable tasks, the system will begin to reward measurability itself: work that can be parametrized will drift toward commoditization as its execution cost approaches the marginal cost of compute, while value accrues instead to high-quality ground truth, reliable audit trails, and institutional mechanisms for assigning and absorbing responsibility.

Therefore, in a verification economy, advantage would lie less in producing content than in certifying outcomes and underwriting the risks attached to them.

If automation keeps accelerating while verification remains limited by human time and attention, the paper predicts the emergence of a Hollow Economy: as the cost of automating work falls, more and more agents would be deployed because it makes economic sense to do so, even though the capacity to properly check their output would not grow at the same rate. In that scenario, the share of work that is genuinely verified would shrink, with all the negative consequences that entails.

By contrast, in an Augmented Economy, verification capacity would expand in tandem with automation. This would involve deliberate investment in structured training to preserve expertise, as well as new liability frameworks capable of absorbing risk. Deployment would then be tied to what can actually be checked and insured; in effect, a very old bottleneck brought to center stage by technological development on an unprecedented scale:

‘In the technology sector, the dominant revenue model will shift from monetizing software access (Software-as-a-Service) to monetizing outcomes (“Software-as-Labor”). Consequently, firms will be valued primarily on their capacity to absorb tail risk through Liability-as-a-Service.

‘Execution is now infinitely scalable; the legal and financial capacity to absorb its inevitable failures is the new bottleneck.’

Diminishing Returns

Indeed, preserving human domain expertise is central to the problem since, according to the authors, a culture of industrialized oversight would, over time, risk degrading the quality of the overseers themselves: subsequent generations of overseers would no longer possess direct, lived experience of the domains requiring verification.

Arguably, at that stage, oversight itself would truly become susceptible to automation, since new decisions would be based solely on prior decisions. However, that would leave stakeholders without a kickable ass, or a viable business model. It would also render such a role so volatile and risk-strewn as to be unappealing, even in a climate of low employment.

Sequestering credentialed professionals such as doctors and architects in a well-paid but heavily burdened ‘rubber-stamping’ position is likely, over time, to erode their value in that role: the further their actual field experience recedes into the past, the more ‘theoretical’ their decisions may become, as their abandoned domain continues to evolve in their absence.

(This is familiar even in pre-AI business culture, in the form of skilled staff who progress into management and become increasingly out of touch with novel developments, eventually undermining their worth as overseers and organizers. It’s also familiar to Star Trek: TNG fans, in the form of the Pakleds: a race who use advanced technology extensively, but no longer know how to create or fix it.)

Entry-level execution has historically served as the training ground for future experts; but if automation eliminates the routine tasks through which judgment is cultivated, the future supply of capable verifiers will shrink, the authors suggest.

Thus the paper augurs a paradox: the more powerful agentic systems become, the more society will depend on a stock of human expertise that those same systems may erode.

And let’s remember that this is in no way a technical problem, nor one susceptible to a technological solution. In many ways this syndrome suggests the logistical equivalent of AI model collapse, in which models trained recursively on machine-generated output progressively degrade; except that here, what is being undermined is an economic model.

‘From a policy perspective, the core challenge is a profound structural asymmetry: the gains of AI deployment are aggressively privatized, while the systemic risks are socialized. Firms and individuals capture the upside of automation while externalizing catastrophic tail risks.

‘Without shared verification infrastructure and rigorous liability pricing, the market will rationally drift toward a Hollow Economy—an equilibrium characterized by explosive measured activity, but fundamentally hollowed-out human control.’

Conclusion: A Different Crisis

The authors define the predicted crisis as a measurability gap, wherein quantifiable processes can be automated away from all human contribution, leaving n-hard or n-legal processes that still require human expertise.

However, my wife’s experience suggests that the complexity or difficulty of a process is not necessarily related to the need for accountability in that process; many of the things she ‘signs off’ on are trivial problems or calculations in themselves, but consequential in the breach. And the more litigious business culture becomes, the more underwriters and investors will require human accountability across a wider range of processes.

So, transitioning to the verification economy could cause a different crisis to the one that is currently garnering headlines. The issue in such a case would not be whether AI can produce more, but whether institutions can verify enough of what is produced to translate machine intelligence into durable value.

Since machine intelligence may soon be scaling without precedent, and the supply of case-applicable human time cannot keep pace, the issues outlined in the new work seem likely to loom very quickly, even if they may initially be drowned out by the wider economic ramifications of AI adoption.

* The paper is too long to break down in the usual manner, and is in any case structurally unsuited to that kind of analysis. I have therefore chosen to comment on it and consider its significance instead, referring readers to the source work so that they can do likewise.

† /s

First published Wednesday, February 25, 2026