Why AI Agents in Enterprise Run Into a Knowledge Problem, Not a Technology One

Last year, S&P Global reported that the share of companies abandoning most of their AI initiatives more than doubled, from 17% to 42%. Before that, Gartner published a forecast on agentic AI projects: 40% of them will be shut down by the end of 2027.

According to McKinsey & Company, nearly half of all companies are experimenting with AI agents. But how many have moved beyond the pilot stage and are actually operational? About one in ten.

The industry has no shortage of explanations: model hallucinations, lack of governance, high GPU costs, and a shortage of specialists. All of these are real challenges. But after three years of working with knowledge management systems and AI agents, I am increasingly seeing a different pattern: companies passing incomplete data to their agents.

As a Doctor of Pedagogical Sciences, I view this as a knowledge transfer problem. If a person cannot explain how they make decisions, their logic cannot be transferred to a new employee — let alone to an AI agent. Let’s explore why this happens and what can be done about it.

Where knowledge about how a company actually operates resides

Ask a large company where employee knowledge is stored, and you’ll hear a long list: Confluence, SharePoint, LMS platforms, FAQ bots, Slack archives. It may seem like this is exactly the stack a RAG system can use to retrieve everything it needs. But one crucial element is missing — the knowledge that lives in people’s heads. Knowledge that no one has ever written down.

Why is this a problem?

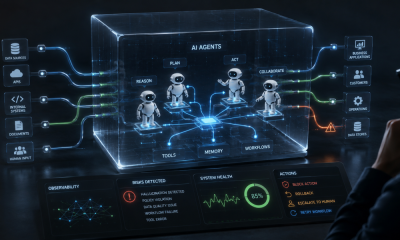

Because for an AI agent to take over part of a workflow — understand the context, choose an action, and carry a task through to completion — it needs not only access to a knowledge base, but also the decision-making logic used by an experienced specialist.

Imagine a new support agent receiving a request: a customer claims they have paid for a service, but access has not been activated. The script includes a standard set of steps that ends with asking the customer to wait. However, the agent notices that the situation is unusual: the customer has already contacted support twice, and there are several similar cases in the system over the past hour. They reach out to a more experienced colleague, who explains that they have seen this before and that the issue is most likely a failure at the intersection of the payment gateway, the bank, and the internal activation system — so the case should be escalated to another department.

For an AI agent, this logic is invisible. It may have access to the script, the ticket history, and the payment status if these data sources are connected, but it does not know which signals an experienced operator considers decisive. It’s not that experts are intentionally withholding this knowledge. They simply cannot formalize it or break it down into steps: which options were ruled out, why a particular action was chosen, and at what point it became clear that the standard scenario did not apply. Cognitive scientists refer to this phenomenon as tacit knowledge — implicit knowledge that even its holder may not be fully aware of.

This is why the bottleneck does not arise at the level of access to documents, but at the stage of converting expert experience into a format suitable for training an AI agent.

What to do about it

To make an AI agent work effectively, it is not enough to simply connect an LLM to a corporate knowledge base, because successful decisions often rely on tacit knowledge. A knowledge layer must first be created, including structured decision-making criteria.
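As a rough illustration of what a "structured decision-making criterion" might look like, the sketch below encodes the escalation logic from the support example above. The field names, action labels, and thresholds are all hypothetical; in practice they would come out of interviews with the expert, not from this article.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    # Hypothetical fields; a real ticketing system will expose different data.
    paid: bool
    access_active: bool
    prior_contacts: int           # earlier contacts by the same customer
    similar_cases_last_hour: int  # comparable tickets seen in the past hour


@dataclass
class Decision:
    action: str
    rationale: str


# Illustrative thresholds only; real values must be confirmed by the expert.
REPEAT_CONTACT_THRESHOLD = 2
CLUSTER_THRESHOLD = 3


def decide(ticket: Ticket) -> Decision:
    """Check the expert's escalation signals before falling back to the script."""
    if ticket.paid and not ticket.access_active:
        repeated = ticket.prior_contacts >= REPEAT_CONTACT_THRESHOLD
        clustered = ticket.similar_cases_last_hour >= CLUSTER_THRESHOLD
        if repeated and clustered:
            return Decision(
                "escalate",
                "Repeat contact plus a cluster of similar cases suggests a "
                "failure between payment and activation systems.",
            )
        return Decision("ask_to_wait", "Standard script applies.")
    return Decision("follow_script", "No payment/activation mismatch.")


example = decide(Ticket(paid=True, access_active=False,
                        prior_contacts=2, similar_cases_last_hour=4))
print(example.action, "|", example.rationale)
```

The point of writing the rule down this way is that the rationale travels with the action, so both a new employee and an agent can see which signals were decisive.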

In knowledge management, this process is called externalization: converting tacit knowledge into explicit knowledge. In other words, a company needs to understand not only what an expert does, but how they think. This is typically done through a series of in-depth interviews with a top expert. The expert should be paired with someone skilled in asking the right questions: a methodologist, knowledge engineer, or instructional design specialist. That person's task is not to write down an “instruction based on what the expert says,” but to reconstruct the criteria for choosing between options, break down edge cases, and surface typical mistakes that the expert already handles automatically.

Here, AI can help significantly: transcribing interviews, grouping similar cases, turning expert explanations into draft scenarios, and generating situations for validation. However, the final structure still needs to be reviewed and approved by the expert.

The result should be a working knowledge corpus. It can be used in two directions at the same time — to train new employees and to configure an AI agent. Both scenarios rely on the same foundation: structured experience from top specialists.

The alternative is to continue relying on the assumption that RAG over Confluence will somehow reconstruct logic that was never documented. In practice, this almost never works: the system may retrieve a relevant document, but it will not learn how to make decisions in situations where the correct action depends on context and experience.

How to check that an agent is ready to work

You have transformed expert knowledge into scenarios and configured the agent. But there is a gap between the agent’s plausible answers and real operational performance — and this gap only becomes visible during validation. At this stage, it is important to determine whether you have actually captured all the necessary knowledge.

A practical approach is scenario-based testing. You give the agent real cases from an expert’s daily work: a customer disputes a charge, an unusual email arrives, or a request appears that does not fit the basic script. The results should be evaluated not by another LLM, but by the same expert who helped build the knowledge corpus. If the agent takes a different path from the experienced specialist, it does not always mean the model is weak. More often, it indicates that a critical rule, exception, or example is missing from the corpus. In that case, the process goes back to the beginning: the methodologist clarifies the logic with the expert, the knowledge corpus is updated, instructions are refined, and the test is repeated.
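The loop described above can be sketched as a small harness that runs the agent on expert-labelled cases and collects every divergence for the expert to review. This is a minimal sketch under assumptions: `Scenario`, `run_scenarios`, and `toy_agent` are hypothetical names, and the agent is reduced to a function that returns an action label. The harness only flags mismatches; the judgment about why they happened stays with the expert.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    case: dict          # input handed to the agent (hypothetical shape)
    expert_action: str  # the action the expert says is correct


@dataclass
class Divergence:
    scenario: str
    expected: str
    actual: str


def run_scenarios(agent: Callable[[dict], str],
                  scenarios: list[Scenario]) -> list[Divergence]:
    """Run every scenario and return the cases where the agent and expert differ."""
    found = []
    for s in scenarios:
        actual = agent(s.case)
        if actual != s.expert_action:
            found.append(Divergence(s.name, s.expert_action, actual))
    return found


def toy_agent(case: dict) -> str:
    # Stand-in for a real agent: it only knows the standard script.
    return "ask_to_wait"


scenarios = [
    Scenario("isolated activation delay",
             {"prior_contacts": 0, "similar_cases_last_hour": 0},
             "ask_to_wait"),
    Scenario("clustered payment failure",
             {"prior_contacts": 2, "similar_cases_last_hour": 4},
             "escalate"),
]

divergences = run_scenarios(toy_agent, scenarios)
for d in divergences:
    print(f"{d.scenario}: expert chose {d.expected}, agent chose {d.actual}")
```

Each reported divergence is a prompt for another conversation with the expert about which rule or exception the corpus is missing, after which the same scenarios are run again.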

This cycle is not optional. It is the stage that separates an agent that merely “demonstrates potential” from one that actually performs work. It is a slow and unglamorous part of the process: it does not produce a flashy demo and requires the involvement of experts. But those who go through it systematically end up with agents that truly reduce routine workload for specialists. Those who skip it often find themselves, within six months, among the projects counted in Gartner's forecast that 40% will be cancelled.

Agentic AI does not fail because of technology — modern models are already capable of performing complex tasks. It fails because companies “feed” it incomplete knowledge. In 2024–2025, this could still be explained by the experimental stage. In 2026, this mistake already comes at a high cost.