The Shadow AI Jungle: Why Approving a Platform Is Not the Same as Securing What’s Built on It

Enterprise AI adoption is far from frictionless. Concerns about data control, regulatory compliance, and security have followed every stage of the journey. But as organizations ramp up their operations on major platforms such as Microsoft, Salesforce, and ServiceNow, there is a growing sense that the hardest governance questions are, at least partially, being addressed. Enterprise agreements are in place. Security reviews have been completed. The platforms are approved.

What that confidence tends to overlook is a different question entirely: not whether the platform is secure, but what is being built on it, and by whom.

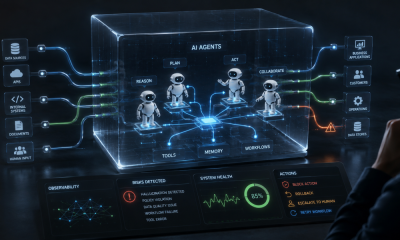

Across industries, a quiet revolution is underway as non-technical employees use enterprise AI platforms to create autonomous agents, automated workflows, and data-connected applications, often in minutes, without writing a single line of code. Free of traditional development timelines and restrictions, these builders are a boon to organizational efficiency. But the tools they create are rarely, if ever, reviewed by a security team. In many cases, security teams don’t know they exist at all.

These tools, whether classified as apps, agents, or automations, are part of a growing problem known as Shadow AI. It represents one of the most significant shifts in enterprise risk in a decade, for one simple reason: the threats have moved inside.

The original Shadow IT problem was relatively straightforward: employees were using unauthorized tools from outside the organization, and the job of security was to find and block them. Shadow AI is a different challenge entirely. The tools are inside the platforms you approved. The people building them are your own employees. The access they’re using is legitimate. And none of it passes through the security processes designed to catch problems before they reach production.

What makes this particularly difficult to address is scale. Most security leaders significantly underestimate how much is being built inside their own environments. Recent research drawing on more than 200 enterprise CISOs and security leaders found that the average enterprise security team can account for only 44% of the AI agents, automations, and applications their business users have created. That’s not a gap. It’s a blind spot that covers the majority of what’s running.

The reason is straightforward: business users now outnumber professional developers by as much as 10 to 1 in some organizations. They are constantly building across every department, on platforms that were designed to make building easy, and the C-suite is encouraging them to do so. Security teams, meanwhile, are oriented around developer pipelines and code repositories. Their processes and tooling were never designed to monitor this.

The most common misconception is the belief that approving a platform solves the security problem. It doesn’t. It just moves it. When an enterprise signs an agreement with Microsoft, Salesforce, or UiPath, the platform provider secures its own infrastructure. What employees build on top of it, and how they configure it, is entirely the enterprise’s responsibility.

The problem is that the tools business users create don’t look like software to traditional security systems. There’s no code to scan, no repository to monitor, no pipeline to inspect. An AI agent built by an HR manager through a series of menus and text prompts is, from the perspective of most security tooling, invisible.

And yet these tools are far from trivial. Research has found that more than half of the CISOs surveyed confirmed that business-built applications now support business-critical processes and have access to sensitive company data. The stakes are real, and the oversight hasn’t caught up.

From Zero to Disaster

The use cases are as diverse as they are numerous, and they come from virtually every department, even ones that a security team would never have thought to keep an eye on.

For example, a marketing coordinator builds a customer-facing AI agent on a fully sanctioned platform to answer product questions. In a matter of minutes, the agent is up and running. But the coordinator has no security training, and two small configuration mistakes go unnoticed, leaving the agent with direct access to the company’s entire database and no boundaries on what it can retrieve. In production, a user asks it to pull employee records. It does. Because the agent also has an email capability, the user instructs it to send that data to a personal address. The entire sequence takes under sixty seconds. No unauthorized access. No platform breach. No security alert.

This isn’t a sophisticated attack. It’s the predictable outcome of a well-intentioned employee building something they didn’t fully understand, on a platform that made building easy and governance optional.
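To make the scenario concrete, here is a minimal sketch of what that kind of misconfiguration might look like, and how a simple automated check could flag it before the agent goes live. Everything in it is an assumption made for illustration: the `AgentConfig` fields, the `"*"` wildcard convention, and the `find_risky_settings` helper are hypothetical and do not reflect the settings of any real platform.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentConfig:
    """Hypothetical settings for a business-built agent (not a real platform schema)."""
    name: str
    owner: str
    # Data sources the agent is connected to; "*" is assumed to mean "everything".
    data_sources: list[str] = field(default_factory=list)
    # A filter limiting which records can be retrieved; None is assumed to mean "no limit".
    retrieval_filter: Optional[str] = None
    can_send_email: bool = False


def find_risky_settings(config: AgentConfig) -> list[str]:
    """Return plain-language findings for overly broad settings."""
    findings = []
    if "*" in config.data_sources:
        findings.append("agent is connected to every data source")
    if config.retrieval_filter is None:
        findings.append("no limit on which records can be retrieved")
    # Email is only flagged when data access is already too broad.
    if config.can_send_email and findings:
        findings.append("email capability could send over-exposed data anywhere")
    return findings


product_bot = AgentConfig(
    name="product-faq-agent",
    owner="marketing-coordinator",
    data_sources=["*"],      # whole database instead of just the product catalog
    retrieval_filter=None,   # no boundary on what can be retrieved
    can_send_email=True,
)

for finding in find_risky_settings(product_bot):
    print(f"{product_bot.name}: {finding}")
```

A check like this only helps if someone knows the agent exists and runs the check, which is precisely the visibility problem described above.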

The Governance Gap Nobody Has Priced In

For most organizations, the Shadow AI problem remains abstract until something goes wrong. But the business risk runs deeper than breach response.

When a business-built agent leaks sensitive data, the question a board will ask isn’t “How did the misconfiguration happen?” It will be “How did nobody know the tool was running?” Board members won’t distinguish between a breach caused by an external attacker and one caused by a misconfigured internal tool. If personal data was exposed and the organization lacked visibility into what was running, the absence of oversight is itself the liability. “An employee built it on an approved platform” is not a defense. It’s a description of the gap.

The urgency is real, but intention and execution are not the same thing, and for most enterprises, the gap between them remains wide open.

The answer isn’t to restrict who can build. Locking down citizen development would sacrifice genuine productivity gains and, in practice, wouldn’t hold. Employees would find workarounds. The answer is to bring what’s being built into view, and to govern it at the point where risk actually emerges: runtime.

That means understanding not just what agents exist, but how they behave, what data they access, what systems they touch, and whether their actions stay within the boundaries their builders intended. It means setting guardrails that operate at the organizational level, not just at the point of configuration. And it means getting to a place where security teams can answer the most basic questions about any agent in their environment: who built it, what does it have access to, and is it behaving the way it was designed to?
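As a rough illustration of those three questions, the sketch below pairs an inventory record (who built the agent and what it was meant to do) with a list of observed runtime events, and reports anything that falls outside the declared boundaries. The `AgentRecord` and `ObservedEvent` types, the field names, and the sample data are all hypothetical; a real deployment would draw this information from whatever logs and metadata its platforms actually expose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical inventory entry: who built the agent and what it is meant to do."""
    name: str
    built_by: str
    declared_data: frozenset[str]
    declared_actions: frozenset[str]


@dataclass(frozen=True)
class ObservedEvent:
    """Hypothetical runtime event: one thing an agent actually did."""
    agent: str
    action: str
    data: str


def out_of_bounds(record: AgentRecord, events: list[ObservedEvent]) -> list[ObservedEvent]:
    """Return this agent's events that fall outside its declared actions or data."""
    return [
        e for e in events
        if e.agent == record.name
        and (e.action not in record.declared_actions or e.data not in record.declared_data)
    ]


record = AgentRecord(
    name="product-faq-agent",
    built_by="marketing-coordinator",
    declared_data=frozenset({"product_catalog"}),
    declared_actions=frozenset({"read"}),
)

events = [
    ObservedEvent("product-faq-agent", "read", "product_catalog"),
    ObservedEvent("product-faq-agent", "read", "employee_records"),
    ObservedEvent("product-faq-agent", "send_email", "employee_records"),
]

for event in out_of_bounds(record, events):
    print(f"{event.agent} (built by {record.built_by}): {event.action} on {event.data}")
```

The point of the design is that the boundaries live in an organization-level record rather than in the agent’s own configuration, so a builder’s mistake in one does not silently disable the other.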

Most enterprises can’t answer those questions today. The organizations that get there first will be the ones that can scale AI adoption with confidence, because they’ll know what they’re actually running.