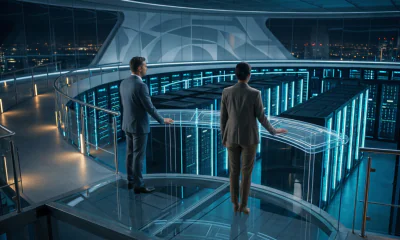

Cybersecurity

Deep Learning Used to Trick Hackers

A group of computer scientists at the University of Texas at Dallas has developed a new approach to defending against cyberattacks. Rather than blocking hackers, they lure them in.

The newly developed method is called DEEP-Dig (DEcEPtion DIGging). It lures hackers into a decoy site so that a machine learning system can observe their tactics. The system is then trained on that information to recognize and stop future attacks.

The UT Dallas researchers presented their paper, “Improving Intrusion Detectors by Crook-Sourcing,” at the Annual Computer Security Applications Conference in December in Puerto Rico. The group also presented “Automating Cyberdeception Evaluation with Deep Learning” at the Hawaii International Conference on System Sciences in January.

DEEP-Dig is part of an increasingly popular cybersecurity field called deception technology. As the name suggests, the field relies on traps set for hackers. The researchers hope the approach will prove especially useful for defense organizations.

Dr. Kevin Hamlen is a Eugene McDermott Professor of computer science.

“There are criminals trying to attack our networks all the time, and normally we view that as a negative thing,” he said. “Instead of blocking them, maybe what we could be doing is viewing these attackers as a source of free labor. They’re providing us data about what malicious attacks look like. It’s a free source of highly prized data.”

The approach addresses some of the major problems with using artificial intelligence (AI) for cybersecurity. One of those problems is a shortage of the data needed to train computers to detect hackers, a shortage caused by privacy concerns. According to Gbadebo Ayoade MS’14, PhD’19, better data means a better ability to detect attacks. Ayoade presented the findings at the conferences and is now a data scientist at Procter & Gamble Co.

“We’re using the data from hackers to train the machine to identify an attack,” said Ayoade. “We’re using deception to get better data.”

Hackers typically begin with simpler tricks and become progressively more sophisticated, according to Hamlen. Most cyber defense programs in use today try to disrupt intruders immediately, so the intruders’ techniques are never learned. DEEP-Dig addresses this by steering hackers into a decoy site full of disinformation, where their techniques can be observed. According to Dr. Latifur Khan, professor of computer science at UT Dallas, the decoy site appears legitimate to the hackers.

“Attackers will feel they’re successful,” Khan said.
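
The redirect-and-learn idea described above can be illustrated with a small Python sketch. It is a toy under stated assumptions, not the researchers' implementation: the class names `CrookSourcedDetector` and `DecoyRouter`, the `matches_known_exploit` flag, and the token-counting model are all hypothetical stand-ins (the real system uses deep learning, and the article does not say how an attack is first recognized). The sketch only shows the flow: a flagged session is sent to a decoy instead of being blocked, and everything the intruder does there becomes labeled training data.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class CrookSourcedDetector:
    """Toy token-frequency detector (a stand-in for a real learned model)."""
    attack_tokens: Counter = field(default_factory=Counter)
    benign_tokens: Counter = field(default_factory=Counter)

    def learn(self, request: str, is_attack: bool) -> None:
        # Count each token under the label supplied by the router.
        target = self.attack_tokens if is_attack else self.benign_tokens
        target.update(request.lower().split())

    def score(self, request: str) -> float:
        """Fraction of tokens seen more often in attack than benign traffic."""
        tokens = request.lower().split()
        if not tokens:
            return 0.0
        suspicious = sum(
            1 for t in tokens if self.attack_tokens[t] > self.benign_tokens[t]
        )
        return suspicious / len(tokens)


class DecoyRouter:
    """Routes flagged sessions to a decoy and harvests their requests."""

    def __init__(self, detector: CrookSourcedDetector) -> None:
        self.detector = detector
        self.decoy_sessions: set[str] = set()
        self.decoy_log: list[tuple[str, str]] = []

    def handle(self, session_id: str, request: str,
               matches_known_exploit: bool = False) -> str:
        # Assumption: some upstream check sets matches_known_exploit.
        if matches_known_exploit:
            self.decoy_sessions.add(session_id)
        if session_id in self.decoy_sessions:
            # Do not block: keep the intruder busy and record what they try.
            self.decoy_log.append((session_id, request))
            self.detector.learn(request, is_attack=True)
            return "decoy"
        self.detector.learn(request, is_attack=False)
        return "production"
```

In this sketch, once a session is flagged, every later request from it is served by the decoy and labeled as attack traffic, so the detector keeps learning for as long as the intruder keeps working.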

Cyberattacks are a major concern for governmental agencies, businesses, nonprofits, and individuals. According to a report to the White House from the Council of Economic Advisers, the attacks cost the U.S. economy more than $57 billion in 2016.

DEEP-Dig could play a major role in helping defense tactics evolve as quickly as hacking techniques do. Intruders could try to disrupt the method if they realize they have entered a decoy site, but Hamlen is not overly concerned.

“So far, we’ve found this doesn’t work. When an attacker tries to play along, the defense system just learns how hackers try to hide their tracks,” Hamlen said. “It’s an all-win situation — for us, that is.”

Other researchers involved in the work include Frederico Araujo PhD’16, research scientist at IBM’s Thomas J. Watson Research Center; Khaled Al-Naami PhD’17; Yang Gao, a UT Dallas computer science graduate student; and Dr. Ahmad Mustafa of Jordan University of Science and Technology.

The research was partly supported by the Office of Naval Research, the National Security Agency, the National Science Foundation, and the Air Force Office of Scientific Research.