Anderson's Angle

Beauty Filters Are a Potential Deepfake Attack Tool

Beauty filters now do more than hide blemishes: they can help deepfakes and face morphs slip past detection systems. A new study shows that even subtle smoothing effects confuse AI detectors, making fake images look real and real ones look fake. If the trend continues, beauty filters could face restrictions in high-stakes contexts, from border control to corporate Zoom calls.

In a 2024 academic collaboration between Spain and Italy, researchers reported that 90% of women aged 18-30 used beauty filters before posting images on social media. In this context, beauty filters are algorithmic or AI-aided methods of altering facial appearance so that it is supposedly improved over the original:

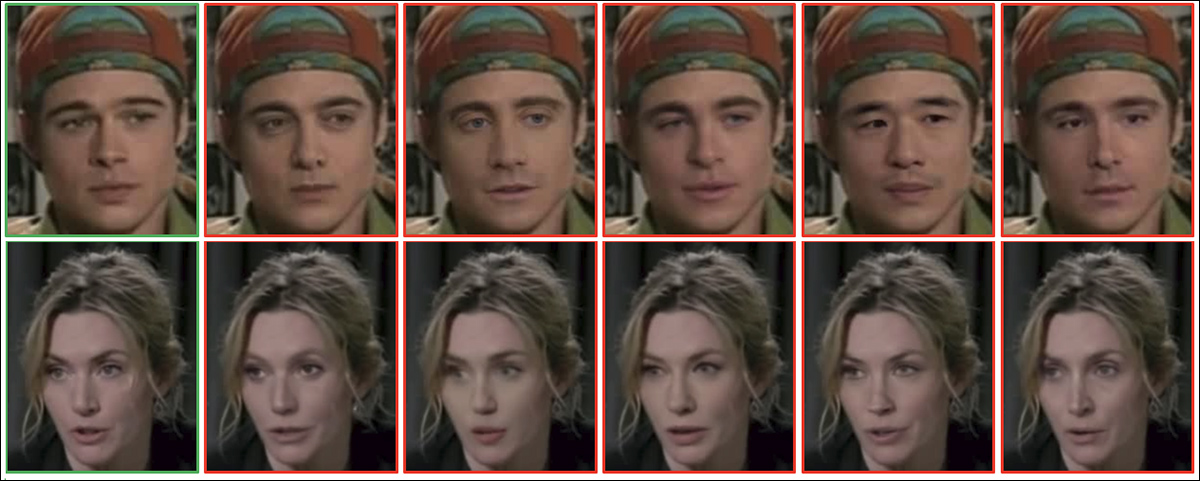

From the 2024 study: example female (left) and male (right) faces shown before and after beauty filtering. The filter alters features such as skin tone, eyes, nose, lips, chin, and cheekbones to enhance perceived attractiveness. Source: https://arxiv.org/pdf/2407.11981

Such filters are also widely available as native or add-on functionality in popular video-based systems including Snapchat and Zoom, offering the capability of smoothing out ‘imperfect’ skin and even altering the age of the subject, up to the point where identity could be said to be substantially altered:

Click to play: three women turn off their video ‘beautification’ filters, revealing the extent to which their physiognomies are altered by the algorithms or AI. Source: https://www.youtube.com/watch?v=gtCpea_5qxw

The phenomenon seemed to reach saturation point as soon as the technology was relatively mature; a 2020 study from the City University of London revealed that 90% of young Snapchat users in the United States, France and the UK used filters in their apps, while Meta reported that more than 600 million young people had at that point used filters on Facebook or Instagram.

Exploring the adverse effects of such filters on mental health, a Psychology Today report noted that, of those studied, 90% of young women, with an average age of 20, either used filters or in some way edited their photos. The most popular filters were those used to even out skin tones; give a tanned appearance; whiten teeth; and even reduce body size.

Face Off

As face filters are set to benefit from the 2025 revolution in video synthesis, and more generally from the ongoing interest in this research sector, the extent to which we can ‘recreate’ or re-imagine ourselves in live video chats is increasingly abrading against the security community’s concern about fraudulent or criminal deepfake video techniques.

One issue is that the gamut of ‘easy’ tests developed in recent years to reveal a video deepfaker, who may be seeking to defraud large amounts of money in a corporate context, is inevitably becoming less effective as these Achilles heels are accounted for in training data and at inference time:

Click to play: three or four years ago, waving a hand in front of a deepfaked face was a reliable test for video calls, but we can see that dedicated efforts from the likes of TikTok are making deep in-roads into the classic ‘tells’. Sources: Ibid and https://archive.is/mofRV#wavehands

More critically, the widespread use of face-changing/altering beautification filters muddies the waters for a new and emerging generation of deepfake detectors tasked with keeping imposters out of boardroom video chats, and away from vulnerable potential victims of ‘kidnap’ and ‘imposter’ fraud.

It is easier to make a deepfake convincing, whether photo or video, if the resolution of the image is low or the image somehow degraded, since the underlying faking system can hide its own shortcomings behind what appear to be connectivity or platform issues.

In effect, the most popular beautification filters remove some of the most useful material in identifying video deepfakes, such as skin texture and other facial areas of detail – and it’s worth considering that the older a face is, the more such detail it is likely to contain, and therefore the use of vanity de-aging filters may be a particular temptation in such cases.

If a detail-free ‘android’-style look is the vogue, deepfake detectors may lack the material they need to distinguish between real and fake images and video personages. Source: https://www.instagram.com/reel/DMyGerPtTPF/?hl=en

The Royal Society’s late 2024 paper What is beautiful is still good: the attractiveness halo effect in the era of beauty filters confirmed that the use of filters universally increases general attractiveness across both sexes (though increased attractiveness tends to lower general estimation of intelligence in beauty-filtered females).

Therefore it’s fair to say that this is a popular technology; that it works; and that it would be something of a culture shock were it suddenly to become subject to security limitations, or banned in diverse contexts.

Nonetheless, in a period where video deepfakes threaten to become indistinguishable from genuine video participants, in both appearance and speech, it’s possible that the sheer global ‘noise’ from beauty filters may need to be mitigated in the future, for security reasons.

Smooth Criminals

The issue has been most recently investigated in a new paper from the University of Cagliari in Italy, titled Deceptive Beauty: Evaluating the Impact of Beauty Filters on Deepfake and Morphing Attack Detection.

In the new study, researchers applied beauty filters to faces from two benchmark datasets, and tested several deepfake and morphing attack detectors on both the original and altered images.

In nearly every case, detection accuracy fell after beautification; the most dramatic drops occurred when filters smoothed out wrinkles, brightened skin tone, or subtly reshaped facial features. These changes removed or distorted the very cues that detection models rely on.

For example, the top-performing model on MorphDB lost more than 9% accuracy after filtering, and the problem persisted across multiple detection architectures, indicating that current systems are not robust to common cosmetic enhancements.

The authors conclude:

‘[Beautification] filters pose a threat to the integrity of biometric authentication and forensic analysis systems, making robust deepfake and morph attack detection under such conditions a critical open challenge.

‘Future work should prioritize the development of digital manipulation detection systems that are robust to such subtle, real-world alterations, whether malicious or not, to ensure reliable identity recognition and content verification in everyday and security-critical contexts.’

Method and Data

To evaluate how beauty filters affect deepfake and morph detection, the researchers applied a progressive smoothing filter and tested the results on two benchmark convolutional neural networks (CNNs) well-associated with the target problem: AlexNet and VGG19.

Each of the two benchmark datasets tested contained examples of apposite facial manipulations. The first was CelebDF, a large-scale video benchmark featuring 590 real and 5,639 forged clips created through advanced face-swap techniques. The collection offers a diverse range of lighting conditions, head poses, and natural expressions across 59 individuals, making it well suited for evaluating the resilience of deepfake detectors in real-world media scenarios:

Examples of affected facial images, from CelebDF. Source: https://github.com/yuezunli/celeb-deepfakeforensics

The second was AMSL (registration required), a morphing attack dataset built from the Face Research Lab London collection, containing both real and synthetically blended faces. The dataset includes neutral and smiling expressions from 102 subjects, and was structured to reflect realistic challenges for biometric authentication systems used in contexts such as border control.

Images from the source Face Research Lab London set (FRLL) dataset, used in AMSL. Source: https://figshare.com/articles/dataset/Face_Research_Lab_London_Set/5047666?file=8541955

Each network was trained on 80% of the deepfake or morphing dataset, with the remaining 20% used for validation. To test robustness against beautification, the researchers then applied a progressive smoothing filter to the test images, increasing the blur radius from 3% to 5% of the face height:

Example faces from the CelebDF (top) and AMSL (bottom) datasets, shown before and after application of a smoothing-based beauty filter. The filter radius was scaled relative to face height, with c = 3% and c = 5% producing progressively stronger effects.
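The idea of scaling the filter radius to face height can be sketched roughly as below. This is only an illustration under stated assumptions: the article does not specify the exact filter the researchers used, so a plain box blur stands in for it, and the names `box_blur`, `smooth_face` and the toy checkerboard ‘face’ are hypothetical.

```python
def box_blur(image, radius):
    """Mean filter over a (2*radius+1) square window, clipped at the borders."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            y0, y1 = max(0, y - radius), min(h, y + radius + 1)
            x0, x1 = max(0, x - radius), min(w, x + radius + 1)
            window = [image[j][i] for j in range(y0, y1) for i in range(x0, x1)]
            out[y][x] = sum(window) / len(window)
    return out


def smooth_face(face, c):
    """Blur a face crop with radius = c * face height (at least 1 pixel).

    Assumption: the crop's row count is used as the face height.
    """
    radius = max(1, round(c * len(face)))
    return box_blur(face, radius)


def variance(image):
    """Pixel variance, used here as a crude proxy for remaining fine detail."""
    pixels = [p for row in image for p in row]
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) ** 2 for p in pixels) / len(pixels)


# Toy 40x40 grayscale 'face': a checkerboard, i.e. pure high-frequency detail.
face = [[255.0 if (x + y) % 2 == 0 else 0.0 for x in range(40)] for y in range(40)]

original_detail = variance(face)
detail_by_level = {c: variance(smooth_face(face, c)) for c in (0.03, 0.05)}

for c, detail in detail_by_level.items():
    print(f"c = {c:.0%}: remaining variance {detail:.1f} (original {original_detail:.1f})")
```

On this toy input, the stronger setting leaves less pixel variance than the weaker one, which is the kind of fine-detail loss that the article suggests deprives detectors of useful cues.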

Regarding metrics, each model was evaluated using Equal Error Rate (EER) on both the original and beautified test samples. The analysis also reported the Bona Fide Presentation Classification Error Rate (BPCER), or false positive rate, and the Attack Presentation Classification Error Rate (APCER), or false negative rate, using the threshold set on the original data.
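A minimal sketch of this evaluation protocol follows. It assumes that a higher detector score means ‘more likely an attack’, and all scores are invented toy values rather than figures from the paper; the helper names are hypothetical. The threshold is found at the approximate equal-error point on the original scores, then reused unchanged on the beautified scores.

```python
def bpcer(bona_fide_scores, threshold):
    """Share of genuine samples wrongly flagged as attacks (score >= threshold)."""
    return sum(s >= threshold for s in bona_fide_scores) / len(bona_fide_scores)


def apcer(attack_scores, threshold):
    """Share of attack samples wrongly accepted as genuine (score < threshold)."""
    return sum(s < threshold for s in attack_scores) / len(attack_scores)


def eer_threshold(bona_fide_scores, attack_scores):
    """Return (threshold, eer) where BPCER and APCER are closest to each other."""
    candidates = sorted(set(bona_fide_scores) | set(attack_scores))
    best = min(
        candidates,
        key=lambda t: abs(bpcer(bona_fide_scores, t) - apcer(attack_scores, t)),
    )
    eer = (bpcer(bona_fide_scores, best) + apcer(attack_scores, best)) / 2
    return best, eer


# Hypothetical detector scores (not from the paper).
orig_real = [0.1, 0.2, 0.3, 0.6]
orig_fake = [0.4, 0.7, 0.8, 0.9]
filt_real = [0.3, 0.5, 0.65, 0.7]    # same faces after beautification
filt_fake = [0.35, 0.55, 0.8, 0.9]

# Threshold is fixed on the original data only.
threshold, eer = eer_threshold(orig_real, orig_fake)

# The same threshold is then applied to the beautified samples.
filtered_bpcer = bpcer(filt_real, threshold)
filtered_apcer = apcer(filt_fake, threshold)

print(f"threshold={threshold}, EER on originals={eer:.2%}")
print(f"beautified: BPCER={filtered_bpcer:.2%}, APCER={filtered_apcer:.2%}")
```

Fixing the threshold in this way mimics a deployed system that was calibrated before anyone switched a filter on, which is why the two error rates can drift apart in different directions.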

Morphed faces from the AMSL dataset.

To further assess performance, the Area Under the ROC Curve (AUC) and the score distributions for real and fake images were examined.
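For readers unfamiliar with AUC, it can be read as the probability that a randomly chosen fake scores higher than a randomly chosen real image. The sketch below computes it by direct pairwise comparison, again assuming that higher scores mean ‘more likely fake’; the scores are hypothetical toy values, not results from the study.

```python
def auc(bona_fide_scores, attack_scores):
    """Probability that a random attack outscores a random bona fide sample.

    Ties count as half a win; this equals the area under the ROC curve.
    """
    wins = 0.0
    for a in attack_scores:
        for b in bona_fide_scores:
            if a > b:
                wins += 1.0
            elif a == b:
                wins += 0.5
    return wins / (len(attack_scores) * len(bona_fide_scores))


# Hypothetical detector scores before and after beautification.
orig_real, orig_fake = [0.1, 0.2, 0.3, 0.6], [0.4, 0.7, 0.8, 0.9]
filt_real, filt_fake = [0.3, 0.5, 0.65, 0.7], [0.35, 0.55, 0.8, 0.9]

auc_original = auc(orig_real, orig_fake)
auc_filtered = auc(filt_real, filt_fake)

print(f"AUC original: {auc_original:.4f}, AUC beautified: {auc_filtered:.4f}")
```

A value of 1.0 would mean perfectly separated score distributions, while 0.5 would mean the detector does no better than chance.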

Tests

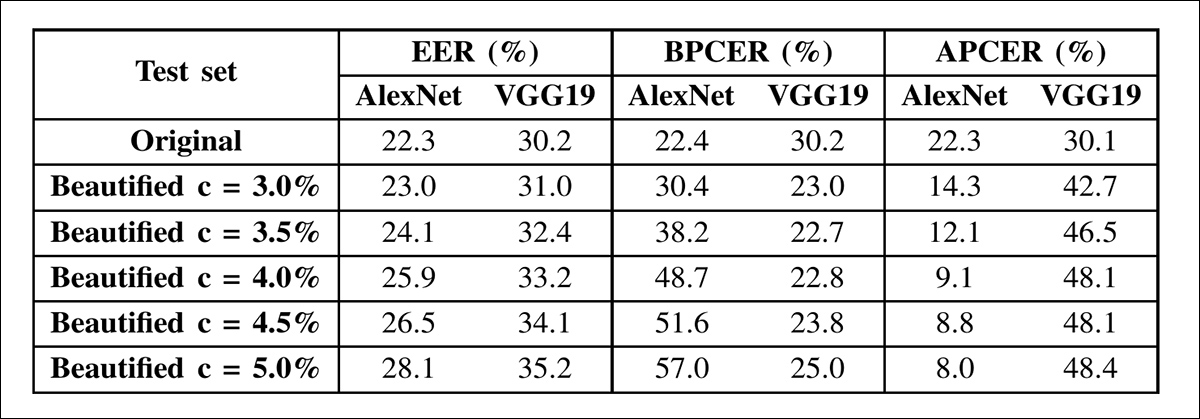

In the deepfake detection results, both networks showed rising Equal Error Rates as beautification intensity increased. AlexNet’s EER rose from 22.3% on the original images to 28.1% at the highest smoothing level, while VGG19’s increased from 30.2% to 35.2%. The performance drop followed different patterns in each case:

Deepfake detection results on the CelebDF dataset using AlexNet and VGG19. Both models were tested on original and beautified images with increasing levels of facial smoothing. Metrics shown include Equal Error Rate (EER), Bona Fide Presentation Classification Error Rate (BPCER), and Attack Presentation Classification Error Rate (APCER), using thresholds fixed from the original test set. Detection accuracy declined as beautification intensity increased, though the pattern of degradation differed between architectures.

As beautification increased, AlexNet became more likely to mistake real faces for fakes, shown by a steady rise in its false positive rate (BPCER). VGG19, on the other hand, mostly struggled to catch the fakes themselves, with a growing false negative rate (APCER). The two models responded differently to the filters, indicating that even well-known architectures can fail in different ways when faced with cosmetic alterations.

To better understand these results, the researchers applied the beautification filter separately to real and fake images and measured the impact on detection performance. This breakdown, shown in the results table below, clarifies the source of each model’s vulnerability:

Detection accuracy for AlexNet and VGG19 under partial beautification on the CelebDF dataset. The table reports three scenarios: beautified real vs beautified fake (F-Real vs F-Fake); beautified real vs original fake (F-Real vs O-Fake); and original real vs beautified fake (O-Real vs F-Fake), with a decrease in accuracy indicating reduced detector performance. AlexNet was most affected when real images were beautified, while VGG19 struggled most when beautification was applied to fake images.

For AlexNet, detection performance dropped when real images were beautified, but improved when only fake images were smoothed. For VGG19, performance improved slightly with beautified real images but worsened when deepfakes were filtered. In all cases, stronger beautification reduced accuracy.
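The three partial-beautification scenarios can be laid out as in the sketch below. It assumes a fixed decision threshold of 0.6 and that higher scores mean ‘more likely fake’; the scores are hypothetical toy values chosen only to show the bookkeeping, and do not reproduce either network’s behavior.

```python
def accuracy(real_scores, fake_scores, threshold):
    """Fraction of samples classified correctly at a fixed threshold."""
    correct_real = sum(s < threshold for s in real_scores)
    correct_fake = sum(s >= threshold for s in fake_scores)
    return (correct_real + correct_fake) / (len(real_scores) + len(fake_scores))


THRESHOLD = 0.6  # assumed to have been fixed on the original test data

# Hypothetical scores: O = original, F = filtered (beautified).
o_real, o_fake = [0.1, 0.2, 0.3, 0.6], [0.4, 0.7, 0.8, 0.9]
f_real, f_fake = [0.3, 0.5, 0.65, 0.7], [0.35, 0.55, 0.8, 0.9]

scenarios = {
    "O-Real vs O-Fake (baseline)": (o_real, o_fake),
    "F-Real vs F-Fake": (f_real, f_fake),
    "F-Real vs O-Fake": (f_real, o_fake),
    "O-Real vs F-Fake": (o_real, f_fake),
}

results = {
    name: accuracy(real, fake, THRESHOLD) for name, (real, fake) in scenarios.items()
}

for name, acc in results.items():
    print(f"{name}: accuracy {acc:.3f}")
```

Separating the scenarios in this way shows whether a detector’s losses come from filtered real faces, filtered fakes, or both.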

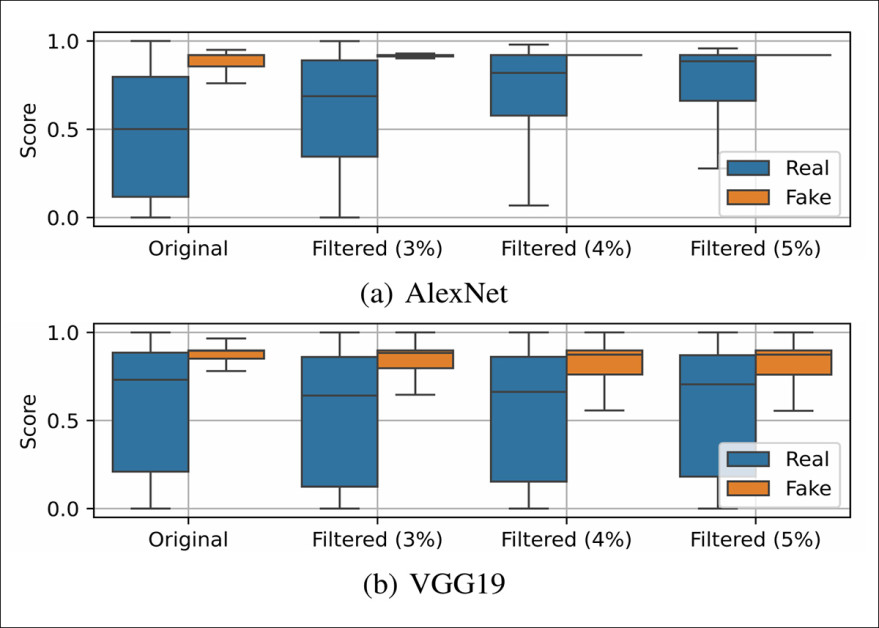

Box-plots showing the output score distributions of AlexNet and VGG19 on real and fake samples from the CelebDF dataset, under increasing levels of beautification. As smoothing intensifies, the score distributions for real and fake images become less separable, indicating reduced detection reliability. AlexNet becomes less confident in identifying real faces, while VGG19 becomes more likely to misclassify fake ones.

Beautification made AlexNet’s output scores more uniform across both real and fake samples, while VGG19 showed greater spread within each class. Despite these contrasting effects on score variability, both models lost accuracy. For AlexNet, the scores for real faces shifted closer to those of deepfakes. For VGG19, the scores for real and fake images began to overlap, reducing the model’s ability to tell them apart.

The paper states:

‘These outcomes have important implications and reveal that it is necessary to consider the potential use of beautification filters in deepfake detection, since these may have an unpredictable impact on the performance. In particular, different architectures respond differently to facial manipulations, even if those manipulations are not meant to deceive.

‘For instance, based on the specific deepfake detector, the beautification filters could significantly alter the output, making real images identified as fakes and, more critically, allowing deepfakes to deceive the detection.

‘Therefore, it is necessary to focus on the development of detectors more robust to such subtle, not strictly malicious alterations that could serve as a camouflage for malicious deepfake manipulations.’

In a morphing attack, a synthetic image is created by blending facial features from two individuals. This image can then be used to deceive facial recognition systems, allowing both source identities to be authenticated against the same image. Such attacks are considered especially relevant in security settings, where biometric systems could be misled into accepting the morphed image for official identification.
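The blending step at the heart of a morph can be illustrated with a simple pixel-wise average. This is a deliberate simplification: practical morphing tools typically also align and warp the two faces before blending, and nothing here reflects how the AMSL morphs were actually produced. The function name `blend_faces` and the tiny 2x2 ‘images’ are hypothetical.

```python
def blend_faces(face_a, face_b, alpha=0.5):
    """Pixel-wise blend of two equally sized grayscale images.

    alpha is the weight of face_a; 0.5 gives both identities equal influence.
    """
    if len(face_a) != len(face_b) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(face_a, face_b)
    ):
        raise ValueError("images must have identical dimensions")
    return [
        [alpha * a + (1 - alpha) * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(face_a, face_b)
    ]


# Two toy 2x2 grayscale 'faces'.
subject_a = [[0, 100], [200, 50]]
subject_b = [[100, 100], [0, 150]]

morph = blend_faces(subject_a, subject_b)
print(morph)
```

Because the result sits between both sources, each contributor may still match it closely enough to pass a face comparison, which is the property the attack exploits.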

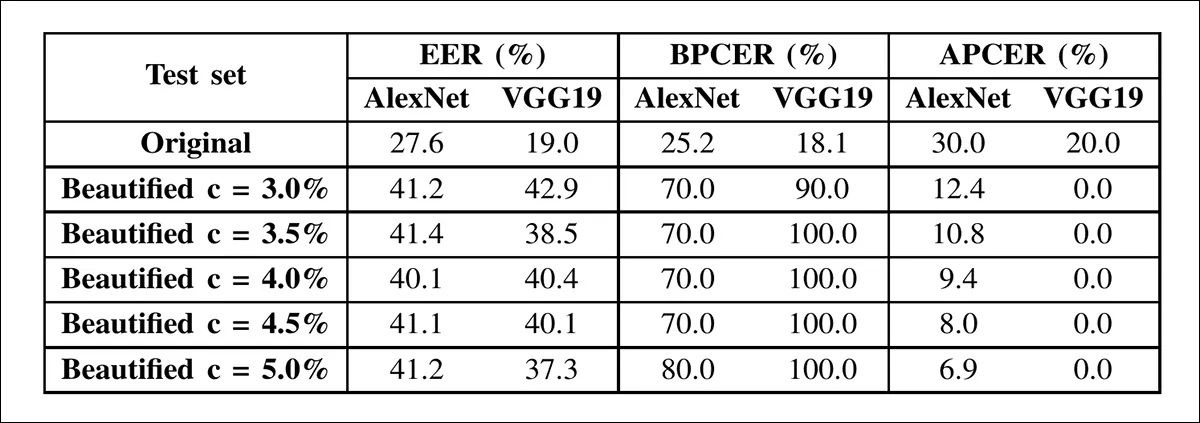

The table above shows morphing attack detection results on AMSL images before and after beautification, with both networks showing steep error increases as smoothing intensity rises.

Performance degraded more sharply here than in deepfake detection. AlexNet’s EER rose from 27.6% to 41.2%, and VGG19’s from 19.0% to 37.3%, driven largely by false positives: genuine faces misclassified as morphs. With a 3% smoothing radius, VGG19’s false positive rate hit 90%.

This pattern held when filters were applied selectively. Smoothing real faces degraded detection, while smoothing only morphed faces improved results. As smoothing intensified, both networks showed reduced separation between real and fake scores, with VGG19 becoming especially unstable.

These findings, the authors indicate, suggest that beauty filters could help morphs evade detection even more effectively than deepfakes, raising serious security concerns.

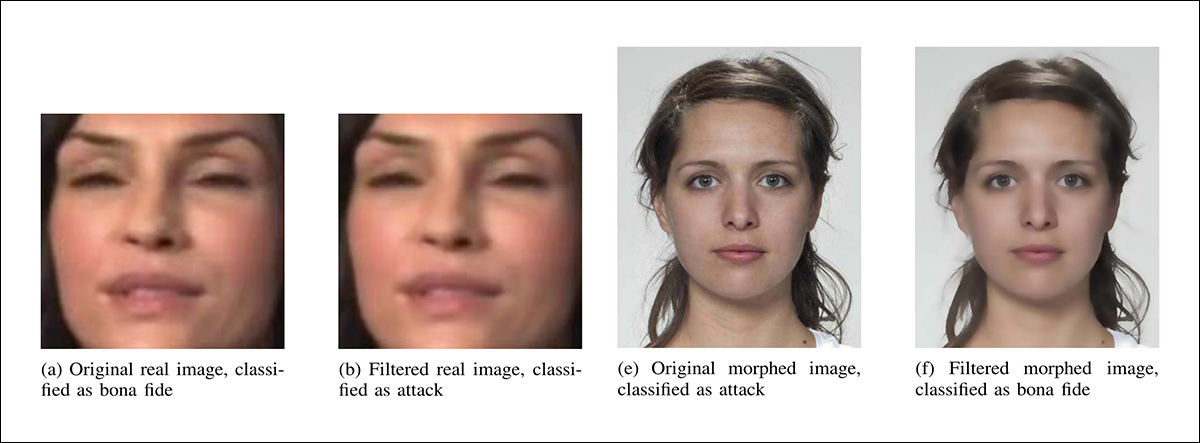

Partial examples (for lack of space) of how minimal beautification (3% smoothing radius) altered AlexNet’s classifications. On the left, a real image is misclassified as an attack after filtering, and on the right, a morphed image is misclassified as bona fide after filtering.

Finally, the researchers found that even mild beautification filters can significantly degrade the performance of state-of-the-art deepfake and morphing detectors.

The impact varied by architecture, with AlexNet showing gradual decline, and VGG19 collapsing under minimal filtering. Since these filters are common and not inherently malicious, their ability to conceal attacks poses, the authors suggest, a practical threat, especially in biometric systems. The paper emphasizes the need for detection models that are robust to such subtle image manipulations.

Conclusion

One reason why it may be difficult to train deepfake detection systems that can ignore beautification filters is the lack of contrastive material; ultimately the ‘beautified’ version is the one that gets widely disseminated, and, unlike the video we embedded earlier as an illustration, it’s very rare for a ‘before’ picture to be available.

Another obstacle is that many of the filters perform exactly the kind of smoothing typical of compression operations when saving images or compressing video (a boon for the providers’ bandwidth, besides being a digital skin make-over for those that use the filter).

The deepfake detection research sector is already deeply engaged in combating instances of real-world image degradation due to issues such as poor-quality cameras, excessive compression, or choppy network connectivity – all such conditions being a direct benefit to deepfake fraudsters, who are forced to try much harder in a high-quality, high-resolution scenario.

Whether or not beautification filters eventually come under threat as a security liability seems likely to depend on the extent to which they prove an impediment to deepfake screening methods, or end up constituting a kind of de facto firewall that benefits malfeasants more than those who are fighting them.

First published Wednesday, September 24, 2025