Anderson's Angle

AI-Generated Ad Images That Target Your Demographic – And, Eventually, You?

Advertisers aim to tailor ads to individual viewers to drive clicks, and while bespoke creatives for each person are currently impractical, new research suggests AI-generated imagery could soon be targeted effectively at specific demographics.

The personalized advertising featured in Steven Spielberg’s 2002 sci-fi actioner Minority Report has left an enduring, even haunting impression on the culture, with its vivid depiction of proactive advertising billboards that recognize people in crowds, and shout out promotional messages directly at them.

Many consumer groups may view this level of viewer recognition as a nightmare, and though progress toward it was slowed down by fallout from the Cambridge Analytica scandal, the ideal of direct, highly targeted engagement remains a prized goal in advertising.

In fact, systems that can drill down to the characteristics of a specific viewer remain in constant development – though in such cases corporate research has to take measures to respect laws around personally identifiable information (PII); laws which have been strengthened in Europe over the last decade, with these improved protections spread elsewhere via the Brussels Effect.

Hey, You!

Now that AI-generated ads and marketing content are on the rise, advertisers must nonetheless face up to the potential cost of AI ads targeted at specific individuals, where the imagery and text are summoned up opportunistically and on-the-fly.

For instance, even if a bespoke image could be generated very quickly, the costs at scale would be significant. Additionally, automatic online ad auction processes operate at critical, millisecond-level time-frames, which makes user-facing custom image content challenging, for the moment; and video content an even more distant prospect.

However, the technical obstacles involved in addressing higher-level demographic cohort groups in a net-based audience (via laptops, phones, smart TVs, etc.) are not so severe – and a new international academic/industry collaboration is proposing a way to create separate ad imagery for different demographics, including factors such as age and location:

From the new work: examples of personalized ad generation, where a single product is rendered in different styles for different viewer groups. The top row shows the original product images. The next three rows show versions adapted to three distinct audience types per product, based on differences in traits like age, lifestyle, or aesthetic preference. These group types are not predefined but are discovered automatically.

The new framework – titled One Size, Many Fits (OSMF) – aims to bridge the gap between broad-target advertising and impractically granular personalization, by generating different ad images for automatically discovered audience groups, using product-aware clustering to align visual content with the click preferences of distinct demographics.

The authors state:

‘[We] present [a] unified framework that aligns diverse group-wise click preferences in large-scale advertising image generation.

‘OSMF begins with product-aware adaptive grouping, which dynamically organizes users based on their attributes and product characteristics, representing each group with rich collective preference features.’

The authors claim state-of-the-art results for the system in tests against comparable frameworks.

Though the work identifies diverse cohort groups, the paper does not specify which demographic characteristics are represented by each G grouping; these seem likely to map to traditional market segmentation groups.

Therefore, it is not easy to tell, based on the various examples given in the main paper and the appendix, exactly why certain backgrounds or lighting would appeal to one cohort more than another, since we do not know the traits of any cohort:

There are no consistent ‘blue for boys, pink for girls’ etc. styles, across cohort-specific image styles, that could give away which type of person belongs in which group – the definitions, as evident from the existing literature, are far more complex and subtle.

What is perhaps more concerning, for those wary of ad-targeting practices, is the possibility of exploiting per-user insights in the generation of specific imagery in ads**.

The new paper is titled One Size, Many Fits: Aligning Diverse Group-Wise Click Preferences in Large-Scale Advertising Image Generation, and comes from 17 researchers across the National Laboratory of Pattern Recognition at Beijing; the ‘School of AI at UCAS’**; the Chinese e-commerce company JINGDONG; the Hong Kong University of Science and Technology at Guangzhou; and the Pattern Recognition Lab at Nanjing University of Science and Technology.

Method

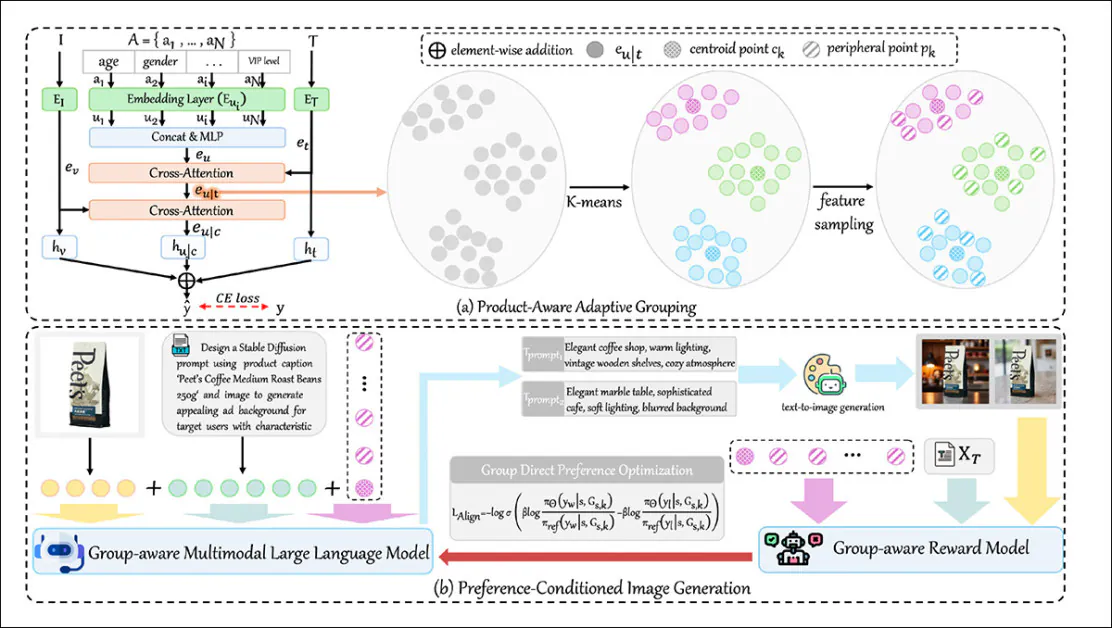

The system uses adaptive clustering (a method that finds natural groupings by linking user traits with how they respond to different products) to group users, based on how their traits shape visual preferences in a given product setting. The authors’ implementation of this approach is called Product-Aware Adaptive Grouping (PAAG).

These groupings are not fixed in advance, but are discovered from patterns in the data.

A conditional image generator, titled Preference-Conditioned Image Generation (PCIG), then uses each group’s profile to create advertising images that match the group’s likely tastes:

The OSMF framework groups users by how their traits shape product preferences, then uses those group profiles to generate ad images matched to each group’s tastes. PAAG handles the grouping, and PCIG creates the images using prompts and feedback tuned to each group.

The image generator utilizes an unspecified version of Stable Diffusion, together with an apposite ControlNet suite (the latter, to help maintain consistency among the various cohort generations).

In the workflow, PAAG first encodes the relationship between user features and both text and image aspects of the product, using a set of dedicated encoders and a cross-attention mechanism to merge them into a unified preference embedding that reflects how likely a user is to click on a particular ad.

PAAG then models how different combinations of user attributes interact with both product titles and product images. Text and image features are extracted using CLIP and ResNet-based encoders, and user traits such as gender, location, age, or device are passed through an MLP that enables cross-attention over product text and image features.

The resulting embedding represents each user’s click likelihood for a particular product in a specific visual context. Once these user-product preference embeddings are obtained, PAAG uses K-means clustering to group together users who respond similarly to a given product.

PAAG picks the best number of user groups for each product by checking how well the clusters separate. Instead of using just one average point per group, it samples several at different distances to capture a wider range of preferences.
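The idea of choosing a group count by cluster separation can be illustrated with a minimal, standard-library sketch. It assumes the silhouette score as the separation measure and a farthest-first centroid initialization; neither choice is confirmed by the article or the paper, and the function names are hypothetical:

```python
import math

def dist(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def mean(points):
    return tuple(sum(col) / len(points) for col in zip(*points))

def farthest_first(points, k):
    # Deterministic init (an assumption): start at the first point, then
    # repeatedly add the point farthest from all centroids chosen so far.
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(max(points, key=lambda p: min(dist(p, c) for c in centroids)))
    return centroids

def kmeans(points, k, iters=50):
    centroids = farthest_first(points, k)
    labels = [0] * len(points)
    for _ in range(iters):
        # Assign each point to its nearest centroid.
        labels = [min(range(k), key=lambda c: dist(p, centroids[c])) for p in points]
        updated = []
        for c in range(k):
            members = [p for p, l in zip(points, labels) if l == c]
            updated.append(mean(members) if members else centroids[c])
        if updated == centroids:
            break
        centroids = updated
    return labels, centroids

def silhouette(points, labels):
    clusters = {}
    for p, l in zip(points, labels):
        clusters.setdefault(l, []).append(p)
    if len(clusters) < 2:
        return -1.0
    total = 0.0
    for p, l in zip(points, labels):
        own = clusters[l]
        if len(own) == 1:
            continue  # a singleton cluster contributes a score of 0
        a = sum(dist(p, q) for q in own) / (len(own) - 1)  # cohesion
        b = min(sum(dist(p, q) for q in other) / len(other)  # separation
                for m, other in clusters.items() if m != l)
        denom = max(a, b)
        if denom > 0:
            total += (b - a) / denom
    return total / len(points)

def pick_k(points, k_max=5):
    # Try each candidate group count and keep the best-separated clustering.
    best = None
    for k in range(2, k_max + 1):
        labels, centroids = kmeans(points, k)
        score = silhouette(points, labels)
        if best is None or score > best[0]:
            best = (score, k, labels, centroids)
    return best
```

Here the points stand in for the user-product preference embeddings; with three well-separated clumps of points, `pick_k` settles on three groups.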

These group profiles are then passed as tokens to the group-aware multimodal large language model (G-MLLM), which uses them to generate ad images tailored to each group.

Image Generation Based on User Preferences

On the user side, G-MLLM learns to predict which group members are likely to click next and how to describe common traits in natural language. On the product side, it learns to summarize the product shown in an image and to generate ad-style captions that match both the item and the group.

To reflect real user behavior, the model is extended into a group-aware reward model (GRM). GRM is trained on the researchers’ own Grouped Advertising Image Preference (GAIP) dataset† (see below) to compare pairs of images for the same product and identify which one worked better with a given group, using real click-through data.

This reward signal is then used to fine-tune G-MLLM with Group-DPO, a method that teaches it to favor prompts that lead to better group-level engagement.
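For readers unfamiliar with the underlying technique, the standard DPO objective for a single preference pair can be sketched as below. This is the generic formulation, not the paper's Group-DPO variant, whose group-specific details are not given here; the argument names and the `beta` default are illustrative assumptions:

```python
import math

def dpo_loss(logp_win, logp_lose, ref_logp_win, ref_logp_lose, beta=0.1):
    """Standard DPO loss for one (preferred, rejected) pair.

    logp_*     : log-probabilities of each output under the model being tuned
    ref_logp_* : log-probabilities under the frozen reference model
    beta       : strength of the pull toward the reference (assumed value)
    """
    margin = beta * ((logp_win - ref_logp_win) - (logp_lose - ref_logp_lose))
    # -log(sigmoid(margin)) == log(1 + exp(-margin)), in a numerically stable form.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
```

In a group-level setting, the preferred and rejected outputs would presumably be the prompts that the reward model scores higher and lower for a given group; the loss falls as the tuned model shifts probability toward the preferred one.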

Data and Tests

Developing GAIP

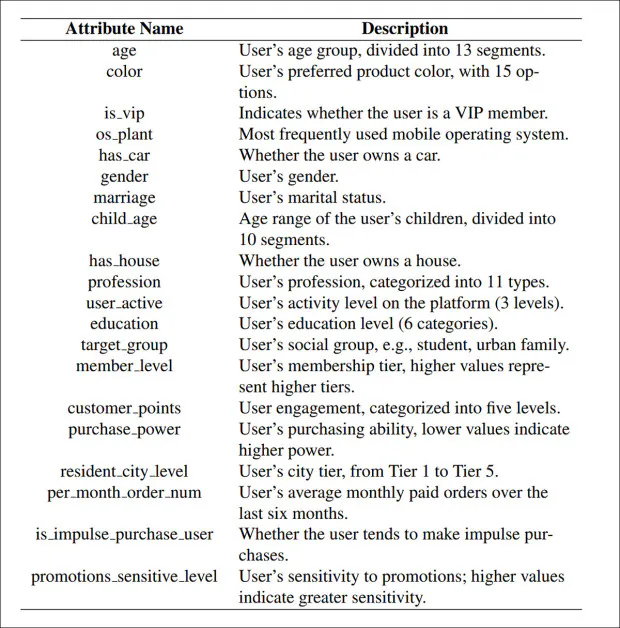

Noting a historic lack of datasets related to group-based advertising preferences, and that prior collections such as Personalized Soups and CG4CTR are either too small-scale or too ill-specified, the researchers developed their own collection, the aforesaid GAIP, derived from the ‘industrial advertising logs’ of an unspecified e-commerce platform.

The logs were collected over a three-week period, with each entry recording the product image and title, the viewer’s profile (including age, spending level, and sensitivity to promotions), and whether the ad was clicked.

The dataset includes over 40 million users, 2 million products, and nearly 10 million advertising images, with high visual variety across items.

Users were grouped by PAAG into distinct clusters for each product, and the click-through rate (CTR) calculated per image within each group:

From the new paper’s supplementary material, a small peek at some of the defining criteria for GAIP.

GAIP is then formed as a set of tuples (advertising image, product title, group embedding, group-specific CTR) pairing each image and title with its CTR and the embedding of the group that saw it.
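As a rough illustration of how such tuples might be assembled from impression logs, the sketch below counts impressions and clicks per image and group, then emits one record per pair. The field names, the log layout, and the `min_impressions` filter (applied here per image-group pair, as a simplification of the exposure filter) are all assumptions rather than details from the paper:

```python
from collections import defaultdict
from typing import NamedTuple

class GroupSample(NamedTuple):
    image_id: str            # stands in for the advertising image itself
    title: str               # product title
    group_embedding: tuple   # embedding of the group that saw the image
    ctr: float               # group-specific click-through rate

def build_samples(logs, titles, group_embeddings, min_impressions=1):
    """logs: iterable of (image_id, group_id, clicked) impression records."""
    shown = defaultdict(int)
    clicked = defaultdict(int)
    for image_id, group_id, was_clicked in logs:
        shown[(image_id, group_id)] += 1
        clicked[(image_id, group_id)] += int(was_clicked)

    samples = []
    for (image_id, group_id), n in sorted(shown.items()):
        if n < min_impressions:
            continue  # too little exposure for a trustworthy CTR
        ctr = clicked[(image_id, group_id)] / n
        samples.append(GroupSample(image_id, titles[image_id],
                                   group_embeddings[group_id], ctr))
    return samples
```

Raising `min_impressions` trades dataset size for more reliable CTR estimates, which is the same tension the exposure filter addresses.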

To ensure reliability, only products with enough exposure are retained, resulting in a dataset of 610,172 group-level samples.

GAIP is substantially larger than earlier datasets: while most prior benchmarks involve fewer than ten user groups, GAIP includes over 600,000 real-world group-wise preference records, offering deeper insights into group-level preferences.

Tests

To train the PCIG pipeline, the researchers extracted image and text features using ResNet and the CLIP text encoder, then mapped them to 128-dimensional embeddings through learnable linear layers. To maintain efficiency, PAAG was restricted to five user groups per product.

The group embeddings were constructed using a percentile-based sampling strategy, drawing multiple points from the 15th, 55th, and 95th percentiles, to capture both core and peripheral preferences.
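One way to read this sampling strategy is sketched below: rank a group's members by distance from the cluster centroid, then pick the member sitting at each percentile. It assumes that the percentiles refer to distance from the centroid and that one point is taken per percentile (the paper draws multiple); the function name is hypothetical:

```python
import math

def percentile_members(members, centroid, percentiles=(15, 55, 95)):
    """Pick one member embedding at each distance percentile (nearest-rank)."""
    ranked = sorted(members, key=lambda m: math.dist(m, centroid))
    n = len(ranked)
    picks = []
    for p in percentiles:
        rank = max(1, (p * n + 99) // 100)  # integer ceil(p * n / 100)
        picks.append(ranked[rank - 1])
    return picks
```

Low percentiles return members close to the centroid (core preferences), while the 95th returns a member near the edge of the cluster (peripheral preferences).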

LLaVA was used as the backbone for G-MLLM, and pretraining was conducted over ten epochs with a cosine learning schedule at a learning rate of 2e-5, requiring a formidable five days of training on a cluster of eight NVIDIA H100 GPUs, each with 80GB of VRAM.

GRM was trained by reconstructing GAIP with matched product image pairs, then initialized with the same weights as G-MLLM. During the final Group-DPO stage, GRM was frozen, and G-MLLM fine-tuned using LoRA for three epochs – again, at a learning rate of 2e-5, on the same NVIDIA cluster.
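The pairing step might look something like the following sketch, which matches images for the same product and group, and labels the higher-CTR image as the winner. The record layout and the `min_gap` threshold for discarding near-ties are assumptions made for illustration:

```python
from itertools import combinations

def build_pairs(samples, min_gap=0.0):
    """samples: list of (product_id, group_id, image_id, ctr) records.

    Returns (product_id, group_id, winner_image, loser_image) tuples.
    """
    buckets = {}
    for product_id, group_id, image_id, ctr in samples:
        buckets.setdefault((product_id, group_id), []).append((image_id, ctr))

    pairs = []
    for (product_id, group_id), items in buckets.items():
        for (img_a, ctr_a), (img_b, ctr_b) in combinations(items, 2):
            if abs(ctr_a - ctr_b) <= min_gap:
                continue  # ties and near-ties carry no clear preference
            winner, loser = (img_a, img_b) if ctr_a > ctr_b else (img_b, img_a)
            pairs.append((product_id, group_id, winner, loser))
    return pairs
```

Note that the same two images can produce opposite labels for different groups, which is exactly the signal a group-aware reward model needs.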

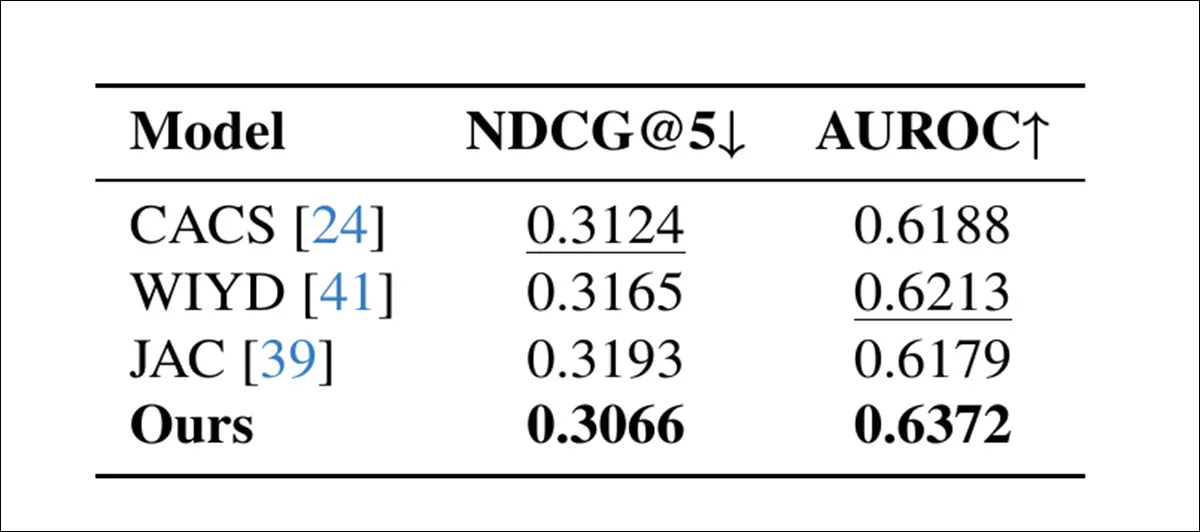

Metrics used for the first evaluation were NDCG@5 and AUROC. NDCG@5 measured how differently each group ranked the same set of ad images, with lower values indicating clearer separation in preferences; and AUROC was used to evaluate how well each model distinguished clicked from non-clicked content.
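Both metrics are simple to compute. The sketch below shows one plausible reading of the cross-group NDCG@5 (rank images by one group's CTR, then score that ranking against a second group's CTR), together with a pairwise AUROC. The exact protocol used in the paper may differ, and the function names are hypothetical:

```python
import math

def dcg(rels, k):
    return sum(r / math.log2(i + 2) for i, r in enumerate(rels[:k]))

def ndcg_at_k(ranked_rels, k=5):
    ideal = dcg(sorted(ranked_rels, reverse=True), k)
    return dcg(ranked_rels, k) / ideal if ideal > 0 else 0.0

def cross_group_ndcg(ctr_a, ctr_b, k=5):
    """Rank images by group A's CTR, then use group B's CTR as relevance.

    Identical tastes give 1.0; lower values mean the groups rank images differently.
    """
    order = sorted(ctr_a, key=ctr_a.get, reverse=True)
    return ndcg_at_k([ctr_b[image] for image in order], k)

def auroc(scores, labels):
    """Probability that a clicked item outscores a non-clicked one (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y]
    neg = [s for s, y in zip(scores, labels) if not y]
    wins = sum(1.0 if p > n else (0.5 if p == n else 0.0) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

Under this reading, a low cross-group NDCG@5 is desirable because it shows that the discovered groups genuinely disagree about which images are best.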

All metrics were computed on clustering results from 1,000 products, totaling around 100,000 samples, and were used to compare PAAG against three prior systems: CACS; WIYD; and JAC:

Preference modeling results compared to prior methods. Lower NDCG@5 and higher AUROC indicate better performance. Best scores are in bold, second-best underlined.

Of these results, the authors comment:

‘[Our] method achieves superior performance on both metrics. Concretely, PAAG attains the lowest NDCG@5 (0.3066), outperforming the best baseline (CACS), indicating more distinct inter-group preference patterns for effective group-wise advertising generation.

‘Additionally, PAAG achieves the highest AUROC (0.6372), improving over the strongest baseline (WIYD) by 0.0159.’

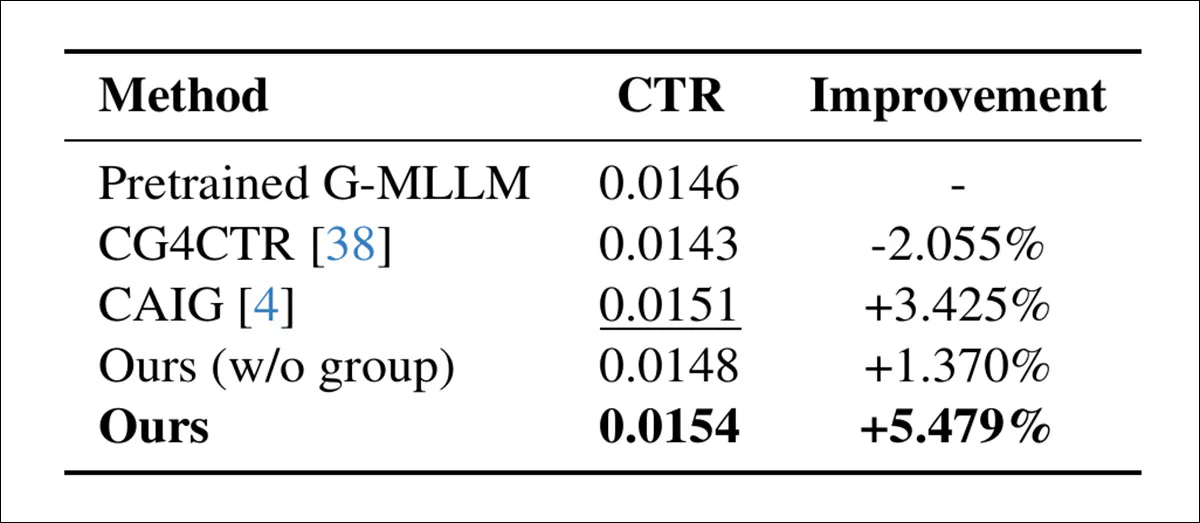

A second round of tests checked whether the system could better match ads to the right user groups:

Online CTR comparison showing that group-personalized generation (‘Ours’) outperforms all baselines, including CAIG and pretrained G-MLLM.

Here, PCIG showed stronger click rates than older models like CAIG and G‑MLLM, with a 5.5% improvement. GRM was also tested offline by checking if it could correctly choose the better ad in a pair, based on group preferences. It beat all baselines, including general-purpose models, with a 4.7% gain over CAIG.

A final qualitative test was conducted to evaluate whether PCIG could reflect group-level preferences in the style of its generated images. As shown in the figure below, the same product was rendered differently for each group, with changes in palette, tone, and visual composition:

Full results for the qualitative tests, previewed earlier in the article.

These variations aligned, the authors contend, with inferred click preferences for each group, showing that PCIG could produce stylistically distinct outputs while preserving relevance and appeal. The authors state:

‘[PCIG] ensures the stylistically diverse images to accommodate the click preferences of distinct user groups, thereby demonstrating its strong capability to adapt generation to heterogeneous user demands and capture subtle, fine-grained preference differences across varied user groups, highlighting its potential for group-aware advertising image generation at scale.’

Conclusion

Perhaps the most intriguing aspect of this project is the unknown correlation between output styles across group-targeted images for the same product (of which there are several pages’ more examples in the paper’s supplementary materials than we can reproduce here).

Can we assume that urban backgrounds are related to age, i.e., to graduates starting out, and that rural environments are aimed at more prosperous Gen X types who identify the open road as a kind of ‘final freedom’? One can Rorschach these test outputs all day.

The potential of such systems rests on two factors: insight and latency. Insight depends on whether emerging tracking systems can still extract enough meaningful information from users to support effective cohort-based advertising, while also laying the groundwork for more precise, individually targeted ads in the future.

Latency poses a greater challenge, since these custom ad images must be generated and delivered almost instantly; although some recent text-to-image models can produce results in just a few seconds, even that delay may be too long for real-time ad auctions.

One possible remedy is to produce the images locally, on the browser’s GPU, avoiding network round-trips; or to create a raft of images pre-emptively, pre-cached on the client.

** This aspect is elided in the new paper, much as new AI frameworks’ potential for deepfake abuse is often softened by the use of cuddly animal figures (rather than AI porn) in new studies. Nonetheless, the kind of imagery shown in the work represents advertisers on their very best behavior, rather than depicting quite how personal visual ads could eventually become, as consumer targeting methods team up with rapid-response generative AI.

** I cannot identify this named institution, since ‘UCAS’ generally resolves to a well-known UK university application clearing-house. I welcome clarification.

† Which the researchers promise to release at the associated GitHub repo.

First published Thursday, February 5, 2026