
What Every Data Scientist Should Know About Graph Transformers and Their Impact on Structured Data

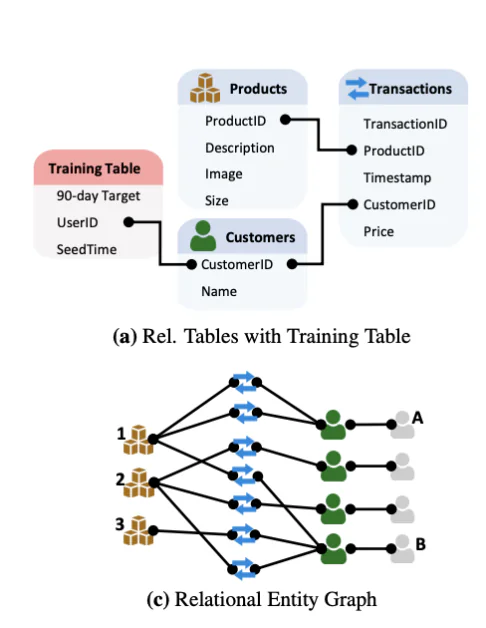

I co-created Graph Neural Networks while at Stanford, and I recognized early on that this technology was incredibly powerful. No data point, observation, or piece of knowledge exists in isolation; each is part of a graph connected to other pieces of knowledge. Importantly, most valuable business data, often stored as tables in databases and data warehouses, can naturally be represented as a graph, with rows as nodes and the keys that link tables as edges. Harnessing this relational structure is key to building accurate and non-hallucinating AI models.
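
As a minimal sketch of the table-to-graph mapping, assume a hypothetical schema with customers, products, and orders tables. Each row becomes a node, and each foreign key in orders becomes a typed edge. The table names, columns, and the tables_to_graph helper are illustrative only and are not part of any library.

```python
# Hypothetical toy tables: every row becomes a node, every foreign key an edge.
customers = [{"customer_id": 1}, {"customer_id": 2}]
products = [{"product_id": 10}, {"product_id": 11}]
orders = [
    {"order_id": 100, "customer_id": 1, "product_id": 10},
    {"order_id": 101, "customer_id": 1, "product_id": 11},
    {"order_id": 102, "customer_id": 2, "product_id": 10},
]

def tables_to_graph(customers, products, orders):
    """Return (nodes, edges): nodes are (type, id) pairs, edges are (src, relation, dst)."""
    nodes = (
        [("customer", r["customer_id"]) for r in customers]
        + [("product", r["product_id"]) for r in products]
        + [("order", r["order_id"]) for r in orders]
    )
    edges = []
    for r in orders:
        order = ("order", r["order_id"])
        # Each foreign-key column in the orders table yields one typed edge.
        edges.append((order, "placed_by", ("customer", r["customer_id"])))
        edges.append((order, "contains", ("product", r["product_id"])))
    return nodes, edges

nodes, edges = tables_to_graph(customers, products, orders)
print(len(nodes), len(edges))
```

A real pipeline would read rows from a database and attach column values as node features; the point here is only that the foreign keys already define the edges.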

Graph neural networks (GNNs) introduced message-passing architectures that reason over graphs of interconnected knowledge. But just as Transformers transformed language understanding, a new class of models, Graph Transformers, is bringing similar gains to graph-based data. These models combine the flexibility of attention mechanisms with structural graph priors to model complex relationships more effectively than their GNN predecessors.

Why graphs need more than message passing

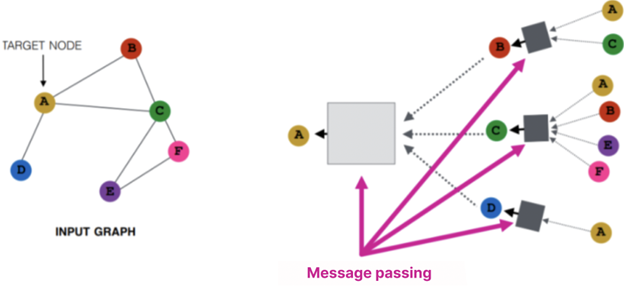

Traditional GNNs rely on message passing, a process in which each node updates its internal state by aggregating information from its neighbors. Think of it as each node exchanging summaries with nearby nodes, then using those summaries to refine its own understanding. Stacking layers lets information propagate further: after k layers, a node's state reflects nodes up to k hops away.
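
The update described above can be sketched in NumPy as one layer of mean-aggregation message passing. The function name, the choice of mean aggregation, and the ReLU update are assumptions made for illustration; real GNN layers differ in how they weight and combine messages.

```python
import numpy as np

def message_passing_layer(x, edges, w_self, w_neigh):
    """One round of mean-aggregation message passing.

    x: (num_nodes, d_in) array of node states.
    edges: list of (src, dst) pairs; a message flows from src to dst.
    w_self, w_neigh: (d_in, d_out) weight matrices (learned in a real model).
    """
    agg = np.zeros_like(x)
    counts = np.zeros(x.shape[0])
    for src, dst in edges:
        agg[dst] += x[src]          # collect the neighbor's summary
        counts[dst] += 1
    agg /= np.maximum(counts, 1)[:, None]   # mean over neighbors; nodes with none keep 0
    # Combine the node's own state with its neighbors' summary, then apply ReLU.
    return np.maximum(0.0, x @ w_self + agg @ w_neigh)

# Path graph 0-1-2-3 with each undirected edge listed in both directions.
edges = [(0, 1), (1, 0), (1, 2), (2, 1), (2, 3), (3, 2)]
x = np.array([[1.0], [0.0], [0.0], [0.0]])   # only node 0 starts with a signal
w = np.eye(1)                                # identity weights keep the arithmetic readable
for layer in range(1, 4):
    x = message_passing_layer(x, edges, w, w)
    print(f"after layer {layer}: {x.ravel()}")
```

On this four-node path, node 3 stays at zero after one and two layers and becomes nonzero only after the third, because a k-layer network can only see k hops.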

While powerful for learning local patterns, message passing has important limitations:

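- Over-squashing: The number of nodes within k hops can grow exponentially with k, yet all of their information must be compressed into fixed-size node vectors, so meaningful detail is lost. This is especially problematic in deep GNNs.

- Limited context: A GNN with k layers sees only each node's k-hop neighborhood, so capturing long-range dependencies requires stacking many layers, which increases complexity and noise.

- Expressiveness: Standard message-passing GNNs are at most as powerful as the 1-dimensional Weisfeiler-Leman (1-WL) graph isomorphism test, so many structurally different graphs cannot be told apart using local neighborhood information alone. This limits performance on tasks requiring fine structural distinctions.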
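
The expressiveness limit can be seen with 1-WL color refinement, which repeatedly relabels each node by its own color plus the multiset of its neighbors' colors. The sketch below assumes featureless nodes, so every node starts with the same color. It shows that a single 6-cycle and two disjoint triangles end up with identical color histograms, even though only the second graph contains triangles. The function and graph names are illustrative.

```python
from collections import Counter

def wl_histograms(graphs, rounds=3):
    """Run 1-WL color refinement on several graphs with a shared palette.

    graphs: list of dicts mapping node -> list of neighbors.
    Returns one Counter of final colors per graph. Equal Counters mean 1-WL
    (and therefore standard message passing) cannot tell the graphs apart.
    """
    colors = [{v: 0 for v in g} for g in graphs]   # uniform initial color
    for _ in range(rounds):
        # Signature = (own color, sorted multiset of neighbor colors).
        signatures = [
            {v: (c[v], tuple(sorted(c[u] for u in g[v]))) for v in g}
            for g, c in zip(graphs, colors)
        ]
        # A shared palette keeps color ids comparable across graphs.
        all_sigs = sorted({s for sig in signatures for s in sig.values()})
        palette = {s: i for i, s in enumerate(all_sigs)}
        colors = [{v: palette[s] for v, s in sig.items()} for sig in signatures]
    return [Counter(c.values()) for c in colors]

six_cycle = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
two_triangles = {i: [3 * (i // 3) + (i + 1) % 3, 3 * (i // 3) + (i + 2) % 3] for i in range(6)}
six_path = {i: [j for j in (i - 1, i + 1) if 0 <= j < 6] for i in range(6)}

hist_cycle, hist_triangles, hist_path = wl_histograms([six_cycle, two_triangles, six_path])
print(hist_cycle == hist_triangles)  # True: every node looks the same locally
print(hist_cycle == hist_path)       # False: the path's endpoints have degree 1
```

Every node in both the 6-cycle and the pair of triangles has exactly two neighbors, so refinement never separates them. This is the kind of distinction that global attention combined with structural encodings aims to recover.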

This is where Graph Transformers step in. By replacing or augmenting message passing with attention mechanisms, they allow each node to attend directly to any other node, including distant ones, weighted by learned importance. The result is richer representations, a direct path for information between distant nodes, and the ability to reason over complex structures more flexibly.

From GNNs to Graph Transformers

The original Transformer model, introduced in the iconic 2017 paper “Attention Is All You Need,” was designed to model relationships between tokens in a sequence. Its success lies in self-attention, a mechanism that lets each input consider every other input, weighted by learned relevance.
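
Here is a minimal single-head sketch of scaled dot-product self-attention applied to graph nodes, in NumPy. It assumes each node already has a feature vector. The weight matrices are random stand-ins for learned parameters, and the function names are illustrative. Note that the graph's edges are never used.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention over all nodes.

    x: (num_nodes, d_model); w_q, w_k, w_v: (d_model, d_head).
    Every node attends to every node, regardless of graph edges.
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])   # (num_nodes, num_nodes) relevance scores
    weights = softmax(scores, axis=-1)        # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
num_nodes, d_model, d_head = 5, 8, 4
x = rng.normal(size=(num_nodes, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))
out, attn = self_attention(x, w_q, w_k, w_v)
print(out.shape, attn.shape)  # (5, 4) (5, 5)

# Shuffling the node order only shuffles the outputs the same way.
perm = rng.permutation(num_nodes)
out_perm, _ = self_attention(x[perm], w_q, w_k, w_v)
print(np.allclose(out_perm, out[perm]))  # True
```

The last check illustrates why structure has to be added explicitly. Plain self-attention is permutation-equivariant, so it has no built-in notion of where a node sits in the graph.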

Graph Transformers adapt this paradigm by allowing nodes to attend not only to their neighbors but to any node in the graph, either through fully connected attention or through a hybrid approach that balances global and local signals. The challenge is introducing a notion of structure into a model that, without positional information, treats its inputs as an unordered set.
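
One way to picture the hybrid approach is a layer with two attention branches. A local branch is masked to each node's neighbors, a global branch covers all nodes, and their outputs are summed. This minimal sketch is loosely in the spirit of hybrid designs such as GraphGPS, which pairs a message-passing layer with global attention, but it is not a reimplementation of any published model. The function names, the masking scheme, and the residual sum are assumptions.

```python
import numpy as np

def attention(x, w_q, w_k, w_v, mask=None):
    """Scaled dot-product attention; mask[i, j] == False blocks node i from attending to j."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)   # blocked pairs get zero weight
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

def hybrid_layer(x, adj, local_params, global_params):
    """Sum a local branch (neighbors + self) and a global branch (all nodes).

    Assumes d_head == d_model so the residual connection lines up.
    """
    # Self-loops guarantee every row of the mask has at least one allowed entry.
    local_mask = adj.astype(bool) | np.eye(len(x), dtype=bool)
    local_out, _ = attention(x, *local_params, mask=local_mask)
    global_out, _ = attention(x, *global_params)
    return x + local_out + global_out

rng = np.random.default_rng(0)
d = 4
adj = np.zeros((4, 4))
for i in range(3):                      # path graph 0-1-2-3
    adj[i, i + 1] = adj[i + 1, i] = 1.0
x = rng.normal(size=(4, d))
local_params = tuple(rng.normal(size=(d, d)) for _ in range(3))
global_params = tuple(rng.normal(size=(d, d)) for _ in range(3))
out = hybrid_layer(x, adj, local_params, global_params)
print(out.shape)  # (4, 4)
```

In the local branch, node 0 puts zero weight on nodes 2 and 3 because they are not its neighbors. The global branch has no such restriction, so it supplies the long-range signal.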

Graph-Specific Positional Encodings

Unlike text, graphs have no inherent node order, so positional encoding (injecting structural or location-based information into a model) is non-trivial. Graph Transformers tackle this with several methods:

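- Laplacian Eigenvectors: Eigenvectors of the graph Laplacian matrix, typically those with the smallest nonzero eigenvalues, provide a spectral embedding that captures global structure. Their signs are arbitrary, so models must be robust to sign flips.

- Random Walks: Capture the probability that a random walk travels from one node to another (or returns to its starting node) within a given number of hops.

- Structural Encodings: Include distance metrics such as shortest-path distance, node degrees, or edge types.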
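
Two of these encodings can be computed in a few lines of NumPy. This minimal sketch assumes an undirected, connected graph given as a dense adjacency matrix. Here, laplacian_pe takes eigenvectors of the symmetric normalized Laplacian and skips the trivial one. The random_walk_pe function keeps the diagonal of the t-step transition matrix (return probabilities), which is one common variant; the off-diagonal entries would instead give node-to-node probabilities. The function names and the normalization choice are illustrative assumptions.

```python
import numpy as np

def laplacian_pe(adj, k):
    """First k non-trivial eigenvectors of the symmetric normalized Laplacian.

    Assumes adj is symmetric (undirected) and the graph is connected with no isolated nodes.
    """
    deg = adj.sum(axis=1)                    # node degrees (also usable as a structural encoding)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    lap = np.eye(len(adj)) - d_inv_sqrt[:, None] * adj * d_inv_sqrt[None, :]
    _, eigvecs = np.linalg.eigh(lap)         # eigenvalues come back in ascending order
    return eigvecs[:, 1:k + 1]               # skip the eigenvector for eigenvalue 0

def random_walk_pe(adj, k):
    """Entry [i, t-1] = probability a random walk from node i is back at i after t steps."""
    trans = adj / adj.sum(axis=1, keepdims=True)   # row-stochastic transition matrix
    p_t = np.eye(len(adj))
    columns = []
    for _ in range(k):
        p_t = p_t @ trans                    # t-step transition probabilities
        columns.append(np.diag(p_t))
    return np.stack(columns, axis=1)

triangle = np.ones((3, 3)) - np.eye(3)
six_cycle = np.zeros((6, 6))
for i in range(6):
    six_cycle[i, (i + 1) % 6] = six_cycle[(i + 1) % 6, i] = 1.0

print(random_walk_pe(triangle, 3)[0])    # node 0's return probabilities after 1, 2, 3 steps
print(random_walk_pe(six_cycle, 3)[0])
print(laplacian_pe(six_cycle, k=2).shape)
```

A random walk on the triangle returns to its start after three steps with probability 0.25. On the 6-cycle, which has no odd cycles, that probability is 0, so this encoding exposes triangles that purely local aggregation can miss.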

These positional encodings, whether spectral, probabilistic, or structural, give Graph Transformers a way to understand where each node sits within the broader graph. This structural awareness is essential for enabling attention mechanisms to operate meaningfully across irregular, unordered data, ultimately allowing the model to capture relationships that would be invisible to simpler, purely local methods.

Real-World Implementations and Use Cases

Bringing Graph Transformers into production requires infrastructure that can scale to real-world data sizes. Libraries like PyTorch Geometric (PyG) are making that possible. Built on PyTorch, PyG provides a modular framework for implementing GNNs and Graph Transformers, including ready-made layers such as TransformerConv and GPSConv, across applications ranging from molecule modeling to recommendation systems. It supports mini-batch training both on many small graphs and on single large graphs, with multi-GPU training and torch.compile support, making it well suited to research and enterprise workflows alike.
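
Mini-batching many small graphs is commonly done by merging them into one large disconnected graph. Node features are stacked, edge indices are shifted by the number of nodes that came before, and a batch vector records which graph each node belongs to. PyG's batching follows this idea, but the sketch below uses plain NumPy with hypothetical function names and is not PyG's API.

```python
import numpy as np

def collate_graphs(graphs):
    """Merge small graphs into one disconnected graph for a single forward pass.

    graphs: list of (x, edge_index), x: (n_i, d) features, edge_index: (2, e_i) int array.
    Returns stacked features, offset edge indices, and a batch vector.
    """
    xs, edge_indices, batch = [], [], []
    offset = 0
    for graph_id, (x, edge_index) in enumerate(graphs):
        xs.append(x)
        edge_indices.append(edge_index + offset)   # shift node ids past earlier graphs
        batch.append(np.full(len(x), graph_id))    # which graph each node came from
        offset += len(x)
    return np.concatenate(xs), np.concatenate(edge_indices, axis=1), np.concatenate(batch)

def mean_pool(h, batch, num_graphs):
    """Average node embeddings per graph (a simple graph-level readout)."""
    sums = np.zeros((num_graphs, h.shape[1]))
    np.add.at(sums, batch, h)                      # sums[batch[i]] += h[i]
    counts = np.bincount(batch, minlength=num_graphs)[:, None]
    return sums / counts

g1 = (np.ones((3, 2)), np.array([[0, 1, 2], [1, 2, 0]]))   # 3-node cycle
g2 = (np.zeros((2, 2)), np.array([[0], [1]]))               # single edge
x, edge_index, batch = collate_graphs([g1, g2])
print(edge_index.tolist())                         # [[0, 1, 2, 3], [1, 2, 0, 4]]
print(batch.tolist())                              # [0, 0, 0, 1, 1]
print(mean_pool(x, batch, num_graphs=2).tolist())  # [[1.0, 1.0], [0.0, 0.0]]
```

Because the merged graph has no edges between the original graphs, message passing never mixes information across them. Global attention, by contrast, would need the batch vector to keep nodes from attending across graphs.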

These tools are already powering a wide range of real-world applications. In drug discovery, Graph Transformers help predict molecular properties by modeling atomic interactions as graphs. In logistics and supply chain optimization, they can represent and reason over dynamic networks of shipments, warehouses, and routes. E-commerce companies use them to improve recommendations by understanding product co-purchase and browsing behavior as relational graphs. And in cybersecurity, graph-based models are used to detect anomalies by analyzing access patterns, network topology, and event sequences.

In each of these settings, the ability to learn from complex, interconnected structures, without relying solely on handcrafted features, is proving to be a major advantage.

Technical Considerations

Despite their potential, Graph Transformers come with real engineering trade-offs. Full self-attention scales quadratically with the number of nodes, making memory and compute efficiency a top concern, especially for large-scale or dense graphs. Many real-world graphs also have directed edges, which make the adjacency matrix asymmetric and complicate how structural information is encoded; standard Laplacian eigenvector encodings, for example, assume a symmetric matrix. And in practical deployments, inputs are rarely uniform: combining graph-structured data with text, time series, or images demands careful architectural choices and robust data preprocessing.

These challenges aren’t insurmountable, but they do require thoughtful system design, especially when transitioning from research prototypes to production-ready models.
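
The quadratic cost of full self-attention is easy to quantify. This back-of-the-envelope sketch assumes one dense float32 attention matrix per head per layer. It ignores activations kept for backpropagation and any memory-efficient kernels that avoid materializing the matrix. The helper names and the figure of about 10 edges per node are illustrative assumptions.

```python
def dense_attention_bytes(num_nodes, num_heads=1, bytes_per_entry=4):
    """Bytes for one layer's dense attention scores: num_heads * N^2 entries (float32 by default)."""
    return num_heads * num_nodes ** 2 * bytes_per_entry

def edge_attention_bytes(num_edges, num_heads=1, bytes_per_entry=4):
    """Bytes when attention is restricted to existing edges: one score per edge per head."""
    return num_heads * num_edges * bytes_per_entry

for n in (1_000, 100_000, 1_000_000):
    dense_gib = dense_attention_bytes(n) / 2**30
    sparse_gib = edge_attention_bytes(10 * n) / 2**30   # assumes ~10 edges per node
    print(f"{n:>9,} nodes: dense {dense_gib:,.2f} GiB vs. edge-only {sparse_gib:,.4f} GiB")
```

At 100,000 nodes, the dense matrix alone is 40 billion bytes (about 37 GiB) for a single head in a single layer. This is why Graph Transformers for large graphs often rely on sparse, sampled, or approximate attention.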

What’s Next: LLMs Meet Graphs

A major research direction is the integration of large language models (LLMs) with graph structures. These hybrid systems use LLMs to encode textual context or extract entities, then ground that information in a graph for reasoning and decision-making.

In biology, a related idea, attention over the pairwise relationships between amino-acid residues, underpins tools like AlphaFold. In enterprise AI, this integration enables customer support systems that combine documentation with behavioral graphs. Graph Transformers are also playing a growing role in enabling AI agents to make smarter, more actionable decisions by allowing them to reason over structured state representations and prioritize interactions dynamically. This fusion helps agents better understand hierarchical relationships, track dependencies over time, and adapt their behavior in complex environments.

The field is still emerging, but the potential is significant.

Conclusion

Graph Transformers aren’t just the next iteration of GNNs; they represent a convergence of attention, structure, and scalability. Whether you’re working in finance, life sciences, or recommender systems, the message is clear: your data forms a graph, so your models should too.