
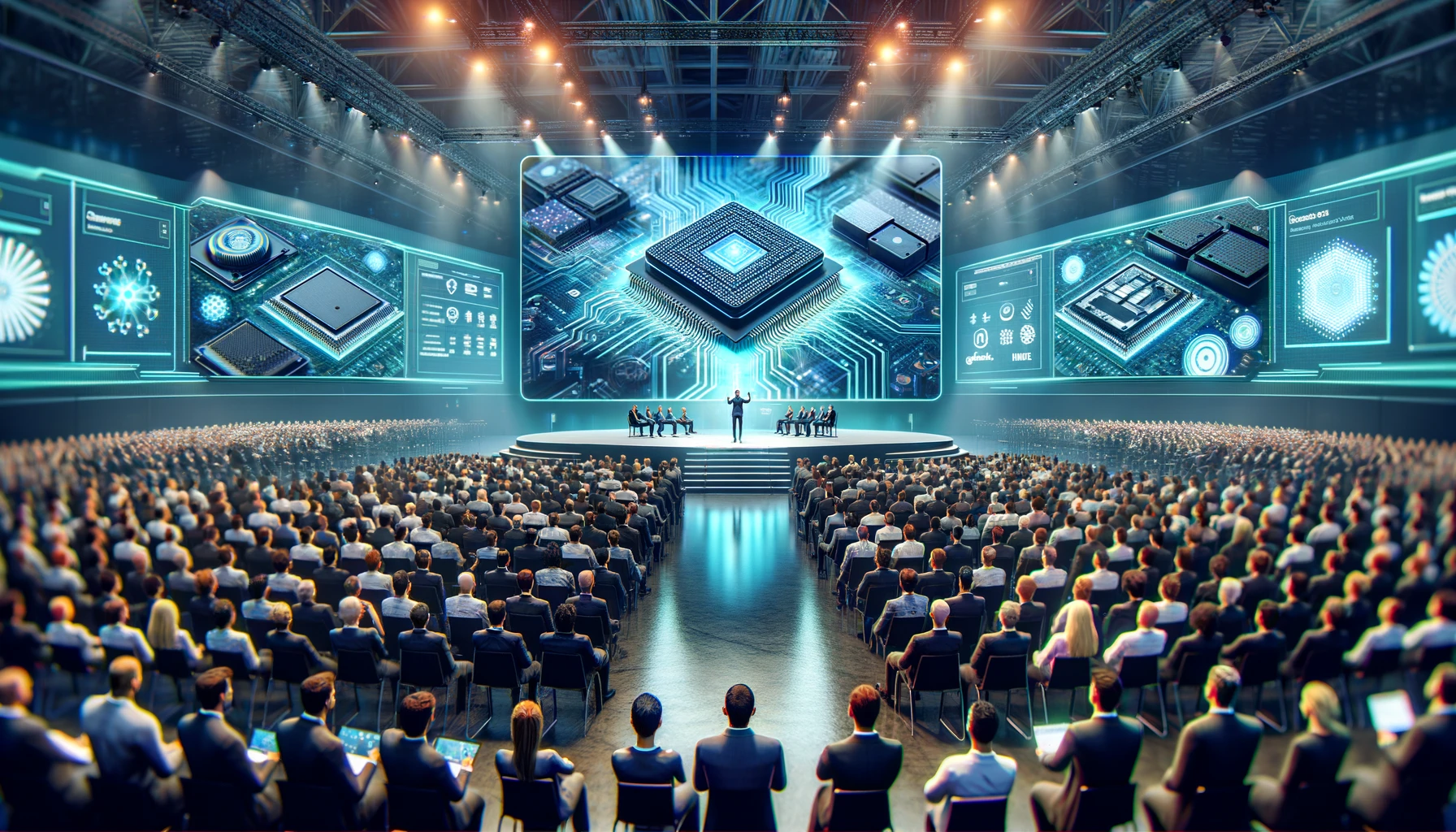

The Rise of Neural Processing Units: Enhancing On-Device Generative AI for Speed and Sustainability

The evolution of generative AI is not only reshaping how we interact with computing devices; it is also redefining computing itself. One of the key drivers of this transformation is the need to run generative AI on devices with limited computational resources. This article discusses the challenges this presents and how neural processing units (NPUs) are emerging to solve them. It also introduces some of the latest NPUs that are leading the way in this field.

Challenges of On-device Generative AI Infrastructure

Generative AI, the powerhouse behind image synthesis, text generation, and music composition, demands substantial computational resources. Conventionally, these demands have been met by leveraging the vast capabilities of cloud platforms. While effective, this approach comes with its own set of challenges for on-device generative AI, including reliance on constant internet connectivity and centralized infrastructure. This dependence introduces latency, security vulnerabilities, and heightened energy consumption.

The backbone of cloud-based AI infrastructure largely relies on central processing units (CPUs) and graphics processing units (GPUs) to handle the computational demands of generative AI. However, when applied to on-device generative AI, these processors encounter significant hurdles. CPUs are designed for general-purpose tasks and lack the specialized architecture needed for efficient, low-power execution of generative AI workloads. Their limited parallel processing capabilities result in reduced throughput, increased latency, and higher power consumption, making them poorly suited to on-device AI. GPUs, on the other hand, excel at parallel processing but are primarily designed for graphics processing tasks. To effectively perform generative AI tasks, GPUs require specialized integrated circuits, which consume high power and generate significant heat. Moreover, their large physical size creates obstacles for their use in compact, on-device applications.

The Emergence of Neural Processing Units (NPUs)

In response to the above challenges, neural processing units (NPUs) are emerging as a transformative technology for implementing generative AI on devices. The architecture of NPUs is primarily inspired by the human brain’s structure and function, particularly how neurons and synapses collaborate to process information. In NPUs, artificial neurons act as the basic units, mirroring biological neurons by receiving inputs, processing them, and producing outputs. These neurons are interconnected through artificial synapses, which transmit signals between neurons with varying strengths that adjust during the learning process. This emulates the process of synaptic weight changes in the brain. NPUs are organized in layers: input layers that receive raw data, hidden layers that perform intermediate processing, and output layers that generate the results. This layered structure reflects the brain’s multi-stage and parallel information processing capability. Because generative AI models are built from a similar structure of artificial neural networks, NPUs are well-suited for managing generative AI workloads. This structural alignment reduces the need for specialized integrated circuits, leading to more compact, energy-efficient, fast, and sustainable solutions.
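To make the neuron, synapse, and layer vocabulary concrete, here is a minimal Python sketch of a layered forward pass. It models the software view of a neural network rather than any NPU's hardware, and the function names, the sigmoid activation, and the weight values are all assumptions chosen for illustration.

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum of its inputs, squashed by a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))

def layer(inputs, weight_rows, biases):
    """A layer is a group of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]

def forward(inputs, layers):
    """Pass data from the input through each layer in turn to the output."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations

# Hypothetical weights ("synapse strengths"); training would adjust these.
hidden = ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1])
output = ([[0.7, -0.5, 0.2]], [0.05])

result = forward([1.0, 2.0], [hidden, output])
print(result)  # a single value between 0 and 1
```

Each neuron in a layer depends only on the previous layer's outputs, so all neurons in a layer could be evaluated at the same time. That independence is the kind of parallelism that dedicated AI hardware is designed to exploit.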

Addressing Diverse Computational Needs of Generative AI

Generative AI encompasses a wide range of tasks, including image synthesis, text generation, and music composition, each with its own set of unique computational requirements. For instance, image synthesis heavily relies on matrix operations, while text generation involves sequential processing. To effectively cater to these diverse computational needs, neural processing units (NPUs) are often integrated into System-on-Chip (SoC) technology alongside CPUs and GPUs.

Each of these processors offers distinct computational strengths. CPUs are particularly adept at sequential control and immediacy, GPUs excel in streaming parallel data, and NPUs are finely tuned for core AI operations, dealing with scalar, vector, and tensor math. By leveraging a heterogeneous computing architecture, tasks can be assigned to processors based on their strengths and the demands of the specific task at hand.
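As a rough illustration of this kind of task assignment, the sketch below routes each workload to the processor whose strengths match it and falls back to the CPU when the preferred processor is missing. The task kinds, the `PREFERRED` table, and the `Task`, `assign`, and `schedule` names are hypothetical; real SoC runtimes make this decision with far more information, such as model size, power budget, and current load.

```python
from dataclasses import dataclass

# Hypothetical mapping from workload kind to preferred processor,
# following the strengths described above. Real schedulers are far richer.
PREFERRED = {
    "control": "CPU",          # sequential control, low-latency logic
    "parallel_stream": "GPU",  # streaming parallel data
    "tensor": "NPU",           # scalar, vector, and tensor math for AI
}

@dataclass
class Task:
    name: str
    kind: str

def assign(task, available):
    """Pick the preferred processor, falling back to the CPU if it is absent."""
    preferred = PREFERRED.get(task.kind, "CPU")
    return preferred if preferred in available else "CPU"

def schedule(tasks, available=("CPU", "GPU", "NPU")):
    """Group task names by assigned processor (a CPU is assumed always present)."""
    plan = {name: [] for name in sorted({"CPU", *available})}
    for task in tasks:
        plan[assign(task, available)].append(task.name)
    return plan

tasks = [
    Task("ui_events", "control"),
    Task("frame_render", "parallel_stream"),
    Task("text_generation", "tensor"),
]
plan = schedule(tasks)
print(plan)
```

On a device without a GPU or NPU, calling `schedule(tasks, available=("CPU",))` places every task on the CPU, which mirrors the situation the article describes for devices that lack specialized hardware.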

NPUs, being optimized for AI workloads, can efficiently offload generative AI tasks from the main CPU. This offloading not only ensures fast and energy-efficient operations but also accelerates AI inference tasks, allowing generative AI models to run more smoothly on the device. With NPUs handling the AI-related tasks, CPUs and GPUs are free to allocate resources to other functions, thereby enhancing overall application performance while maintaining thermal efficiency.

Real-World Examples of NPUs

The advancement of NPUs is gaining momentum. Here are some real-world examples of NPUs:

- Qualcomm’s Hexagon NPU is specifically designed to accelerate AI inference on low-power, resource-constrained devices. It is built to handle generative AI tasks such as text generation, image synthesis, and audio processing. The Hexagon NPU is integrated into Qualcomm’s Snapdragon platforms, providing efficient execution of neural network models on devices built on Qualcomm’s AI products.

- Apple’s Neural Engine is a key component of the A-series and M-series chips, powering various AI-driven features such as Face ID, Siri, and augmented reality (AR). The Neural Engine accelerates tasks like facial recognition for secure Face ID, natural language processing (NLP) for Siri, and enhanced object tracking and scene understanding for AR applications. It significantly enhances the performance of AI-related tasks on Apple devices, providing a seamless and efficient user experience.

- Samsung’s NPU is a specialized processor designed for AI computation, capable of handling thousands of computations simultaneously. Integrated into the latest Samsung Exynos SoCs, which power many Samsung phones, this NPU technology enables low-power, high-speed generative AI computations. Samsung’s NPU technology is also integrated into flagship TVs, enabling AI-driven sound innovation and enhancing user experiences.

- Huawei’s Da Vinci architecture serves as the core of its Ascend AI processors and is designed to enhance AI computing power. The architecture is built around a high-performance 3D Cube computing engine, which accelerates the matrix operations at the heart of AI workloads.

The Bottom Line

Generative AI is transforming our interactions with devices and redefining computing. The challenge of running generative AI on devices with limited computational resources is significant, and traditional CPUs and GPUs often fall short. Neural processing units (NPUs) offer a promising solution with their specialized architecture designed to meet the demands of generative AI. By integrating NPUs into System-on-Chip (SoC) technology alongside CPUs and GPUs, we can utilize each processor’s strengths, leading to faster, more efficient, and sustainable AI performance on devices. As NPUs continue to evolve, they are set to enhance on-device AI capabilities, making applications more responsive and energy-efficient.