
Promoting Dignity and Independence Through Ethical Robotics: What Your Business Can Do

Robotics is no longer confined to factory floors or research labs; it’s on a much more exciting path. Increasingly, robots are helping people maintain their autonomy and quality of life. The opportunity for businesses is real, but so is the responsibility to ensure these systems are designed, deployed, and maintained in ways that respect human dignity.

This isn’t about lofty mission statements or futuristic visions. It’s about practical choices your organisation can make now to ensure robotics works for people, not at their expense.

Start with the people, not the machine

The temptation in robotics is to begin with the capabilities of the hardware or AI and then look for somewhere to deploy it. Ethical design turns that process on its head.

If you’re developing or procuring assistive robots (mobility aids, social companions, or devices that help with daily tasks, for example), start by mapping the actual needs of the intended users in their specific cultural and regulatory context.

A robot designed for a Finnish retirement community will face different expectations from one used in an Italian rehabilitation clinic. Language support, physical interaction norms, and privacy expectations vary widely from country to country. Involving representative users in workshops and prototype testing isn’t just good ethics; it’s a competitive advantage.

PAL Robotics is one company that has done localisation successfully. Its pilot scheme localised everything from the user interface down to physical behaviours, with close attention to cultural relevance, resulting in robots that feel more intuitive and effective across different European contexts.

Prioritise transparency over cleverness

Many European regulators are already signalling that “black box” robotics will not be acceptable in contexts involving vulnerable populations. Users and their families need to understand, in plain, local language, what the robot can and cannot do, how it makes decisions, and what happens to the data it collects. Security is heading the same way: the UK’s Product Security and Telecommunications Infrastructure (PSTI) regime, in force since 2024, bans universal default passwords on connectable consumer products. Given that almost every assistive robot now connects to a Wi-Fi network, this is a clear sign that regulation will only tighten at every level, and understandably so.

For example, if a care-assist robot uses machine vision to detect falls, be clear about its accuracy rates, what happens when it makes a mistake, and who gets alerted. Don’t bury this in a technical PDF; make it accessible on the device itself and in multiple formats. More importantly, invite user feedback and use it to keep improving the system.

Transparency also builds trust with professional staff who work alongside the robot. If they understand its limitations, they’re far more likely to integrate it effectively rather than work around it.

Design for graceful failure

No system is perfect. Ethical robotics doesn’t just plan for success; it plans for the inevitable moments when things go wrong.

A well-designed assistive robot should fail in a way that does not compromise safety or dignity. For instance, if a robot arm used for feeding malfunctions, it should default to a safe, stationary position and alert a human operator immediately. If a mobility robot loses connectivity, it should stop in a stable configuration rather than attempting risky manoeuvres.

Businesses must run “failure drills” during testing, simulating sensor faults, power loss, or unexpected human actions, and document how the system responds. This not only reduces harm but can also help protect against liability claims in markets with strict product safety regimes, such as Germany and the Netherlands, and across the wider EU and UK.
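For engineering teams, the fail-safe behaviour and failure drills described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions: `AssistiveRobot`, `Fault`, `State`, and `run_failure_drill` are hypothetical names, not part of any real robot SDK, and the sketch models only the software side. On real hardware, safety under power loss has to come from the hardware itself (for example, brakes that engage when unpowered).

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Fault(Enum):
    # Hypothetical fault categories, mirroring the drills described above.
    SENSOR_FAULT = auto()
    POWER_LOSS = auto()
    CONNECTIVITY_LOSS = auto()
    UNEXPECTED_CONTACT = auto()


class State(Enum):
    OPERATING = auto()
    SAFE_STOP = auto()


@dataclass
class AssistiveRobot:
    """Hypothetical controller: any fault forces a safe, stationary state."""
    state: State = State.OPERATING
    alerts: list = field(default_factory=list)

    def handle_fault(self, fault: Fault) -> State:
        # Whatever went wrong, stop and hold a stable configuration
        # rather than attempting a risky recovery manoeuvre on its own.
        self.state = State.SAFE_STOP
        # Always alert a human operator; the robot never fails silently.
        self.alerts.append(
            f"Operator alert: {fault.name}, robot holding safe position"
        )
        return self.state


def run_failure_drill(faults):
    """Inject each fault into a fresh robot and record how it responded."""
    report = {}
    for fault in faults:
        robot = AssistiveRobot()
        final_state = robot.handle_fault(fault)
        report[fault.name] = {
            "final_state": final_state.name,
            "operator_alerted": len(robot.alerts) == 1,
        }
    return report


if __name__ == "__main__":
    # Run one drill per fault type and print the documented outcome.
    for name, outcome in run_failure_drill(list(Fault)).items():
        print(name, outcome)
```

The drill report doubles as the documentation the paragraph calls for: each injected fault is paired with the state the system ended in and whether a human was alerted.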

Be deliberate about data ethics

In assistive and healthcare robotics, the data collected can be intimate: movement patterns, speech, facial expressions, and even emotional states.

Store only the data you need, and only for as long as it’s genuinely useful. Make deletion easy and verifiable. Avoid repurposing data for commercial analytics without explicit, informed consent. And remember: consent in a care setting is not a one-off tick-box. It is a legal requirement that must be revisited as part of an ongoing conversation, especially when users’ cognitive abilities may change over time.
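As one illustration of “store only what you need” and “make deletion verifiable”, a retention purge might look like the sketch below. It is a minimal example under assumptions: the data categories and retention periods in `RETENTION` are hypothetical placeholders (real values depend on your purpose, legal basis, and regulatory advice), and `Record` and `purge` are illustrative names rather than any particular product’s API.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention periods per data category. Any category not listed
# here has no stated purpose, so it is deleted by default.
RETENTION = {
    "fall_alert": timedelta(days=90),
    "movement_pattern": timedelta(days=30),
}


@dataclass
class Record:
    record_id: str
    category: str
    created: datetime
    consent_current: bool  # consent reconfirmed at the most recent review


def purge(records: list[Record], now: datetime) -> tuple[list[Record], list[dict]]:
    """Split records into those kept and a deletion log of those removed."""
    kept, deletion_log = [], []
    for r in records:
        limit = RETENTION.get(r.category)
        expired = limit is None or now - r.created > limit
        if expired or not r.consent_current:
            # Log only a hash of the record ID plus metadata, so each deletion
            # can be evidenced without keeping the personal data itself.
            deletion_log.append({
                "record_hash": hashlib.sha256(r.record_id.encode()).hexdigest(),
                "category": r.category,
                "deleted_at": now.isoformat(),
            })
        else:
            kept.append(r)
    return kept, deletion_log


if __name__ == "__main__":
    now = datetime(2025, 1, 31, tzinfo=timezone.utc)
    records = [
        Record("a", "fall_alert", now - timedelta(days=10), True),
        Record("b", "movement_pattern", now - timedelta(days=45), True),
        Record("c", "fall_alert", now - timedelta(days=5), False),
        Record("d", "marketing_profile", now, True),
    ]
    kept, log = purge(records, now)
    print([r.record_id for r in kept], len(log))
```

Note the design choice: withdrawn or unconfirmed consent triggers deletion just as expiry does, which reflects treating consent as an ongoing conversation rather than a one-off tick-box.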

If your robotics system uses third-party AI services, ensure their data handling meets the same ethical and legal standards, such as those set out by the Information Commissioner’s Office (ICO) in the UK. A breach by a subcontractor can still damage your brand and attract regulatory attention.

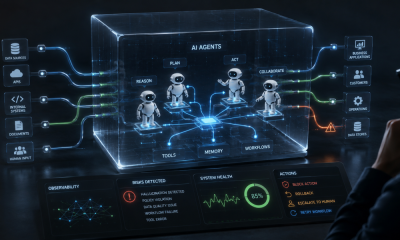

Learn from seasoned robotics providers such as Stealth Robotics, which maintains detailed incident logs and conducts regular penetration tests, vulnerability assessments, and recovery drills as part of a proactive security process.

Support the human workforce, don’t sideline it

One of the fastest ways to destroy trust in robotics is to frame it as a replacement for human care, and it is a mistake I am keen to help others avoid. In the UK and much of Europe, the care and rehabilitation sectors already face severe staff shortages; the UK alone, for instance, has 157,000 vacancies. Robots can relieve physical strain, automate repetitive tasks, and free up staff time for human interaction, but only if they are deployed thoughtfully.

Work with care professionals, relevant industry associations, and local training bodies to create clear protocols for how robots and humans collaborate in specific contexts. Training is vital to ensure staff feel confident operating and troubleshooting the technology. Highlight stories where robotics has allowed staff to spend more time on emotional and complex care.

Invest in local compliance and cultural fit

A robotics solution that works smoothly in one country may face barriers in another. Electrical safety standards, wireless spectrum allocations, medical device classifications, and even accessibility requirements can differ.

Cultural fit matters just as much. A humanoid (or anthropomorphic) robot that makes light conversation may be welcomed in one region but seen as intrusive in another, and it may be received differently by different age groups within the same community. Test across diverse user groups before rolling out at scale. I know how important localisation is, so be extremely thorough in your research.

Measure what matters

Ethical robotics isn’t just a launch checklist; it’s a lifecycle commitment. Build metrics that track the actual impact on dignity and independence over time. Are users reporting greater confidence in daily activities? Are incidents of embarrassment or discomfort declining? Are staff reporting less stress and more job satisfaction?

Publish these findings, even if they’re imperfect. In a market crowded with claims about “human-centred” design, evidence-based results will set you apart.

For example, the IEEE, via ThinkMetrics, has released standards such as IEEE 7010 (developed as project P7010), which sets out wellbeing metrics grounded in validated indices for assessing how autonomous and intelligent systems, including robots, affect users’ physical, emotional, and social welfare.

The bottom line

For UK and EU businesses, promoting dignity and independence through robotics isn’t a niche “corporate social responsibility” exercise; it’s an essential part of building sustainable, trusted technology in a region with some of the world’s most robust human rights frameworks.

Ultimately, the companies that succeed will be those that design with empathy, test with rigour, adapt for local needs, and remain transparent about both the potential and the limits of their machines. A robot’s true value is not its sophistication, but its ability to preserve dignity and provide companionship.