Infineon Technologies and d-Matrix Partner on Low-Latency AI Infrastructure

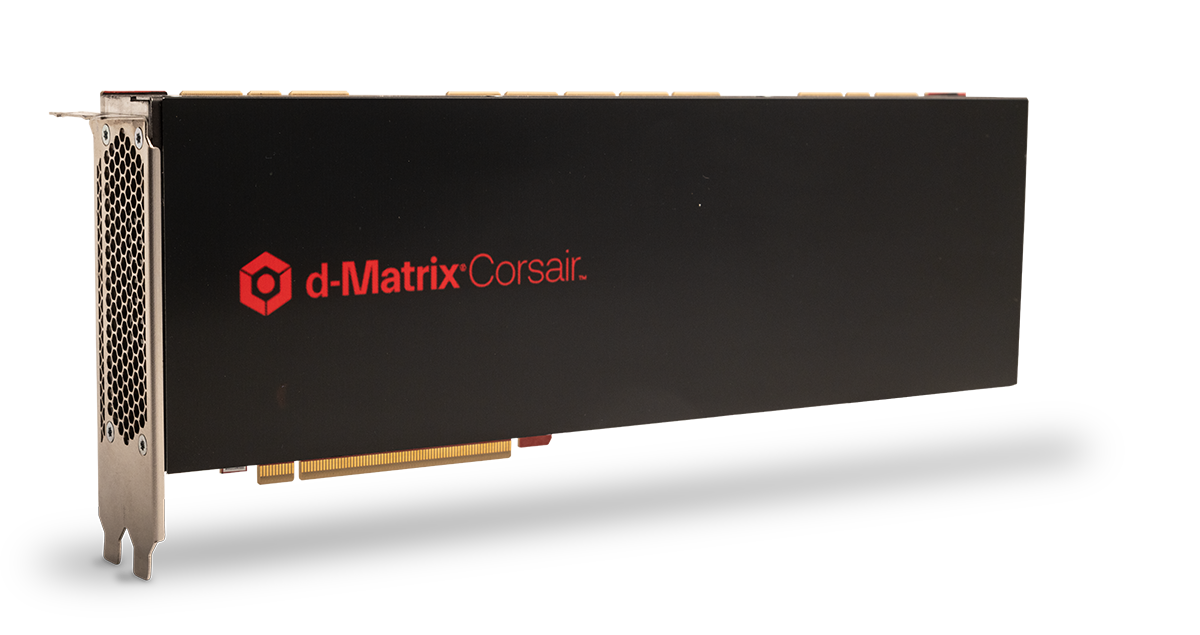

Infineon Technologies has announced a collaboration with d-Matrix aimed at improving the performance and energy efficiency of AI inference systems in modern data centers. The partnership centers on d-Matrix’s Corsair AI inference accelerator platform and Infineon’s OptiMOS dual-phase power modules, which are designed to support high-density compute environments for interactive AI workloads.

The announcement highlights a growing shift within the AI hardware industry. While much of the infrastructure boom of the past several years focused on training increasingly large AI models, the industry is now rapidly expanding into inference: the process of running trained models in real-world applications such as chatbots, agentic AI systems, copilots, search, financial analytics, and healthcare decision support. These workloads place different demands on hardware, particularly around latency, responsiveness, and power consumption.

Why AI Inference Is Becoming a Major Hardware Battleground

AI inference has emerged as one of the fastest-growing segments of the AI infrastructure market because interactive AI systems require responses in milliseconds rather than seconds. d-Matrix has been positioning Corsair specifically for these workloads, emphasizing ultra-low latency and energy-efficient inference for large language models and AI agents.

According to d-Matrix, Corsair was designed around a digital in-memory compute architecture intended to reduce the memory bottlenecks that often slow down generative AI inference. The company claims the platform can significantly lower latency and improve throughput compared to traditional GPU-centric inference systems, particularly for interactive applications.

The partnership with Infineon addresses another increasingly critical challenge: power delivery.

As AI servers continue to increase in density, efficiently delivering power to accelerators has become a limiting factor for scaling infrastructure. Infineon’s OptiMOS TDM2254xx modules are designed for vertical power delivery architectures that help reduce electrical losses while improving power density inside compact server systems.
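Why the length of the power path matters can be illustrated with basic conduction-loss arithmetic: resistive loss grows with the square of the current and in proportion to the resistance between the regulator and the chip, so a shorter, lower-resistance path wastes less power. The sketch below is a minimal illustration under assumed values. The 1,000 A current and the micro-ohm resistances are hypothetical, not Infineon or d-Matrix specifications, and the function name is introduced only for this example.

```python
def conduction_loss_watts(current_amps: float, path_resistance_ohms: float) -> float:
    """Resistive loss P = I^2 * R in the path between the regulator and the load."""
    if current_amps < 0 or path_resistance_ohms < 0:
        raise ValueError("current and resistance must be non-negative")
    return current_amps ** 2 * path_resistance_ohms


if __name__ == "__main__":
    current = 1000.0          # hypothetical accelerator core current, in amps
    longer_path = 100e-6      # hypothetical path resistance, in ohms
    shorter_path = 50e-6      # hypothetical shorter path, half the resistance

    for label, resistance in (("longer path", longer_path), ("shorter path", shorter_path)):
        loss = conduction_loss_watts(current, resistance)
        print(f"{label}: {loss:.1f} W lost in the delivery path")
```

At a fixed current, halving the path resistance halves the loss, which is the general reason power stages are placed as close to the processor as packaging allows.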

The Shift Toward Real-Time AI Systems

The companies framed the collaboration around the rise of “interactive AI,” where inference systems must continuously generate outputs with extremely low delay. That includes conversational AI, AI agents, real-time reasoning systems, and applications requiring rapid token generation from large language models.

d-Matrix founder and CEO Sid Sheth said the architecture behind Corsair was built specifically for sub-2-millisecond token latency, a metric that has become increasingly important as enterprises move AI systems from experimentation into customer-facing environments.
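To put the sub-2 millisecond figure in perspective, per-token latency converts directly into a generation rate for a single response stream. The following is illustrative arithmetic only: the function names and the 500-token response length are assumptions made for this example, not figures from d-Matrix, and the calculation ignores prompt processing and network delay.

```python
def tokens_per_second(token_latency_ms: float) -> float:
    """Convert per-token latency in milliseconds to tokens per second for one stream."""
    if token_latency_ms <= 0:
        raise ValueError("token latency must be positive")
    return 1000.0 / token_latency_ms


def response_time_seconds(num_tokens: int, token_latency_ms: float) -> float:
    """Time to generate num_tokens one after another at a fixed per-token latency."""
    if num_tokens < 0:
        raise ValueError("token count must be non-negative")
    return num_tokens * token_latency_ms / 1000.0


if __name__ == "__main__":
    latency_ms = 2.0  # upper bound implied by "sub-2 millisecond"
    print(tokens_per_second(latency_ms))           # 500.0
    print(response_time_seconds(500, latency_ms))  # 1.0
```

In other words, a 2 ms ceiling per token corresponds to at least 500 tokens per second per stream, so a hypothetical 500-token answer would finish generating in about one second or less.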

The broader AI industry is also beginning to recognize that inference infrastructure may evolve differently from training infrastructure. While GPU clusters dominated the first phase of generative AI expansion, inference increasingly rewards architectures optimized around memory bandwidth, latency, networking, and energy efficiency rather than raw compute alone.

Power Efficiency Is Becoming Central to AI Scaling

One of the biggest constraints facing hyperscalers and AI cloud providers is electricity demand. AI inference workloads can run continuously across millions of requests per day, making operational efficiency critical for deployment costs.

Infineon has been aggressively expanding its position within AI infrastructure through semiconductor technologies based on silicon, silicon carbide (SiC), and gallium nitride (GaN). The company has increasingly focused on supplying the power delivery layer underneath AI accelerators and server infrastructure.

The collaboration with d-Matrix reflects how semiconductor firms are becoming more tightly integrated with AI accelerator startups as the industry searches for alternatives to conventional GPU-heavy architectures.

AI Infrastructure Is Expanding Beyond Traditional GPUs

The partnership also arrives during a broader wave of experimentation in AI hardware. A growing number of startups are developing specialized accelerators focused specifically on inference, memory-centric computing, or AI networking.

d-Matrix has differentiated itself through its emphasis on compute-in-memory technologies and low-latency inference systems tailored for generative AI. The company has also expanded its infrastructure strategy beyond accelerator chips alone, recently emphasizing networking, composable infrastructure, and full-system optimization for inference clusters.

As AI applications become increasingly agentic and interactive, infrastructure providers are expected to place greater emphasis on reducing latency, lowering energy consumption, and improving system-level efficiency across entire data center stacks rather than focusing solely on raw processing power.