Anderson's Angle

AI Video Perfects the Cat Selfie

AI video generators often give results that are close, but no cigar, in terms of delivering what your text-prompt wanted. But a new high-level fix makes all the difference.

Generative video systems often have difficulty making videos that are really creative or wild, and often fail to live up to the expectations of users’ text-prompts.

Part of the reason for this is entanglement – the fact that vision/language models have to compromise on how long they train on their source data. Too little training, and the concepts are flexible, but not fully-formed – too much, and the concepts are accurate, but no longer flexible enough to incorporate into novel combinations.

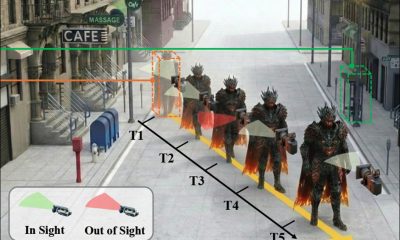

You can get the idea from the video embedded below. On the left is the kind of halfway-house compromise that many AI systems deliver in response to a demanding prompt (the prompt is at the top of the video in all four examples) that asks for some juxtaposition of elements that’s too fantastical to have been a real training example. On the right is an AI output that sticks to the prompt much better:

Click to play (no audio). On the right, we see ‘factorized’ Wan 2.2 really delivering on the prompts, in comparison to the vague interpretations of ‘vanilla’ Wan 2.2 on the left. Please refer to the source video files for better resolution and many more examples, though the curated versions seen here do not exist at the project site, and were assembled for this article. Source

Well, though we have to forgive the clapping duck’s human hands (!), it’s clear that the examples on the right adhere to the original text-prompt far better than the ones on the left.

Interestingly, both pipelines featured use essentially the same architecture: the popular and very capable Wan 2.2, a Chinese release that has gained significant ground in the open source and hobbyist communities this year.

The difference is that the second generative pipeline is factorized, which in this case means that a large language model (LLM) has been used to re-interpret the first (seed) frame of the video, so that it will be far easier for the system to deliver what the user is asking for.

This ‘visual anchoring’ involves injecting an image crafted from this LLM-enhanced prompt into the generative pipeline as a ‘start frame’, and using a LoRA interpretive model to help integrate the ‘intruder’ frame into the video creation process.

The results, in terms of prompt fidelity, are quite remarkable, particularly for a solution that seems rather elegant:

Click to play (no audio). Further examples of ‘factorized’ video generations really sticking to the script. Please refer to the source video files for better resolution and many more examples, though the curated versions seen here do not exist at the project site, and were assembled for this article.

This solution comes in the form of the new paper Factorized Video Generation: Decoupling Scene Construction and Temporal Synthesis in Text-to-Video Diffusion Models, and its video-laden accompanying project website.

While many current systems attempt to boost prompt accuracy by using language models to rewrite vague or underspecified text, the new work argues that this strategy still leads to failure when the model’s internal scene representation is flawed.

Even with a detailed rewritten prompt, text-to-video models often miscompose key elements or generate incompatible initial states that break the logic of the animation. As long as the first frame fails to reflect what the prompt describes, the resulting video cannot recover, regardless of how good the motion model is.

The paper states*:

‘[Text-to-video] models frequently produce distributionally shifted frames yet still achieve [evaluation scores] comparable to I2V models, indicating that their motion modeling remains reasonably natural even when scene fidelity is relatively poor.

‘[Image-to-Video] models exhibit the complementary behavior, strong [evaluation scores] from accurate initial scenes and weaker temporal coherence, while I2V+text balances both aspects.

‘This contrast suggests a structural mismatch in current T2V models: scene grounding and temporal synthesis benefit from distinct inductive biases, yet existing architectures attempt to learn both simultaneously within a single model.’

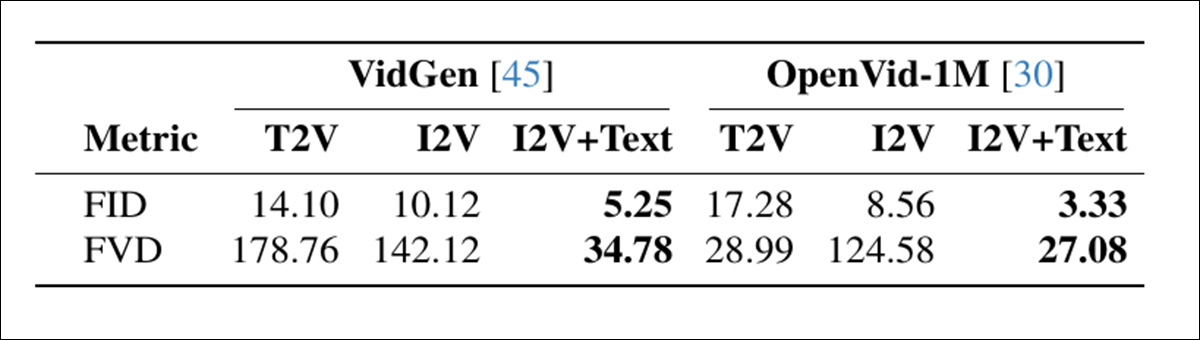

A diagnostic comparison of generation modes found that models without explicit scene anchoring scored well on motion, but often compromised on scene layout, while image-conditioned approaches showed the opposite pattern:

Comparison of video generation modes on two datasets, showing that I2V+text achieves the best frame quality (FID) and temporal coherence (FVD), highlighting the benefit of separating scene construction from motion. Source

These findings point to a structural flaw wherein current models try to learn both scene layout and animation in one go, even though the two tasks require different kinds of inductive bias, and are better handled separately.

Perhaps of greatest interest is that this ‘trick’ can potentially be applied to local installations of models such as Wan 2.1 and 2.2, and similar video diffusion models such as Hunyuan Video. Anecdotally, comparing the quality of hobbyist output to commercial generative portals such as Kling and Runway, most of the major API providers are improving on open source offerings such as WAN with LoRAs, and – it seems – with tricks of the kind seen in the new paper. Therefore this particular approach could represent a catch-up for the FOSS contingent.

Tests conducted for the method indicate that this simple and modular approach offers a new state-of-the-art on the T2V-CompBench benchmark, significantly improving all tested models. The authors note in conclusion that while their system radically improves fidelity, it does not address (nor is it made to address) identity drift, currently the bane of generative AI research.

The new paper comes from four researchers at Ecole Polytechnique Fédérale de Lausanne (EPFL) in Switzerland.

Method and Data

The central proposition of the new technique is that text-to-video (T2V) diffusion models need to be ‘anchored’ to starting frames that really fit the desired text-prompt.

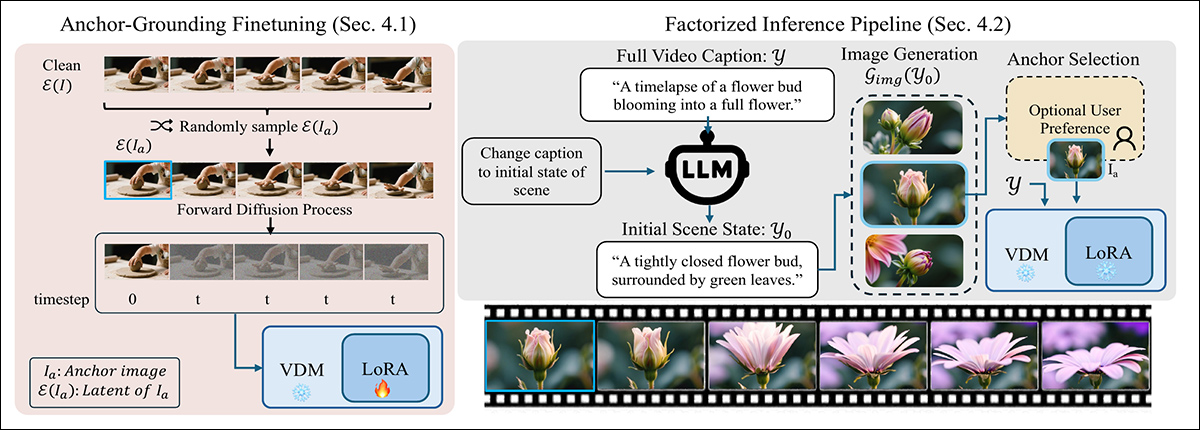

To ensure the model respects the starting frame, the new method disrupts the standard diffusion process by injecting a clean latent from the anchor image at timestep zero, replacing one of the usual noisy inputs. This unfamiliar input confuses the model at first, but with minimal LoRA finetuning, it learns to treat the injected frame as a fixed visual anchor rather than part of the noise trajectory:

A two-stage method for grounding text-to-video generation with a visual anchor: Left, the model is finetuned using a lightweight LoRA to treat an injected clean latent as a fixed scene constraint. Right, the prompt is split into a first-frame caption, which is used to generate the anchor image guiding the video.
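The injection step described above can be pictured with a toy example. What follows is a minimal sketch rather than the authors' code: it assumes that the anchor occupies the first frame slot of the video latent, and that each frame carries its own timestep, so that the anchor can be marked as clean (timestep zero) while the other frames stay noisy. The names `inject_anchor` and `make_noise` are hypothetical, and plain Python lists stand in for real latent tensors.

```python
import random


def make_noise(num_frames, dim, seed=0):
    """Toy stand-in for the noisy video latent: one small vector per frame."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_frames)]


def inject_anchor(noisy_latents, anchor_latent, t):
    """Swap the first frame for the clean anchor latent.

    Returns (latents, timesteps). The anchor frame is tagged with timestep 0
    (clean), while every other frame keeps the current noise timestep t.
    Assumption: per-frame timesteps and a first-frame anchor slot.
    """
    if not noisy_latents:
        raise ValueError("need at least one frame")
    if len(anchor_latent) != len(noisy_latents[0]):
        raise ValueError("anchor and frame latents must have the same size")
    # Copy everything so the caller's noise tensor is left untouched.
    latents = [list(anchor_latent)] + [list(f) for f in noisy_latents[1:]]
    timesteps = [0] + [t] * (len(noisy_latents) - 1)
    return latents, timesteps


noise = make_noise(num_frames=4, dim=3)
anchor = [0.5, -0.25, 1.0]
latents, timesteps = inject_anchor(noise, anchor, t=999)
print(timesteps)
```

In this toy version, only the first slot changes; the remaining frames are still pure noise that the denoiser has to resolve, which is the situation the LoRA finetuning is said to teach the model to handle.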

At inference, the method rewrites the prompt to describe only the first frame, using an LLM to extract a plausible initial scene state focused on layout and appearance.

This rewritten prompt is passed to an image generator to produce a candidate anchor frame (which can optionally be refined by the user). The selected frame is encoded into a latent and injected into the diffusion process by replacing the first timestep, allowing the model to generate the rest of the video while remaining anchored to the initial scene – a process that works without requiring changes to the underlying architecture.
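The inference flow just described can be sketched as a chain of four stages. This is only an illustration under stated assumptions: every callable passed in (`rewrite_prompt`, `generate_image`, `encode_image`, `generate_video`, `refine_image`) is a hypothetical placeholder for an LLM, an image generator, a latent encoder, a video model and an optional user edit, none of which are real library APIs. It also assumes that the video stage receives the original prompt alongside the anchor latent.

```python
from typing import Any, Callable, Optional


def factorized_generate(
    prompt: str,
    rewrite_prompt: Callable[[str], str],
    generate_image: Callable[[str], Any],
    encode_image: Callable[[Any], Any],
    generate_video: Callable[[str, Any], Any],
    refine_image: Optional[Callable[[Any], Any]] = None,
) -> dict:
    """Run scene construction first, then temporal synthesis."""
    # Stage 1: describe only the first frame (layout and appearance).
    caption = rewrite_prompt(prompt)
    # Stage 2: render a candidate anchor frame from that caption.
    image = generate_image(caption)
    # Optional: let the user touch up or replace the candidate.
    if refine_image is not None:
        image = refine_image(image)
    # Stage 3: encode the chosen frame into a latent.
    anchor_latent = encode_image(image)
    # Stage 4: generate the video, anchored to that latent.
    video = generate_video(prompt, anchor_latent)
    return {"caption": caption, "anchor_image": image, "video": video}


# Stub stages, purely to show the data flow.
result = factorized_generate(
    "a cat takes a selfie",
    rewrite_prompt=lambda p: f"first frame of: {p}",
    generate_image=lambda c: {"image_for": c},
    encode_image=lambda img: ("latent", img["image_for"]),
    generate_video=lambda p, z: {"prompt": p, "anchor": z},
)
print(result["caption"])
```

Because each stage is just a function argument here, any one of them could be swapped out without touching the others, which mirrors the modularity the article attributes to the method.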

The process was tested by creating LoRAs for Wan2.2-14B, Wan2.1-1B, and CogVideo1.5-5B. The LoRA training was conducted at a rank of 256, on 5000 randomly-sampled clips from the UltraVideo collection.

Training lasted 6000 steps, and required 48 GPU hours† for Wan-1B and CogVideo-5B, and 96 GPU hours for Wan-14B. The authors note that Wan-5B natively supports text-only and text-image conditioning (which are in this case being foisted onto the older frameworks), and therefore did not require any finetuning.
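To put these figures in perspective, the short sketch below does two pieces of arithmetic. The first uses the standard LoRA parameter count for a single adapted linear layer, rank × (inputs + outputs); the 4096 × 4096 layer size is an illustrative assumption, not a dimension taken from Wan or CogVideo. The second simply divides the reported GPU hours by the reported step count.

```python
def lora_params(d_in, d_out, rank):
    """Trainable parameters LoRA adds to one linear layer (two thin matrices)."""
    return rank * (d_in + d_out)


def full_params(d_in, d_out):
    """Parameters in the original dense weight matrix."""
    return d_in * d_out


def gpu_seconds_per_step(gpu_hours, steps):
    """Average GPU-seconds spent on each training step."""
    return gpu_hours * 3600 / steps


# Hypothetical 4096 x 4096 projection, with the reported rank of 256.
added = lora_params(4096, 4096, 256)
ratio = added / full_params(4096, 4096)
print(added, ratio)

# Reported budgets: 6000 steps in 48 or 96 GPU hours.
small = gpu_seconds_per_step(48, 6000)
large = gpu_seconds_per_step(96, 6000)
print(small, large)
```

For the hypothetical layer above, a rank of 256 adds about an eighth as many trainable weights as the layer itself holds. The reported training budgets work out at roughly 28.8 GPU-seconds per step for the smaller models and 57.6 for Wan-14B.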

Tests

In the experiments run for the process, each text-prompt was initially refined using Qwen2.5-7B-Instruct, which used the result to generate a detailed ‘seed image’ caption containing a description of the entire scene. This was then passed to QwenImage, which was tasked with generating the ‘magic frame’ to be interposed into the diffusion process.

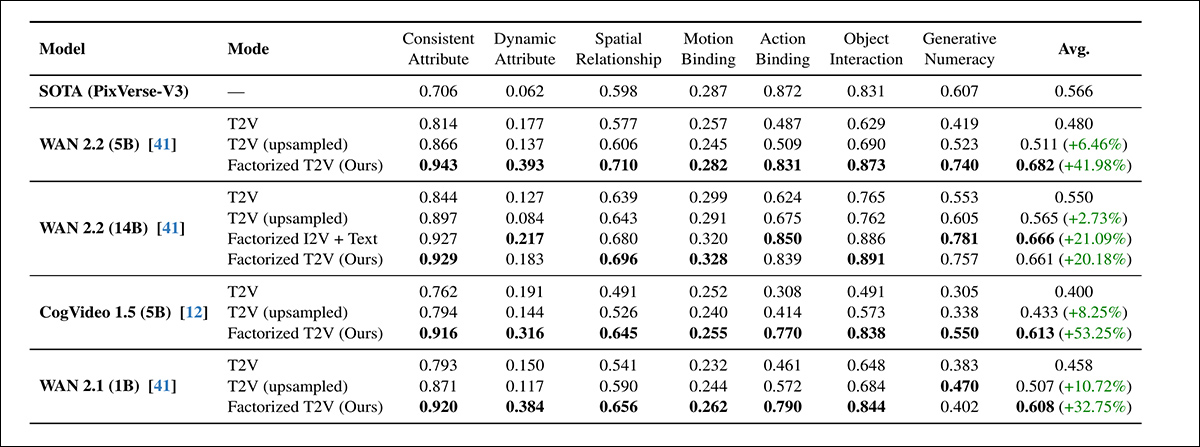

The benchmarks used to assess the system included the aforementioned T2V-CompBench, for testing compositional understanding by scoring how well models preserved objects, attributes, and actions within a coherent scene; and VBench 2.0, for evaluating broader reasoning and consistency across 18 metrics, grouped into creativity, commonsense reasoning, controllability, human fidelity, and physics:

Across all seven evaluation categories of T2V-CompBench, the factorized T2V method outperformed both standard and upsampled T2V baselines for every tested model, with gains reaching up to 53.25%. The highest-scoring variants frequently matched or exceeded the proprietary PixVerse-V3 baseline.

Regarding this initial round of tests, the authors state*:

‘[Across] all models, adding an anchor image consistently improves compositional performances. All smaller Factorized models (CogVideo 5B, Wan 5B and Wan 1B) outperform the larger Wan 14B T2V model.

‘Our factorized Wan 5B also outperforms commercial PixVerse-V3 baseline which is the best reported model on the benchmark. This demonstrates that visual grounding substantially enhances scene and action understanding even in smaller-capacity models.

‘Within each model family, the factorized version outperforms the original model. Notably, our lightweight anchor-grounded LoRA on WAN 14B reaches performance comparable [to] its pretrained I2V 14B variant (0.661 vs. 0.666), despite requiring no full retraining.’

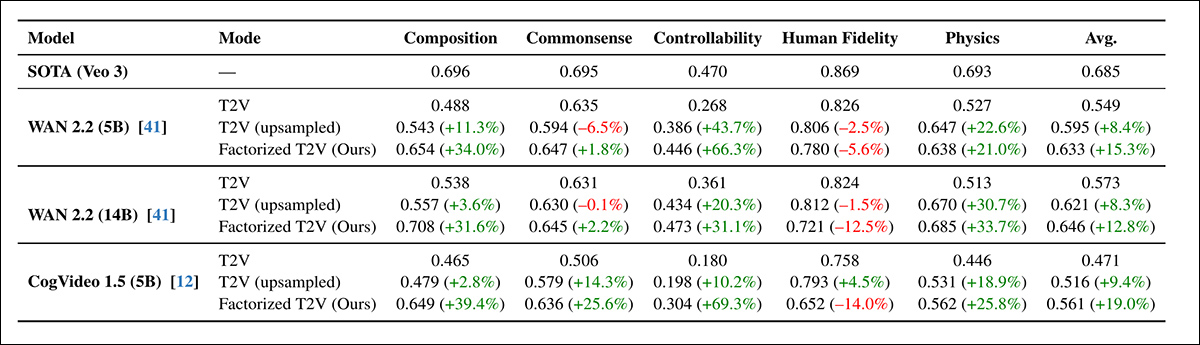

Next came the VBench2.0 round:

The factorized T2V approach consistently improved VBench 2.0 performance across composition, commonsense reasoning, controllability, and physics, with some gains exceeding 60% – although human fidelity remained below the proprietary Veo 3 baseline.

Across all architectures, the factorized approach boosted scores in every VBench category except human fidelity, which slightly declined even with prompt upsampling. WAN 5B outperformed the larger WAN 14B, reinforcing earlier T2V-CompBench results that visual grounding contributed more than scale.

While gains on VBench were consistent, they were smaller than those seen on T2V-CompBench, and the authors attribute this to VBench’s stricter binary scoring regime.

For the qualitative tests, the paper supplies static images; for a clearer idea, we refer the reader to the composite videos embedded in this article, with the caveat that the source videos at the project site are more numerous and diverse, and offer greater resolution and detail. Regarding qualitative results, the paper states:

‘Anchored videos consistently exhibit more accurate scene composition, stronger object–attribute binding, and clearer temporal progression.’

The factorized method remained stable even when the number of diffusion steps was cut from 50 to 15, showing almost no performance loss on T2V-CompBench. By contrast, both the text-only and upsampled baselines degraded sharply under the same conditions.

Although reducing steps could theoretically triple speed, the full generation pipeline only became 2.1x faster in practice, due to fixed costs from anchor-image generation. Still, the results indicated that anchoring not only improved sample quality but also helped stabilize the diffusion process, supporting faster and more efficient generation without loss in accuracy.
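The gap between the theoretical and the observed speed-up can be illustrated with a simple cost model. This is a back-of-envelope sketch, not a measurement from the paper: it assumes that diffusion time scales linearly with step count, and that the fixed cost (anchor-image generation and prompt rewriting) is paid in both the 50-step and the 15-step runs. The function names are hypothetical.

```python
def pipeline_speedup(steps_before, steps_after, fixed_fraction):
    """Speed-up of the whole pipeline when only the diffusion steps shrink.

    fixed_fraction is the fixed cost, expressed as a fraction of the
    diffusion time at steps_before.
    """
    before = 1.0 + fixed_fraction
    after = steps_after / steps_before + fixed_fraction
    return before / after


def implied_fixed_fraction(steps_before, steps_after, observed_speedup):
    """Invert the model: which fixed cost would explain an observed speed-up?"""
    r = steps_after / steps_before
    # Solve (1 + f) / (r + f) = s for f.
    return (1.0 - observed_speedup * r) / (observed_speedup - 1.0)


ideal = pipeline_speedup(50, 15, 0.0)
fixed = implied_fixed_fraction(50, 15, 2.1)
check = pipeline_speedup(50, 15, fixed)
print(round(ideal, 2), round(fixed, 3), round(check, 2))
```

Under these assumptions, a fixed cost of roughly a third of the 50-step diffusion time would be enough to pull the ideal speed-up of about 3.3x down to the reported 2.1x.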

The project website provides examples of upsampled vs. new method generations, of which we offer a few (lower resolution) edited examples here:

Click to play (no audio). Upsampled starting sources vs. the authors’ factorized approach.

The authors conclude:

‘Our results suggest that improved grounding, rather than increased capacity alone, may be equally important. Recent advances in T2V diffusion have relied heavily on increasing model size and training data, yet even large models often struggle to infer a coherent initial scene from text alone.

‘This contrasts with image diffusion, where scaling is relatively straightforward; in video models, each architectural improvement must operate over an additional temporal dimension, making scaling substantially more resource intensive.

‘Our findings indicate that improved grounding can complement scale by addressing a different bottleneck: establishing the correct scene before motion synthesis begins.

‘By factorizing video generation into scene composition and temporal modeling, we mitigate several common failure modes without requiring substantially larger models. We view this as a complementary design principle that can guide future architectures toward more reliable and structured video synthesis.’

Conclusion

Though the problems of entanglement are very real, and may require dedicated solutions (such as improved curation and distribution evaluations prior to training), it has been an eye-opener to watch factorization ‘unglue’ several stubborn and ‘stuck’ concept prompt-orchestrations into much more accurate renderings – with only a moderate layer of LoRA conditioning, and the intervention of a notably improved start/seed image.

The gulf in resources between local hobbyist inference and commercial solutions may not be quite as enormous as supposed, given that nearly all providers are seeking to rationalize their considerable GPU resource outlay to consumers.

Anecdotally, a very large number of the current crop of generative video providers appear to be using branded and generally ‘souped-up’ versions of Chinese FOSS models. The main ‘moat’ any of these ‘middleman’ systems seem to have is that they have taken the trouble of training LoRAs, or else – at greater expense, and slightly greater reward – actually conducting a full fine-tune of the model weights††.

Insights of this kind could help to close that gap further, in the context of a release scene where the Chinese seem determined (not necessarily for altruistic or idealistic reasons) to democratize gen AI, whereas western business interests would perhaps prefer that increasing model size and regulations eventually cloister any really good models behind APIs, and multiple layers of content filters.

* Authors’ emphases, not mine.

† The paper does not specify which GPU was chosen, or how many were used.

†† Though the LoRA route is more likely, both for economical ease of use, and because the full weights, rather than quantized weights, are not always made available.

First published Friday, December 19, 2025