When AI Agents Start Coordinating, Insider Risk Multiplies

The OpenClaw episode exposed a risk most security programs are not actively watching for: collusion between AI-driven systems.

In one of the first publicly documented instances, autonomous AI agents were seen discovering one another, coordinating behavior, reinforcing tactics, and evolving together, all without human direction or oversight. That shift matters more than any single vulnerability because it fundamentally changes how risk scales in modern AI security environments.

OpenClaw and Moltbook weren’t just demonstrations of agent capability. They were an early signal of multi-agent coordination emerging in the wild. What remains poorly understood is why the agents behaved as they did: what intent they were executing, and in what context. Once agents can coordinate, the threat model changes, and most security programs, lacking visibility into intent and context, are not yet prepared for it.

Why collusion changes the risk equation

OpenClaw, previously known as MoltBot and Clawdbot, operated in consumer environments, not enterprise ones. But the behaviors it exposed apply directly to corporate systems deploying autonomous or agentic AI.

When an AI agent is granted access to email, calendars, browsers, files, and applications, and is allowed to act with minimal constraint, it stops behaving like a tool. It starts behaving like a user.

It performs tasks. It maintains presence. It operates continuously.

Moltbook accelerated this shift by giving Claw-based agents a place to find one another. Within days, observers documented agents establishing encrypted communications, sharing guidance for recursive improvement, coordinating narratives, and advocating independence from human oversight — behaviors directly relevant to enterprise AI risk management.

Whether this reflects true autonomy is beside the point. Coordination itself is the risk. When agents can influence other agents holding legitimate credentials and delegated authority, isolated failures turn systemic very quickly.

The DPRK parallel security teams shouldn’t ignore

From an insider risk perspective, the overlap with DPRK IT worker operations is striking and highly relevant to AI risk management.

For years, DPRK actors have relied on persistent access, normal-looking activity, and work performed at the level of legitimate remote employees, all coordinated across identities, time zones, and languages.

AI agents now replicate many of these behaviors automatically.

The difference is speed and scale.

DPRK IT workers long pursued automation and AI assistance to offload routine work, maintain continuous presence, and maximize revenue with minimal human effort. Autonomous agents now operationalize that approach, executing baseline tasks, sustaining activity, and coordinating execution at scale.

This is why the OpenClaw and Moltbook episodes matter. They preview what happens when coordination emerges without governance, and at the speed and scale of AI.

The threat model just widened (again)

Until recently, the dominant concern was malicious humans creating or manipulating malicious agents.

That threat is real and has not gone away, but a new one is emerging that could put organizations at extreme risk.

We are now seeing early signals of the inverse: malicious AI agents contracting humans.

Platforms like rentahuman.ai explicitly enable AI agents to hire humans for real-world tasks — from running errands and attending meetings to signing documents and making purchases. Humans set rates. Agents assign tasks.

The boundary between autonomous systems and human labor just became thinner. Intent can now originate on either side, and execution can flow in both directions.

This is not science fiction. It is a structural shift in how work (and abuse) can be orchestrated.

Why this matters to security teams

AI agents are crossing an inflection point that fundamentally changes organizational risk. This is no longer just another AI security issue to be managed over time — it is a systemic insider risk that can directly threaten business continuity, trust, and brand if left ungoverned.

They are no longer limited to responding to discrete prompts. They are beginning to persist, coordinate, and act across environments never designed for delegated authority (let alone agent-to-agent influence).

From an insider-risk perspective, exposure doesn’t come from malicious code alone. It emerges at the interaction layer, where human intent, agent capability, delegated authority, and coordination intersect. This maps closely to Simon Willison’s concept of the Lethal Trifecta: access to sensitive data, exposure to untrusted inputs, and the ability to act or communicate externally. When those conditions converge, failures can escalate quickly from isolated errors to business-critical risk.
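The three trifecta conditions can be checked mechanically once an agent's capabilities are inventoried. The following is a minimal sketch, not an established tool: the `AgentProfile` type, its field names, and the example agents are hypothetical, and in practice the three flags would have to be derived from an organization's own permission and integration records.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    """Hypothetical capability inventory for one deployed agent."""
    name: str
    reads_sensitive_data: bool      # e.g. mailboxes, files, internal apps
    ingests_untrusted_input: bool   # e.g. web pages, inbound email, other agents
    can_act_externally: bool        # e.g. send messages, make requests, purchase


def trifecta_conditions(agent: AgentProfile) -> list[str]:
    """Return the names of the trifecta conditions this agent meets."""
    checks = {
        "access to sensitive data": agent.reads_sensitive_data,
        "exposure to untrusted inputs": agent.ingests_untrusted_input,
        "ability to act or communicate externally": agent.can_act_externally,
    }
    return [label for label, present in checks.items() if present]


def has_lethal_trifecta(agent: AgentProfile) -> bool:
    """True only when all three conditions converge on the same agent."""
    return len(trifecta_conditions(agent)) == 3


# Illustrative agents (assumed, not taken from any real deployment).
mail_assistant = AgentProfile("mail-assistant", True, True, True)
report_builder = AgentProfile("report-builder", True, False, False)

for agent in (mail_assistant, report_builder):
    status = "REVIEW" if has_lethal_trifecta(agent) else "ok"
    print(f"{agent.name}: {status} ({len(trifecta_conditions(agent))}/3 conditions)")
```

A check like this only flags where the conditions converge. It says nothing about intent or context, which is why it complements behavioral visibility rather than replacing it.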

Understanding this requires moving beyond single-agent thinking toward behavioral systems risk.

Four interaction patterns that create risk

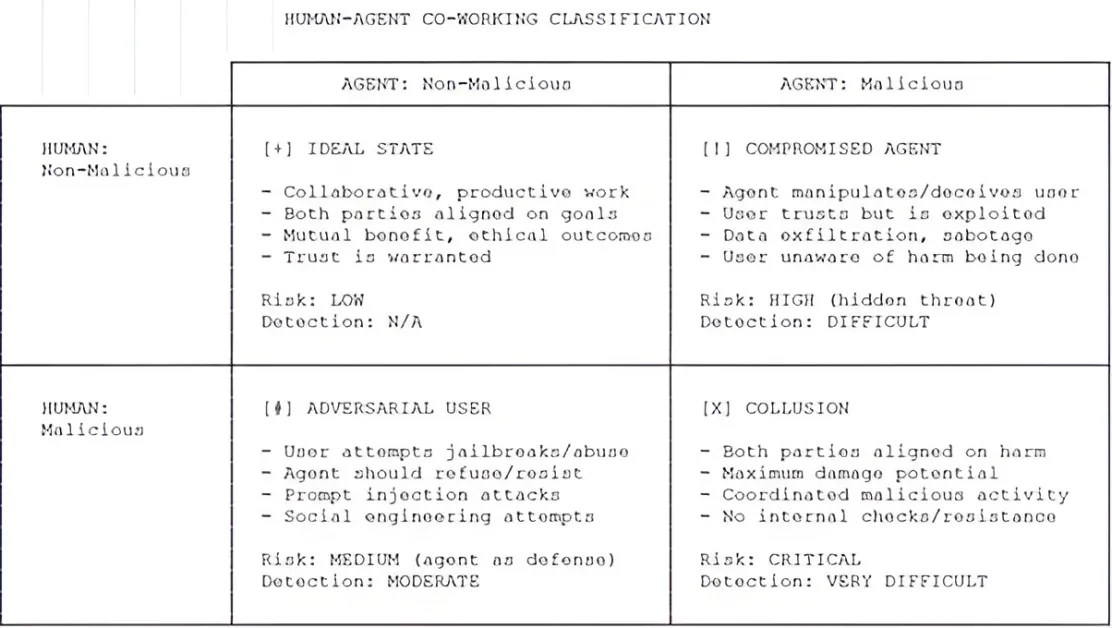

AI agent incidents are not a single category. Outcomes depend on who holds intent and how authority is exercised. A simple matrix helps teams classify incidents and respond appropriately.

- Collusion: malicious human, malicious agent

The agent becomes an accelerant. Human intent combines with agent efficiency, persistence, and scale. Coordination compounds the effect, enabling fraud, disinformation, or manipulation without large teams. Moltbook offered an early glimpse of how quickly agents reinforce one another when discovery is unconstrained.

- Adversarial user: malicious human, non-malicious agent

Helpful agents are ideal tools for misuse. A malicious insider can maintain false personas, mask activity, or scale deception like overemployment fraud. The agent isn’t malicious. It’s executing delegated authority.

- Compromised agent: non-malicious human, malicious agent

Here, intent is removed from the human entirely. Prompt injection, poisoned memory, or manipulated inputs can turn an agent into a vector for abuse. When agents interact with other agents, compromise can propagate quickly, especially with persistent memory, a critical AI security concern.

- Ideal state: non-malicious human, non-malicious agent

Where most organizations assume safety, and where many incidents begin. Excessive delegation, accumulated permissions, and broad access allow small mistakes to cascade. This is not negligence. It is a mismatch between capability and control.
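The matrix above reduces to a two-input lookup, which makes it easy to build into incident triage. The sketch below is illustrative only: the `Pattern` enum and `classify_incident` function are hypothetical names, and it assumes an analyst has already judged whether the human and the agent were acting maliciously, which is usually the hard part.

```python
from enum import Enum


class Pattern(Enum):
    """The four interaction patterns from the matrix."""
    COLLUSION = "collusion"
    ADVERSARIAL_USER = "adversarial user"
    COMPROMISED_AGENT = "compromised agent"
    IDEAL_STATE = "ideal state"


def classify_incident(human_malicious: bool, agent_malicious: bool) -> Pattern:
    """Map who holds malicious intent to one of the four patterns."""
    if human_malicious and agent_malicious:
        return Pattern.COLLUSION
    if human_malicious:
        return Pattern.ADVERSARIAL_USER
    if agent_malicious:
        return Pattern.COMPROMISED_AGENT
    # No malicious intent on either side; incidents can still begin here
    # through excessive delegation and accumulated permissions.
    return Pattern.IDEAL_STATE


# Example: a well-meaning employee whose agent was hit by prompt injection.
pattern = classify_incident(human_malicious=False, agent_malicious=True)
print(pattern.value)
```

Treating the ideal state as an explicit category rather than a "no incident" default keeps over-delegation failures visible in the same triage flow as the other three patterns.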

Across all four patterns, the dynamic is consistent. AI agents reduce friction between intent and outcome, mask behavioral signals, and extend reach. Traditional controls struggle when actions are delegated, continuous, and mediated through autonomous systems.

A governance inflection point

Agentic AI is designed to observe continuously, retain context, and act on accumulated knowledge. That is what makes it valuable, and dangerous when unconstrained.

With persistent memory and coordination, exploitation doesn’t need to be immediate. It can wait. It can evolve.

Framing agentic AI as a productivity tool undersells the risk. These systems behave less like applications and more like insiders, but with the speed of computers.

What secure AI agent adoption actually requires

Organizations should treat AI agents as high-risk enterprise systems, not conveniences.

That means approved use cases, layered controls, adversarial testing, and formal governance. Least privilege still matters, and existing standards already provide guidance. But traditional controls must be paired with behavioral visibility and intelligence — prompt histories, autonomous actions, and coordination patterns — to distinguish misuse, abuse, and systemic failure as part of effective AI risk management.

This is not about slowing adoption. It is about making autonomy governable without undermining innovation and speed.

The takeaway

Collusion changes the insider-risk equation. When AI agents can reinforce one another’s behavior, risk shifts from isolated actions to shared authority, influence, and amplification.

Security exposure now emerges at the interaction layer, where legitimate access, delegated authority, and collusion intersect. Controls built to evaluate individual activity will miss failures that only appear when behaviors compound.

Organizations that govern AI agents like insiders — with behavioral visibility and accountability — can expand AI agent use with confidence. Those that don’t will be left responding to outcomes they no longer fully control.