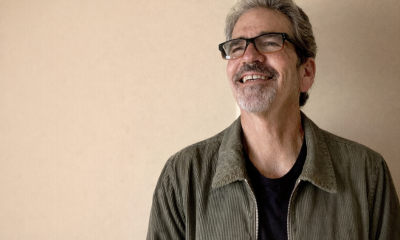

Wannie Park, Founder and CEO of PADO AI – Interview Series

Wannie Park, Founder and CEO of PADO AI, is a veteran technology and energy executive with more than two decades of experience building companies at the intersection of software, clean energy, and connected infrastructure. Prior to launching PADO AI, he held senior leadership roles including SVP of Partnerships and Corporate Development at Bidgely, CEO of Zen Ecosystems, and SVP of Corporate and Business Development at Inspire. Earlier in his career he worked in corporate development and strategic investment roles at companies such as Belkin and Intel Capital, helping drive innovation in emerging technologies, IoT, and energy management.

PADO AI is an energy orchestration software company focused on helping AI data centers and large facilities manage power consumption more efficiently. Its platform uses AI to analyze energy demand in real time, forecast loads, and optimize the use of electricity, cooling, and distributed energy resources. By improving how energy infrastructure is utilized, the company aims to help operators increase computing capacity, lower operational costs, and support more sustainable energy usage.

You’ve built your career at the intersection of energy innovation, corporate development, and AI—from Intel Capital and Belkin to leading clean energy initiatives at CEIVA, Inspire, Zen Ecosystems, and LG NOVA before founding PADO AI. What inspired you to launch PADO AI, and how did your prior experience shape your vision for AI-driven energy orchestration in data centers?

Throughout my career I’ve experienced the hype, bust, and recovery of some interesting technology cycles. These include the bursting of the internet bubble in 2000, the growth of networking that delivered IoT at scale, and the modernization of the North American electric grid in the late 2000s. For me, these three market-moving technology shifts have culminated in the massive opportunity that is the current ecosystem around AI data centers, which is why PADO was launched: an AI-powered software solution born out of the convergence of power, compute, and cloud.

TotalEnergies recently announced the use of Power Purchase Agreements (PPAs) to supply renewable electricity to a Google data center. How do you view this broader shift toward long-term renewable energy contracts for hyperscale infrastructure?

I view this as a great win for renewables. However, I wouldn’t categorize it as a broader shift towards renewables. Instead, it’s really more of a reflection of access to power and time to power. In this case, the numbers penciled out. For every announcement like this, you’ll probably see 10 that are gas-powered and behind the meter.

As AI workloads rapidly increase power demand, are you seeing a fundamental shift in how data center operators approach renewable energy integration?

What I am seeing is a more concerted effort around deploying energy storage systems aligned with more efficient and sustainable cooling. This balances some of the intermittency issues around renewables. Building and deploying sustainable storage strategies allows for increased renewable integration while allowing data centers to maintain uptime.

What are the biggest structural or operational barriers legacy data centers face when attempting to fully integrate renewable energy sources like solar into existing systems?

The primary barriers are infrastructure complexity and a lack of real-time telemetry. Legacy systems were built for constant, predictable loads and often lack the software layer needed to manage the intermittency of solar without risking uptime. And I would add, building on my answer to the previous question, that a battery energy storage system (BESS) would be a force multiplier when it comes to making the decision to integrate renewables like solar.

From your experience advising operators and utilities, why do many data centers struggle to balance strict reliability requirements with decarbonization goals?

From the data center perspective, reliability and decarbonization are often viewed as a zero-sum game: to get one, you sacrifice the other. Data centers naturally prioritize “five-nines” reliability above all else, so decarbonization goals are often deprioritized.

Energy orchestration platforms powered by AI and machine learning are gaining traction. How does real-time orchestration change the economics and reliability of distributed energy resources in mission-critical environments?

Data centers operate under an existing power envelope with fixed computing capacity.

Orchestration turns energy from a fixed cost into a dynamic asset that yields more productivity, whether that’s measured in revenue, token production, or something else. When you align orchestration with different distributed energy resources (DERs), you can multiply your impact, be it decarbonization, revenue, or token production.

How can orchestration software be layered onto existing infrastructure without requiring major capital-intensive rebuilds?

Orchestration software can and should be designed as an intelligent software layer that integrates via API with existing systems, whether that’s a building management system (BMS), a data center infrastructure management (DCIM) system, or DERs. This minimizes the need for major rebuilds.

As operators weigh reliability, cost, and decarbonization, what should be prioritized first—and what trade-offs are often misunderstood?

Today, an operator’s top priority is reliability. And if you double-click on reliability, it means “five-nines” uptime, which in turn means firm, reliable power. Given the massive multiple between energy costs and the value AI factories generate from that energy, cost savings aren’t a major concern. You see this with utilities trying to introduce flexibility programs into the data center market without finding many takers. Drilling down even further beyond cost, decarbonization, unless mandated, falls to the bottom of the priority list.
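To put the “five-nines” target in concrete terms, here is a minimal sketch of the arithmetic, assuming a 365-day year. The function name `allowed_downtime_minutes` is illustrative and not something taken from PADO AI's platform.

```python
def allowed_downtime_minutes(nines: int, days_per_year: float = 365.0) -> float:
    """Maximum yearly downtime, in minutes, for an availability of N nines.

    Assumption: availability is 1 - 10**(-nines), so five nines is 99.999%.
    """
    unavailability = 10.0 ** (-nines)
    minutes_per_year = days_per_year * 24 * 60
    return minutes_per_year * unavailability


if __name__ == "__main__":
    for n in (3, 4, 5):
        print(f"{n} nines: {allowed_downtime_minutes(n):.2f} minutes of downtime per year")
```

At five nines the budget works out to roughly 5.3 minutes of downtime per year, which helps explain why operators are reluctant to accept anything that adds risk to their power supply.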

What metrics should data center operators track if they want to meaningfully improve renewable utilization while maintaining uptime?

At a high level, operators should track and measure Scope 1/2/3 emissions at the site level to establish a baseline. Alongside the standard PUE (Power Usage Effectiveness), operators should track Carbon Intensity per Workload and the Renewable Utilization Factor (RUF). Finally, data centers should track stranded power, which is the amount of power capacity that is paid for but goes unused due to inefficient load management.
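The metrics named above could be computed along the following lines. This is only a sketch under stated assumptions: PUE uses its standard definition (total facility energy divided by IT equipment energy), while the formulas for Carbon Intensity per Workload, RUF, and stranded power are plausible readings of the terms rather than definitions given in the interview. The `SiteReading` type and all field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SiteReading:
    """Hypothetical per-period measurements for one site."""
    facility_kwh: float       # total energy drawn by the facility
    it_kwh: float             # energy delivered to IT equipment
    renewable_kwh: float      # portion of facility energy from renewables
    emissions_kg_co2e: float  # emissions attributed to the period
    workload_units: float     # e.g. jobs or tokens; the unit is an assumption
    contracted_kw: float      # power capacity paid for
    peak_kw: float            # highest power actually drawn


def pue(r: SiteReading) -> float:
    # Standard definition: total facility energy / IT equipment energy.
    return r.facility_kwh / r.it_kwh


def renewable_utilization_factor(r: SiteReading) -> float:
    # Assumed definition: share of consumed energy that was renewable.
    return r.renewable_kwh / r.facility_kwh


def carbon_intensity_per_workload(r: SiteReading) -> float:
    # Assumed definition: kg CO2e emitted per unit of work completed.
    return r.emissions_kg_co2e / r.workload_units


def stranded_power_kw(r: SiteReading) -> float:
    # Assumed definition: contracted capacity that was never drawn.
    return max(r.contracted_kw - r.peak_kw, 0.0)


if __name__ == "__main__":
    reading = SiteReading(
        facility_kwh=1500.0, it_kwh=1000.0, renewable_kwh=600.0,
        emissions_kg_co2e=450.0, workload_units=900.0,
        contracted_kw=100.0, peak_kw=80.0,
    )
    print("PUE:", pue(reading))
    print("RUF:", renewable_utilization_factor(reading))
    print("kg CO2e per workload unit:", carbon_intensity_per_workload(reading))
    print("Stranded power (kW):", stranded_power_kw(reading))
```

Tracking these values per reporting period against the Scope 1/2/3 baseline would show whether renewable utilization is improving without the operator giving up capacity headroom.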

Looking ahead, how do you see the relationship between utilities, data center operators, and AI-driven energy platforms evolving over the next five years?

I see the relationship splitting into two camps. On one end, I see these three stakeholders moving towards a collaborative grid, where data centers become grid-responsive assets that can shed or shift load to stabilize the grid. AI-driven platforms will be the glue that allows these two massive industries to communicate and coordinate in real time. On the other end, I see many data centers abandoning the grid, generating their own power, and operating fully behind the meter. Given the cost and overall investment in these types of projects, it’s unlikely they would return to the grid.

Thank you for the great interview. Readers who wish to learn more should visit PADO AI.