Anderson's Angle

Virtual Try-on of New Clothes Through AI

An AI model can now turn a single photo and clothing images into a moving video of a person wearing new outfits, avoiding the glitches common in older, two-step systems.

The ‘virtual try-on’ (VTON) category in computer vision research is among the most prolific pursuits in the literature – mainly because, as can be gleaned from the frequent industry/academic collaborations published every year, it receives significant funding from the well-heeled fashion industry:

From the paper ‘Image-Based Virtual Try-On: A Survey’, examples of person representation types, and some of the filtering and refining stages that even basic images must go through for a virtual try-on (VTON). Source

There are many variations on the aim, such as extracting clothes from images of people, and accommodating the fuller figure when necessary. Some image-based systems have been commercially implemented, at platforms such as veesual.ai, wanna.fashion, and fashn.ai.

For video, Google Labs’ experimental Doppl app offered this functionality, launching last summer:

Please click to play if video is not autoplaying. Excerpts from the abandoned Google Doppl video try-on project. Source

However, Doppl is being shut down in April 2026 after a lukewarm reception, with users now referred to the company’s image-only try-on service†:

Google’s image-only try-on program, to which users are now referred from the company’s abandoned Doppl platform. Source

Though there are a small number of platforms that offer virtual try-on with video, none of them seem to be affiliated with an actual outlet; and they are all ‘bleeding edge’, marginal (and often ‘questionable’) token-touting products.

While there are a number of interesting forays from the research sector, they are traditionally complex architectures that are difficult to implement for low latency and high quality:

Please click to play if video is not autoplaying. From the 2024 Fashion-VDM project, an example of ‘headless’ clothes transference. Source

The truth is that the task of conforming clothes to a real person, without distorting either the clothes or the person, all the while maintaining some kind of useful demonstrative motion (which accurately shows the back of the product when the person turns their back), is a formidable challenge for the current state of the art.

Vanast

It’s a challenge that a new paper from Korea is attempting to rise to, by using a novel and entirely integrated solution to parsing clothes + person + movement:

Please click to play if video is not autoplaying. Examples from the supplementary materials site for the Vanast project. Source

The new system, titled Vanast, leverages a custom-made dataset featuring the incorporation and orchestration of all three factors necessary to accomplish the task: the clothes; the person; and the movement:

Click to play. More examples from the Vanast project site.

The system leverages frameworks as diverse as Flux, Qwen, and ChatGPT, to generate a ‘triplet’ dataset capable of informing an end-to-end architecture:

From the new paper, examples of the dataset data points used for generation and training. Source

The new paper is titled Vanast: Virtual Try-On with Human Image Animation via Synthetic Triplet Supervision, and comes from four researchers at Seoul National University. There is also a video-laden project site.

Method

The authors’ stated objective in the work is to coalesce the three aforementioned facets in a single-stage framework – not only because the process would be discrete, but also because it gives the various facets more opportunity to intertwine and interact during training, with a view towards a more cohesive generation:

Vanast combines a single human photo, separate clothing images, and a motion reference to generate a moving sequence in which the same person wears the new outfit, with pose-guidance ensuring consistent movement, while identity and garment details are preserved across frames.

To achieve this, the system takes images of the target clothing items; a photo of the person wearing different clothes; a motion reference video defining how the person should move; and a text prompt describing the action and setting; and produces a full video sequence in which that same person appears to wear the new outfit, while following the imposed motion, with each frame kept visually consistent over time.

Rather than separating dressing and animation into different stages – which has been the approach in most similar prior works – Vanast handles clothing, identity, and movement together in a single process, allowing these elements to interact during generation, and reducing the kinds of mismatches and instability evidenced in earlier methods.

Dataset

Training for the project is based on paired examples of a person image, the corresponding clothing items, and a video of that person moving while wearing those clothes, with motion extracted using a previous architecture, to provide stable pose-guidance across frames.
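The shape of one such training example can be sketched as a small data structure. This is only an illustration: the class name `TryOnTriplet`, its field names, and the use of file paths are assumptions made for this sketch, and do not come from the paper.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TryOnTriplet:
    """Hypothetical container for one training example (names are illustrative)."""

    person_image: str            # path to the person image
    garment_images: List[str]    # paths to the corresponding clothing items
    target_video: str            # path to the video of that person in motion
    # Per-frame pose maps extracted from target_video by a separate pose model.
    pose_sequence: List[str] = field(default_factory=list)

    def is_complete(self) -> bool:
        """True only if every component needed for training is present."""
        return bool(
            self.person_image
            and self.garment_images
            and self.target_video
            and self.pose_sequence
        )


sample = TryOnTriplet(
    person_image="person.png",
    garment_images=["top.png", "skirt.png"],
    target_video="clip.mp4",
    pose_sequence=["pose_000.png", "pose_001.png"],
)
print(sample.is_complete())
```

A check such as `is_complete` is the kind of gate that would discard scraped clips lacking one of the three components, which is the gap that the synthetic data is intended to fill.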

In the absence of a publicly-available dataset fulfilling the project’s requirements, data was scraped from (unspecified) online shopping platforms, providing a cache of videos with diverse clothing. However, the task required videos of the same person wearing multiple outfits, which is a rare find in wild data, and which necessitated the creation of synthetic data.

The three-stage process involved selecting suitable candidate frames from the scraped videos, handled via the Qwen2.5-VL Vision-Language Model (VLM), with appropriate cropping and evaluation of suitability (i.e., no occlusions, subject in correct position, etc.); and creating apposite inpainting masks to isolate the affected areas – which (in line with the prior work PERSE) is handled by the now-venerable SDXL diffusion model.

Overview of the Vanast pipeline, where a human image, target garment images, and a motion guidance video are encoded and processed in a unified video diffusion model. The system generates an animation that preserves identity, follows the pose sequence, and applies the target garments, while synthetic triplet generation supports training, and a dual-module design separates animation from garment transfer, to maintain consistency.

In the third stage, Qwen pulls duty again in order to classify images by gender, and the popular Flux image diffusion framework is then used to create alterations to the clothing in an image (since Flux is capable of coalescing multiple input elements). The inpainting text prompts were curated by ChatGPT (version unspecified).

To further increase pose and background diversity, a pipeline was introduced to construct training triplets from in-the-wild videos, using the HumanVid dataset. The same process was used to generate the identity-preserving human image.

Since no standalone garment image existed in these videos, garment images were synthesized directly from the footage. Frames were sampled from each video, and Qwen used to score them for frontal visibility, before selecting the most suitable candidate, based on full-body visibility, image clarity, minimal occlusion, lighting quality, and overall composition.
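The sample-score-select step described above can be sketched roughly as follows. The `scorer` callable stands in for the vision-language model, and the even frame spacing, the criterion keys, and the unweighted sum are all assumptions made for illustration; the paper does not specify how the criteria are combined.

```python
from typing import Callable, Dict, List, Sequence

# Criterion names are illustrative labels for the factors listed in the text.
CRITERIA = ("full_body", "clarity", "occlusion_free", "lighting", "composition")


def sample_indices(num_frames: int, num_samples: int) -> List[int]:
    """Return evenly spaced frame indices across a clip."""
    if num_frames <= 0 or num_samples <= 0:
        return []
    num_samples = min(num_samples, num_frames)
    step = num_frames / num_samples
    return [int(i * step) for i in range(num_samples)]


def select_best_frame(
    frames: Sequence[object],
    scorer: Callable[[object], Dict[str, float]],
    num_samples: int = 8,
) -> int:
    """Score the sampled frames and return the index of the best one."""
    candidates = sample_indices(len(frames), num_samples)
    if not candidates:
        raise ValueError("no frames to score")

    def total(index: int) -> float:
        scores = scorer(frames[index])
        # Assumption: an unweighted sum over all criteria.
        return sum(scores[name] for name in CRITERIA)

    return max(candidates, key=total)


def fake_scorer(frame: object) -> Dict[str, float]:
    """Dummy stand-in for a VLM: frame 8 scores highest on every criterion."""
    value = 1.0 if frame == 8 else 0.0
    return {name: value for name in CRITERIA}


print(select_best_frame(list(range(16)), fake_scorer))
```

In a real pipeline the scorer would wrap a call to a model such as Qwen2.5-VL and parse its response into numeric scores; that part is deliberately left out here.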

An upper-clothing region was then extracted using SegFormer, and the background removed to isolate the garment.

To avoid positional bias, the garment region was randomly shifted within its bounding box, and Qwen used again to filter out unreliable segmentations. This process produced synthetic garment images paired with motion and identity, allowing large-scale triplet construction from unstructured video data, while improving robustness across varied real-world conditions.
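One possible reading of the random-shift step is a translation of the garment region to a random position that still fits inside a fixed canvas. The sketch below follows that reading only; the function name `random_shift`, the `(left, top, right, bottom)` box convention, and the uniform sampling are assumptions, not details taken from the paper.

```python
import random
from typing import Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom), in pixels


def random_shift(box: Box, canvas_size: Tuple[int, int], rng: random.Random) -> Box:
    """Translate a box to a random position that stays fully inside the canvas."""
    left, top, right, bottom = box
    canvas_w, canvas_h = canvas_size
    box_w, box_h = right - left, bottom - top
    if box_w > canvas_w or box_h > canvas_h:
        raise ValueError("box does not fit inside the canvas")
    # randint is inclusive at both ends, so the box can touch the far edge.
    new_left = rng.randint(0, canvas_w - box_w)
    new_top = rng.randint(0, canvas_h - box_h)
    return (new_left, new_top, new_left + box_w, new_top + box_h)


rng = random.Random(0)  # seeded so that the example is repeatable
shifted = random_shift((10, 20, 40, 60), canvas_size=(100, 80), rng=rng)
print(shifted)
```

The intent of such a step is that the model cannot learn to expect the garment at a fixed location in the conditioning image.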

Architecture

A dual-module architecture was introduced to address the slow convergence and weak control balance seen in those prior methods that had sought to fuse all conditions. The approach leveraged the text-to-video diffusion transformer from Wan, and drew also on the VACE project (see below).

The model was divided into a Human Animation Module (HAM), which handled motion and identity from human and pose inputs; and a Garment Transfer Module (GTM), which handled clothing from garment images. Both shared access to the backbone, while integrating features in a distributed, cascaded manner, to improve conditioning.

Training was performed by freezing the backbone and optimizing only HAM and GTM parameters, with their contributions balanced during feature integration. Inputs from the synthetic triplet dataset were converted into latent representations using Wan’s variational autoencoder (VAE).

Motion-aware context was constructed by combining human and pose information across time, while garment features were processed separately, and aligned through projection into token embeddings.

The model was also extended to support garment interpolation. Here, representations from two garments were combined to generate smooth transitions, allowing consistent and coherent blending between clothing items, without additional optimization.
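The article does not say how the two garment representations are combined. As a minimal sketch, assuming a plain linear blend between two embedding vectors (the function names and the toy two-dimensional vectors are illustrative only):

```python
from typing import List


def lerp(a: List[float], b: List[float], t: float) -> List[float]:
    """Linearly blend two equal-length vectors; t=0 gives a, t=1 gives b."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def interpolation_path(a: List[float], b: List[float], steps: int) -> List[List[float]]:
    """Return `steps` blended vectors running from a to b inclusive."""
    if steps < 2:
        raise ValueError("need at least two steps")
    return [lerp(a, b, i / (steps - 1)) for i in range(steps)]


garment_a = [0.0, 2.0]  # toy stand-in for the first garment's features
garment_b = [1.0, 0.0]  # toy stand-in for the second garment's features
for vector in interpolation_path(garment_a, garment_b, steps=3):
    print(vector)
```

Each blended vector would then condition generation in place of a single garment's features, so no further training is involved in this reading.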

Data and Tests

The model was trained on 9,135 videos, with lengths varying from three to ten seconds, sourced from the aforementioned ‘shopping mall sites’; the authors’ own generated dataset; and the HumanVid dataset.

From these, two evaluation datasets were established: the ‘Internet dataset’, featuring videos and product images from malls; and the official test split of Alibaba’s ViViD dataset.

Since the ViViD data lacks faces (a very common trait in the virtual try-on literature; see the ‘headless’ video earlier in this article for an example), these were added via Flux outpainting.

Metrics used were L1 loss; Peak Signal-to-Noise Ratio (PSNR); Structural Similarity Index (SSIM); Learned Perceptual Image Patch Similarity (LPIPS); Fréchet Inception Distance (FID); and Fréchet Video Distance†† (FVD).
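The two simplest of these metrics can be written out directly from their standard definitions. The sketch below assumes pixel values normalized to the range 0 to 1 and images flattened to plain lists; it is not the authors' evaluation code, and the remaining metrics need pretrained networks or windowed statistics that are out of scope here.

```python
import math
from typing import Sequence


def l1_error(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean absolute difference between two equal-length pixel sequences."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and equal in length")
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


def psnr(pred: Sequence[float], target: Sequence[float], max_value: float = 1.0) -> float:
    """Peak Signal-to-Noise Ratio in decibels; higher means closer to the target."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and equal in length")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical inputs
    return 10.0 * math.log10(max_value ** 2 / mse)


generated = [0.0, 1.0]
reference = [0.5, 0.5]
print(l1_error(generated, reference))
print(round(psnr(generated, reference), 2))
```

Lower is better for L1, LPIPS, FID and FVD, while higher is better for PSNR and SSIM.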

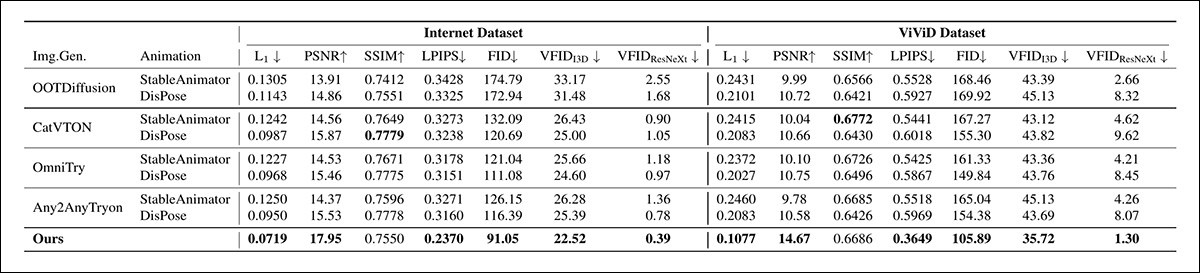

Systems tested for garment transfer were OOTDiffusion; CatVTON; OmniTry; and Any2AnyTryon. Subject-to-image generation models trialed were VisualCloze; MOSAIC; and ByteDance’s UNO.

For the second-stage human image animation, the StableAnimator and DisPose frameworks were used.

In a more limited context (because it does not directly support the objective), VACE was also tested, with some effort to balance up the missing functionality:

Quantitative comparison against combinations of subject-to-image and animation models on the Internet and ViViD datasets, where the proposed method achieved the best performance across all reported metrics. Bold values indicate the top score in each column.

Of the initial results shown above, the authors state:

‘[Our] model achieves the best performance across all metrics when compared with combinations of subject-to-image generation models and animation models.

‘Qualitative results [shown below] further confirm that our approach produces the most accurate pose following and garment transfer, while preserving identity more faithfully than all subject-to-image-based baselines.’

Qualitative comparison on Internet and ViViD datasets against subject-to-image and animation baselines, where the proposed method, the authors contend, offers more accurate pose alignment and garment transfer, while preserving identity more consistently than VisualCloze, MOSAIC, UNO, and VACE.

For the second category of tests, where combinations of image virtual try-on and animation models were tested, the new work again achieved the best overall results:

Quantitative comparison with combinations of image-based virtual try-on and animation models on the Internet and ViViD datasets. The proposed method achieved the best overall performance across metrics, with SSIM remaining comparable to the strongest baseline. Bold values denote the highest score.

The authors add:

‘Qualitative comparisons [shown below] demonstrate that our results most closely resemble the ground truth among all image virtual try-on–based baselines.’

Qualitative tests using baselines created by combining VTON models with animation models.

Conclusion

Though the Vanast project achieves a discrete end-to-end solution, the paper’s lack of detail around training and inference resource requirements indicates that this might not be the most agile or nimble solution. In truth, the challenge itself is egregiously difficult to achieve even in a non-optimized system – and how much more so in a commercial deployment that would need low latency and affordability/ROI at scale?

Virtual try-on is one of a number of ‘moonshot’ AI goals where the current state-of-the-art, as diffused through various headlines and cherry-picked results, belies the actual difficulty of the task, which perhaps will eventually be resolved by later and lighter technologies than Transformers.

† Available in the USA, geo-blocked in many other regions, if not all.

†† The authors refer to ‘VFID’, but link only to the ViViD paper, which does not justify the reference, as far as I can tell, with limited time to track it down. I have assumed that they actually meant Fréchet Video Distance (FVD), and were likewise short on time. Please contact me for amendments, as necessary.

First published Wednesday, April 8, 2026