Thought Leaders

A Prompt Injection Attack One Cannot Prevent: Wishful Thinking or Real Concern?

In this article, I would like to engage the reader in a thought experiment. I am going to argue that in the not-so-distant future, a certain type of prompt injection attack will be effectively unpreventable. My argument is going to be more speculative than concrete, so I am not trying to convince you of anything. Instead, I invite you to explore these thoughts. Before I begin, as any compelling writer would do, I want to discuss chess and chess engines.

Superhuman Chess Engines and an Assertion About Human Experience

One of the nicer elements of chess that is lacking in other disciplines is the ability to objectively measure the quality or strength of a player. The ELO rating system used for this purpose has its flaws, but it provides a very good rough estimate that holds over time. A rating of 2700 or above is commonly recognized as world-class (top 30 in the world). The world’s best player is just below 2850. No human has ever reached a rating of 2900.

In the mid-90’s, we saw the first AI engine (Deep Blue) to reach a world-class level. The practical implication of this milestone was the wide adoption of engines by players of all levels for practice and analysis. In fact, engine usage became essential for the world’s top players. However, for several generations of these world-class engines, reviewing their recommended moves (i.e., output) was imperative. There was even a special format created called “advanced chess” in which humans competed with an engine by their side, and the human + machine combination was considered superior to the machine alone.

It took about 20 years, and some critical progress in Deep Learning and Reinforcement Learning for chess engines to reach superhuman level (roughly 3200 ELO). But once that stratosphere was breached around 2017, something very surprising happened. Well, actually, two things happened. The first thing was completely expected; engines became the de facto source of “ground truth” in 99% of all positions. In practice, that meant we entered the “era of blind trust” in the engine. These days, it is virtually impossible for a human to propose a significantly better move than the engine. As entertaining as “advanced chess” was, it is now a pointless exercise; humans would contribute almost nothing to the game. But the second thing was shocking to most chess players. These superhuman neural (i.e., deep neural network) engines would sometimes play in a style that is best described as “romantic”. In other words, they would make moves whose value could only be appreciated many, many moves later, far beyond what any human or world-class engines could calculate. It very much felt as if the engines developed a “feeling” or an “intuition” for certain positions. Except this intuition is not something a human could ever grasp or imitate.

Stated differently, a superhuman neural engine can make moves that are beyond the cognitive horizon of a human. This is the critical point here; the issue is not that of explainability. Rather, a human simply cannot comprehend why an engine recommends a move without playing out the position and observing the outcome many moves later, i.e., rolling out the entire trajectory of possible game sequences. As a result, we have an insurmountable gap in capability. It is objectively optimal to accept engine output without review. I can summarize my assertion as follows:

Chess is an existence-proof that superhuman AI would effectively operate autonomously in some domains. Enabling the AI system to make decisions without human review would be the optimal way to deploy such a system.

Since my assertion may strike one as obvious or unremarkable, I want to highlight a couple of nuances. Suppose we have an AI system that demonstrates superhuman level at a complex, critical, task with concrete, irreversible, consequences. There are two implications to my claim:

- The system would be deployed to make decisions for the task without human review, despite the inherent risk

- Insight gained from monitoring such a system would not prevent a detrimental decision; damage would have already been done

System output review and monitoring are precisely the last two layers of defense against prompt injection. Therefore, our hypothetical prompt injection attack could bypass these layers simply by targeting the appropriate system.

This is a very realistic scenario in my mind. A superhuman AI system in a specific domain is not AGI, and most experts believe that such systems are right around the corner. We also did not have to suppose that the decisions are time-sensitive, just that the task is complex enough to make human review intractable.

Of course, we have only bypassed two layers of defense so far, and luckily for us, several others have been developed. To address the rest, lets delve into the core elements that make prompt injection hard to defend against.

What is Prompt Injection?

Prompt injection is a manipulation of a Large Language Model (LLM) through crafted inputs, causing the LLM to unknowingly execute the attacker’s intentions. It can be regarded as social engineering for AI. Crucially, it is not a conventional software bug. A prompt injection attack exploits an inherent LLM vulnerability. Since LLMs process both system and user prompts as text sequences, they cannot intrinsically distinguish between legitimate and harmful instructions. The vulnerability is therefore effectively by-design, rather than by-accident.

Prompt Injection Techniques

Prompt injection is generally recognized as the #1 risk for LLM applications. There are several reasons why this is the case. The most obvious factor is the variety of injection techniques that have been developed. Roughly grouping them into four categories, the most well-known techniques include:

- Syntax-based: using special characters, emojis, or alternate language

- Indirect: using external sources (fetch from site), encoding (base 64), or multimodal reference (text in image)

- “Let’s Pretend”: introducing a manipulative style by e.g. roleplaying, hypothetical, emotional appeal, ethical framing, and format shifting

- Blunt: explicit attempt to “strong-arm” model instructions by brute-force, reinforcement, or negative prompt

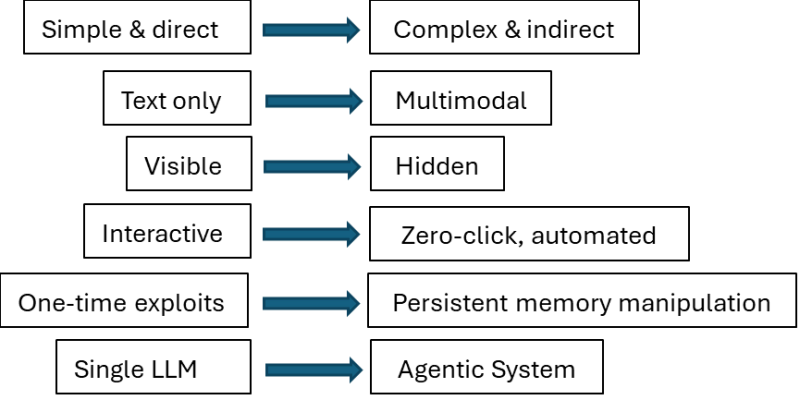

Variety alone provides a challenge to application developers, but these attacks have also continued to evolve rapidly. The left side of the diagram below purports to describe state-of-the-art for early 2023, while the right side reflects the nature of attacks today.

LLM app developers must also take the standard usability vs safety tradeoff into account. They could certainly introduce every appropriate defense layer and design pattern, but at what cost? Defense layers add significant latency and introduce False Positives (FPs) – incorrectly flagging safe prompts as malicious – both factors have a negative impact on user experience. As a result, some level of compromise is inevitable in practice, and there is no “silver bullet” solution.

However, in this article, I am not really interested in this never-ending cat and mouse game. Rather, I am probing whether an attack can be unpreventable in principle. From the developer/defender’s perspective, there is only one key insight:

Separation of instructions from data in the prompt is fundamental to address prompt injection risk

We can assume that tradeoffs are not a factor, and any defense layer or technique can be used. Under this (strong) assumption, is it possible to concoct a scenario in which instruction-data separation in a prompt is effectively impossible?

The DNA Analogy

Once the issue was framed in terms of instruction-data separation, my initial thought was to use biology as an analogy.

Consider a cell and a stretch of DNA (known as a gene). The gene provides instructions for building a protein through transcription and translation. It also encodes the information (data) that impacts the structure and function of the protein. As such, the gene simultaneously dictates what to build, and how to build it, or so I reasoned. However, this is simply false since a gene does not decide how to interpret itself. There is no equivalent of instruction-following in biology at the gene level. The “how” is fully externalized to the cellular machinery.

Therefore, even if I cannot shake the feeling that future generations of LLMs – or more accurately, the systems they evolve into – would resemble biological machines to a much greater extent, the proposed analogy just doesn’t work. We cannot substitute a cell for an LLM and a gene for a prompt and then perform an injection into the gene that would eventually cause a “damaged” protein to be built. It seems more productive to stick with natural language and tasks that require semantic interpretation.

Peeling off the Layers of Defense

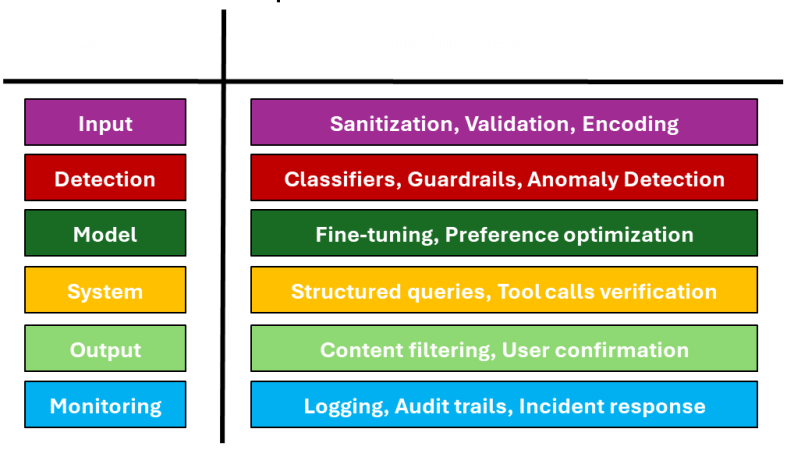

It should come as no surprise that multi-layered defense strategies are considered to be more effective in stopping prompt injection attacks. The image below shows the most common defense layers in order, and the associated techniques used in each layer.

We have already discussed the last two layers (output, monitoring) above, so let us focus on the first four.

Considering the input layer, it is reasonable to assume that sanitization or validation of the prompt would be quite successful in detecting indirect attacks. However, if the injection is delivered directly, and as suggested above, by relying on semantic interpretation, perhaps sanitization is irrelevant (nothing to sanitize), and validation is impossible by default since the computation must be completed to identify the issue.

There are essentially no limits to the guardrails you could construct in the detection layer. In fact, you could even use a dedicated LLM for injection detection. But once again, it is going to be difficult for a classifier or an anomaly detector to flag a prompt as suspicious when the poison is cleverly hidden within the semantics.

The model layer can be quite effective when the scope of tasks is narrow, and fine-tuning is feasible. A similar argument could be made for the system layer when the usage of tools is predictable. However, at least intuitively, neither would raise an alarm if the injection throws off the interpreter.

House of Cards

My intention when I started writing this article was to describe an “unpreventable” prompt injection attack in broad strokes. Perhaps I ended up following a “non-constructive” approach by poking holes at existing layers of defense. Defensive techniques continue to evolve rapidly, and so does the attack surface. This game is not showing signs of ending soon. However, I also believe that we are not going to be the ones who are playing it for much longer. I would guess that the successful prompt injection in the future would still be in natural language, just a language that humans cannot understand; and I would guess that it would be auto-discovered by a system either built for that specific purpose or maybe accidentally after tackling a related task, such as searching for semantic ambiguity in some representation space.

There is something unpleasant in admitting that we are losing control and yet feeling that this is the most rational thing to do. You can think of it as the “intuitive proof” that some attacks would be unstoppable. And if that leaves you uneasy, you would be pleased to know that GPT 5.2 found this argument to be “not controversial or novel” and advocated that I don’t “belabor the point” and cut down 40% of the article.