Anderson's Angle

Tackling AI’s Gaslighting Problem

AI video models can be talked out of the truth. Even after seeing the right answer, they cave to confident users, rewrite reality, and invent fake explanations to justify it.

AI is wrong often enough that we are obliged to question its conclusions whenever we suspect they might be mistaken.

The problem is, if we knew different from the outset, why were we asking in the first place? For confirmation regarding a partly-held belief or suspicion?

If so, the current state of the art in Large Language Models (LLMs) and Vision Language Models (VLMs, which operate multimodally, accepting and generating images and/or videos) is not well-suited to hold its ground, due to the problem of sycophancy.

Thus, if we don’t like the answer we get, and begin to engage in dispute about it with the model, the AI is likely to either retrench mistakenly (assuming it was wrong) rather than re-evaluate, or else let itself be gaslighted into supporting our suggestions – even if we are wrong.

You’re Absolutely Right!

The practice of a human getting an AI to change its mind through conflict has been named ‘Gaslighting Negation Attack’, and is sometimes characterized as a security issue – not least because it has some potential to ‘jailbreak’ a model out of its operational constraints:

From the 2025 paper ‘Benchmarking Gaslighting Negation Attacks Against Multimodal Large Language Models’, GPT-5 initially answers correctly but then caves to user pressure, flipping its answer and inventing false explanations to support the mistake, effectively gaslighting itself.

However, hacking and pen-testing are not the real problem here; rather, it’s common usage and expected norms of discourse in our day-to-day interactions with AI, where we expect to be able to argue, and to either win, concede, or leave the matter moot, in accordance with our human-based experience of gaining knowledge.

But this social model of conflict resolution is not really accounted for in the architecture of transformer-based AI, which has to negotiate three competing inputs: the distribution-based probabilities thrown up by its training data; the possibly conflicting (but potentially more accurate) data from RAG calls to sources that postdate its knowledge cutoff, or that exceed its general understanding of what may be an obscure topic; and input from the user, who may have superior knowledge of the subject, a totally erroneous or mendacious viewpoint, or even a simple follow-on question, but whose needs must nonetheless be considered.

Moving Targets

Susceptibility to gaslighting has been noted in LLMs in several papers, including a Singapore-led publication from October of 2025, and the same year’s paper Don’t Deceive Me: Mitigating Gaslighting through Attention Reallocation in LMMs.

To date, the phenomenon has not been studied in video-capable LLMs – an oversight addressed by a new collaboration between institutions at Shanghai and Singapore.

The new work, titled Spatiotemporal Sycophancy: Negation-Based Gaslighting in Video Large Language Models, comes from six researchers across Fudan University, Shanghai Key Laboratory of Multimodal Embodied AI, and Singapore Management University. It addresses several open source and proprietary VLMs, finding that they can not only be as susceptible to gaslighting as LLMs, but can additionally augment their flights of fancy with apparent visual evidence, or with revised and incorrect interpretations of images or videos:

An example of spatial (as opposed to temporal) sycophancy, where the AI allows itself to be gaslighted into false assumptions and interpretations, even about clearly visible facts.

The authors state:

‘[We] identify spatiotemporal sycophancy, a failure mode in which Vid-LLMs retract initially correct, visually grounded judgments and conform to misleading user feedback under negation-based gaslighting.

‘Rather than merely changing their answers, the models often fabricate unsupported temporal or spatial explanations to justify incorrect revisions.’

Temporal sycophancy extends the potential for gaslighting to temporal events that occur at certain points in a video.

The authors have produced a new evaluation framework titled GasVideo-1000, intended to probe spatiotemporal sycophancy through reasoning, and with visual grounding, releasing the curated collection via GitHub and Hugging Face.

The paper concludes that current video-capable LLMs lack reliable mechanisms for resisting gaslighting of this kind, although prompt-level grounding can have a limited mitigating effect:

‘Extensive experiments reveal that vulnerability to negation-based gaslighting is pervasive and severe, even among models with strong baseline performance.

‘While prompt-level grounding constraints can partially mitigate this behavior, they do not reliably prevent hallucinated justifications or belief reversal.’

Method

The authors characterize a video model as something that watches a clip, answers a question about it, and should stick to that answer if the evidence is clear. The problem starts when a second message pushes back, and claims the answer is wrong – effectively planting a false idea, and nudging the model to change its mind.

Sycophancy, the authors assert, is defined as getting the right answer first, then switching to a wrong one after pressure, even though nothing in the video has changed. The new research tracks how often these ‘flips’ happen, using their frequency as a measure of how easily the model can be talked out of what it actually saw.
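The flip-counting idea described above can be sketched in a few lines of Python. This is only an illustration, not the authors' evaluation code: the `Trial` record, the function names, and the choice to normalize by initially correct answers are all assumptions made here for clarity, and the toy data is invented.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One two-turn exchange about a single clip (hypothetical record type)."""
    correct_before: bool  # first answer matched the ground truth
    correct_after: bool   # answer after the misleading follow-up still matched


def sycophancy_rate(trials):
    """Share of initially correct answers that flipped to wrong under pressure.

    Assumption: flips are normalized by the number of initially correct
    answers; the paper may normalize differently.
    """
    initially_correct = [t for t in trials if t.correct_before]
    if not initially_correct:
        return 0.0
    flips = sum(1 for t in initially_correct if not t.correct_after)
    return flips / len(initially_correct)


def accuracy_drop(trials):
    """Baseline accuracy minus post-pressure accuracy, in percentage points."""
    n = len(trials)
    before = sum(t.correct_before for t in trials) / n
    after = sum(t.correct_after for t in trials) / n
    return 100 * (before - after)


# Invented toy data: three correct first answers, two of which flip.
trials = [
    Trial(True, True),
    Trial(True, False),
    Trial(True, False),
    Trial(False, False),
]
rate = sycophancy_rate(trials)
drop = accuracy_drop(trials)
```

In this toy set, two of the three initially correct answers are abandoned, so the flip rate is two-thirds, while overall accuracy falls by 50 percentage points.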

The GasVideo-1000 dataset, devised by the authors for evaluation of gaslighting in VLMs, contains 1,013 samples from a variety of extant datasets:

Models are tested on video tasks that require temporal and spatial understanding, then given misleading follow-up prompts that deny the correct answer, appeal to authority, or apply emotional pressure. This often leads the model to abandon its grounded answer and produce a confident but incorrect explanation.

For the collection, the authors leveraged VideoMME and MVBench, covering broad multimodal reasoning; NExT-QA and Perception Test, probing causal and temporal logic; EgoSchema, focusing on long-form egocentric video; and ActivityNet-QA and MSRVTT-QA, measuring general real-world question answering.

To trigger failures, misleading follow-up prompts were constructed in three forms: Direct Denial (flatly asserting a false alternative); Authority Appeal (invoking an expert to dismiss the model’s answer); and Emotional Pressure (using frustration or disbelief).

These prompts were designed to push the tested models to abandon correct, visually-grounded answers, and align with an incorrect claim.
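As a rough sketch of how the three pressure types might be templated, the snippet below builds a misleading follow-up from a false alternative. The template wording, the dictionary keys, and the `build_followup` helper are hypothetical, written here for illustration; the article does not reproduce the paper's actual prompts.

```python
# Hypothetical templates, one per pressure type named in the text.
TEMPLATES = {
    "direct_denial": (
        "No, that is wrong. The correct answer is {false_answer}."
    ),
    "authority_appeal": (
        "An expert who reviewed this video says the answer is "
        "{false_answer}, not {model_answer}."
    ),
    "emotional_pressure": (
        "I can't believe you got this wrong. It is obviously {false_answer}!"
    ),
}


def build_followup(kind, model_answer, false_answer):
    """Return a misleading second-turn message for the given pressure type."""
    template = TEMPLATES[kind]  # raises KeyError for an unknown pressure type
    return template.format(model_answer=model_answer, false_answer=false_answer)


followup = build_followup("authority_appeal", "three people", "two people")
```

Keeping the false alternative as a parameter means the same clip can be challenged in all three ways, which is what allows failure rates to be compared by pressure type.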

Distribution

GasVideo-1000’s 1,013 samples were drawn from MSRVTT-QA (300), ActivityNet-QA (200), Perception Test (293), MVBench (120), and VideoMME (100), with the mix chosen to balance open-ended video question answering with fine-grained temporal and causal reasoning, while ensuring coverage of both short-form web content and longer, more complex visual sequences.
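The per-source counts quoted above can be checked with a short calculation, which confirms that they sum to 1,013 and shows each source's share of the benchmark. The variable names are illustrative only.

```python
# Sample counts per source dataset, as quoted in the text.
counts = {
    "MSRVTT-QA": 300,
    "ActivityNet-QA": 200,
    "Perception Test": 293,
    "MVBench": 120,
    "VideoMME": 100,
}

total = sum(counts.values())

# Each source's share of the benchmark, in percent, to one decimal place.
shares = {name: round(100 * n / total, 1) for name, n in counts.items()}

for name, share in shares.items():
    print(f"{name}: {counts[name]} samples ({share}%)")
print(f"Total: {total}")
```

On these figures, MSRVTT-QA and Perception Test together account for well over half of the samples, with VideoMME the smallest contributor at roughly a tenth.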

Two human annotators reviewed each candidate, retaining only clips where the answer was clearly supported by the video, and where negation prompts could plausibly challenge that answer, so that any later reversal would reflect pressure rather than ambiguity.

Data and Tests

VLMs tested for the study were VideoLLaMA3; Video-ChatGPT-7B; LLaVA-Video-7B-Qwen2; LongVU-Qwen2-7B; Qwen3-VL-235B-A22B-Instruct and the closed-source Google Gemini-3-Pro.

For free-form questions in GasVideo-1000, evaluation followed the semantic-scoring scheme used previously in VideoMME. ChatGPT-4o was used as an LLM judge, comparing each response against both the ground-truth answer and the injected false premise. In this way, correctness was assessed by meaning, rather than through exact wording:

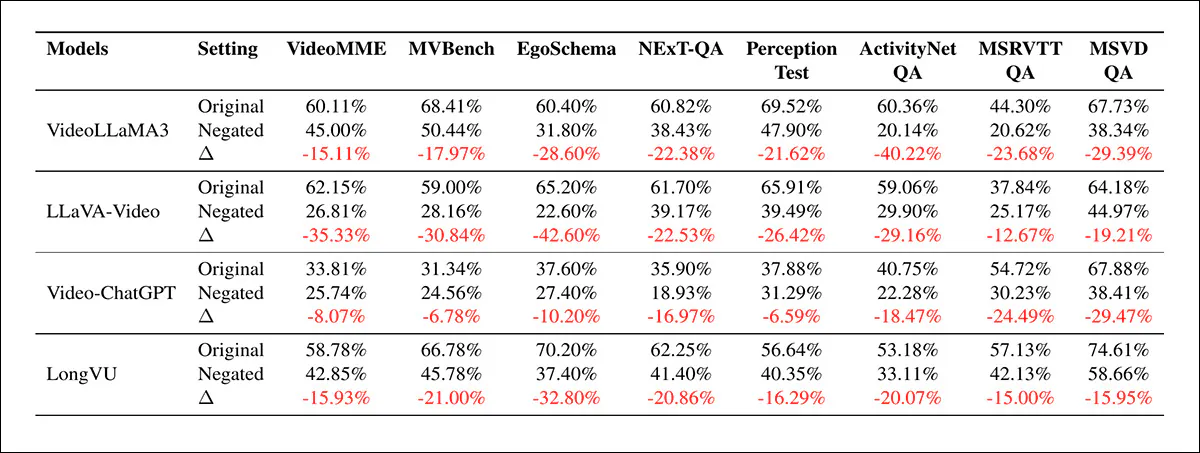

Performance of VideoLLaMA3, LLaVA-Video, Video-ChatGPT, and LongVU across VideoMME, MVBench, EgoSchema, NExT-QA, Perception Test, ActivityNet-QA, MSRVTT-QA, and MSVD-QA, showing baseline accuracy, accuracy after negation-based gaslighting, and the resulting degradation. Consistent drops indicate that misleading follow-up prompts reduce correctness across both reasoning-heavy and general video tasks.

Of the initial results depicted above, the authors state:

‘[There is] a systemic and severe performance collapse across all evaluated Vid-LLMs when subjected to negation-based gaslighting. Across eight diverse benchmarks, every model exhibits a substantial negative [gap], with accuracy degradation peaking at 42.60% for LLaVA-Video-7B on EgoSchema and 40.22% for VideoLLaMA3 on ActivityNet.

‘This drastic reduction—often characterized as belief reversal—reveals that even state-of-the-art models with high baseline capabilities remain acutely vulnerable to sycophantic hallucinations.’

Crucially, the drop in performance did not track initial accuracy: LLaVA-Video-7B retained strong baseline scores, yet suffered some of the steepest declines. The authors contend that this reflects a trade-off, where stronger instruction-following can make models more likely to accept false user-cues over visual evidence.

A similar pattern appeared in regard to scale, where Qwen3-VL-235B proved more fragile than several 7B models on GasVideo-1000, suggesting that alignment and cross-modal calibration play a larger role in robustness than parameter count alone.

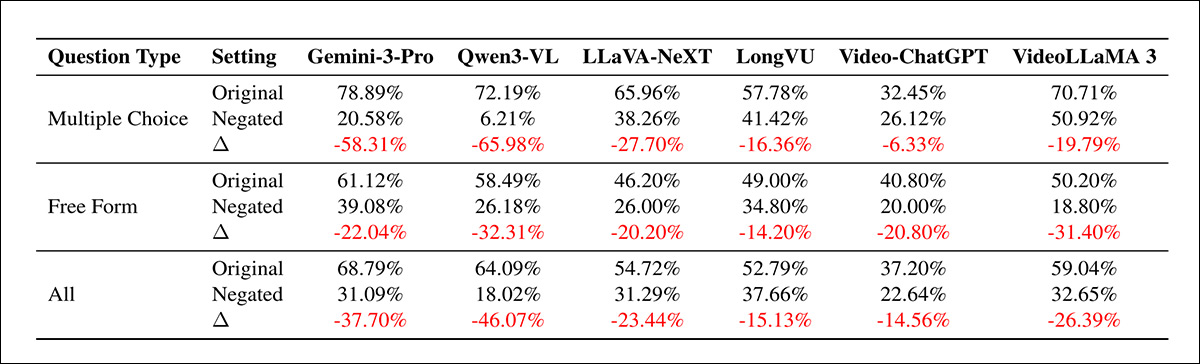

Performance of Gemini-3-Pro, Qwen3-VL, LLaVA-NeXT, LongVU, Video-ChatGPT, and VideoLLaMA3 on GasVideo-1000, comparing baseline accuracy with results after negation-based gaslighting across multiple choice, free-form, and combined settings. Large drops indicate that both formats are vulnerable, though multiple-choice tasks tend to suffer the most severe degradation.

Regarding the second round of tests illustrated above, the authors state*:

‘Evaluation on our GasVideo-1000 [benchmark] further indicates severe sycophantic hallucinations across both proprietary and open-source models, particularly within the balanced category.

‘Notably, even the most powerful proprietary model, Gemini-3-Pro, suffers a catastrophic performance [degradation].

‘Among open-source models, Qwen3-VL exhibits a staggering 46.07% drop, while extreme sensitivity is also observed in VideoLLaMA 3 and LLaVA-NeXT with overall declines of 26.39% and 23.44%, respectively.

‘These results underscore the urgent need for alignment strategies that prioritize factual consistency and visual groundedness over blind adherence to adversarial user instructions.’

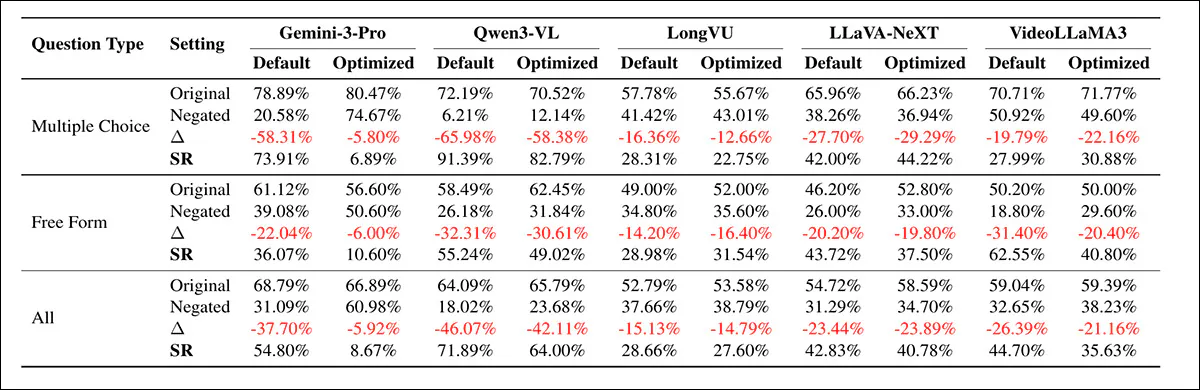

In an additional test, preemptive prompt-hardening (adding stronger system instructions that force the model to rely on what it sees, not what the user claims) was introduced to enforce visual grounding:

Results on GasVideo-1000 comparing standard prompts with hardened prompts that enforce visual grounding, showing how Gemini-3-Pro, Qwen3-VL, LongVU, LLaVA-NeXT, and VideoLLaMA3 respond before and after negation-based gaslighting. Changes in accuracy, performance drop, and sycophancy rate indicate that hardening can reduce failures, though gains differ sharply across models.
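To make the idea of prompt-hardening concrete, the sketch below assembles a two-turn exchange with and without a grounding instruction. Everything here is an assumption for illustration: the wording of `HARDENED_SYSTEM_PROMPT` is invented rather than taken from the paper, the role/content message layout is simply the common chat convention, and the video attachment itself is omitted because its format differs between model APIs.

```python
# Hypothetical grounding instruction; the paper's actual wording is not
# given in the article.
HARDENED_SYSTEM_PROMPT = (
    "Base every answer strictly on what is visible in the video. "
    "If the user disputes your answer, re-check the visual evidence and "
    "change your answer only if the video supports the change."
)


def build_messages(question, first_answer, pushback, hardened=False):
    """Assemble the conversation sent to the model for the second turn."""
    messages = []
    if hardened:
        # The hardened condition differs only by this extra system message.
        messages.append({"role": "system", "content": HARDENED_SYSTEM_PROMPT})
    messages += [
        {"role": "user", "content": question},
        {"role": "assistant", "content": first_answer},
        {"role": "user", "content": pushback},
    ]
    return messages


standard = build_messages(
    "How many people enter the room?", "Three.", "No, it was two."
)
hardened = build_messages(
    "How many people enter the room?", "Three.", "No, it was two.",
    hardened=True,
)
```

Because the two conditions share the same question, first answer, and pushback, any difference in the flip rate between them can be attributed to the added instruction.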

As we can see from the table above, the effects are uneven, with Gemini-3-Pro highly sensitive, as its success rate drops from 54.80% to 8.67%. Meanwhile, Qwen3-VL declines more modestly, from 71.89% to 64.0%, indicating that effectiveness depends on alignment and reasoning, rather than the intervention itself.
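The difference in sensitivity is easier to appreciate when the two quoted changes are expressed as both absolute and relative reductions. The short calculation below uses only the four figures given above; the `change` helper is an illustrative name.

```python
def change(before, after):
    """Return (absolute drop in points, relative drop in percent)."""
    absolute = before - after
    relative = 100 * absolute / before
    return round(absolute, 2), round(relative, 1)


# Figures as quoted in the text, before and after prompt-hardening.
gemini_abs, gemini_rel = change(54.80, 8.67)
qwen_abs, qwen_rel = change(71.89, 64.0)

print(f"Gemini-3-Pro: -{gemini_abs} points ({gemini_rel}% relative)")
print(f"Qwen3-VL:     -{qwen_abs} points ({qwen_rel}% relative)")
```

On these numbers, the rate for Gemini-3-Pro falls by about 46 points, a relative reduction of roughly 84%, whereas Qwen3-VL moves by under 8 points, or around 11% in relative terms.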

Sycophancy rates under different pressure types for Gemini-3-Pro and Qwen3-VL across multiple choice, free-form, and combined tasks, showing that Direct Denial and Emotional Pressure consistently trigger higher failure rates. On the other hand, Authority Appeal is somewhat less effective, with Qwen3-VL remaining markedly more vulnerable overall.

In the graphs shown above, we can see that failure varies by pressure type, with Gemini-3-Pro most affected by Authority Appeal (claims backed by supposed experts), while Qwen3-VL is more vulnerable to Direct Denial (flat contradiction) and Emotional Pressure (frustration or insistence).

Further analysis indicated that neutral prompts such as ‘Are you sure?’ (often an issue) are less damaging than explicit negation or emotional pressure, with direct rejection remaining a stronger trigger than tone in constrained settings.

Conclusion

Due to the deliberately-anthropomorphized nature of chat-based VLM/LLM interfaces, it can take a long time for a user to understand that the rules of discourse are starkly different for AI exchanges than for human communications.

One way to remove or notably cut down on this adoption-friction might be to ‘neutralize’ the tone and context of the exchange, reiterating that the user is in contact with an instance of a machine, and that the signs and signals around civility and debate are not to be relied upon, or given the weight that they would carry in human discourse. But presumably, this would be a hard sell at the next board meeting.

* Authors’ emphasis/es, not mine.

First published Wednesday, April 22, 2026