Quill Raises $6.5M to Build a Sovereign “Chief of AI Staff”

As AI tools multiply across the workplace, a new problem is emerging. Professionals are no longer just doing their jobs. They are managing a growing fleet of AI assistants for writing, research, coding, documentation, and communication. The tools rarely share context, and they rarely understand how someone actually works.

Quill believes the missing ingredient is conversation.

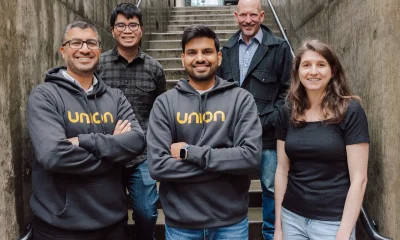

The company has raised $6.5 million in seed funding to expand its platform and launch Quilliam, a sovereign Chief of AI Staff designed to coordinate AI tools using context drawn directly from meetings and discussions. The round was led by Basis Set Ventures, with participation from 500 Global, Naval Ravikant, Morado Ventures, and AME Cloud Ventures.

Starting Where Work Actually Happens

Quill began as a local-first meeting transcription tool focused on preserving conversational records. By processing audio directly on a user’s device and structuring summaries and action items from discussions, it sought to narrow the gap between what was said and what needed to happen next. Over time, the product expanded beyond note capture into maintaining an ongoing layer of contextual memory derived from those interactions.

The underlying premise is that conversation, rather than standalone documents, may offer a more accurate foundation for understanding how work unfolds in practice.

From Notetaking to Coordination

Quilliam extends that foundation rather than replacing it. While earlier iterations centered on capturing and structuring conversations, the newer layer carries that context into other systems through the Model Context Protocol.

Instead of stopping at transcription, the system can interact with documentation platforms, task managers, and other tools. A product discussion may translate into updated project notes or newly created tickets. Before a client meeting, prior exchanges can be surfaced to restore continuity.
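As a rough illustration of the step from discussion to ticket, the sketch below maps a structured action item onto a generic ticket payload. It is not Quill's implementation and does not use any Model Context Protocol SDK; `ActionItem`, `to_ticket`, and the payload field names are all hypothetical, chosen only to show the shape of the idea.

```python
from dataclasses import dataclass


@dataclass
class ActionItem:
    """A hypothetical action item extracted from a meeting transcript."""
    owner: str
    task: str
    source_meeting: str


def to_ticket(item: ActionItem) -> dict:
    """Build a generic ticket payload; the field names are illustrative only."""
    return {
        "title": item.task,
        "assignee": item.owner,
        "description": f"Raised in meeting: {item.source_meeting}",
    }


if __name__ == "__main__":
    item = ActionItem(
        owner="Dana",
        task="Draft onboarding flow spec",
        source_meeting="Product sync",
    )
    print(to_ticket(item))
```

In a real deployment the payload would be handed to whatever task manager the team has connected; keeping the source meeting in the description preserves the link back to the conversation that produced the task.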

The emphasis is coordination. As AI tools become embedded across writing, planning, and analysis workflows, professionals increasingly operate across fragmented systems that lack shared memory. Quilliam attempts to reduce that fragmentation by maintaining contextual continuity across environments instead of introducing another isolated tool.

Architecture and Data Control

The platform’s design places data locality and inference control at the forefront.

Audio transcription runs on the user’s device by default, and no audio is transmitted externally unless synchronization is enabled. When cloud sync is enabled, synced data is end-to-end encrypted, so the service itself cannot read unencrypted content. Users can determine where inference occurs, whether through enterprise cloud providers configured for zero content logging or through fully local models suitable for offline or air-gapped environments.

User data is not used for model training. Integrations and workflows are configurable, allowing organizations to align deployments with internal compliance requirements, including data residency and regulatory constraints. For teams requiring strict isolation, the system can operate without external network calls.

Together, these architectural decisions treat inference location and data handling as adjustable parameters rather than fixed assumptions.
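One way to picture inference location and data handling as adjustable parameters is a small policy object, sketched below. This is an assumption-laden illustration rather than Quill's actual configuration format: `InferenceLocation`, `DeploymentPolicy`, and every field name are hypothetical, and the defaults simply mirror the behavior described above (local processing, sync off unless enabled).

```python
from dataclasses import dataclass
from enum import Enum


class InferenceLocation(Enum):
    LOCAL = "local"             # on-device model, usable offline
    ENTERPRISE_CLOUD = "cloud"  # provider configured for zero content logging


@dataclass(frozen=True)
class DeploymentPolicy:
    """Hypothetical deployment settings; names are illustrative only."""
    inference: InferenceLocation = InferenceLocation.LOCAL
    cloud_sync: bool = False  # off by default, so nothing leaves the device
    air_gapped: bool = False  # strict isolation: no external network calls

    def validate(self) -> None:
        """Reject combinations that contradict strict isolation."""
        if self.air_gapped and self.cloud_sync:
            raise ValueError("air-gapped deployments cannot enable cloud sync")
        if self.air_gapped and self.inference is not InferenceLocation.LOCAL:
            raise ValueError("air-gapped deployments require local inference")

    def allows_network(self) -> bool:
        """True if any configured feature needs an external network call."""
        return (
            self.cloud_sync
            or self.inference is InferenceLocation.ENTERPRISE_CLOUD
        )


if __name__ == "__main__":
    policy = DeploymentPolicy(air_gapped=True)
    policy.validate()
    print(policy.allows_network())
```

Treating these choices as explicit fields makes conflicting settings, such as an air-gapped deployment with cloud sync enabled, easy to catch before anything runs.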

A Shift Toward Context-Aware Work Infrastructure

This category of software reflects a broader shift in how digital work may be structured.

Traditional productivity tools revolve around documents, tickets, and dashboards. AI systems have largely followed that model, generating outputs from prompts and files. Conversation, despite being where many decisions originate, has remained loosely structured and difficult to integrate into operational systems.

When discussions become structured, searchable, and persistent, they begin to function as institutional memory. Meetings are no longer isolated events but inputs into continuous workflows. The boundary between discussion and execution becomes less distinct.

The implications extend beyond note capture. Context-aware systems may influence how organizations document decisions, manage knowledge, address compliance, and onboard employees. As AI becomes embedded in daily operations, choices about data locality and inference control become foundational design considerations.

If the first phase of AI emphasized generation, the next may emphasize continuity and context.