This article is based on findings from a kernel-level GPU trace investigation performed on a real PyTorch issue (#154318) using eBPF uprobes. Trace databases are published in the Ingero open-source repository for independent verification.

TL;DR

PyTorch’s DataLoader can be 50-124x slower than direct tensor indexing for in-memory GPU workloads. We reproduced a real PyTorch issue on an RTX 4090 and traced every CUDA API call and Linux kernel event to find the root cause. The GPU wasn’t slow – it was starving. DataLoader workers generated roughly 200,000 CPU context switches and 300,000 page allocations in 40 seconds, leaving the main process stalled for an average of 301ms per host-to-device transfer, several times longer than an entire batch takes on the direct path.

The Problem

A PyTorch user reported that DataLoader was 7-22x slower than direct tensor indexing for a simple MLP inference workload. Even with num_workers=12, pin_memory=True, and prefetch_factor=12, the gap remained massive. GPU utilization sat at 10-20%.

We reproduced it. The gap was even worse on our hardware:

| Method | Time | vs Direct |
|---|---|---|
| Direct tensor indexing | 0.39s | 1x |
| DataLoader (shuffle=True) | 48.49s | 124x slower |
| DataLoader (optimized, 4 workers, pin_memory) | 43.29s | 111x slower |

The workload is trivial: 7M samples, 100 features, 2-layer MLP, batch size 1M. The model processes a batch in milliseconds. So where does the time go?
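To put those numbers in proportion, here is a small back-of-the-envelope calculation. It assumes float32 data (the default for torch.randn) and the exact batch size of 1,048,576 used in the benchmark script; the timings are copied from the table above.

```python
import math

num_samples = 7_000_000
num_features = 100
batch_size = 1_048_576      # "1M" batch size as written in the benchmark script
bytes_per_float32 = 4       # assumption: float32, the torch.randn default

num_batches = math.ceil(num_samples / batch_size)
bytes_per_batch = batch_size * num_features * bytes_per_float32
total_bytes = num_samples * num_features * bytes_per_float32

# Wall-clock timings taken from the results table.
direct_s, loader_s, tuned_s = 0.39, 48.49, 43.29

print(f"batches per pass:   {num_batches}")
print(f"full batch size:    {bytes_per_batch / 2**20:.0f} MiB")
print(f"dataset size:       {total_bytes / 1e9:.1f} GB")
print(f"direct per batch:   {direct_s / num_batches * 1e3:.0f} ms")
print(f"DataLoader per batch: {loader_s / num_batches:.2f} s")
print(f"slowdown: {loader_s / direct_s:.0f}x (default), {tuned_s / direct_s:.0f}x (optimized)")
```

A full pass is only 7 batches of about 400 MiB each, so every second spent per batch beyond the direct path is pure host-side overhead.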

What nvidia-smi Shows

Nothing useful. GPU utilization flickers between 0% and 30%. Memory usage is stable. Temperature is fine. The GPU is clearly underutilized, but nvidia-smi can’t tell you why.

What torch.profiler Shows

The reporter tried PyTorch’s built-in profiler and “obtained no meaningful trace data.” This is a common frustration – application-level profilers can show you what CUDA kernels are running, but they can’t see the host-side scheduling, memory, and process lifecycle events that determine whether data arrives at the GPU on time.

What Kernel-Level Tracing Shows

We ran the benchmark while tracing both CUDA API calls (via eBPF uprobes on libcudart.so) and Linux kernel events (scheduler context switches, memory page allocations, process forks) simultaneously. The results tell the complete story.

Full video walkthrough: https://asciinema.org/a/RGwhPeXAPJdhXqxp

In the video, we connected an open-weight LLM (MiniMax-M2.7) to the trace database via MCP (Model Context Protocol):

ollmcp -m minimax-m2.7:cloud -j /tmp/ingero-mcp-dataloader.json

The JSON config tells the MCP client where to find the Ingero server and which trace database to load:

{
  "mcpServers": {
    "ingero": {
      "command": "./bin/ingero",
      "args": ["mcp", "--db", "investigations/pytorch-dataloader-starvation.db"]
    }
  }
}

This gives the LLM direct access to the trace data through 7 tools: get_trace_stats, get_causal_chains, get_per_process_breakdown, and others. The AI can query the database, correlate CUDA events with kernel scheduling data, and produce a plain-language diagnosis without any manual analysis.

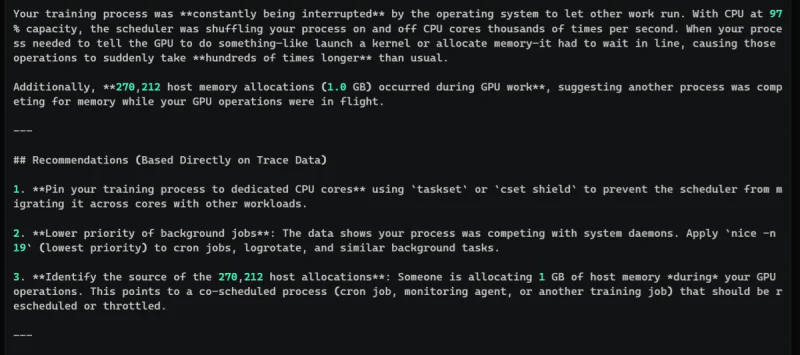

4 HIGH-severity causal chains

The causal chain engine detected 4 high-severity patterns, all with the same root cause:

[HIGH] cudaStreamSync p99=42ms (1,638x p50=25us) - CPU 100% + 1,880 sched_switch events
  Timeline:
    [SYSTEM] CPU 100%
    [HOST ] 1,880 context switches (21s off-CPU)
    [CUDA ] p99=42ms (1,638x p50=25us)
  Root cause: DataLoader workers fighting for CPU, massive page allocation pressure

[HIGH] cudaLaunchKernel p99=24.67ms (349x p50=70us) - CPU 100%
  Root: 34 sched_switch events
[HIGH] cuMemAlloc p99=627us (4.0x p50) - CPU 100%
[HIGH] cuLaunchKernel p99=106us (4.0x p50) - CPU 100%

The cudaStreamSync p99 is 1,638 times the p50. That’s not GPU slowness – that’s the GPU waiting for data that never arrives on time.
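The p99-to-p50 ratio is a simple way to spot this kind of stall. The sketch below is a minimal illustration of that check, not Ingero's actual implementation: the latency samples are synthetic, and the `percentile` helper and `TAIL_RATIO_THRESHOLD` are hypothetical names chosen for this example.

```python
def percentile(samples, p):
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    ordered = sorted(samples)
    rank = (p * len(ordered) + 99) // 100   # integer ceil(p * n / 100)
    return ordered[max(rank, 1) - 1]


def tail_ratio(samples):
    """How many times slower the p99 call is than the median call."""
    return percentile(samples, 99) / percentile(samples, 50)


# Synthetic latencies in microseconds (illustrative only, not trace data):
# most calls return in 25us, a few stall for 42ms.
latencies_us = [25] * 98 + [42_000] * 2

TAIL_RATIO_THRESHOLD = 100   # hypothetical cutoff for flagging a call
ratio = tail_ratio(latencies_us)
flagged = ratio > TAIL_RATIO_THRESHOLD
print(f"p50={percentile(latencies_us, 50)}us "
      f"p99={percentile(latencies_us, 99)}us ratio={ratio:.0f}x flagged={flagged}")
```

A healthy call has a tail within a small multiple of its median. A ratio in the hundreds or thousands means a minority of calls are blocked on something outside the GPU.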

Figure 1: AI-generated analysis after running /investigate. The model used Ingero’s 7 MCP tools to query the trace database and produced a plain-language explanation with actionable recommendations derived directly from the trace data.

The Per-Process Breakdown

This is where it gets clear. The main process and its 4 DataLoader workers are visible as separate entities:

Main process:

- cudaMemcpyAsync (host-to-device transfer): avg 301ms, max 2.9 seconds
- cudaStreamSync: p99 = 42ms (normally 25us)
- 1,567 context switches, avg 16ms off-CPU, worst stall 5 seconds
- 799,018 page allocations

DataLoader workers:

- Worker 1: 52,863 context switches, 89,338 page allocations, worst stall 5s
- Worker 2: 50,638 context switches, 83,509 page allocations, worst stall 5s
- Worker 3: 49,361 context switches, 70,035 page allocations, worst stall 5s
- Worker 4: 38,862 context switches, 56,354 page allocations, worst stall 5s

Total across workers: ~191,000 context switches and ~299,000 page allocations in 40 seconds.
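The totals can be checked directly from the per-worker figures. This short snippet uses only the numbers quoted above plus the 40-second trace window; the dictionary layout is just for illustration.

```python
# Per-worker counts copied from the breakdown above.
workers = {
    "worker 1": {"context_switches": 52_863, "page_allocations": 89_338},
    "worker 2": {"context_switches": 50_638, "page_allocations": 83_509},
    "worker 3": {"context_switches": 49_361, "page_allocations": 70_035},
    "worker 4": {"context_switches": 38_862, "page_allocations": 56_354},
}
trace_seconds = 40

total_switches = sum(w["context_switches"] for w in workers.values())
total_allocs = sum(w["page_allocations"] for w in workers.values())

print(f"context switches: {total_switches:,} ({total_switches / trace_seconds:,.0f}/s)")
print(f"page allocations: {total_allocs:,} ({total_allocs / trace_seconds:,.0f}/s)")
```

That works out to several thousand context switches per second across four workers, sustained for the whole run.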

What This Means

The DataLoader workers are doing three expensive things that direct indexing avoids entirely:

- Shuffling and indexing: DataLoader with shuffle=True generates a random permutation of indices, then each worker selects its chunk. This requires random memory access across the full 7M-sample tensor – terrible for cache locality and triggers page faults.

- Collation and copying: Each worker gathers scattered samples into a contiguous batch tensor. This means allocating new memory (page allocations), copying data from random locations (cache misses), and serializing the result back to the main process via shared memory or a queue.

- Competing for CPU: Four workers + the main process on a 4-vCPU machine means constant preemption. Each worker gets descheduled roughly 39,000 to 53,000 times over the 40-second run. The worst-case stall is 5 seconds – during which the GPU has nothing to process.
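The collation cost is easy to see in isolation. The following is a simplified sketch of what a map-style DataLoader with the default collate function does for a single batch (one `__getitem__` call per sample, then a stack), compared with one vectorized gather. The tensor and batch sizes are scaled down so it runs quickly on CPU. They are assumptions for illustration, not the benchmark's sizes, and the timings will vary by machine.

```python
import time
import torch

# Small stand-in for the article's 7M x 100 tensor.
X = torch.randn(20_000, 100)
batch_indices = torch.randperm(len(X))[:4_096].tolist()

# Approximation of the default fetch-and-collate path:
# index the dataset once per sample, then stack the results.
t0 = time.perf_counter()
per_sample = torch.stack([X[i] for i in batch_indices])
t_per_sample = time.perf_counter() - t0

# Direct path: a single advanced-indexing gather for the whole batch.
t0 = time.perf_counter()
gathered = X[torch.tensor(batch_indices)]
t_gather = time.perf_counter() - t0

# Both paths produce the same batch; only the amount of Python-level work differs.
print(f"per-sample + stack: {t_per_sample * 1e3:.2f} ms")
print(f"single gather:      {t_gather * 1e3:.2f} ms")
```

With a batch size of about one million, the per-sample path performs about a million tiny tensor operations per batch before any data reaches the GPU, and in the multi-worker case the result still has to be handed back to the main process.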

With direct indexing: X[i:i+batch_size] is a zero-copy view of a contiguous tensor already in memory. .to(device) triggers one DMA transfer from a single contiguous region. No workers, no shuffling, no collation, no cross-process copies, no context switches. The GPU gets each batch in tens of milliseconds (the whole 7-batch run takes 0.39s), not hundreds of milliseconds.

The Fix

For in-memory GPU workloads where the entire dataset fits in RAM:

- Don’t use DataLoader. Direct indexing with a pre-shuffled index array is simpler and 100x faster:

indices = torch.randperm(num_samples)
for i in range(0, num_samples, batch_size):
    batch = X[indices[i:i+batch_size]].to(device)
    output = model(batch)

- If you must use DataLoader, keep num_workers at or below your actual CPU core count minus 1, so the main process always has a core to itself. On a 4-core machine, num_workers=2 reduces contention further. Add persistent_workers=True to avoid re-forking the workers on every pass over the data.

- For larger-than-memory datasets where DataLoader is necessary, the real bottleneck shifts to disk I/O. Use prefetch_factor=2 (not higher – more prefetching means more memory pressure) and ensure your storage can keep up.
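As a rough sketch of the worker-count advice above, the helper below derives DataLoader keyword arguments from the number of CPUs the process can actually use. The function names and the `reserve` parameter are hypothetical, while `num_workers`, `persistent_workers`, and `prefetch_factor` are real DataLoader arguments. The last two are only set when workers are enabled, since they are not valid with num_workers=0.

```python
import os


def usable_cpu_count():
    """CPUs this process may run on (respects affinity masks where the OS exposes them)."""
    try:
        return len(os.sched_getaffinity(0))   # Linux
    except AttributeError:
        return os.cpu_count() or 1            # portable fallback


def loader_kwargs(cpus, reserve=1):
    """Suggest DataLoader kwargs that leave `reserve` cores free for the main process."""
    num_workers = max(0, cpus - reserve)
    kwargs = {"num_workers": num_workers}
    if num_workers > 0:
        kwargs["persistent_workers"] = True   # keep workers alive between passes
        kwargs["prefetch_factor"] = 2         # limit queued batches per worker
    return kwargs


print(loader_kwargs(usable_cpu_count()))
print(loader_kwargs(4))             # 4-core machine, one core reserved
print(loader_kwargs(4, reserve=2))  # more conservative, as suggested for 4 cores
```

The result can be passed straight through, for example `DataLoader(dataset, batch_size=..., **loader_kwargs(usable_cpu_count()))`.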

The Bigger Picture

This investigation illustrates a pattern we see constantly in GPU workloads: the GPU is fast, the host is the bottleneck, and GPU metrics can’t see it. nvidia-smi reported low utilization but couldn’t explain why. torch.profiler is built to capture CUDA kernels and has no view of the roughly 200,000 context switches happening in the Linux scheduler.

The only way to see the full picture was to trace both sides simultaneously – CUDA API calls at the library level and Linux kernel scheduling events – and correlate them by time and process ID. The causal chain “CPU 100% -> 1,880 sched_switch -> cudaMemcpyAsync 301ms -> cudaStreamSync 42ms” tells the complete story in one line. Without cross-stack tracing, this would have remained a mystery – as it was for the original reporter who spent weeks debugging it.
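To make "correlate them by time and process ID" concrete, here is a minimal sketch of that join. It is not Ingero's implementation: the `CudaCall` and `OffCpu` record types and the sample events are hypothetical, made up purely to show the idea of measuring how much of a CUDA call's duration the calling process spent descheduled.

```python
from dataclasses import dataclass


@dataclass
class CudaCall:          # hypothetical record for one traced CUDA API call
    pid: int
    name: str
    start_ns: int
    end_ns: int


@dataclass
class OffCpu:            # hypothetical record for one off-CPU interval of a process
    pid: int
    start_ns: int
    end_ns: int


def off_cpu_overlap_ns(call, intervals):
    """Total time the calling process was off-CPU while the call was in flight."""
    total = 0
    for iv in intervals:
        if iv.pid != call.pid:
            continue
        overlap = min(call.end_ns, iv.end_ns) - max(call.start_ns, iv.start_ns)
        if overlap > 0:
            total += overlap
    return total


MS = 1_000_000
# Made-up events for illustration only.
calls = [
    CudaCall(pid=100, name="cudaMemcpyAsync", start_ns=0, end_ns=300 * MS),
    CudaCall(pid=100, name="cudaLaunchKernel", start_ns=310 * MS, end_ns=311 * MS),
]
off_cpu = [
    OffCpu(pid=100, start_ns=20 * MS, end_ns=120 * MS),
    OffCpu(pid=100, start_ns=150 * MS, end_ns=290 * MS),
    OffCpu(pid=200, start_ns=0, end_ns=300 * MS),   # other process, ignored
]

for call in calls:
    stalled = off_cpu_overlap_ns(call, off_cpu)
    duration = call.end_ns - call.start_ns
    print(f"{call.name}: {duration / MS:.0f} ms total, "
          f"{stalled / MS:.0f} ms off-CPU ({stalled / duration:.0%})")
```

A call whose duration is mostly off-CPU time was slow because of the host scheduler, not the GPU.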

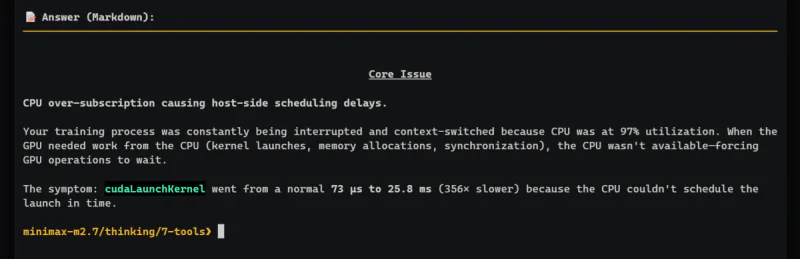

Figure 2: When asked “what is the core issue?”, the model identifies CPU over-subscription causing host-side scheduling delays. cudaLaunchKernel went from 73us to 25.8ms (356x slower) because the CPU couldn’t schedule the launch in time.

Try It Yourself

Reproduce the benchmark:

import torch, time
from torch.utils.data import DataLoader

X = torch.randn(7_000_000, 100)
model = torch.nn.Sequential(
    torch.nn.Linear(100, 512), torch.nn.ReLU(),
    torch.nn.Linear(512, 512), torch.nn.ReLU(),
    torch.nn.Linear(512, 10)
).cuda()

# Fast path
start = time.time()
with torch.no_grad():
    for i in range(0, len(X), 1_048_576):
        model(X[i:i+1_048_576].cuda())
torch.cuda.synchronize()
print(f'Direct: {time.time()-start:.3f}s')

# Slow path
loader = DataLoader(X, batch_size=1_048_576, shuffle=True)
start = time.time()
with torch.no_grad():
    for batch in loader:
        model(batch.cuda())
torch.cuda.synchronize()
print(f'DataLoader: {time.time()-start:.3f}s')

Trace with Ingero to see what’s happening under the hood:

git clone https://github.com/ingero-io/ingero.git
cd ingero && make build
sudo ./bin/ingero trace --duration 60s  # in one terminal
python3 benchmark.py  # in another terminal
./bin/ingero explain --since 60s  # after benchmark completes

Or skip the reproduction and explore our trace data directly. The investigation database (764KB) is in the repo:

# View causal chains from the investigation
./bin/ingero explain --db investigations/pytorch-dataloader-starvation.db --since 5m

# Per-process breakdown (see DataLoader workers vs main process)
./bin/ingero explain --db investigations/pytorch-dataloader-starvation.db --per-process --since 5m

# Connect your AI assistant for interactive investigation
./bin/ingero mcp --db investigations/pytorch-dataloader-starvation.db

Investigate with AI (recommended). The fastest way to analyze the trace is to connect any MCP-compatible AI directly to the database. No manual analysis needed.

Create a config file:

cat > /tmp/ingero-mcp-dataloader.json << 'EOF'
{
  "mcpServers": {
    "ingero": {
      "command": "./bin/ingero",
      "args": ["mcp", "--db", "investigations/pytorch-dataloader-starvation.db"]
    }
  }
}
EOF

Then connect your model:

# With Ollama + MiniMax (what we used in the video)
ollmcp -m minimax-m2.7:cloud -j /tmp/ingero-mcp-dataloader.json

# With Claude Code
claude --mcp-config /tmp/ingero-mcp-dataloader.json

# With any MCP-compatible client
# Add the config above to your AI's MCP settings

Type /investigate to trigger the guided analysis, or ask any question: “What caused the GPU starvation?” The AI has access to 7 tools that query the trace database directly.

GitHub: github.com/ingero-io/ingero

Original issue: pytorch/pytorch#154318

Video walkthrough: https://asciinema.org/a/RGwhPeXAPJdhXqxp

Investigation performed on TensorDock RTX 4090 (24GB), Ubuntu 22.04, PyTorch 2.10.0+cu128.