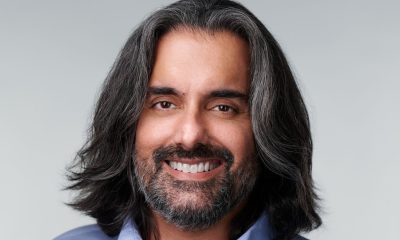

Professor Eran Yahav, Co-Founder and Co-CEO of Tabnine – Interview Series

Professor Eran Yahav, Co-Founder and Co-CEO of Tabnine, is a computer science professor at the Technion – Israel Institute of Technology whose research focuses on programming languages, machine learning, and software engineering, particularly program synthesis and large-scale code analysis. Alongside his academic work, he co-founded Tabnine (originally Codota) to turn years of research into practical developer tools, helping pioneer AI-driven code completion and automation. His work bridges academia and industry, with a focus on making AI-generated code more reliable, secure, and context-aware for real-world enterprise environments.

Tabnine is an AI-powered coding platform designed to assist developers throughout the entire software development lifecycle, from writing and debugging code to generating tests and documentation. Originally launched as a code completion tool, it has evolved into a broader enterprise-focused platform that integrates generative AI and agent-based workflows, enabling teams to automate complex development tasks while maintaining strong controls over privacy, security, and compliance. With support for dozens of programming languages and integrations across major IDEs, Tabnine aims to improve developer productivity while ensuring that AI-generated code remains trustworthy and aligned with organizational standards.

You’ve spent years researching program analysis and synthesis at the Technion and previously worked at IBM Research. What problem in software development convinced you to co-found Tabnine, and how did your academic research shape the company’s original vision?

My academic work focused on program analysis and synthesis, which is essentially about teaching machines to understand and generate code. I did my PhD on Program Analysis, and this is also where I spent my first few years of applied research work. Tackling software quality problems with program analysis made it clear that some problems are very hard to solve once the program has been written incorrectly. An ounce of prevention is worth a pound of cure, if you will. This convinced me that the right way to address software quality is via Program Synthesis, which is where I spent the majority of my research time and energy.

I initially worked on Program Synthesis for concurrent programs, trying to automate the creation of concurrent programs from sequential ones. I then switched to a more generally applicable form of program synthesis based on machine learning.

Program synthesis using machine learning was also the fundamental idea powering Tabnine. The idea, which now seems obvious, was that models could learn coding patterns directly from large corpora of code and assist developers in real time. This general idea is applicable throughout all stages of the software development life cycle – from code creation to code review, to deployment, and beyond.

The vision has always been to augment the human developer with tools that accelerate the development process. Software development is a creative, problem-solving discipline, and the goal was for AI to remove friction by handling routine tasks and helping developers stay in flow. That vision still guides us today, although the technology has evolved significantly since those early days.

Tabnine pioneered AI coding assistants years before generative AI became mainstream with tools like OpenAI’s models. Looking back, how has the role of AI in software development evolved since those early days, and what lessons did the industry learn from the first wave of coding copilots?

The earliest generation of AI coding assistants focused primarily on prediction. They were essentially advanced autocomplete systems that helped developers write code faster by predicting the next line or function.

What has changed with agent loops is that AI can now handle tasks with greater autonomy, to the point where we can consider agents (with proper guidance) to be independent junior developers.

But this has also taught the industry an important lesson. Raw model capability is not enough for enterprise software development. Models trained on public data can produce impressive outputs, but they often lack awareness of an organization’s architecture, dependencies, and conventions.

That’s why the next stage of evolution is not just about larger models or bigger context windows, but about connecting those models to the real context in which software is built.

Many enterprises are discovering that scaling AI agents requires more than larger models—it requires deeper organizational context. Why do you believe context is becoming the true frontier for reliable AI-driven development?

Software systems are complex networks of relationships. A single change can affect multiple services, APIs, or downstream components.

AI models today are very good at generating plausible code, but they often operate without a structured understanding of those relationships. Without that understanding, the AI cannot reliably reason about the consequences of a change.

What enterprises are discovering is that the reliability of AI systems depends on the quality of the context in which they operate. If an AI system understands the architecture of the system, the dependencies between services, and the organization’s coding standards, it can generate code that aligns much more closely with how that system actually works.

In that sense, context is becoming the next frontier for enterprise AI development.

Your new Enterprise Context Engine aims to give AI agents a structured understanding of an organization’s architecture, dependencies, and engineering practices. How does this approach differ from common methods such as retrieval-augmented generation that many companies currently rely on?

Retrieval-augmented generation is a useful technique. It allows models to pull in relevant documents or code snippets when generating an answer.

But retrieval alone does not create understanding. It provides access to information, not structure.

The Enterprise Context Engine is designed to go further by building a structured representation of the software environment. It analyzes repositories, services, dependencies, APIs, and architectural relationships and organizes them into a model of how the system actually works.

This allows AI systems to reason about the relationships between components rather than just retrieving pieces of text. For complex enterprise environments, that distinction becomes very important.
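
As an editorial illustration of that distinction, here is a minimal sketch. It is not Tabnine's implementation, and the service names, the `DEPENDS_ON` map, and the `impacted_by` helper are all hypothetical. It models services as a dependency graph and walks the graph to find everything that could be affected by a change, which is a question about structure rather than about matching text.

```python
from collections import deque

# Hypothetical dependency edges: each service maps to the services it calls.
DEPENDS_ON = {
    "checkout": ["payments", "inventory"],
    "payments": ["ledger"],
    "inventory": ["catalog"],
    "search": ["catalog"],
    "ledger": [],
    "catalog": [],
}


def impacted_by(changed, depends_on):
    """Return every service that directly or transitively depends on `changed`."""
    # Invert the edges so we can walk from a service to its callers.
    callers = {name: [] for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            callers.setdefault(dep, []).append(service)

    # Breadth-first walk over callers; `seen` also guards against cycles.
    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for caller in callers.get(current, []):
            if caller not in seen:
                seen.add(caller)
                queue.append(caller)
    return sorted(seen)


print(impacted_by("catalog", DEPENDS_ON))  # ['checkout', 'inventory', 'search']
```

A text search for "catalog" might surface `inventory` and `search`, but only the graph walk shows that `checkout` is also affected through `inventory`.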

AI coding tools are evolving from autocomplete suggestions to autonomous agents capable of executing multi-step workflows. How do you see the balance between human developers and agentic systems changing over the next five years?

AI agents will increasingly take on routine development tasks. They are already capable of implementing features end-to-end, including testing and documentation. Every developer will become a team lead of AI developers. The main challenge will be to communicate the requirements to this team and verify that the generated artifacts match the outlined requirements.

However, software development is fundamentally about problem-solving and design. Human developers will continue to define architecture, make tradeoffs, and guide the overall direction of systems.

What will change is the level of abstraction at which developers work. Instead of focusing on code, developers will increasingly orchestrate higher-level workflows and collaborate with AI systems that execute parts of those workflows.

In other words, the developer’s role becomes more strategic as AI handles more of the mechanical work.

Tabnine has indicated that enterprise users can see AI-generated code acceptance rates reach around 80% in some environments. What metrics should organizations use to determine whether AI coding tools are actually improving developer productivity rather than simply generating more code?

The key question is not how much code AI generates, but how much useful work it actually produces.

There are several metrics organizations should track. One is first-pass acceptance rates, which measure how often AI-generated code can be used without modification. Another is review cycle time—how many iterations are required before a pull request can be merged.

Organizations should also look at developer time spent on rework, as well as lead time for changes from development to production.

If AI tools are truly improving productivity, you should see improvements across those metrics. Developers spend less time fixing generated code and more time working on higher-value tasks.
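
As an editorial illustration, the metrics named above can be computed from per-change records. This is a minimal sketch under stated assumptions: the `ChangeRecord` fields and the sample data are hypothetical, and in practice the inputs would come from an organization's own version control and deployment tooling.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class ChangeRecord:
    # All fields are hypothetical stand-ins for data from VCS and CI/CD systems.
    accepted_unmodified: bool  # AI-generated change merged without edits
    review_rounds: int         # review iterations before merge
    first_commit: datetime     # start of development
    deployed: datetime         # arrival in production


def summarize(records):
    """Compute first-pass acceptance, mean review rounds, and mean lead time."""
    first_pass = sum(r.accepted_unmodified for r in records) / len(records)
    rounds = mean(r.review_rounds for r in records)
    lead_hours = mean(
        (r.deployed - r.first_commit).total_seconds() / 3600 for r in records
    )
    return {
        "first_pass_acceptance": first_pass,
        "mean_review_rounds": rounds,
        "mean_lead_time_hours": lead_hours,
    }


records = [
    ChangeRecord(True, 1, datetime(2025, 1, 1, 9), datetime(2025, 1, 2, 9)),
    ChangeRecord(True, 2, datetime(2025, 1, 3, 9), datetime(2025, 1, 5, 9)),
    ChangeRecord(False, 4, datetime(2025, 1, 6, 9), datetime(2025, 1, 10, 9)),
    ChangeRecord(True, 1, datetime(2025, 1, 11, 9), datetime(2025, 1, 12, 9)),
]
summary = summarize(records)
print(summary)
```

With this sample data, three of four changes are accepted unmodified (0.75), the mean number of review rounds is 2, and the mean lead time is 48 hours. Tracking the same figures before and after a rollout is what shows whether the tool is reducing rework rather than only increasing output.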

Enterprises remain cautious about exposing proprietary code to external models. How does the concept of “Trusted AI Coding” address governance, privacy, and compliance concerns that have slowed enterprise adoption of AI development tools?

Trust is one of the most important factors in enterprise adoption of AI.

Trust is the ultimate challenge for realizing the AI engineer. How do we trust the AI engineer to act autonomously to complete critical software engineering tasks? How do we ensure its actions align with our expectations for quality, security, and compliance with our policies? If the AI engineer is to be an accepted member of our engineering teams, it must be as trusted as our well-vetted and appropriately onboarded teammates.

Addressing this challenge relies on two critical pillars:

- Personalization: Equipping the AI engineer with an intimate understanding of your organization, codebase, and best practices.

- Control: Implementing robust systems to ensure all code—both AI-generated and human-written—meets your organization’s quality, security, performance, and reliability standards.

Additionally, Trusted AI Coding means giving organizations control over how AI is deployed and ensuring centralized governance.

You’ve suggested that organizational context may become a foundational layer in the enterprise AI stack—similar to databases or cloud infrastructure in previous computing eras. What does that future architecture look like?

If you look at how enterprise technology evolves, we often see new infrastructure layers emerge.

Databases became the foundation for managing data. Cloud platforms became the foundation for running applications at scale.

In the AI era, organizations will need infrastructure that allows AI systems to understand the internal structure of the enterprise—its systems, relationships, and operational constraints.

That infrastructure layer will provide structured context that multiple AI systems can use, whether they are coding assistants, support agents, or operational automation tools.

In that sense, context becomes a shared foundation for enterprise AI.

Many companies are building coding assistants tightly coupled to a single foundation model. Tabnine instead allows enterprises to connect different models depending on their needs. Why is model flexibility important for the long-term evolution of enterprise AI development tools?

The AI ecosystem is evolving very quickly. New models are released frequently, and different models often have strengths in different areas.

Enterprises should not have to redesign their development workflows every time the model landscape changes. By allowing organizations to choose and switch between models, we provide flexibility that helps future-proof their AI strategy.

Model flexibility also allows organizations to balance performance, cost, privacy requirements, and deployment constraints.

In the long term, enterprises will likely operate in a multi-model environment, and development platforms should be designed with that reality in mind.
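
As an editorial illustration of balancing those constraints, here is a minimal sketch of model selection. It does not describe Tabnine's product: the `ModelOption` fields, the model names, the prices, and the `quality_score` values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    # Hypothetical attributes an organization might record per model.
    name: str
    on_premises: bool
    cost_per_1k_tokens: float
    quality_score: float  # assumed internal benchmark, higher is better


def choose_model(options, require_on_premises, max_cost):
    """Pick the highest-quality model that satisfies privacy and cost limits."""
    eligible = [
        m for m in options
        if (m.on_premises or not require_on_premises)
        and m.cost_per_1k_tokens <= max_cost
    ]
    if not eligible:
        return None  # no model meets the constraints
    return max(eligible, key=lambda m: m.quality_score)


OPTIONS = [
    ModelOption("hosted-large", on_premises=False, cost_per_1k_tokens=0.03, quality_score=0.9),
    ModelOption("hosted-small", on_premises=False, cost_per_1k_tokens=0.002, quality_score=0.7),
    ModelOption("self-hosted", on_premises=True, cost_per_1k_tokens=0.01, quality_score=0.8),
]

print(choose_model(OPTIONS, require_on_premises=True, max_cost=0.05).name)  # self-hosted
print(choose_model(OPTIONS, require_on_premises=False, max_cost=0.05).name)  # hosted-large
```

Because callers depend only on `choose_model` and not on a specific model, adding or retiring an entry in `OPTIONS` does not require changing the surrounding workflow.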

For CTOs and engineering leaders evaluating AI development platforms today, what are the biggest mistakes organizations make when deploying AI coding tools, and how can they avoid them?

One common mistake is focusing only on model capability. Larger models are definitely a critical component, but reliability in real-world environments depends on how well the AI understands the system it operates within.

Another mistake is deploying AI tools without considering governance and security requirements. Enterprises need clear policies around how code is accessed, how models are deployed, and how outputs are validated.

Finally, organizations sometimes expect AI to deliver immediate productivity gains without adapting workflows or providing sufficient context. Successful deployments usually involve integrating AI into existing development processes and connecting it to the organization’s code and architecture.

When those elements come together, AI can become a powerful accelerator for software development rather than just another tool.

Thank you for the great interview. Readers who wish to learn more should visit Tabnine.