Artificial Intelligence

OpenAI and Anthropic Drop Dueling Models as AI Arms Race Intensifies

OpenAI and Anthropic released new flagship models within minutes of each other today, while OpenAI simultaneously launched an enterprise agent platform and Perplexity introduced a multi-model research feature. A single afternoon brought more significant AI product announcements than most weeks produce.

Here’s what shipped and what it means.

Anthropic’s Opus 4.6: Agent Teams and a Million-Token Window

Anthropic released Claude Opus 4.6, its most capable model, with two headline features: a one-million-token context window and a new capability called Agent Teams.

The context window is the bigger technical achievement. At one million tokens, Opus 4.6 can process roughly 3,000 pages of text in a single prompt, nearly four times the 256,000-token limit of its predecessor (about 3.9x). Combined with 128,000-token output support, the model can now ingest and work with entire codebases, regulatory filings, or research corpora without chunking or summarization.
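To make the page arithmetic concrete, here is a small sketch that uses only the figures quoted above. The helper names are illustrative, and the tokens-per-page value is simply what the article's numbers imply, not a published tokenizer statistic; real documents vary widely.

```python
# Figures quoted in the article.
CONTEXT_TOKENS = 1_000_000
PREVIOUS_CONTEXT_TOKENS = 256_000
APPROX_PAGES = 3_000


def tokens_per_page(context_tokens: int, pages: int) -> float:
    """Average tokens per page implied by a context size and a page count."""
    return context_tokens / pages


def fits_in_context(page_count: int, per_page: float,
                    context_tokens: int = CONTEXT_TOKENS) -> bool:
    """Rough check of whether a document of `page_count` pages fits in one prompt."""
    return page_count * per_page <= context_tokens


implied = tokens_per_page(CONTEXT_TOKENS, APPROX_PAGES)  # about 333 tokens per page
growth = CONTEXT_TOKENS / PREVIOUS_CONTEXT_TOKENS        # about 3.9x the earlier limit

print(f"Implied density: {implied:.0f} tokens per page")
print(f"Context growth: {growth:.1f}x")
print("2,500-page filing fits:", fits_in_context(2_500, implied))
```

The estimate ignores space taken up by instructions and by the model's own output, so a real budget would be somewhat tighter.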

Agent Teams, available in Claude Code, allows multiple Claude instances to work in parallel on a shared codebase. Rather than a single agent executing tasks sequentially, developers can spin up teams where one agent handles frontend changes, another writes tests, and a third refactors backend logic — all coordinating on the same project simultaneously.
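The parallel pattern described here can be illustrated with ordinary Python concurrency. This is a sketch of the general idea only, not Claude Code's implementation: `run_agent`, the roles, and the tasks are hypothetical stand-ins, and a real agent would call a model and edit files rather than return a string.

```python
from concurrent.futures import ThreadPoolExecutor


def run_agent(role: str, task: str) -> str:
    """Hypothetical stand-in for one agent working on its assigned task."""
    return f"{role}: finished '{task}'"


# Hypothetical split of work, mirroring the example in the article.
TEAM = {
    "frontend": "update the settings page",
    "tests": "write tests for the settings page",
    "backend": "refactor the settings API",
}


def run_team(team: dict[str, str]) -> dict[str, str]:
    """Run every agent concurrently and collect each result by role."""
    with ThreadPoolExecutor(max_workers=len(team)) as pool:
        futures = {role: pool.submit(run_agent, role, task)
                   for role, task in team.items()}
        return {role: future.result() for role, future in futures.items()}


results = run_team(TEAM)
for role, summary in results.items():
    print(summary)
```

What the sketch leaves out is the hard part: keeping concurrent edits to the same files from conflicting, which is where the coordination the article mentions comes in.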

Opus 4.6 also introduces adaptive thinking, which lets the model calibrate how much reasoning effort to invest in a given prompt. Simple questions get fast responses; complex problems trigger deeper extended thinking. Developers can adjust this via effort controls across four levels: low, medium, high, and max.

On benchmarks, Opus 4.6 scores highest on Terminal-Bench 2.0 for agentic coding and leads Humanity’s Last Exam, a complex reasoning evaluation. Anthropic claims a 144-point Elo advantage over GPT-5.2 on the GDPval-AA evaluation and a 190-point improvement over Opus 4.5.

API pricing remains unchanged at $5 per million input tokens and $25 per million output tokens, though prompts exceeding 200,000 tokens carry a premium rate of $10/$37.50.
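For a sense of what the two tiers mean in practice, the sketch below estimates the cost of a single request from the rates quoted above. It assumes that once a prompt exceeds 200,000 tokens the premium rates apply to the whole request, input and output alike; the article does not spell out the billing mechanics, so check the provider's pricing page before relying on it.

```python
# Rates in US dollars per million tokens, as quoted in the article.
STANDARD_RATES = (5.00, 25.00)   # (input, output)
PREMIUM_RATES = (10.00, 37.50)   # (input, output) for long prompts
PREMIUM_THRESHOLD = 200_000      # prompt size above which premium rates apply


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in dollars.

    Assumption: the premium tier is charged on the entire request once the
    prompt exceeds the threshold, not only on the tokens above it.
    """
    if input_tokens > PREMIUM_THRESHOLD:
        input_rate, output_rate = PREMIUM_RATES
    else:
        input_rate, output_rate = STANDARD_RATES
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


short_prompt = estimate_cost(100_000, 10_000)   # standard tier
long_prompt = estimate_cost(500_000, 10_000)    # premium tier

print(f"100K-token prompt: ${short_prompt:.2f}")
print(f"500K-token prompt: ${long_prompt:.2f}")
```

Under that assumption, a prompt five times longer costs roughly seven times as much, so filling the full window is a deliberate spending decision rather than a default.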

In a notable enterprise move, Anthropic announced a research preview of Claude in Microsoft PowerPoint, where the model can read existing slide layouts and templates and generate or edit presentations while preserving brand formatting.

OpenAI’s GPT-5.3-Codex: The Model That Helped Build Itself

Minutes after Anthropic’s announcement, OpenAI launched GPT-5.3-Codex, its most capable coding model. The release unifies the frontier coding performance of GPT-5.2-Codex with the reasoning and professional knowledge capabilities of GPT-5.2 into a single system that’s also 25 percent faster.

The most notable claim: GPT-5.3-Codex helped build itself. OpenAI’s Codex team used early versions of the model during its own training process — debugging training runs, managing deployment infrastructure, and diagnosing evaluation results. It’s OpenAI’s first public acknowledgment that a model was instrumental in its own development, a milestone that raises both efficiency and safety questions.

GPT-5.3-Codex sets new industry highs on SWE-Bench Pro and Terminal-Bench, benchmarks that evaluate real-world software engineering tasks. The model can handle long-running tasks involving research, tool use, and complex execution, and users can interact with it mid-task without losing context — more like collaborating with a colleague than issuing commands.

The model is available now to all ChatGPT paid plan users through the Codex app, CLI, IDE extension, and web interface. API access is coming soon.

For developers choosing between AI code generators, the competitive picture is now sharply defined: Opus 4.6 leads on agent coordination and long-context work, while GPT-5.3-Codex emphasizes speed and integrated reasoning. Both claim top marks on overlapping benchmarks, and tools like Cursor and Apple’s Xcode support both, so developers can switch freely.

OpenAI Frontier: Enterprise Agents Get Their Own Platform

Alongside the model launch, OpenAI introduced Frontier, an enterprise platform for building, deploying, and managing AI agents. Frontier connects to databases, CRM systems, HR platforms, ticketing tools, and other business applications, then lets AI agents execute processes across them.

OpenAI described Frontier as “a semantic layer for the enterprise” where human employees and AI agents operate on the same platform with shared data access and security controls. Agents get employee-like identities, shared organizational context, and enterprise-grade permissions.

The platform is model-agnostic — companies can manage agents built on OpenAI’s models alongside those from Google, Microsoft, and Anthropic. Initial customers include Intuit, State Farm, Thermo Fisher, and Uber.

Frontier positions OpenAI to compete directly with enterprise platforms like Salesforce’s Agentforce and ServiceNow’s AI agents. The difference: OpenAI is building from the model layer up, while incumbents are adding AI to existing workflow tools. Whether enterprises prefer their agent infrastructure from their AI provider or their software vendor will define enterprise AI competition in 2026.

Perplexity’s Model Council: Three Models, One Answer

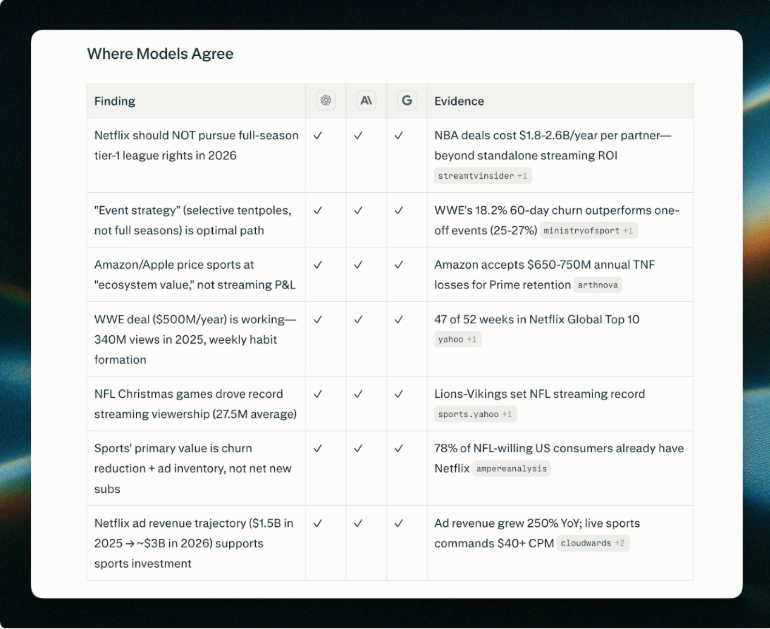

Perplexity launched Model Council, a feature that runs the same query across three models simultaneously — Claude Opus, GPT, and Gemini — then uses a synthesizer model to reconcile their outputs into a single answer that flags areas of agreement and disagreement.

Image: Perplexity

The premise is that no single model is reliably best across all queries. When three frontier models converge on the same answer, confidence is high. When they diverge, users know to investigate further. Model Council is available to Max subscribers and is positioned for investment research, strategic analysis, and complex decision-making.
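The converge-or-diverge logic can be sketched in a few lines. This is not Perplexity's method: the real feature uses a synthesizer model to reconcile free-form answers, whereas this illustration swaps in simple exact-match voting over normalized strings. The model labels and sample answers are made up for the example.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase, collapse whitespace, and drop a trailing period."""
    return " ".join(answer.lower().split()).rstrip(".")


def council(answers: dict[str, str]) -> dict:
    """Compare answers from several models and flag agreement or disagreement."""
    normalized = {model: normalize(text) for model, text in answers.items()}
    counts = Counter(normalized.values())
    top_answer, votes = counts.most_common(1)[0]
    dissenters = sorted(m for m, a in normalized.items() if a != top_answer)

    if votes == len(answers):
        status = "unanimous"   # every model agrees: high confidence
    elif votes > len(answers) / 2:
        status = "majority"    # most agree: inspect the dissenter
    else:
        status = "split"       # no consensus: investigate further
    return {"status": status, "answer": top_answer, "dissenters": dissenters}


# Hypothetical responses keyed by illustrative model labels.
sample = {
    "claude": "The filing deadline is March 15.",
    "gpt": "the filing deadline is march 15",
    "gemini": "The filing deadline is April 15.",
}
verdict = council(sample)
print(verdict["status"], verdict["dissenters"])
```

Exact matching only works for short factual answers. Judging whether two long, differently worded responses actually agree is the kind of task that calls for a synthesizer model in the first place.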

The feature reflects Perplexity’s strategy of differentiating through multi-model orchestration rather than building foundation models. As the gap between frontier AI chatbots narrows on individual benchmarks, aggregating their outputs may prove more valuable than picking a single provider.

What It All Means

These releases confirm that AI competition has shifted from model capability to product infrastructure. Both OpenAI and Anthropic have models that top the same benchmarks; the differentiation now lives in what you can build on top of them.

Perplexity, meanwhile, is making a quiet argument that the model wars may be less important than how you combine models. If Model Council proves useful, it suggests the future isn’t choosing between Claude and GPT — it’s using both.

For developers and enterprises evaluating their AI stack, today's releases just made the decision harder.