
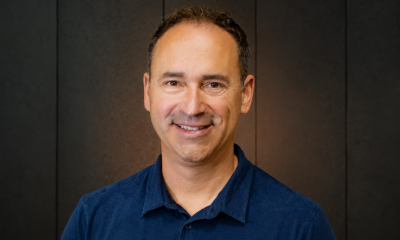

Ofir Mulla, Co-Founder and CTO of Lumana – Interview Series

Ofir Mulla, Co-Founder and CTO of Lumana, brings over a decade of deep expertise in 3D and computer vision technologies, having pioneered and scaled solutions across coded-light, stereo and LiDAR modalities while leading interdisciplinary development in software, electrical systems, robotics, ML/AI and medical devices. Prior to his current role at Lumana, he spent nearly 15 years at Intel where he architected the RealSense 3D platform and led teams spanning hardware, firmware and system architecture.

Lumana is an advanced video-security and visual-intelligence company whose platform transforms existing cameras into smart, perceptive agents. It uses AI to detect and respond to real-world events in real time, from unauthorized access and safety violations to operational insights. This enables enterprises across education, government, retail, manufacturing and hospitality to unify camera intelligence, automate monitoring and unlock actionable analytics from their video infrastructure.

How did your experiences at Intel prepare you for founding Lumana?

LiDAR technology, an active method of projecting laser light to capture the geometry of the world, was a core part of RealSense. It is a beautiful piece of coded light technology that our brilliant engineers at Intel invented. Geometry sensing is critical for moving objects, such as robots and cars, which is why most robotic systems today rely on RealSense devices.

But a question arose: what happens when the sensors are stationary, where navigation and time-to-impact are not the main tasks? We asked ourselves which technology could provide the greatest value to users in that context.

Through deep discussions, we realized that most existing stationary camera systems cannot be scaled naturally. Monitoring each system is cumbersome. At the same time, AI had matured to the point where we began to ask: how can an affordable system at the customer site deliver the most urgent, reliable security responses to critical alerts?

We built a strong AI team that quickly transformed this vision into a working product. The insight was simple: moving vehicles demand geometric sensing, but stationary sensors, focused on monitoring behavior rather than planning movement, benefit more from advanced video analysis without explicit geometry reconstruction.

The RealSense journey taught me that every problem requires its own solution and that true disruption demands innovation. My team at Lumana embodies this principle: professional, innovative, and driven. Together we’ve created an on-premise, real-time system that brings cloud-like performance to the edge: affordable, scalable, and responsive.

How does Physical AI go beyond traditional video analytics like object detection and pattern labeling?

When we speak of Physical AI, we mean an AI system that does not stop at perception, but actively interacts with the real world. Traditional video analytics, like object detection or pattern labeling, are only the first layer. The deeper challenge is what comes after: arranging, tracking, aggregating, identifying, retrieving, searching and verifying the detected objects and accelerating response. It also includes enabling text-based access and even searching for objects the system was not originally trained to detect.

All of this must be achieved within a compact, affordable computing device. That is where Physical AI goes beyond traditional analytics: it transforms raw detection into actionable, accessible intelligence. It’s not about discovering the laws of physics, a scientific pursuit still under debate, but about providing practical, efficient ways to access and act upon visual and audio content in real-world environments.

What are the technical pillars that enable Lumana to fuse data from multiple cameras, interpret behavior in real time, and continuously adapt based on contextual and historical inputs?

Great question. One of our core technical pillars is the ability of the on-premise system to continuously adapt to the scene it observes, which is now often called continuous learning. You can think of it as a system that evolves with its environment, improving over time. This approach has allowed us to deliver high performance at very low cost and with exceptional agility.

Another key pillar is our hierarchical architecture, which intelligently escalates computational effort only when required. This ensures that complex actions receive the resources they need without burdening the entire system.

Taken together, these principles form a platform that is simple, efficient and highly scalable, enabling users to experience powerful real-time insights and behavioral interpretation at the lowest possible cost.
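To make the idea of escalating computational effort only when required more concrete, here is a minimal sketch of a two-stage cascade. It is an editorial illustration, not Lumana's implementation: the frame representation, the `motion_score` heuristic, the `Cascade` class, and the threshold value are all assumptions chosen for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

# Hypothetical frame format: a flat sequence of grayscale pixel values.
Frame = Sequence[int]


def motion_score(prev: Frame, curr: Frame) -> float:
    """Cheap first stage: mean absolute pixel difference between two frames."""
    if len(prev) != len(curr) or not curr:
        raise ValueError("frames must be non-empty and equally sized")
    return sum(abs(a - b) for a, b in zip(prev, curr)) / len(curr)


@dataclass
class Cascade:
    """Runs an expensive analysis step only when the cheap stage flags activity."""

    heavy_model: Callable[[Frame], str]  # stand-in for a costly model (assumption)
    threshold: float = 10.0              # illustrative value, not a tuned setting
    heavy_calls: int = 0                 # counts how often escalation happened

    def process(self, prev: Frame, curr: Frame) -> Optional[str]:
        # Stage 1: inexpensive check on every frame pair.
        if motion_score(prev, curr) < self.threshold:
            return None  # quiet scene, nothing escalated
        # Stage 2: escalate to the expensive step only for active frames.
        self.heavy_calls += 1
        return self.heavy_model(curr)


cascade = Cascade(heavy_model=lambda frame: "needs_review")
static = [50, 50, 50, 50]
changed = [50, 90, 120, 50]
quiet_result = cascade.process(static, static)    # heavy model is skipped
active_result = cascade.process(static, changed)  # heavy model runs
```

In this sketch the expensive step runs once for the two frame pairs, which is the general trade-off a hierarchical design aims for: most frames are handled by the cheap stage, and only the interesting ones consume heavier resources.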

Can you share one or two real-world deployments where Lumana’s system detected events like violence escalation, safety boundary breaches, or loitering, and explain the impact these had on safety or operational response?

Lumana deployments in cities show clear improvements in real-time awareness and response. In a major city in Israel, the system transformed an existing video network into an intelligent early warning layer that detected loitering in restricted zones, crowd anomalies, after-hours intrusions and erratic movements. This led to fewer break-ins, reduced vandalism and faster intervention in high-risk areas.

A U.S. municipality saw similar gains in a historic district that struggled with vandalism, car break-ins, disturbances and loitering. Lumana delivered continuous monitoring and immediate alerts, enabling proactive patrols and faster response. This resulted in safer public spaces and less operational waste for the city.

These examples illustrate how real-time detection of behaviors, such as loitering and boundary breaches, strengthens public safety and streamlines operations.

With AI systems interpreting sensitive physical behavior, what privacy safeguards are embedded in your design and deployment processes?

Lumana technology and design emphasize strong governance and minimal data movement. Processing is performed on the edge, whenever possible, to limit exposure and strengthen privacy. Access is restricted through clear controls and audit trails so teams can follow every workflow. The system keeps video local, sharing only necessary metadata, which supports privacy expectations in regulated environments.

These safeguards ensure that sensitive visual data is handled responsibly, while maintaining the performance required for real-time operations.
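As a rough illustration of keeping video local while sharing only necessary metadata, the sketch below separates a detection record into what stays on the edge device and what may leave it. The `LocalDetection` fields, the `SHAREABLE_FIELDS` allow-list, and the JSON payload shape are hypothetical and are not taken from Lumana's product.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class LocalDetection:
    """A detection as it might exist on an edge device (hypothetical schema)."""

    camera_id: str
    timestamp: float
    label: str
    confidence: float
    frame_jpeg: bytes  # raw image data; intended to stay on the device


# Allow-list of fields permitted to leave the device (assumption for this sketch).
SHAREABLE_FIELDS = ("camera_id", "timestamp", "label", "confidence")


def to_shareable_metadata(det: LocalDetection) -> str:
    """Serialize only allow-listed fields, so pixel data is never included."""
    record = asdict(det)
    return json.dumps({key: record[key] for key in SHAREABLE_FIELDS}, sort_keys=True)


detection = LocalDetection(
    camera_id="cam-01",
    timestamp=1700000000.0,
    label="loitering",
    confidence=0.91,
    frame_jpeg=b"\xff\xd8\xff",  # placeholder bytes, not a real image
)
payload = to_shareable_metadata(detection)
```

Using an explicit allow-list rather than removing known sensitive fields means that any field added to the record later stays local unless someone deliberately marks it as shareable.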

What drives your hybrid‑cloud architecture, and how does it support real‑time processing and continuous learning?

Lumana uses a hybrid approach to combine the performance of on-premise systems with cloud flexibility. Edge processing delivers real-time AI, storage, and video management locally by default. This reduces bandwidth demands and strengthens privacy, while still enabling cloud support when needed for broader coordination or learning across deployments.

This architecture gives users immediate responsiveness while maintaining the ability to scale and improve through continuous adaptation across sites.

How was the self-learning capability architected, and how does it improve over time in multi-site deployments?

The architecture of our self-learning capability is built around scale. The more sites we deploy, the broader our perspective becomes across the landscape of edge devices. Each new environment contributes fresh data, expanding the diversity of scenarios and scenes the system can learn from.

Our continual learning methodology takes advantage of this collective knowledge. As the system refines itself across deployments, the process of online training becomes simpler and more efficient. In practical terms, the wider the deployment, the faster and more accurate the adaptation, resulting in a system that continually improves over time across all sites.

Who do you see as your main competitors or collaborators in this space, and what makes Lumana unique?

Our true uniqueness lies in our people. Behind Lumana is a team of brilliant engineers and innovators, starting with our AI group, supported by our cloud specialists and UX/UI designers, and strengthened by our customer support and sales teams. While AI forms the backbone of our technology, it is our human engine that drives our success. The creativity, professionalism, and dedication of our team are what set Lumana apart, whether in competition or collaboration.

Lumana emphasizes “Think big,” “Customer‑first,” “One team,” and “Master your craft.” How do you operationalize those values in recruitment, product development, and daily life?

We hire innovators who think big, solve problems, collaborate and commit to growth.

Product teams develop scalable AI with an ambitious vision, iterate via customer feedback, foster collaborative work and strive for excellence.

Daily operations use agile methods to enable bold ideas, prioritize customer needs, build team unity and support professional development.

These practices drive innovation, customer success, and impact in AI video security.

Looking ahead five years, how do you envision Lumana’s role evolving in the broader AI ecosystem—and what impact do you hope Physical AI will have on industries like security, manufacturing, or smart cities by then?

Looking five years ahead, we see Lumana’s role evolving as a key enabler of practical Physical AI across industries. While deciphering the fundamental laws of physics remains a scientific enigma, our focus today is on customer value, developing tools that allow organizations to better monitor and respond to the world around them, across any application.

We already maintain long-term collaborations with medical centers, and are exploring expansion into moving platforms such as robotics and transportation. Over time, as we grow and scale, we also intend to invest in more fundamental research questions: can AI uncover deeper patterns in nature, or even help us frame new theories about the laws of physics? Could concepts like the dimensionality of time be illuminated by learning systems?

Our ambition is to drive impact across security, manufacturing, and smart cities, while keeping sight of the larger horizon, pushing the boundaries of what AI can ultimately help us discover.

Thank you for the great interview. Readers who wish to learn more should visit Lumana.