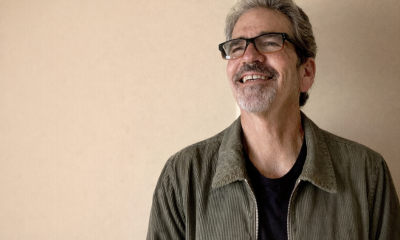

Nikolaos Vasiloglou, VP of Research ML at RelationalAI – Interview Series

Nikolaos Vasiloglou is the VP of Research ML at RelationalAI. He has spent his career building ML software and leading data science projects in Retail, Online Advertising and Security. He is a member of the ICLR/ICML/NeurIPS/UAI/MLconf/

RelationalAI is an enterprise AI company that builds a decision intelligence platform designed to help organizations move beyond data analysis toward automated, high-quality decision-making. Its technology integrates directly with data environments like Snowflake, combining relational databases, knowledge graphs, and advanced reasoning systems to create a “semantic model” of a business—essentially encoding how a company operates, its relationships, and its logic. This allows AI systems (including decision agents like “Rel”) to reason over complex, interconnected data and generate predictive and prescriptive insights, enabling enterprises to make faster, more informed decisions without moving their data outside secure cloud environments.

You’ve had a rare career path spanning academic machine learning, large-scale industry deployments, and leadership roles across companies like Symantec, Aisera, and now RelationalAI. How did those experiences shape your perspective on where machine learning research meets real-world systems today?

I was fortunate enough to engage with different business domains, from retail and security all the way to online advertising. That helped me understand how machine learning and AI fit as a common denominator. We knew from the early 2000s that software is eating up the world and data is eating up decision intelligence, yet few companies, Google included, believed that advanced machine learning algorithms would eventually eat up everything. Back in 2008, NeurIPS attendees were considered nerds and dreamers who did not understand the real world, just people who liked to tinker with toys. It was true up to a point, but I believed this was on a trajectory to change. Unlike others, I didn’t give up on actively participating in the transition of academic research into industry.

Your analysis of NeurIPS 2025 used coding assistants like Claude Code, OpenAI Codex, and NotebookLM to process the entire conference. What surprised you most about using AI systems to analyze AI research itself?

It was astonishingly easy to build software to scrape the data, machine-read it, categorize it into sections, and even summarize and explain it in a particularly intuitive way. GenAI systems are amazing at telling a story but not telling the story. NotebookLM is the queen of analyzing any domain and delivering incredible results. However, you have no control over the story, the graphics, or the emphasis. I learned that the tools are not great at creating PowerPoint slides, so I had to resort to building HTML pages and then converting them to PDFs. The biggest challenge was creating figures: diffusion generation was just too slow, unreliable, and expensive, with no control. Surprisingly, the models are pretty good at creating SVGs programmatically with matplotlib, plotly, and other Python libraries. That technique scaled, but it did require several passes to fix visualization errors. The models will be even better by next year.

One of the strongest themes in your analysis is the shift from training-time scaling to inference-time compute. Why is test-time compute emerging as such a powerful lever for improving model performance?

Scaling laws are our compass. Increasing the model size and pretraining data has reached its limits. The first generation of scaling laws brought us up to GPT-4. They were what helped OpenAI start the GenAI revolution. We soon realized that there was another dimension: allowing the model to generate many tokens before arriving at an answer. This is another way to improve the efficiency of LLMs. Model size and reasoning length are often expressed as System 1 and System 2 thinking modes (Daniel Kahneman). Reasoning traces are another way of increasing model capacity. If you think about it, human breakthroughs started from instincts (high IQ), but the success was always due to long and painful reasoning. We see the same pattern here: smaller models with long thinking windows outperform models that are 100 times bigger. So thinking matters more than IQ in LLMs.

You highlight the transition from monolithic models to agentic systems capable of planning, acting, and verifying their outputs. How close are we to agentic AI becoming a reliable production paradigm rather than a research prototype?

We are making major strides in that direction. The biggest issues are reliability and safety, so that we can trust them to be autonomous. If you look closely at the NeurIPS content, you will see autonomous systems that do research, solve math problems, and solve coding problems, but you don’t see an agentic driverless car, for example. The latest experience with Moltbook (a social network for AI agents) highlighted the issues of autonomous agentic AI. However, discovering new drugs and materials with agentic AI is huge, so let’s celebrate and index on that for the moment.

Efficiency appears to be a major driver of innovation, with smaller models achieving competitive performance through architectural improvements and smarter inference strategies. Are we entering an era where efficiency breakthroughs matter more than raw model size?

As AI scales out to production, engineering becomes more important. Relying on the frontier models is simply not sustainable. It’s great for demos, but companies face the bitter reality when they see the high cost of big models. For the first time, smaller models have become a much more viable solution. There is a silent force changing the status quo of the industry. So far, NVIDIA has had the GPU monopoly and kept the prices high. AMD is making its way into the market with high-quality chips, and that will force the prices to drop. Energy continues to be an issue, but we are seeing some movement in the market. As frontier labs have become more expensive, the solution of smaller models on rented GPUs has become more viable.

Your presentation suggests that the field has moved from single-axis scaling (parameters) to multi-dimensional scaling involving parameters, data, architecture, and inference. How should researchers and practitioners think about this new scaling paradigm?

For most professionals, architecture and parameters are beyond their control. The producers of the models that have the necessary capital will drive innovation. Token inference length will be dictated by the capex spend of their organization. What remains under their control is data. We will see more focus on creating, curating, and debugging data (reasoning traces most of the time). This will be the focus of day-to-day operations. Of course, they will need to follow NeurIPS and the other big conferences to stay up to date with the trends in new architectures.

In your NeurIPS synthesis, you point out that a growing share of research focuses on AI-driven scientific discovery, ranging from biology to climate modeling. Do you see AI-for-science as the next major frontier for machine learning research?

I think it goes beyond academic research. We are looking at the next gold rush. In 1849 the gold rush in California reached its peak. All people had to do was endlessly strain river water to find gold. We know now that many did not find gold, but what we see today is very real. I can see a big wave of two- to three-person startups using language models to find new materials, drugs, and product components. Burning tokens in the smartest way can bring big yields. Coding assistants like Claude Code, OpenAI Codex, and Google Antigravity can remove the moat for SaaS companies, leaving a whole generation of very capable computer scientists free to move into scientific search. If you work for a nonprofit organization like First Principles or Bio[hub], there are opportunities to find new physics laws and theories, or to make other contributions in biology. If you want to generate revenue, you will work on inventing new products based on science, like pharmaceuticals, materials, batteries, etc.

Your work also highlights a growing verification gap, where models achieve strong benchmark scores but fail on simple real-world variations. What does this gap reveal about the current limitations of large language models?

They seem to have an amazing memory, and can generalize well. Benchmarks are good at the beginning of research. Once you cross a threshold, you learn the benchmark and not the problem. We have been very good over the years at resetting benchmarks and making them even harder to push the limits. The problem with benchmarks is that at some point, we start overindexing and eventually cheating. The whole trend here is to make competitors more honest. I personally don’t pay too much attention to the benchmarks after a few leaps happen. You can have a good product that is not even in the top ten of the leaderboard. I have also seen many subpar products that are good at the benchmarks.

The presentation suggests that small language models combined with inference scaling and agentic architectures could enable powerful AI systems that run outside hyperscale data centers. Could this decentralization reshape how AI is deployed across industries?

We saw a big emphasis on edge deployment. We are going to see smarter devices around us for sure. Microsoft has been working for years on the 1-bit LLM, which achieves about 30x compression and could allow even frontier models to run on a single chip in the future. We have been tracking this work for years and the progress is stunning, especially in the wearable domain.

Something that was covered in last year’s NeurIPS was the idea of combining weak edge models with frontier ones. This allows you to adjust your inference power based on your bandwidth in a continuous spectrum. The first Telco Workshop at NeurIPS revealed a trend toward placing GPUs on cell towers, which is interesting because the cell tower is neither a datacenter nor an edge device. That introduces a new layer in the compute hierarchy.

Something else that escaped from the LLMs is distributed model training (and I don’t mean Google training Gemini in remote data centers). There is a very interesting trend that is catching on: independent entities train their own models, and users combine them like Legos to build bigger and more powerful ones. This is a very promising modular architecture. This is how big models are trained. Different teams build specialized models, and in the end, they plug them together like Lego blocks.

After analyzing thousands of NeurIPS papers, where do you think the AI research community is accurately predicting progress, and where might it be missing the most important upcoming shifts?

The research community does not make predictions. Researchers have their own drivers: curiosity, funding, serendipity, and of course, instinct. They can always miss interesting directions, but almost certainly someone will find them and pick them up at some point in the future. That is expected and it is healthy. Executives, investors, and engineers are required to identify the emerging trends so that they can make the right decisions and take the most educated bets. In my five-year analysis window, there were trends that were picked up early, and other signals that were missed. For some of them there is still time to ride the alpha wave.

Data markets are something that I have been watching for years, and they just made the leap this year. The missing component was attribution. We can now identify the training data that contributed to an LLM completion on the fly. That means you can pay dividends. This has been a missed opportunity for the publishers who are in class action lawsuits with frontier model providers. Some of them had to just capitulate to flat licensing agreements, while I believe they have the opportunity to make more sustained revenues from an attribution model.
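As a rough illustration of how attribution could turn into dividends, here is a minimal sketch. It assumes some attribution method has already produced a per-source score for a single model response and simply splits that response's revenue pro rata. The function name, publisher names, scores, and revenue figure are all hypothetical; the interview does not describe any specific payout scheme.

```python
from typing import Dict


def split_dividends(attribution: Dict[str, float], revenue: float) -> Dict[str, float]:
    """Split revenue across data sources in proportion to attribution scores.

    Hypothetical helper: negative scores are treated as zero, and if no
    source has a positive score, nobody is paid.
    """
    clipped = {source: max(score, 0.0) for source, score in attribution.items()}
    total = sum(clipped.values())
    if total == 0:
        return {source: 0.0 for source in attribution}
    return {source: revenue * score / total for source, score in clipped.items()}


# Made-up attribution scores for one response and a made-up revenue amount.
scores = {"publisher_a": 0.6, "publisher_b": 0.3, "publisher_c": 0.1}
payouts = split_dividends(scores, revenue=0.01)

for source, amount in payouts.items():
    print(f"{source}: {amount:.6f}")
```

Summing such per-response payouts over time is what would distinguish this kind of arrangement from a flat licensing fee, since the income tracks how often a publisher's data actually contributes.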

There is a revolution coming in robotics. The world models that NVIDIA and others announced are doing very accurate and scalable physics simulation. So expect AI to be more physical moving forward.

The transformer architecture eventually merged with state space models like RNNs, Mamba, etc., and has produced amazing small LLMs. We now know the exact limitations of the transformer that play a major role in performance, but we are still missing the next step. That will come when the transformer has been proven to be die-hard and pretty resilient. What we don’t know is if it is going to be a human or a transformer designing the new architecture of an LLM! The transformer united all the fragmented architectures in NLP (yes, don’t forget that GenAI started from rudimentary NLP tasks, such as entity classification). It worked for math, and this year it worked for tables, but it hasn’t worked for physics. I counted more than 15 different architectures. So the new architecture that unifies physics might also be the one that will replace the transformer on the journey to AGI.

Thank you for the great interview. Readers who wish to learn more should visit RelationalAI.