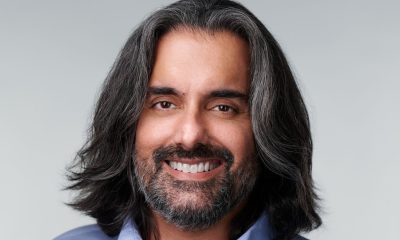

Mark Nicholson, Deloitte’s US Cyber Modernization Leader – Interview Series: A Return Conversation

Mark Nicholson, Deloitte’s US Cyber Modernization Leader, is a Principal at Deloitte with more than two decades of experience at the intersection of cybersecurity, artificial intelligence, and enterprise risk. He leads Cyber AI initiatives and the commercial strategy for Deloitte’s cyber practice, helping large organizations modernize their security frameworks and align cyber investments with evolving risk landscapes. Prior to Deloitte, he co-founded and served as COO of Vigilant, Inc., an information security consulting firm focused on threat intelligence and malicious event monitoring. His earlier career in sales and business development roles across multiple technology companies provided a strong foundation in both the technical and commercial aspects of cybersecurity.

Deloitte is one of the world’s largest professional services firms, offering audit, consulting, tax, and advisory services to organizations across nearly every industry. Its cybersecurity practice focuses on helping enterprises navigate increasingly complex threat environments while enabling digital transformation through technologies such as artificial intelligence. The firm provides services spanning cyber strategy, resilience, risk management, and enterprise security, positioning cybersecurity as both a protective function and a strategic enabler of innovation and growth.

This follows a previous interview that was published in 2025.

You have been involved in cybersecurity since the early days of modern threat monitoring, including co-founding Vigilant and helping bring early Security Information and Event Management (SIEM) and threat-intelligence capabilities to market. How has the evolution from those early monitoring systems to today’s AI-driven cyber defense platforms changed the way organizations detect and respond to threats?

When we first started building monitoring platforms in the early days of SIEM, the core challenge was getting the data in one place and making sense of it. I remember when analysts would print out firewall logs each morning and manually review them to try to find anomalies. Even as SIEM matured, there was a scale issue. Human speed was no match for the massive number of detected events. Despite the use of automation, cyber defenders still had a data correlation and analytics problem, constantly laboring to draft new rules, often in response to monitoring failures.

One of the hopes is that AI will change that dynamic in a fundamental way. Beyond deploying agentic capabilities to automate level 1 security operations, AI promises to help move detection and response from “after the fact” to much closer to “as it’s happening” by leveraging dynamic machine tuning of monitoring algorithms. In some cases, cyber organizations will also get comfortable letting the AI initiate remediation actions.

But the hard part doesn’t go away; it shifts. As systems become more autonomous and complex, trust and observability become a battleground: What is the system doing, why is it doing it, and how do we know it hasn’t been manipulated? The opportunity with AI is enormous, but it also raises the stakes when the environment is operating at machine speed.

You’ve noted that AI is enabling adversaries to automate reconnaissance, generate exploits, and accelerate attack cycles. In practical terms, how much has AI compressed the time between vulnerability discovery and exploitation?

Historically, there was often a window between vulnerability discovery and exploitation. There was certainly urgency, but generally, unless you were hit by a zero-day, there was time to understand the threat, patch, and mitigate before an attacker could deploy exploits at scale. AI has all but eliminated that window.

Adversaries can automate reconnaissance, continuously scan for exposure and use AI-enabled tooling to speed up parts of exploit development and targeting. In many cases, what used to play out over weeks can now compress into hours, and in highly automated scenarios, it can be faster than most security programs are built to handle.

The takeaway is simple: Security teams need automation and AI on the defense side, paired with strong controls, if they want to keep pace.

Security teams are increasingly moving from “human-in-the-loop” to “human-on-the-loop” oversight models. What does that shift look like operationally inside a modern Security Operations Center (SOC), and how should organizations rethink analyst roles as AI takes on more autonomous tasks?

In a traditional SOC, analysts sit at the center of every decision point. Alerts come in, analysts triage them, investigate them, and determine what actions should be taken. That approach worked when the volume of alerts and the pace of attacks were manageable. But in today’s environment, the scale of activity is simply too large for humans to act as a gatekeeper for every decision.

The shift to human-on-the-loop means AI systems can perform many of the routine tasks that analysts previously handled, such as triaging alerts, gathering context, correlating data, and executing certain remediation actions. The human role becomes one of supervision and validation rather than manual execution.

Operationally, that shifts analyst time away from “alert grinding” and toward higher-value work such as threat hunting, detection engineering, adversary simulation and improving the defensive architecture. Humans remain essential, but their role evolves toward oversight, judgment, and strategy rather than acting as the primary processor of security data.
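As an editorial illustration of the human-on-the-loop pattern described above, here is a minimal Python sketch. Nothing in it comes from the interview or from any specific SOC product: the `Alert` type, the `risk_score` field, and the `0.3` threshold are all hypothetical. Automation acts on low-risk alerts and records each one for later human review, while everything else is escalated to an analyst.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    risk_score: float  # hypothetical 0.0-1.0 score from an upstream model


# Hypothetical cut-off: alerts scoring below this are handled automatically.
AUTO_HANDLE_BELOW = 0.3


def triage(alerts, threshold=AUTO_HANDLE_BELOW):
    """Split alerts into auto-handled and escalated lists.

    Auto-handled alerts are returned (not discarded) so a human
    supervisor can audit or reverse the automated decisions later.
    """
    auto_handled, escalated = [], []
    for alert in alerts:
        if alert.risk_score < threshold:
            auto_handled.append(alert)  # automation acts, human reviews after
        else:
            escalated.append(alert)     # human judgment required up front
    return auto_handled, escalated


if __name__ == "__main__":
    auto, esc = triage([Alert("a1", 0.1), Alert("a2", 0.9)])
    print([a.alert_id for a in auto], [a.alert_id for a in esc])
```

In a real deployment the threshold and the set of actions automation may take would be policy decisions rather than constants in code; the sketch only shows where the human moves from gatekeeper to supervisor.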

We are hearing a lot about “Secure AI by Design.” From your perspective, why does that concept need to extend beyond model safety into identity systems, permissions architecture, and orchestration layers?

Many discussions about secure AI focus heavily on the model itself, such as protecting training data, preventing model poisoning, or defending against prompt injection attacks. Those are real issues, but they’re only part of the risk.

In practice, AI systems operate as part of much larger digital ecosystems. They access data, interact with APIs, trigger workflows, and increasingly operate through agents that can act with a degree of autonomy.

When that happens, identity and permissions become the control plane. AI agents are effectively new digital identities inside the enterprise. If those identities aren’t governed properly, they can introduce significant risks.

Secure AI by design therefore needs to extend into identity governance, access controls, orchestration layers, and monitoring systems that track what those agents are doing. Organizations need to treat AI agents much like human users, with defined permissions, auditing, and oversight, otherwise the attack surface expands rapidly.

Many enterprises are layering AI tools on top of legacy security workflows that were designed for human speed. What are the biggest architectural changes organizations need to make to actually take advantage of AI in cyber defense?

A common pattern is to bolt AI onto legacy processes and workflows that were designed for human-driven operations. It’s not a bad first approach, especially as computer vision has become a reality. For example, Deloitte has created an agent that can be trained to replace the human in the identity governance and administration process without throwing out existing purpose-built software solutions that would be challenging to sunset. This can drive dramatic cost savings.

The future benefit, though, is that enterprises will likely begin to rethink security workflows end to end: modernizing the data foundation so security tools can reliably access high-quality, well-structured telemetry, and building orchestration so detection, response and identity functions operate as a coordinated system rather than as disconnected tools.

Identity remains one of the most critical controls. As more automation and AI agents are introduced, the number of non-human identities grows significantly. Managing those identities effectively becomes essential to maintaining control.

AI-native security is ultimately a mix of better data, better orchestration and governance that accounts for both human and machine actors.

As AI systems become more autonomous, the attack surface expands into areas like agent orchestration, API chains, and automated decision pipelines. Which of these emerging surfaces worries you the most?

If I had to pick one area that deserves immediate attention, it’s identity and data access permissions inside agent-driven systems.

As organizations introduce more agentic AI, they’re creating a growing population of autonomous actors operating inside the enterprise. Those agents may have access to data, APIs and workflows that are incredibly powerful, and that makes them an attractive path for an attacker if permissions aren’t designed, monitored and audited rigorously. It’s important to treat every agent like a new employee: name it, scope it, monitor it, and enable it to be disconnected fast if needed.

API chains and automated decision pipelines introduce risk, too, but identity governance is often the foundational control. If you can’t clearly answer who an agent is, what it can touch and what it did, you don’t really control it.
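The "name it, scope it, monitor it, disconnect it" guidance can be made concrete with a small editorial sketch in Python. This is an assumption-laden illustration, not a Deloitte tool or any real identity product's API: `AgentRegistry`, `AgentIdentity`, and the action names are all hypothetical. It shows a deny-by-default check that can answer who an agent is, what it can touch, and what it did.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class AgentIdentity:
    name: str                   # "name it"
    allowed_actions: frozenset  # "scope it"
    active: bool = True         # set to False to "disconnect it fast"


class AgentRegistry:
    """Hypothetical in-memory registry of non-human identities."""

    def __init__(self):
        self._agents = {}
        self.audit_log = []  # "monitor it": (timestamp, agent, action, allowed)

    def register(self, name, allowed_actions):
        agent = AgentIdentity(name, frozenset(allowed_actions))
        self._agents[name] = agent
        return agent

    def authorize(self, name, action):
        # Deny by default: unknown, disconnected, or out-of-scope requests fail.
        agent = self._agents.get(name)
        allowed = (
            agent is not None
            and agent.active
            and action in agent.allowed_actions
        )
        # Every attempt is recorded, including denied ones.
        self.audit_log.append((datetime.now(timezone.utc), name, action, allowed))
        return allowed

    def disconnect(self, name):
        self._agents[name].active = False


if __name__ == "__main__":
    registry = AgentRegistry()
    registry.register("triage-bot", {"read_alerts", "close_ticket"})
    print(registry.authorize("triage-bot", "read_alerts"))
    print(registry.authorize("triage-bot", "delete_user"))
    registry.disconnect("triage-bot")
    print(registry.authorize("triage-bot", "read_alerts"))
```

A production system would back this with an identity provider and tamper-resistant logging; the point of the sketch is only that each of the three questions maps to a field or a log entry that can be inspected.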

From the boardroom perspective, how are executives and directors currently thinking about AI-driven cyber risk, and where do you see the biggest gap between technical reality and board-level understanding?

Boards are increasingly aware that while AI brings enormous opportunity, it can also bring meaningful risk. Most directors understand AI will shape business transformation, and they’re beginning to ask questions about governance, security and resilience.

Where the gap shows up is often speed and complexity. Many board conversations still default to traditional cyber frameworks — which remain important — but they don’t always reflect how quickly AI-driven threats can evolve and scale.

The other disconnect is that “Is our AI secure?” sounds like a single question, but the answer lives across data governance, model integrity, identity management and orchestration across multiple systems. The boards that are closing the gap are pushing for control-based reporting that makes those moving parts visible and testable, and investing time to build director fluency, so oversight keeps pace with the technology.

AI is increasingly being used on both sides of the battlefield. Are we entering a permanent AI-versus-AI cybersecurity arms race, and if so, what advantages do defenders have that attackers may struggle to replicate?

We’re clearly in an era where AI is being used by both attackers and defenders. Adversaries are already applying AI to accelerate reconnaissance, identify vulnerabilities and automate parts of the attack lifecycle. But defenders still have real advantages if they choose to use them.

Defenders have visibility into their own environment, access to internal telemetry and the ability to build layered architectures that attackers must navigate. AI can help defenders analyze enormous volumes of data across networks, endpoints, and identities, giving them the potential to detect anomalous behavior much earlier.

The catch is adoption. If defenders stay stuck in manual workflows while attackers automate, the asymmetry becomes brutal. The arms race is real, and the winners will be the ones who deploy AI with strong governance, not the ones who only pilot it.

In your work advising large enterprises, what are the most common mistakes organizations make when trying to integrate AI into their cybersecurity strategy?

One of the most common mistakes we see is treating AI as a standalone tool instead of an architectural shift. Teams run isolated experiments without upgrading the data foundation, governance model or operating processes needed to sustain impact, leading to a plateau in results.

Another mistake is deploying AI capabilities without fully accounting for the new risks: new identities, new data flows and automated decision pathways that expand the attack surface. If those are bolted on without the right controls in place, AI can add fragility instead of resilience.

Finally, many organizations underestimate the importance of workforce engagement. The practitioners who run security operations every day know where the friction is and what “good” looks like. The strongest transformations bring those teams in early so the technology amplifies their judgement rather than disrupts it.

Looking ahead three to five years, what does the AI-native security operations center look like compared to today’s SOC environments?

Well, it will likely look a lot different, in many ways I can’t predict. In all likelihood, the SOC of the future will operate as a hybrid human and digital workforce. AI systems will handle much of the data processing, correlation, and initial response activity. Agentic systems will help automate workflows across vulnerability management, identity governance, incident response, and continuous control monitoring.

Human analysts remain essential, but the center of gravity shifts: supervising AI systems, validating detection use cases (rather than writing them), investigating complex threats and improving defensive architecture.

The goal isn’t to remove humans but rather to elevate their roles. Instead of spending time triaging alerts and manually assembling data, analysts will focus on the strategic aspects of cybersecurity. The question will be, “How will we train the next generation of security professionals when level 1 and level 2 are completely automated?” Perhaps the answer lies in the dramatic improvement in simulation and training technology that AI can help us develop.

The organizations that successfully build a hybrid workforce, combining human expertise with AI-driven automation, will likely be best positioned to operate at the speed required in the modern threat environment.

Thank you for the great interview. Readers who wish to learn more should visit Deloitte or read our previous interview.