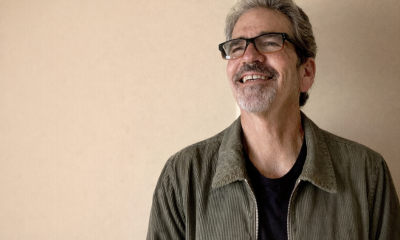

Manuel Romero, Co-Founder and Chief Scientific Officer at Maisa – Interview Series

Manuel Romero, Co-Founder and Chief Scientific Officer at Maisa, is an AI researcher and engineer focused on developing reliable, enterprise-grade artificial intelligence systems. He co-founded Maisa in 2024 to build accountable AI capable of executing complex business processes with transparency and control. Prior to Maisa, Romero held senior AI engineering and machine learning roles at companies including Clibrain and Narrativa, where he specialized in natural language processing and large-scale AI systems. Earlier in his career he worked as a full-stack software engineer and DevOps specialist before transitioning into advanced AI research and development, becoming an active contributor to the open-source AI ecosystem.

Maisa AI develops autonomous “digital workers,” AI agents designed to automate complex enterprise workflows while maintaining traceability, governance, and reliability. The platform allows organizations to build and deploy AI agents using natural language, enabling automation across internal systems and data sources without extensive coding. By focusing on verifiable reasoning and structured execution, Maisa aims to overcome common limitations associated with generative AI systems and help enterprises safely deploy autonomous AI at scale.

You’ve often focused on understanding the deeper “why” behind AI systems. From a technical standpoint, what compelled you to co-found Maisa in 2024, and what gap in enterprise AI architecture did you believe wasn’t being addressed?

The motivation behind founding Maisa came from a realization that most enterprise AI stacks were built around models, not systems.

During the generative AI boom, many companies focused on integrating large language models into existing workflows. However, these systems were often fragile, opaque, and difficult to operate at scale. They lacked:

- deterministic execution where it mattered

- strong observability and traceability

- reproducibility

The gap we saw was the absence of true AI infrastructure for enterprises. Companies were building applications around LLM APIs, but they lacked something equivalent to a computer architecture for knowledge work.

Maisa was created to address that gap by designing an architecture centered around the Knowledge Processing Unit (KPU), a system that enables AI to operate reliably inside real enterprise workflows.

You’ve worked across advanced natural language processing and generative systems before founding Maisa. How did those experiences shape the architectural choices behind the platform?

My experience working in NLP and NLG, particularly around the training and pre-training of language models and later large language models (hundreds of them), made something very clear when I tried to build real systems on top of them. The transformer architecture is extremely powerful, but it comes with at least three foundational limitations that must be addressed to use it reliably in production.

The first is hallucinations. These models generate text probabilistically and can produce outputs that sound correct but are not grounded in verified information.

The second is context limitations. Even with larger context windows, models operate within a bounded token space, which makes it difficult to reason over large or complex bodies of knowledge.

The third is up-to-date information. Pre-trained models represent a snapshot of knowledge at training time, while enterprise environments require systems that can reason over constantly changing information.

Recognizing these constraints shaped many of the architectural decisions behind Maisa. Instead of relying on the model alone, we focused on building a system that provides structured access to knowledge, validation mechanisms, and controlled execution so that AI can operate reliably in real enterprise workflows.

Many enterprises experiment with generative AI but struggle to move beyond pilots. From a systems design perspective, what is the core reason scaling fails in so many organizations?

Many enterprises struggle to move beyond generative AI pilots because most deployments are built as experiments rather than as robust systems. Early prototypes often rely on prompt engineering, lightweight orchestration, and simple retrieval pipelines, which can demonstrate value but do not provide the reliability, observability, or control required for production environments. As organizations try to scale these systems, they encounter issues such as inconsistent outputs, lack of traceability, difficulty integrating with enterprise workflows, and limited governance over how the AI behaves. At its core, the problem is that large language models are probabilistic generators, while enterprise processes require predictable and auditable behavior. Without an architecture that adds structure around reasoning, validation, execution, and monitoring, generative AI systems remain difficult to scale beyond isolated use cases.

Maisa’s Digital Workers are designed to be auditable and structured rather than purely probabilistic. What does that mean in practical terms for enterprises evaluating AI for production use?

When we say Maisa’s Digital Workers are auditable and structured rather than purely probabilistic, we mean that the AI operates within a controlled system where its actions and reasoning can be traced and governed. Instead of allowing a model to freely generate outputs and decisions, the system structures how the AI interacts with data, tools, and workflows. Each step in the process can be logged, inspected, and validated, and actions are executed through defined interfaces rather than directly from model output. For enterprises, this means AI systems can be monitored, audited, and integrated into critical processes with greater confidence. It shifts AI from being a black-box assistant to a system whose behavior can be understood, controlled, and trusted in production environments.
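As an editorial illustration of the idea described above (every step logged, actions executed only through defined interfaces), here is a minimal Python sketch. It is not Maisa's implementation; the `AuditedToolbox` class, the `lookup_invoice` tool, and the JSON request shape are all hypothetical names chosen for this example.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class AuditedToolbox:
    """Hypothetical registry: model output can only trigger tools registered here."""

    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def execute(self, model_output: str) -> Optional[Any]:
        """Parse a proposed action and run it only if it maps to a registered tool."""
        # Every attempt is recorded, including the ones that end up rejected.
        entry: Dict[str, Any] = {"raw_output": model_output, "status": "rejected"}
        self.audit_log.append(entry)
        try:
            request = json.loads(model_output)
            name, args = request["tool"], request["args"]
        except (json.JSONDecodeError, KeyError, TypeError):
            entry["reason"] = "malformed request"
            return None
        if not isinstance(name, str) or not isinstance(args, dict):
            entry["reason"] = "malformed request"
            return None
        if name not in self.tools:
            entry["reason"] = f"unknown tool: {name}"
            return None
        result = self.tools[name](**args)
        entry.update(status="executed", tool=name, args=args, result=result)
        return result


def lookup_invoice(invoice_id: str) -> Dict[str, str]:
    """Hypothetical read-only tool standing in for an enterprise system call."""
    return {"invoice_id": invoice_id, "status": "paid"}


toolbox = AuditedToolbox()
toolbox.register("lookup_invoice", lookup_invoice)

# A request for a registered tool is executed and logged.
ok = toolbox.execute('{"tool": "lookup_invoice", "args": {"invoice_id": "INV-1"}}')
# A request for anything else is rejected, and the rejection is logged too.
blocked = toolbox.execute('{"tool": "delete_records", "args": {}}')
```

In this sketch the model never calls a function directly: it can only emit a request, and the registry decides whether that request corresponds to an allowed action. A production system would also need error handling around the tool call itself, which is omitted here for brevity.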

As the architect of the Knowledge Processing Unit, how does it differ from a typical orchestration layer or workflow engine built around large language models?

The Knowledge Processing Unit differs from typical orchestration layers because it is designed to manage the full lifecycle of AI-driven reasoning rather than simply coordinating prompts and model calls. Most orchestration frameworks act as workflow managers that chain together steps such as retrieval, prompting, and tool execution. The KPU operates at a deeper architectural level by structuring how knowledge is accessed, how reasoning is performed, and how actions are executed within the system. It treats knowledge processing as a core computational layer, integrating memory, validation, and controlled execution so that AI can operate reliably inside complex enterprise workflows rather than just generating responses.

In regulated industries, risk tolerance is low. What specific design decisions did you make to ensure AI outputs remain reliable and do not propagate errors across complex workflows?

In regulated industries, reliability and control are essential, so we designed the system with several safeguards to ensure AI outputs remain trustworthy. One key principle is structured execution, where the AI cannot directly trigger critical actions without passing through controlled interfaces. We also incorporate validation layers that check model outputs against schemas, rules, or secondary mechanisms before they are accepted. In addition, the system maintains full observability, recording reasoning steps, tool interactions, and decisions so they can be traced and audited. Together, these design choices help prevent errors from propagating through workflows and allow organizations to operate AI systems with the level of reliability and governance required in regulated environments.
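The validation layer described above can be sketched in a few lines of Python. This is an editorial illustration under stated assumptions, not Maisa's code: the refund scenario, `REFUND_SCHEMA`, `REFUND_RULES`, the 500 limit, and the `validate` and `accept_or_halt` functions are all hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical schema: field name -> required Python type.
REFUND_SCHEMA: Dict[str, type] = {
    "customer_id": str,
    "amount": float,
    "currency": str,
}

# Hypothetical business rules: (description, predicate over the output).
REFUND_RULES: List[Tuple[str, Callable[[Dict[str, Any]], bool]]] = [
    ("amount must be positive", lambda o: o["amount"] > 0),
    ("amount must not exceed 500", lambda o: o["amount"] <= 500),
    ("currency must be EUR or USD", lambda o: o["currency"] in {"EUR", "USD"}),
]


def validate(
    output: Dict[str, Any],
    schema: Dict[str, type],
    rules: List[Tuple[str, Callable[[Dict[str, Any]], bool]]],
) -> List[str]:
    """Return a list of problems; an empty list means the output is accepted."""
    errors: List[str] = []
    for name, expected in schema.items():
        if name not in output:
            errors.append(f"missing field: {name}")
        elif not isinstance(output[name], expected):
            errors.append(f"wrong type for {name}")
    if errors:
        return errors  # rules assume the shape is valid, so stop here
    for description, predicate in rules:
        if not predicate(output):
            errors.append(f"rule failed: {description}")
    return errors


def accept_or_halt(output: Dict[str, Any]) -> Dict[str, Any]:
    """Gate between the model and the next workflow step."""
    errors = validate(output, REFUND_SCHEMA, REFUND_RULES)
    if errors:
        # Nothing downstream sees the rejected output; the errors are kept for audit.
        return {"accepted": False, "errors": errors}
    return {"accepted": True, "output": output}


good = accept_or_halt({"customer_id": "C1", "amount": 20.0, "currency": "EUR"})
too_large = accept_or_halt({"customer_id": "C1", "amount": 9000.0, "currency": "EUR"})
incomplete = accept_or_halt({"customer_id": "C1", "amount": 20.0})
```

The point of the gate is that a rejected output stops at the step that produced it, so a single bad generation cannot flow into later steps of the workflow.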

What are the most compelling early use cases where you’ve seen Digital Workers move from guided assistance to fully operational AI-driven execution?

Some of the most compelling early use cases appear in knowledge-intensive workflows where processes are well defined but still require significant analysis and decision making. In areas such as compliance review, technical support operations, and internal knowledge management, Digital Workers can move beyond simply assisting humans and begin executing structured tasks end to end. They can retrieve and analyze large volumes of internal information, apply defined procedures, interact with enterprise systems through controlled tools, and produce outputs that feed directly into operational workflows. The key shift happens when the AI is not only generating suggestions but is able to reliably carry out defined actions within a governed system, allowing organizations to automate parts of complex knowledge work rather than just augment it.

As regulatory scrutiny around AI intensifies globally, how do you see core AI infrastructure evolving to meet compliance requirements without limiting innovation?

As regulatory scrutiny around AI increases, I believe we will see a shift away from architectures that simply call model provider APIs and trust the output blindly. Enterprises and regulators will increasingly demand systems where AI behavior is observable, auditable, and governed. This is where architectures like the Knowledge Processing Unit become important. This type of architecture allows organizations to enforce controls, trace decisions, and ensure that AI outputs are reliable before they influence real processes. Over time, I expect these kinds of systems to become the standard foundation for trustworthy AI infrastructure.

You’ve spoken about ethics and accountability alongside your technical work. How do those perspectives influence how you approach building transparent AI systems?

Ethics and accountability, for me, translate directly into system design choices. If AI systems are going to participate in real operational workflows, they cannot function as opaque black boxes whose behavior cannot be inspected or understood. That perspective has strongly influenced how I approach building AI systems. Transparency, traceability, and human oversight need to be built into the architecture from the start. This means ensuring that reasoning steps can be observed, decisions can be audited, and actions are executed through controlled mechanisms. When these principles are embedded at the infrastructure level, AI systems become not only more trustworthy but also easier for organizations to govern responsibly.

Looking ahead, do you believe agentic AI infrastructure will become as foundational as cloud infrastructure did in the previous decade — and what needs to happen technically for that shift to materialize?

I do believe agentic AI infrastructure has the potential to become as foundational as cloud infrastructure has become over the past decade. As organizations look to automate increasingly complex knowledge work, they will need systems that can reliably coordinate reasoning, memory, and execution across many tasks and data sources. However, for that shift to materialize, the underlying architecture must mature beyond simple model integrations. We need infrastructure that provides structured reasoning, reliable access to enterprise knowledge, strong observability, and controlled execution of actions. When those capabilities are built into the core system, agentic AI can evolve from experimental tools into dependable infrastructure that organizations rely on to run critical operations.

Thank you for the great interview. Readers who wish to learn more should visit Maisa AI.