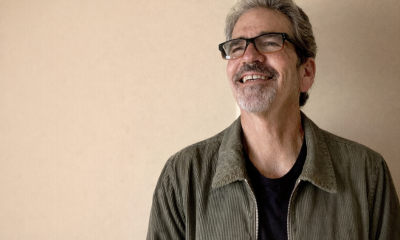

Kevin Paige, CISO at ConductorOne – Interview Series

Kevin Paige, CISO at ConductorOne, is a veteran cybersecurity executive with more than three decades of experience spanning government, enterprise technology, and high-growth startups. Based in the San Francisco Bay Area, he leads identity security strategy for the company while advising organizations on modern workforce security and governance. Paige previously served as CISO at Uptycs, Flexport, and MuleSoft, where he helped build and scale security programs during periods of rapid growth. Earlier in his career, he held security leadership and infrastructure roles at Salesforce and xMatters, and served in both the U.S. Army and U.S. Air Force. In addition to his operational roles, he is active in the cybersecurity startup ecosystem as an advisor and investor.

ConductorOne develops an identity governance and access management platform designed for modern cloud and hybrid environments. Its technology provides unified visibility into identities and permissions across applications, infrastructure, and on-prem systems, enabling organizations to automate access reviews, enforce least-privilege access, and reduce identity-based security risks. By combining identity analytics with automated workflows, the platform helps security teams manage access at scale while improving compliance and operational efficiency.

You’ve had a long career spanning military cyber operations in the U.S. Air Force, enterprise security leadership roles at companies like MuleSoft, Flexport, and Salesforce, and now serve as CISO at ConductorOne. How has your perspective on identity security evolved across these roles, and why do you believe identity has become one of the most critical battlegrounds in modern cybersecurity?

In the Air Force, identity was much simpler — clearance level, need to know, everything behind firewalls, done. At MuleSoft, it became about scale — provisioning thousands of users across hundreds of SaaS apps without creating gaps. At Flexport, the perimeter disappeared entirely and identity was the only control that still worked regardless of where someone was.

Now at ConductorOne, identity is undergoing its most fundamental transformation. It’s no longer just about people — it’s about machines, APIs, service accounts, and AI agents that act autonomously. The tools most organizations use were designed for a world that doesn’t exist anymore.

Identity is the critical battleground because it touches everything. You can have the best endpoint security and network segmentation in the world — if something has the wrong access, none of that matters.

Your upcoming Future of Identity Report found that 95% of enterprises say AI agents are already performing autonomous IT or security tasks. What kinds of tasks are these agents actually carrying out today, and how quickly do you expect their level of autonomy to increase?

What surprised me wasn’t the adoption; it was the speed. Last year 96% planned to deploy agents. This year 95% already have. That’s not a gradual curve. That’s a threshold crossing.

Agents are handling helpdesk workflows, alert triage, access reviews, provisioning, and in some cases automated remediation. The part most people miss: 64% of organizations already allow agents to act autonomously with only post-action review. The agent acts first, a human checks later — if they check at all.

The agents doing helpdesk tasks today will be making security decisions within 12 months. The question isn’t whether autonomy increases; it’s whether governance keeps pace. Right now, it doesn’t.

The report highlights the rise of non-human identities—including application programming interfaces (APIs), bots, and AI agents. Why are these machine identities growing so quickly, and why are many organizations still struggling to manage them effectively?

Three converging forces. Cloud and SaaS adoption means every integration needs its own identity. DevOps generates machine identities at scale — every pipeline, container, and microservice. And AI agents are adding an entirely new category that doesn’t just hold access but uses it to make decisions.

Organizations struggle because the tools weren’t built for this. Traditional IAM assumes a person who logs in and logs out. Non-human identities operate continuously, don’t respond to MFA, often have persistent credentials, and accumulate privileges because nobody reviews their access like they review a human’s.

There’s also an ownership problem. When a developer creates a service account and moves teams, who owns it? Often nobody. Industry research shows 97% of non-human identities (NHIs) have excessive privileges. That’s not a tooling problem; it’s a governance gap.

Nearly half of companies say non-human identities now outnumber human users, yet only a small percentage of enterprises have full visibility into what those identities can access. What risks emerge when organizations lose visibility into these automated identities?

Three layers. First, compromised credentials. NHIs often use long-lived API keys or static tokens that don’t rotate. An attacker with one of those has persistent access that doesn’t trigger the same alarms as a compromised human account.

Second, privilege accumulation. Integrations that started with read access quietly gain write access. Nobody removes old permissions because nobody’s reviewing machine identities.
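Editor's illustration: the privilege accumulation described above can be surfaced by comparing what each machine identity has been granted with what it has actually used. The following is a minimal sketch, not something from the interview or from ConductorOne's product; the identity names, permission strings, and the `drift_report` helper are all hypothetical.

```python
def drift_report(identities: dict[str, dict[str, set[str]]]) -> dict[str, list[str]]:
    """Map each identity to the permissions it holds but has not used.

    `identities` is a hypothetical inventory of the form
    {name: {"granted": {...}, "used": {...}}}.
    """
    report: dict[str, list[str]] = {}
    for name, perms in identities.items():
        # Set difference: permissions granted but never observed in use.
        unused = perms["granted"] - perms["used"]
        if unused:
            report[name] = sorted(unused)
    return report


# Hypothetical inventory: one integration that quietly gained write access.
inventory = {
    "crm-sync": {"granted": {"db:read", "db:write"}, "used": {"db:read"}},
    "ci-runner": {"granted": {"repo:read"}, "used": {"repo:read"}},
}
report = drift_report(inventory)
print(report)
```

In this sketch only `crm-sync` appears in the report, because its `db:write` grant was never exercised. Where the usage data would come from (audit logs, API gateway records) is left open.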

Third — and this is emerging fast — AI agents amplify both of those risks. A compromised service account with database read access is bad. An AI agent with that same access that can autonomously summarize, share, and act on what it reads is exponentially worse.

Our report found NHI visibility is actually declining — from 30% to 22% year over year. Organizations are discovering the problem faster than they can solve it.

Many companies see AI as a productivity accelerator, but your research suggests it can also quietly expand the attack surface. How does the adoption of AI tools and agents create new identity-related security risks?

The most immediate risk is accidental over-permissioning. Teams deploy an AI agent for one workflow but give it broader access than needed because scoping permissions for machines is harder than for people. The agent doesn’t just see support tickets — it sees the entire customer database.

Then there’s prompt injection. Agents processing external inputs can be manipulated to take unintended actions. If the agent has broad access, a crafted prompt turns a helpful assistant into a data exfiltration tool.

Third is shadow AI. Gartner reports that over 50% of enterprise AI usage is unsanctioned. Each unauthorized connection creates new identities and attack surfaces that the security team can’t see.

I’ve seen it firsthand — someone gave an agent access to internal systems, and within days, someone prompted it into revealing the CEO’s compensation and vacation schedule. The agent worked as designed. The failure was the access model.

Identity and access management has traditionally focused on employees logging into systems. How must identity governance evolve now that autonomous software agents are increasingly interacting with infrastructure and making decisions?

The fundamental shift is from periodic to continuous. Traditional governance operates on quarterly reviews and annual recertifications. AI agents operate 24/7, make thousands of decisions between review cycles, and can change behavior based on a model update. By the time a quarterly review catches an over-privileged agent, the damage is done.

Three things need to change. Governance must be continuous — evaluating access in real time, not on a schedule. It must be policy-driven rather than role-driven — dynamic policies scoped to specific tasks, not static role assignments. And it must be fully auditable — every agent action logged and traceable back to who authorized it.

Identity governance has to operate at machine speed to govern machine-speed actors. That mismatch is where the risk lives.

ConductorOne describes its platform as helping organizations secure human and machine identities together. From a technical standpoint, what changes are required in identity infrastructure to properly secure AI agents operating inside enterprise environments?

The biggest change is unification. Most organizations manage human identities through their identity provider (IdP) and machine identities through a patchwork of secrets managers and manual processes. AI agents fall in the gap between those worlds.

Three things need to happen. Every AI agent needs a first-class identity — not a shared service account, not a developer’s credentials, but a dedicated identity with its own lifecycle and audit trail. Those identities need just-in-time, just-enough access — minimum permissions for a specific task, revoked when the task is done. And organizations need continuous monitoring of what agents actually do with their access, not just what they’re allowed to do.
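Editor's illustration: the "just-in-time, just-enough" idea above can be sketched as a grant that names one agent and one permission and expires on its own. This is a simplified model under stated assumptions, not ConductorOne's implementation; `Grant`, `GrantStore`, and the agent and permission names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class Grant:
    agent_id: str
    permission: str
    expires_at: datetime


class GrantStore:
    """In-memory store of time-boxed, single-permission grants (hypothetical)."""

    def __init__(self) -> None:
        self._grants: list[Grant] = []

    def grant(self, agent_id: str, permission: str, ttl: timedelta,
              now: Optional[datetime] = None) -> Grant:
        # Each grant covers exactly one permission and carries its own expiry.
        now = now or datetime.now(timezone.utc)
        g = Grant(agent_id, permission, now + ttl)
        self._grants.append(g)
        return g

    def revoke(self, agent_id: str, permission: str) -> None:
        # Called when the task finishes, without waiting for the expiry.
        self._grants = [
            g for g in self._grants
            if not (g.agent_id == agent_id and g.permission == permission)
        ]

    def is_allowed(self, agent_id: str, permission: str,
                   now: Optional[datetime] = None) -> bool:
        # Access exists only while an unexpired matching grant exists.
        now = now or datetime.now(timezone.utc)
        return any(
            g.agent_id == agent_id and g.permission == permission
            and now < g.expires_at
            for g in self._grants
        )


store = GrantStore()
t0 = datetime(2026, 1, 1, tzinfo=timezone.utc)
store.grant("helpdesk-agent", "tickets:read", timedelta(minutes=15), now=t0)
```

With this store, `helpdesk-agent` can read tickets for fifteen minutes after `t0` and nothing else. The design choice is that there is no standing access: permission is absent by default and exists only while a grant is live.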

At ConductorOne, we govern human and non-human identities through a single control plane. That’s where the industry is headed — 45% already use IAM tools for NHI governance, another 45% plan to within 12 months. Human-only identity governance is ending.

Some organizations attempt to manage AI risk by restricting or banning AI tools entirely. Based on what you’re seeing across enterprises, is that approach realistic, or does it simply drive AI usage into unmanaged and less visible environments?

It drives it underground. Every time. I’ve seen this with every technology wave — BYOD, cloud, SaaS. When security says no, people don’t stop. They just stop telling security.

Gartner reports shadow AI accounts for over 50% of enterprise AI usage. Banning AI doesn’t eliminate risk — it eliminates visibility. And you can’t secure what you can’t see.

The better approach: make the secure path the easy path. If governed AI adoption is fast and simple, people will use it. If it takes six weeks to get approved, they’ll spin up a personal account on their lunch break.

Banning AI in 2026 is like banning cloud in 2016. You’re not preventing risk — you’re ensuring you won’t see it coming.

As AI systems begin acting more independently, the line between automation and authority becomes blurred. How should organizations think about governance, approvals, and oversight when AI agents are capable of taking operational actions?

Think delegation, not automation. When you delegate to a person, you define scope, hold them accountable, and review their work. Same framework applies to agents.

That means tiered autonomy. Low-risk, repeatable tasks — password resets, ticket routing — run autonomously with logging. Medium-risk actions — security config changes, elevated access — require human approval or real-time notification. High-risk actions — sensitive data, privileged access, irreversible changes — require explicit authorization before the agent acts.
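Editor's illustration: the three tiers above can be expressed as a small policy function. This is a sketch under assumptions rather than a description of any product; the action names, the `ACTION_RISK` table, and the returned decision strings are hypothetical. For the medium tier the sketch takes the "real-time notification" option when no approval is present.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical mapping from agent actions to risk tiers.
ACTION_RISK = {
    "password_reset": Risk.LOW,
    "ticket_routing": Risk.LOW,
    "security_config_change": Risk.MEDIUM,
    "grant_elevated_access": Risk.MEDIUM,
    "read_sensitive_data": Risk.HIGH,
    "delete_resource": Risk.HIGH,
}


def decide(action: str, human_approved: bool = False) -> str:
    """Return 'run', 'run_and_notify', or 'blocked' for an agent action.

    Every outcome is assumed to be logged by the caller.
    """
    # Actions missing from the table fall into the strictest tier.
    risk = ACTION_RISK.get(action, Risk.HIGH)
    if risk is Risk.LOW or human_approved:
        return "run"
    if risk is Risk.MEDIUM:
        # No approval on record: proceed, but alert a human in real time.
        return "run_and_notify"
    # High risk without explicit authorization: the agent must not act.
    return "blocked"


print(decide("password_reset"))
print(decide("security_config_change"))
print(decide("delete_resource"))
```

Defaulting unknown actions to the high-risk tier means a newly added agent capability is blocked until someone classifies it.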

Every agent also needs a human owner accountable for what it does. Without that chain, agents operate in a governance vacuum where nobody answers for the consequences.

Our report found that only 19% of organizations have continuous policy-based enforcement for agents. That means 81% are relying on static permissions and hope. That’s not governance.

Looking ahead, what are the most important steps security leaders should take over the next 12–24 months to prepare their identity and access frameworks for a world where AI agents function as full digital identities within the enterprise?

Five priorities.

First, get visibility. Most organizations don’t know how many non-human identities they have. You can’t govern what you can’t see.

Second, treat every AI agent like a user. Dedicated identity, scoped permissions, credential rotation, access reviews. If you wouldn’t give a human standing admin access to everything, don’t give it to an agent.

Third, move from periodic to continuous governance. Quarterly reviews can’t keep pace with agents that change behavior in seconds.

Fourth, build your policy framework now — before you have hundreds of agents. Define autonomy boundaries, approval requirements, and ownership while it’s still manageable.

Fifth, unify governance across human and non-human identities. Separate systems create gaps.

The winners won’t be organizations that deployed the most AI. They’ll be the ones who built identity governance capable of operating at machine speed.

Thank you for the great interview. Readers who wish to learn more should visit ConductorOne.