InfraPartners and Emerald AI Introduce “Flex-Ready Data Centers” to Address AI’s Power Bottleneck

The rapid expansion of artificial intelligence is pushing power infrastructure to its limits. Training and running large-scale AI models requires massive compute clusters that consume enormous amounts of electricity, and that demand often grows faster than local power grids can expand. In response, InfraPartners and Emerald AI have announced a partnership aimed at fundamentally rethinking how AI data centers interact with the power grid. Details are set out in an accompanying white paper.

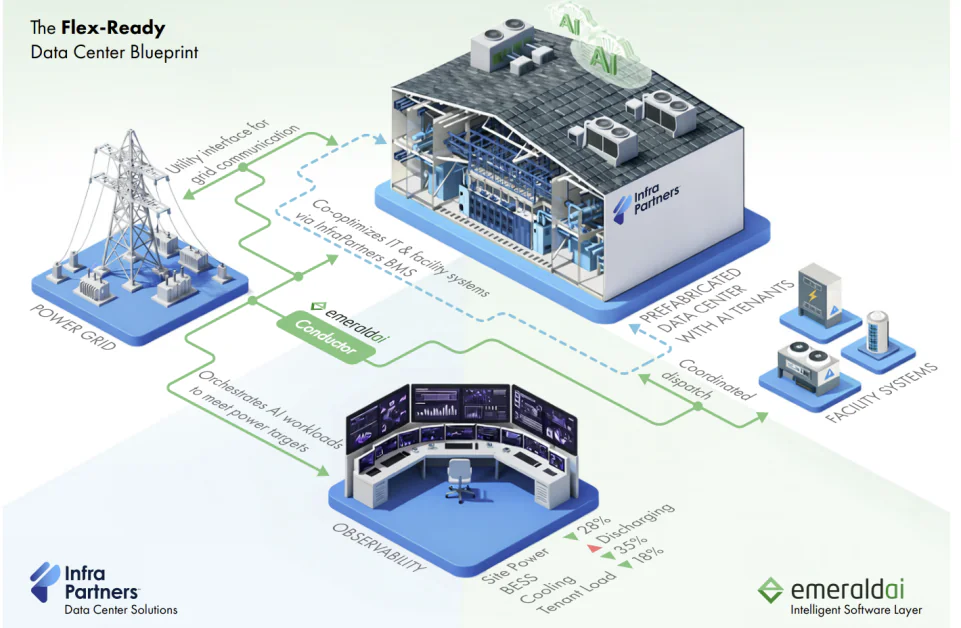

The companies are introducing a new architecture called Flex-Ready Data Centers, combining InfraPartners’ modular infrastructure design with Emerald AI’s orchestration software. The goal is to turn data centers from static electricity consumers into dynamic grid participants capable of adjusting their power demand in real time.

Rather than treating energy consumption as fixed, the approach allows facilities to align computing workloads with grid conditions, renewable energy availability, and electricity pricing—unlocking additional capacity and improving overall grid stability.

Why AI Infrastructure Is Creating a Power Crisis

AI workloads are among the fastest-growing sources of electricity demand globally. The white paper released alongside the partnership highlights how data centers have become one of the most geographically concentrated and rapidly expanding loads on modern power systems.

At the same time, grid expansion is lagging. Transmission buildouts, labor shortages, and supply chain constraints mean that new facilities can face multi-year waits to secure grid interconnections. Meanwhile, the increasing share of renewable energy—particularly wind and solar—introduces volatility in supply, making real-time balancing of generation and demand more complex.

This dynamic creates a structural mismatch: AI infrastructure needs more power, but the grid cannot expand quickly enough to provide it.

The white paper argues that the solution may not simply be building more grid capacity. Instead, it proposes that data centers themselves can become flexible resources that help stabilize power systems, absorbing excess renewable energy or reducing demand during peak grid stress.

The Flex-Ready Data Center Blueprint

The collaboration integrates two core technologies:

- InfraPartners’ Upgradeable Data Center™ architecture, designed to support successive generations of AI hardware without major redesigns.

- Emerald AI’s Emerald Conductor platform, a software layer that orchestrates computing workloads, facility systems, and grid signals.

Together, they form what the companies call a Flex-Ready Data Center, designed from the outset to participate in energy markets and grid management.

According to the white paper, this integration enables data centers to support AI growth while simultaneously improving grid reliability, reducing emissions, and unlocking new economic value through grid programs.

Rather than retrofitting flexibility later, the architecture integrates energy awareness directly into facility operations from day one.

The Three Dimensions of Data Center Flexibility

Central to the design is a framework that divides data center flexibility into three interacting dimensions: temporal, spatial, and resource flexibility.

Temporal Flexibility

Temporal flexibility focuses on shifting power demand over time. Instead of running workloads continuously at full intensity, compute jobs can be scheduled based on electricity availability, pricing, or grid stress levels.

Techniques include:

- deferring non-urgent AI training workloads

- dynamically throttling IT power consumption

- adjusting cooling system operation

- coordinating with on-site energy storage

This approach allows data centers to reduce load during peak grid demand while increasing consumption when renewable energy generation is abundant.
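
The deferral technique listed above can be sketched as a simple per-hour decision. This is only an illustration, not how Emerald Conductor works: the `Job` type, the `grid_stress` value between 0 and 1, and the `stress_threshold` default are all hypothetical names and numbers chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float
    deferrable: bool  # assumption: each job is tagged as deferrable or not


def plan_hour(jobs, grid_stress, stress_threshold=0.8):
    """Split jobs into those to run now and those to defer for this hour.

    grid_stress is a hypothetical 0..1 indicator; deferrable jobs are
    held back whenever it reaches the threshold.
    """
    run_now, deferred = [], []
    for job in jobs:
        if job.deferrable and grid_stress >= stress_threshold:
            deferred.append(job)
        else:
            run_now.append(job)
    return run_now, deferred


jobs = [
    Job("inference-api", 400.0, deferrable=False),
    Job("nightly-training", 900.0, deferrable=True),
]

# During a stressed hour only the non-deferrable job keeps running.
run_now, deferred = plan_hour(jobs, grid_stress=0.9)
load_kw = sum(job.power_kw for job in run_now)
```

In this toy case the facility's IT load drops from 1,300 kW to 400 kW for the stressed hour, and the training job resumes once `grid_stress` falls below the threshold.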

Spatial Flexibility

Spatial flexibility extends the concept beyond a single facility.

Large AI operators often run multiple data centers across regions. By intelligently moving workloads between sites, operators can route compute tasks to locations where power is cheaper, cleaner, or more readily available.

In practice, this means AI workloads could follow renewable energy generation or avoid regions experiencing grid congestion.
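
As a rough sketch of the routing idea, a scheduler might score each site on price and carbon intensity and skip sites without enough spare capacity. The `Site` fields, the `carbon_weight` trade-off, and the sample figures below are assumptions made up for illustration; the white paper does not specify a scoring rule.

```python
from dataclasses import dataclass


@dataclass
class Site:
    name: str
    price_usd_per_mwh: float
    carbon_g_per_kwh: float
    headroom_mw: float  # spare power capacity currently available


def pick_site(sites, demand_mw, carbon_weight=0.1):
    """Return the lowest-scoring site that can host the workload, or None.

    Score = price + carbon_weight * carbon intensity (a hypothetical blend).
    """
    candidates = [s for s in sites if s.headroom_mw >= demand_mw]
    if not candidates:
        return None
    return min(
        candidates,
        key=lambda s: s.price_usd_per_mwh + carbon_weight * s.carbon_g_per_kwh,
    )


sites = [
    Site("A", price_usd_per_mwh=60.0, carbon_g_per_kwh=400.0, headroom_mw=50.0),
    Site("B", price_usd_per_mwh=75.0, carbon_g_per_kwh=100.0, headroom_mw=30.0),
    Site("C", price_usd_per_mwh=40.0, carbon_g_per_kwh=50.0, headroom_mw=2.0),
]

chosen = pick_site(sites, demand_mw=10.0)
```

Site C is cheapest and cleanest but lacks headroom for a 10 MW job, so the choice falls between A (score 100) and B (score 85), and B wins. A real system would also have to weigh data locality and network cost, which this sketch ignores.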

Resource Flexibility

The third dimension involves coordinating all controllable infrastructure within a data center campus.

This includes:

- GPUs and IT systems

- cooling infrastructure

- uninterruptible power supplies (UPS)

- battery storage systems

- on-site power generation

When orchestrated together, these assets allow a facility to adjust power consumption while maintaining reliability and service-level agreements.
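
One way to picture this coordination is a priority-ordered allocation: given a requested load reduction, draw on each asset in turn until the target is met. The asset names, their limits, and the ordering (storage first, compute last) are hypothetical choices for this sketch, not a description of the companies' control logic.

```python
def allocate_reduction(target_kw, assets):
    """Spread a load reduction across assets in priority order.

    assets is a list of (name, max_reduction_kw) pairs, highest priority
    first. Returns the per-asset plan and any shortfall left unmet.
    """
    plan = {}
    remaining = target_kw
    for name, max_kw in assets:
        take = min(max_kw, remaining)
        if take > 0:
            plan[name] = take
        remaining -= take
    return plan, remaining


# Assumed ordering: use stored energy and cooling slack before touching compute.
assets = [
    ("battery_discharge", 500),
    ("cooling_setpoint", 200),
    ("gpu_power_cap", 800),
]

plan, shortfall = allocate_reduction(900, assets)
```

Here a 900 kW request is met with 500 kW from batteries, 200 kW from cooling, and only 200 kW from GPU power capping. Putting compute last in the list is one way to protect service-level agreements.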

Emerald Conductor: Orchestrating Compute, Facilities, and the Grid

The orchestration layer that enables these capabilities is Emerald AI’s Emerald Conductor platform.

The system operates as a hierarchical control platform spanning three operational layers:

1. IT Layer

At the compute layer, Emerald Conductor integrates with workload schedulers and system telemetry to adjust computing intensity. Predictive models identify workloads that can be deferred or reshaped without violating service-level agreements.

AI training, batch processing, and other non-latency-critical workloads become candidates for flexible scheduling.

2. Facility Layer

The platform also connects directly to the data center’s building management system (BMS), ingesting telemetry from cooling infrastructure, power distribution equipment, UPS systems, and batteries.

This allows the software to dynamically adjust operating parameters, dispatch stored energy, or coordinate cooling strategies while respecting safety margins and redundancy requirements.

3. Grid Interface Layer

At the external level, Emerald Conductor connects data centers to grid signals, including demand response events, wholesale electricity prices, and reliability alerts.

These signals are translated into coordinated actions across IT and facility infrastructure, enabling automated participation in power market programs and grid stabilization services.
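
The translation step might look something like the dispatcher below, which maps each of the three signal types named above to a coarse action. The `GridSignal` shape, the action names, and the `price_ceiling` default are invented for illustration; the source does not describe Emerald Conductor's actual interfaces.

```python
from dataclasses import dataclass


@dataclass
class GridSignal:
    kind: str     # "demand_response", "price", or "reliability_alert"
    value: float  # requested kW cut, price in USD/MWh, or severity 0..1


def respond(signal, price_ceiling=150.0):
    """Map a grid signal to a coarse action for the IT and facility layers."""
    if signal.kind == "demand_response":
        return {"action": "reduce_load", "target_kw": signal.value}
    if signal.kind == "price":
        if signal.value > price_ceiling:
            return {"action": "defer_flexible_jobs"}
        return {"action": "run_normally"}
    if signal.kind == "reliability_alert":
        return {"action": "reduce_load_and_discharge_storage",
                "severity": signal.value}
    raise ValueError(f"unknown signal kind: {signal.kind}")


dr_action = respond(GridSignal("demand_response", 900.0))
price_action = respond(GridSignal("price", 210.0))
```

In a fuller design, the returned action would feed into the workload and facility controls rather than being acted on directly.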

InfraPartners’ Upgradeable Data Center Architecture

While Emerald AI provides the orchestration layer, InfraPartners focuses on how the physical infrastructure is designed and built.

Its Upgradeable Data Center™ architecture is intended to address a different but related problem: the rapid evolution of AI hardware.

Modern GPUs and accelerators often require new power densities, cooling technologies, and infrastructure layouts every few years. Traditional data centers struggle to adapt, leading to costly retrofits or stranded capacity.

InfraPartners’ design introduces fungible power and cooling architectures capable of supporting multiple generations of hardware without major redesigns.

The company also relies heavily on factory-based construction, with approximately 80% of the facility assembled and tested offsite before deployment. This manufacturing-first model reduces construction timelines while improving quality control and repeatability.

Facilities can scale incrementally, from 5-megawatt deployments to gigawatt-scale campuses, allowing operators to expand capacity as AI demand grows.

Integrating Flexibility at the Infrastructure Level

The partnership links the two systems through shared telemetry and control.

InfraPartners’ building management systems stream real-time operational data—including power, cooling, and energy system metrics—into Emerald Conductor’s optimization engine.

The orchestration platform then determines how workloads, infrastructure systems, and energy assets should respond to grid conditions.

Because the infrastructure is designed with flexibility in mind, the system can safely adjust operations without compromising reliability or uptime requirements.

This level of integration also allows data centers to participate in grid programs such as:

- demand response

- wholesale energy markets

- grid reliability services

These programs create new revenue streams while helping utilities manage electricity demand.

A New Model for AI Infrastructure

As AI continues to expand across industries, energy availability is becoming one of the defining constraints on the technology’s growth.

The Flex-Ready Data Center model suggests a different approach to scaling compute infrastructure. Instead of treating data centers as passive loads on the grid, the design positions them as active participants in energy systems, capable of coordinating computing demand with power availability.

If widely adopted, the architecture could help accelerate AI deployment while easing the strain on electrical infrastructure—an increasingly critical challenge as AI models grow larger and more energy-intensive.