
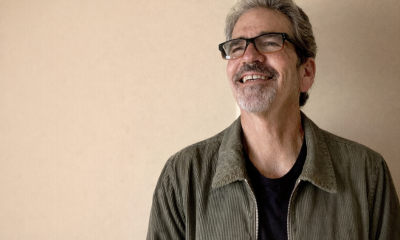

Husnain Bajwa, SVP of Product at SEON – Interview Series

Husnain Bajwa, SVP of Product at SEON, leads product strategy for the company’s risk and fraud prevention solutions, bringing more than two decades of experience in networking, cybersecurity, and enterprise technology. Based in Austin, he previously served as VP of Product Strategy and VP of Global Sales Engineering at Beyond Identity, and earlier spent seven years as a Distinguished Engineer at Aruba Networks. Bajwa has also held leadership roles at Ericsson and BelAir Networks and co-founded CardioAssure. His career combines deep technical expertise with product leadership across telecommunications, security, and digital infrastructure.

SEON is a fraud prevention and anti-money laundering platform that helps businesses detect and stop digital fraud across the customer lifecycle. The company’s technology analyzes hundreds of data signals—including email, device, IP, and behavioral patterns—to identify suspicious activity in real time. Its platform combines machine learning risk scoring with customizable rules to help organizations reduce fraud, automate compliance processes, and protect legitimate users across industries such as fintech, e-commerce, and online gaming.

How has accessible generative AI changed romance and dating app scams in the last 12 months?

Generative AI has become a force multiplier for fraud. It has dramatically lowered the barrier to entry for sophisticated romance fraud, giving attackers access to the same high-powered tools legitimate businesses use.

According to SEON’s 2026 Fraud & AML Leaders Report, 98% of organizations now use AI in fraud and compliance workflows. The same reality applies to criminals. AI is no longer experimental. It is now baseline. What used to require patience, social engineering skill and language fluency can now be automated.

Fraudsters are assembling fully synthetic identities from the ground up, complete with aged email accounts, believable photos, plausible life narratives and supporting digital signals. Each signal may appear legitimate in isolation, but together they form an identity engineered explicitly for deception.

Language is no longer a reliable tell, with AI eliminating grammar mistakes and tonal inconsistencies. It enables emotionally coherent conversations that adapt dynamically to a victim’s responses. One actor can now manage hundreds of personas simultaneously.

The result is fraud that appears legitimate from start to finish. Romance scams have shifted from isolated bad actors to coordinated, AI-assisted operations running continuously at machine speed.

What are three subtle red flags AI-generated profiles exhibit?

The first red flag is what I’d call digital footprint imbalance. The profile story is rich and detailed, but the long-term digital exhaust doesn’t match that depth. AI can generate narrative instantly, but it struggles to replicate years of consistent, cross-channel behavioral history.

The second red flag appears when you zoom out and look at groups of accounts. Individually, accounts look convincing. But when viewed collectively, statistical similarities emerge, such as shared device fingerprints, similar registration timing and infrastructure overlap. Fraud increasingly hides in pattern similarity rather than obvious mistakes.

Third is suspiciously perfect behavior. Human activity contains randomness. People log in irregularly, shift tone mid-conversation and behave unpredictably. AI-generated personas often introduce mechanical precision, such as evenly paced messaging, optimized usernames and controlled activity depth. Detection today depends less on spotting sloppy errors and more on identifying behavior that is too consistent to be organic.
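As a rough illustration of the "too consistent to be organic" idea, the sketch below measures how evenly spaced an account's messages are using the coefficient of variation of the gaps between them. The function names and the 0.1 threshold are hypothetical choices made for this example, not anything described by SEON, and a real system would combine many more signals.

```python
from statistics import mean, pstdev


def pacing_regularity(timestamps):
    """Return the coefficient of variation (std / mean) of the gaps between
    consecutive message timestamps (in seconds), or None if there is too
    little data. Values near 0 mean almost perfectly even pacing."""
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap <= 0:
        return None
    return pstdev(gaps) / average_gap


def looks_mechanical(timestamps, cv_threshold=0.1):
    """Flag pacing that is suspiciously regular.

    cv_threshold is an illustrative assumption, not a calibrated value."""
    cv = pacing_regularity(timestamps)
    return cv is not None and cv < cv_threshold


# Evenly paced messages, one every 30 seconds
bot_like = [0, 30, 60, 90, 120]
# Irregular, human-looking gaps
human_like = [0, 5, 140, 150, 900]

print(looks_mechanical(bot_like))
print(looks_mechanical(human_like))
```

A low score on its own proves nothing, since scheduled or templated messages from legitimate users can also be regular. It is one weak signal to weigh alongside the others mentioned here.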

Beyond identity verification, what signals should platforms monitor?

Static, one-time verification at sign-up is no longer sufficient. Fraudsters routinely pass basic checks and then operate unchecked.

Modern protection requires continuous, adaptive verification that responds to risk as it emerges. That means analyzing digital footprint depth, device intelligence and behavioral telemetry in real time, both before and during user interaction.

Technical signals like persistent device fingerprinting, proxy detection, infrastructure reuse and automation markers are critical. But equally important are behavioral signals: conversation pacing, rapid trust acceleration, attempts to move interactions off-platform and cross-account messaging patterns.

The goal is context-aware decisioning, especially before emotional investment occurs. Instead of asking “Does this identity exist?”, platforms need to ask, “Is this entity behaving like a legitimate human over time?”

How does AI-driven fraud challenge traditional teams and what does real-time mitigation look like?

AI-enabled fraud is scalable, adaptive and continuous. It compresses attack cycles and overwhelms manual review capacity. Tactics evolve mid-engagement, which makes static rule sets obsolete.

Traditional moderation models are reactive. They review cases after harm begins. But if you don’t have real-time decisioning baked into your stack, you’re playing defense after the damage is done.

Real-time mitigation means scoring risk in under a second at onboarding and first interaction. It means using graph-based analysis to uncover coordinated networks rather than evaluating accounts in isolation. It means automated suppression of high-risk clusters before messaging privileges are granted.
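One simple way to picture graph-based analysis is to treat accounts as nodes and link any two that share a signal such as a device fingerprint or IP address, then look at the connected groups. The following is a minimal sketch under those assumptions, using a union-find structure. The signal format, account IDs, and function name are hypothetical and do not represent SEON's implementation.

```python
from collections import defaultdict


def find_clusters(accounts):
    """Group accounts that are linked, directly or transitively, by any
    shared signal.

    accounts: dict mapping account_id -> set of signal strings,
              e.g. {"device:d1", "ip:203.0.113.7"} (hypothetical format).
    Returns a list of sets, one per cluster containing 2+ accounts."""
    parent = {account: account for account in accounts}

    def find(node):
        # Walk up to the root, shortening the path as we go
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    # Link each account to the first account seen with the same signal
    first_seen = {}
    for account, signals in accounts.items():
        for signal in signals:
            if signal in first_seen:
                union(first_seen[signal], account)
            else:
                first_seen[signal] = account

    clusters = defaultdict(set)
    for account in accounts:
        clusters[find(account)].add(account)
    return [members for members in clusters.values() if len(members) > 1]


accounts = {
    "a": {"device:d1", "ip:203.0.113.7"},
    "b": {"device:d1"},
    "c": {"ip:198.51.100.2"},
    "d": {"ip:198.51.100.2", "device:d9"},
    "e": {"device:d5"},
}
print(sorted(sorted(cluster) for cluster in find_clusters(accounts)))
```

In practice, shared signals are noisy (households, offices, and mobile carriers share IP addresses), so links would normally be weighted and clusters scored rather than treated as fraudulent by default.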

Fraud is simultaneously increasing and specializing. The battleground has shifted from obvious abuse to precision identity manipulation. Defense must move from reactive moderation to live orchestration.

What is the biggest misconception users have?

Many users assume that if a profile exists, it has been deeply verified. They equate longevity with legitimacy and authentic-looking photos with authenticity.

In reality, verification is layered and probabilistic. Platforms reduce risk, but they cannot guarantee authenticity at all times. Passing a check at one moment does not mean continuous legitimacy.

Safety is risk-managed, not guaranteed. The presence of a profile means an account met certain thresholds, not that it represents a fully authenticated human identity indefinitely.

What single product capability would most raise the barrier for scammers?

The most impactful capability would be a real-time fraud command center embedded directly into onboarding that can assess entity-level risk across device, email, phone and network signals before messaging begins. It can detect cluster-level patterns early, not after victims report harm. It can apply progressive, context-aware friction instead of blanket verification.

The most effective protection happens before the first message is sent. Once emotional engagement begins, the defensive burden increases significantly.

How can platforms balance fraud detection and user experience?

The supposed tradeoff between frictionless and secure is poor system design, not an immutable law.

Smart fraud prevention applies dynamic friction, escalating verification only when behavioral or technical signals justify it. Low-risk users move seamlessly. Elevated risk triggers deeper scrutiny.
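Dynamic friction can be sketched as a mapping from a risk score to an escalating response. The example below assumes a score between 0 and 1 produced elsewhere. The tier names and the 0.3 and 0.7 cut-offs are invented for illustration and would need tuning against real safety and conversion data.

```python
def friction_for(risk_score, low=0.3, high=0.7):
    """Map a risk score in [0, 1] to a friction tier.

    The thresholds and tier names are illustrative assumptions."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score < low:
        return "allow"  # low risk: no added friction
    if risk_score < high:
        return "step_up"  # elevated risk: request extra verification
    return "hold_for_review"  # high risk: pause messaging pending review


for score in (0.05, 0.45, 0.9):
    print(score, friction_for(score))
```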

When platforms measure safety and conversion together, fraud prevention improves user experience. Removing bad actors early increases trust and reduces the emotional and financial fallout that drives user churn.

Precision replaces blanket friction.

What role should external fraud prevention platforms play?

No single dating platform sees the full threat landscape. Fraud networks operate across industries, platforms and geographies.

According to SEON’s report, 85% of organizations plan to add or replace a fraud vendor in 2026. This signals that leaders recognize the need for stronger, more integrated intelligence.

External fraud prevention platforms provide cross-industry signal enrichment and broader pattern recognition. They detect infrastructure reuse, emerging adversarial AI tactics and coordinated networks that may not be visible within one ecosystem.

Fraud intelligence strengthens when visibility expands. As AI enables attackers to coordinate at scale, defense must become equally networked and adaptive.

What new AI capabilities will scammers leverage in 12 to 18 months?

We’re moving into an era of adversarial AI: systems designed specifically to deceive other AI systems.

SEON’s report notes that 25% of leaders now cite criminals’ advancing use of AI and obfuscation techniques as a top external threat. That concern is well-founded.

We can expect more deepfake liveness-bypass attempts, real-time voice cloning for off-platform escalation and AI-driven behavioral mimicry trained on legitimate user data. Fraudsters may increasingly “age” personas over time to simulate long-term history and gradually build trust before activation.

The defining challenge will be proving humanness through nuanced behavioral, biometric and environmental signals rather than static credentials.

What advice would you give users who suspect an AI-assisted scammer?

Slow the interaction. AI-assisted scams rely on emotional acceleration and urgency.

Be skeptical of fast-moving relationships, especially if financial hardship narratives appear. Never send money outside the platform. Request unscripted, real-time video engagement and independently verify images through reverse searches.

If something feels off, report it immediately. Early reporting allows platforms to detect clusters and dismantle coordinated networks before more users are harmed.

Romance should feel organic. When behavior feels engineered, it often is.

Thank you for the great interview. Readers who wish to learn more should visit SEON.