Generative AI Can Change the World – But Only if Data Infrastructure Keeps Up

Despite the buzz surrounding Generative AI, most industry experts have yet to address a significant question: Is there an infrastructural platform that can support this technology long-term, and if so, will it be sufficiently sustainable to support the radical innovations Generative AI promises?

Generative AI tools have already built quite a reputation for producing well-synthesized text at the click of a button, completing tasks that might otherwise require hours, days, weeks, or months of manual work.

That’s all well and good, but absent the proper infrastructure, these tools simply don’t have the scalability to truly change the world. Generative AI’s astronomical operating costs, soon to exceed $76 billion, are already a testament to this fact, but there are additional factors at play.

Enterprises need to focus on creating and connecting the right tools to leverage Generative AI sustainably, and they must invest in a centralized data infrastructure that makes all relevant data seamlessly accessible to their LLM without dedicated pipelines. With strategic implementation of the proper tools, they will be able to deliver the business value they seek despite the capacity limitations data centers currently impose. Only then will the AI revolution truly advance.

A Familiar Pattern

According to a new report from Capgemini Research Institute, 74% of executives believe the benefits of generative AI outweigh the concerns it raises. Such a consensus has already prompted high adoption rates among enterprises: about 70% of Asia-Pacific organizations have either expressed their intentions to invest in these technologies or have begun exploring practical use cases.

But the world has been down this road before. Take the internet, for example, which gradually attracted more and more attention before surpassing expectations via a myriad of remarkable applications. But despite its impressive capabilities, it only really took off once its applications began to deliver tangible value to businesses at scale.

Looking Beyond ChatGPT

AI is falling into a similar cycle. Businesses have rapidly bought into the technology, with an estimated 93% of enterprises already engaged in several AI/ML use cases. But despite the high adoption rate, many enterprises still struggle with deployment, a telltale sign of incompatible data infrastructure.

With the proper infrastructure, companies can look beyond the surface level of Generative AI’s tantalizing capabilities and leverage its true potential to transform their business landscapes.

Indeed, Generative AI can help write a brief quickly and, in most cases, quite effectively, but its potential goes far beyond that, from drug discovery to healthcare treatments to supply chain optimization. None of these breakthroughs is possible, however, if the data centers that support and drive AI applications aren’t robust enough to manage their workloads.

Overcoming the Barrier to Scalability

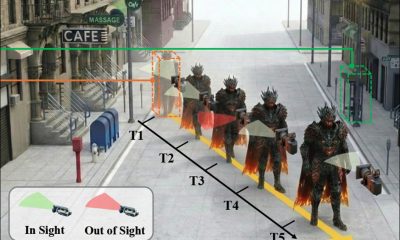

Generative AI has yet to deliver significant value to businesses because it lacks scalability. Data centers have capacity limitations: their infrastructure was not originally built to support the massive exploration, orchestration, and model tuning that Large Language Models (LLMs) require to run multiple training cycles efficiently.

Reaping value from Generative AI therefore depends on how well a business leverages its own data, which can be improved by developing a robust data architecture. This can be achieved by connecting structured and unstructured data sources to LLMs or by increasing the throughput of existing hardware.

It is essential that companies looking to train their LLM on organizational data first consolidate that data in a unified manner. Otherwise, data left in silos will likely bias what the LLM learns.

A Support System

Generative AI didn’t appear out of thin air. It has been in the works for quite some time, and its usage and potential will only grow in the decades to come. But for now, its business applications are hitting a wall because they cannot scale.

The reality is that these various tools are only as strong as the data processing infrastructure that supports them. It is therefore critical that business leaders leverage platforms that can process the petabytes of data these tools need to tangibly deliver on the significant value they promise.