
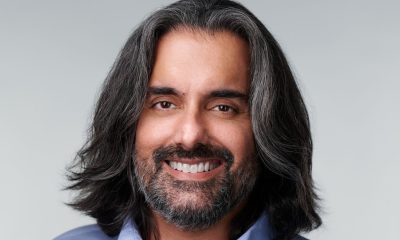

Dr. Anthony Lee, President and CEO of Westcliff University – Interview Series

Dr. Anthony M. Lee is the President of Westcliff University in Irvine, California, one of the fastest-growing universities in the US, going from a few hundred students to over 3,000 students over the last decade. Dr. Lee has served in leadership positions across numerous universities and schools in the U.S. and internationally. With expertise in innovative hybrid and online programs, Dr. Lee has launched new programs integrating technology with traditional campus-based classes for an enhanced learning experience. He has successfully led schools through the accreditation process at the university and K-12 levels. His experience has proven him a leader in many critical areas within higher education, including marketing, finance, operations, compliance, and accreditation.

How has your definition of workforce readiness evolved in the age of generative AI and automation?

Workforce readiness once meant technical competence and the ability to contribute immediately within a defined role. In the age of generative AI, that definition is no longer sufficient because the nature of work itself is changing.

Today, readiness means operating effectively in environments where intelligence is distributed between humans and machines, where tools evolve continuously, and where execution is increasingly augmented. The competitive advantage is no longer just knowing how to perform a task, but knowing how to frame problems, supervise AI outputs, interrogate assumptions, and apply judgment under real-world constraints.

We are witnessing a shift from task execution to cognitive oversight. Entry-level work is being redefined. Routine production can be automated; responsibility cannot. That places greater emphasis on critical thinking, ethical reasoning, contextual awareness, and the ability to translate AI-generated outputs into accountable decisions.

At Westcliff, we view workforce readiness as the integration of domain expertise, AI fluency, and disciplined human judgment. Students must understand how generative systems function, where they create leverage, and where they introduce risk. More importantly, they must learn how to collaborate with these systems without outsourcing intellectual responsibility.

Our applied learning model is designed around structured engagement with emerging technologies in authentic contexts. The goal is not tool proficiency alone, but adaptive capability: the ability to remain effective as tools change.

In this era, the professionals who stand out will not be those who compete with AI, but those who can direct it thoughtfully, question it rigorously, and remain accountable for the outcomes it influences. That is what workforce readiness now requires.

What does it mean for an institution to take AI seriously at the curriculum and institutional level rather than treating it as an add-on?

AI is not another instructional tool. It alters the architecture of education itself.

Taking AI seriously begins with redesign. Every discipline must examine how generative systems are reshaping professional expectations and define what AI-augmented proficiency requires. Assessment must measure reasoning and applied competence, not just output.

But seriousness also demands institutional coherence. AI literacy cannot sit in isolated courses. Faculty development, governance, assessment strategy, and vendor oversight must align around a clear principle that technology should strengthen academic quality, not dilute it.

At that point, AI becomes part of the university’s intellectual infrastructure. It shapes how learning is structured, how mastery is demonstrated, and how institutional credibility is maintained in a workforce where human judgment and machine capability operate side by side. That is the thinking with which we are moving forward at Westcliff.

How do you balance foundational human skills with teaching students to work effectively alongside AI?

I do not see this as a tradeoff. If anything, generative AI increases the premium on foundational human capabilities.

When routine cognitive production becomes inexpensive, the differentiator becomes higher-order reasoning. Critical thinking means framing better questions and interrogating machine-generated outputs. Judgment means knowing when to rely on a system and when to pause. Ethics becomes practical: it is about understanding the consequences of deploying intelligent systems at scale.

Students must learn to think in AI-present environments. Prompting is a starting point, but cognitive supervision is the deeper skill. Graduates must articulate and defend conclusions informed, but not determined, by intelligent systems.

At Westcliff, we treat AI fluency as an amplifier of foundational skills. Students engage with intelligent tools while remaining accountable for clarity, credibility, and consequence. In the long term, the professionals who stand out will combine intellectual depth with technological agility. AI delivers speed and scale. Humans must provide discernment, responsibility, and leadership.

What parts of higher education are most vulnerable to disruption by AI, and which areas are more resilient than people expect?

The most vulnerable areas are built on information transfer and standardized output. If a model relies primarily on lecture delivery or assessments that measure production, AI will challenge it quickly.

Higher education is more resilient where it centers structured development, mentorship, applied practice, teamwork, clinical learning, and professional identity formation. AI can support these experiences, but it cannot replace growth built through feedback and accountability.

The dividing line is design. Institutions that see themselves primarily as content providers are exposed. Those that operate as intentional learning systems, focused on reasoning, applied competence, and measurable growth, remain highly relevant.

AI is raising the standard for what a credential must represent.

How should universities rethink degree design as AI compresses time-to-skill and lowers barriers to entry?

As AI compresses the time required to gain technical skills, universities must clarify where a degree adds value.

If tools accelerate task proficiency, higher education must focus on what cannot be automated: conceptual depth, structured problem-solving, ethical judgment, and sustained performance under constraint.

Degree design should be modular and applied, but coherent. The goal is cumulative competence, integrating technical fluency with reasoning and adaptability.

AI lowers barriers to entry. Universities must respond by raising the threshold for integration, judgment, and demonstrated capability. A degree must signal durable competence, not just exposure.

At Westcliff, we view degrees as dynamic ecosystems rather than fixed linear progressions. Stackable credentials and laddered pathways allow learners to reengage as industries shift, while maintaining coherence and rigor. Flexibility matters, but intellectual progression matters more.

What role do you see AI playing in assessment and credentialing?

AI is forcing higher education to confront a reality that predates the technology itself. Many traditional assessments were measuring production rather than understanding. If a task can now be automated with minimal effort, we must question whether it ever captured reasoning.

Assessment must shift toward authentic demonstration of competence: oral defense, scenario-based problem solving, iterative refinement, applied simulations, and portfolio evidence that makes thinking visible. In an AI-enabled environment, what matters is not the artifact alone, but the cognitive process behind it.

At Westcliff, we have moved toward oral and iterative assessment models that require students to articulate and defend their reasoning in real time. AI can assist by generating adaptive prompts and supporting scalable feedback, but design responsibility remains human. Institutions must ensure they are evaluating learner cognition, not model output.

Credentialing is increasingly about verified capability rather than accumulated seat time. Employers want evidence of applied competence and accountable judgment. Institutions that redesign assessment proactively will strengthen the credibility of their degrees.

When implemented thoughtfully, AI exposes where academic standards must be elevated.

How do you approach governance and oversight when introducing AI into teaching and administration?

We approach AI as we would any system that materially affects academic quality and institutional credibility. Governance begins with clarity of purpose, defined boundaries, and clear lines of accountability.

Effective oversight ensures innovation strengthens trust rather than erodes it. That means rigorous data privacy and security standards, bias and fairness review when systems influence student outcomes, and transparency about what tools are used and how data is handled.

It also requires updating integrity frameworks to reflect present realities rather than legacy assumptions. Faculty development is critical so adoption is intentional and aligned with learning goals, rather than being fragmented or reactive.

When we introduced AI-supported assessment models at Westcliff, oversight was embedded in the design. Faculty monitored implementation, evaluated impact, and refined processes based on evidence. Vendor evaluation is continuous, not episodic. Institutions must regularly reassess alignment with academic standards and student protection.

The objective is responsible innovation.

What misconceptions do educators most commonly have about AI, and what mindset shift is required?

Two misconceptions surface repeatedly. The first is that AI is primarily a cheating problem. The second is that it is simply a productivity tool. It is neither in isolation. More fundamentally, it reflects a shift in how knowledge is generated, evaluated, and applied.

When institutions frame AI only as an integrity issue, they default to restriction. When they frame it only as efficiency, they understate its implications. The more important question is how to design learning so that reasoning remains visible and accountable in an AI-enabled environment.

The necessary mindset shift is from control to design. This allows faculty to become even more central as architects of learning experiences that emphasize judgment, synthesis, and applied competence.

With institutional support, shared practices, and coherent governance, educators can move to a position where AI raises the bar for thoughtful pedagogy and makes academic design more important than ever.

How should universities prepare students for a labor market where roles are fluid and AI redefines entry-level work?

Entry-level roles have traditionally served as training grounds for routine production tasks. In many fields, AI now absorbs much of that early-stage work. As a result, graduates are expected to operate at a higher level sooner.

Preparation must therefore focus on capabilities that are difficult to automate: problem framing, communication, decision-making, and responsible supervision of intelligent systems. Students need to be comfortable working in ambiguity, where tools evolve and roles are fluid.

Universities should emphasize adaptable competencies that travel across contexts rather than narrow specialization alone. Structured, project-based learning with real constraints is essential. When students solve complex problems and defend their recommendations, they practice the kind of accountable thinking that employers increasingly value.

AI literacy must become baseline. Not everyone needs to build models, but everyone must understand how intelligent systems influence decisions within their profession.

The labor market will continue to shift. Higher education’s responsibility is not to predict every role, but to graduate individuals who can move across roles, learn continuously, and contribute meaningfully in environments shaped by accelerating technology.

What would signal that a university has successfully adapted to AI-driven change rather than simply survived?

Success will be measured by outcomes and not by public positioning or vanity metrics.

A university has truly adapted when its curriculum and assessment models are redesigned around real-world competence rather than content delivery. Faculty can demonstrate thoughtful learning design rather than being laggard adopters, and students can clearly articulate and defend their reasoning in ways that cannot be generated on demand.

Institutional adaptation is also visible in governance. AI use is transparent, oversight is embedded, and we can show that trust in academic outcomes strengthens rather than erodes.

The most reliable indicator, however, is external validation. Employers recognize that graduates demonstrate seasoned judgment, applied capability, sound decision-making, and fluency in AI-enabled environments.

Adaptation means agility without compromise. The institution evolves as technology advances while maintaining rigorous standards and ethical clarity. At Westcliff, we believe we are producing a learning model that remains credible, resilient, and valuable as AI continues to reshape the landscape.

Thank you for the great interview. Readers who wish to learn more should visit Westcliff University.