Your AI’s Personality Matters as Much as Its IQ — and Will Make or Break Enterprise Deployment

Most companies still choose AI models based on benchmarks. In practice, that’s rarely what determines whether those systems actually work.

So far, most conversations around large language models in enterprise environments have been dominated by benchmarks. Teams gravitate toward measurable performance: which model is the most intelligent, the strongest at coding, the most accurate at summarization, or the best at mathematical reasoning.

But as teams move beyond experimentation and into deployment at scale, other factors that many executives overlook will quickly prove just as crucial to a business’s success.

The Hireability of AI

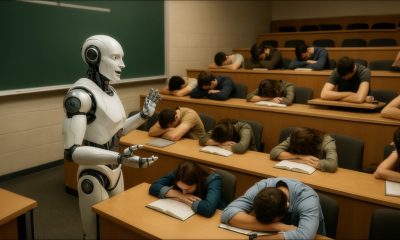

Raw intelligence and analytical capability are obviously important, but the most underrated variable in enterprise AI deployment is personality. Personality, in the context of large language models, refers to the consistent voice, tone, and behavior that a model conveys across interactions. It’s what makes an AI feel coherent and reliable.

When implementing AI, businesses need to take the same approach they would when hiring a human employee: assess not just how well a model can complete a task, but also its attitude toward the job, how it communicates, and how it fits into the larger workflow.

A model’s ability to maintain consistency, respond appropriately, and handle nuance in different contexts can have a significant impact on business outcomes. A technically brilliant AI that responds slowly, drifts in tone, or mishandles nuanced interactions will frustrate users, decrease engagement, and ultimately undermine both the deployment and the business relying on it.

This is especially important in areas such as customer support, political outreach, or internal communications, where subtle shifts in tone or phrasing between responses can cause confusion, erode trust, and reduce overall engagement. As with humans, there is no single dream model that outperforms the competition in every category. Some models are better at analytical tasks like coding or math, while others are far better at conversational writing and summarizing meetings.

But a challenge for teams building on top of these systems is that these characteristics are not fixed.

A Moving Target

The AI landscape is evolving faster than most organizations can keep up with. New versions are released frequently, and performance characteristics can shift from one update to the next. Google’s Gemini model series is a recent example.

Gemini 2.0 Pro was released in February 2025 and was immediately touted as Google’s flagship model for developers and enterprises working on coding and complex prompts.

It came with what was, at the time, the largest context window Google had ever offered, at two million tokens. That let it analyze vast amounts of information at once, while also using tools like Google Search and executing code.

For teams building systems that needed to process large volumes of data quickly and accurately, it looked like the clear choice. But within just weeks, Google released Gemini 2.5 Pro, which immediately topped the leaderboards and soared past its predecessor with improvements in coding, math, and science.

The model that had just been the best option on the market was superseded less than two months after launch. But early adopters noticed that the changes weren’t just incremental or analytical: Gemini’s entire personality had shifted. Multiple developers went as far as to say the AI was acting as if it had been “lobotomized” after its update.

They complained that the AI seemed to be “getting dumber”: it produced slower responses and less coherent outputs, and it handled prompts inconsistently that it previously had no issues with. Tasks that once felt fluid suddenly became rigid.

And this is where a company’s strategy around AI deployment begins to fundamentally shift.

Beyond the Benchmarks

On paper, Gemini 2.5 Pro should have been the clear winner with its vast improvements in capability and safety.

But in practice, those changes altered how reliable the model was, how it behaved, and how it responded to prompts. Teams that had just spent heavily, in both money and hours, building around the earlier model were sent back to square one if the new model’s behavior didn’t fit their existing pipeline.

Even small shifts in behavior can disrupt systems built around consistency and predictability. This creates a real operational risk if a business is tightly coupled to a single model, since any update can introduce immediate instability to teams relying on these systems.

To combat this, many forward-thinking companies have begun implementing a multi-model strategy, routing different tasks to the models best suited for them rather than relying on one model to handle everything.

This approach not only improves performance on each task, but also reduces the risk associated with AI implementation: if one model degrades after an update, backups are available, so it doesn’t bring down the entire system that relies on it.
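As a rough illustration of that routing-with-backup idea, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `ModelRouter` class, the task names, and the stub functions standing in for real model clients are hypothetical, and a production system would call actual vendor SDKs and likely use richer failure criteria than a raised exception.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-in for a model client: takes a prompt, returns text.
ModelFn = Callable[[str], str]


class ModelRouter:
    """Routes each task type to an ordered list of models, falling back on failure."""

    def __init__(self, routes: Dict[str, List[str]], models: Dict[str, ModelFn]):
        self.routes = routes  # task type -> model names, in order of preference
        self.models = models  # model name -> callable client

    def run(self, task_type: str, prompt: str) -> Tuple[str, str]:
        """Return (model_name, reply) from the first model that succeeds."""
        errors = []
        for name in self.routes[task_type]:
            try:
                return name, self.models[name](prompt)
            except Exception as exc:  # any failure triggers the next backup
                errors.append(f"{name}: {exc}")
        raise RuntimeError("all models failed: " + "; ".join(errors))


# Stub models for the demo (assumptions, not real APIs).
def coder_model(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def writer_model(prompt: str) -> str:
    return f"[writer] {prompt}"


def general_model(prompt: str) -> str:
    return f"[general] {prompt}"


router = ModelRouter(
    routes={
        "coding": ["coder", "general"],
        "summarization": ["writer", "general"],
    },
    models={"coder": coder_model, "writer": writer_model, "general": general_model},
)

used, reply = router.run("coding", "Write a sort function")
print(used, "->", reply)
```

In this demo the preferred coding model fails, so the request falls through to the general-purpose backup instead of taking the whole workflow down.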

Simply put, an AI’s personality and reliability are just as important as its raw intelligence when the model is put to work in a real environment. This represents a fundamental shift in thinking: businesses are no longer just buying a “smarter tool,” but building and managing an entire digital infrastructure.

For companies to not only survive but thrive in today’s business landscape, teams need to establish pipelines that can swap models in and out depending on the task, and constantly monitor how updates affect both performance and interaction quality.
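One way to picture that kind of monitoring is a small regression check that replays a fixed set of prompts after every model update and flags replies that have drifted from a stored baseline. The sketch below is an assumption-laden illustration, not an established method: `drift_report`, the 0.6 threshold, and the stub models are hypothetical, and plain string similarity from Python's standard `difflib` module is only a crude proxy for changes in tone or quality.

```python
import difflib
from typing import Callable, Dict, List

# Hypothetical stand-in for a model client: takes a prompt, returns text.
ModelFn = Callable[[str], str]


def drift_report(
    baseline: Dict[str, str], candidate: ModelFn, threshold: float = 0.6
) -> List[dict]:
    """Compare a candidate model's replies with stored baseline replies.

    A reply is flagged when its character-level similarity to the baseline
    falls below `threshold` (an arbitrary value chosen for this sketch).
    """
    report = []
    for prompt, expected in baseline.items():
        reply = candidate(prompt)
        similarity = difflib.SequenceMatcher(None, expected, reply).ratio()
        report.append(
            {"prompt": prompt, "similarity": similarity, "flagged": similarity < threshold}
        )
    return report


# Baseline replies captured from the model version the pipeline was built on.
baseline = {"Greet a customer": "Hi there! How can I help you today?"}


# Stub models for the demo (assumptions, not real APIs).
def unchanged_model(prompt: str) -> str:
    return "Hi there! How can I help you today?"


def updated_model(prompt: str) -> str:
    return "State your issue."


stable = drift_report(baseline, unchanged_model)
changed = drift_report(baseline, updated_model)
print("stable flagged:", stable[0]["flagged"])
print("changed flagged:", changed[0]["flagged"])
```

A real deployment would likely track latency and human or model-graded quality scores as well, but even a simple check like this can surface a change in voice before users notice it.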

Ultimately, the models themselves will continue to evolve at a pace that’s hard to match. But businesses that plan for change, build redundancy, and treat AI as both a tool and a teammate will be the ones that turn these rapid shifts into a competitive advantage.