Google’s Multimodal AI Gemini – A Technical Deep Dive

Sundar Pichai, Google’s CEO, and Demis Hassabis of Google DeepMind introduced Gemini in December 2023. This new large language model is being integrated across Google’s products, bringing improvements to services and tools used by millions.

Gemini, Google’s advanced multimodal AI, is the product of the combined DeepMind and Brain AI labs. It builds on its predecessors and promises a more interconnected and intelligent suite of applications.

The announcement of Google Gemini, coming shortly after the debut of Bard, Duet AI, and the PaLM 2 LLM, signals Google’s intention not only to compete but to lead in the AI revolution.

Contrary to any notions of an AI winter, the launch of Gemini suggests a thriving AI spring, teeming with potential and growth. As we reflect on a year since the emergence of ChatGPT, which itself was a groundbreaking moment for AI, Google’s move indicates that the industry’s expansion is far from over; in fact, it may just be picking up pace.

What is Gemini?

Google’s Gemini model is capable of processing diverse data types such as text, images, audio, and video. It comes in three versions—Ultra, Pro, and Nano—each tailored for specific applications, from complex reasoning to on-device use. Ultra excels in multifaceted tasks and will be available on Bard Advanced, while Pro offers a balance of performance and resource efficiency, already integrated into Bard for text prompts. Nano, optimized for on-device deployment, comes in two sizes and features hardware optimizations like 4-bit quantization for offline use in devices like the Pixel 8 Pro.
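
To make the 4-bit quantization mentioned above concrete, here is a minimal sketch of symmetric per-tensor quantization. The scheme Gemini Nano actually uses is not described in this article, so the function names `quantize_4bit` and `dequantize_4bit` and the scaling rule are illustrative assumptions, not Google’s implementation.

```python
def quantize_4bit(weights):
    """Map floats to signed 4-bit integer codes in [-8, 7] (illustrative scheme)."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; 7 is the largest positive 4-bit code.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize_4bit(codes, scale):
    """Recover approximate float weights from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [3.5, -1.2, 0.3, 2.0]
codes, scale = quantize_4bit(weights)
restored = dequantize_4bit(codes, scale)
print(codes, scale, restored)
```

Each weight is stored as a 4-bit code plus one shared scale, and in this scheme the rounding error per weight is at most half the scale. That trade of precision for memory is what makes a model small enough to run offline on a phone.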

Gemini’s architecture stands out for its native multimodal output: it uses discrete image tokens for image generation and integrates audio features from the Universal Speech Model for nuanced audio understanding. Its ability to handle video as a sequence of image frames, interleaved with text or audio inputs, exemplifies its multimodal design.
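
One way to picture video handled as a sequence of frames mixed with other inputs is a single time-ordered list of segments. The sketch below is only an illustration of that idea: the `Segment` type, its fields, and the timestamp-based ordering are assumptions made here, not details of Gemini’s input pipeline.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    modality: str     # "image", "text", or "audio"
    timestamp: float  # position in the clip, in seconds (hypothetical field)
    payload: str      # stand-in for the tokens or features of this segment


def interleave(frames, others):
    """Merge video frames with text/audio segments into one time-ordered sequence."""
    return sorted(frames + others, key=lambda s: s.timestamp)


frames = [Segment("image", t, f"frame@{t}s") for t in (0.0, 1.0, 2.0)]
others = [
    Segment("text", 0.5, "What is the person holding?"),
    Segment("audio", 1.5, "speech features"),
]
sequence = interleave(frames, others)
print([s.modality for s in sequence])
```

The model then sees one sequence in which frames, text, and audio appear in the order they occurred, rather than three separate inputs.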

Accessing Gemini

Gemini 1.0 is rolling out across Google’s ecosystem, including Bard, which now runs on Gemini Pro. Google is also bringing Gemini to Search, Ads, and Duet AI, with the aim of faster, more accurate responses.

For those keen to build with Gemini, Google AI Studio and Vertex AI on Google Cloud offer access to Gemini Pro, with the latter providing greater customization and security features.
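
For developers, a request to Gemini Pro through the API behind Google AI Studio might look roughly like the sketch below. The endpoint URL, the `gemini-pro` model name, and the request body shape are assumptions based on the API as it was documented at launch and may have changed, so check the current documentation before relying on them. The helper `build_request` is a hypothetical name, and the code only builds the request without sending it.

```python
import json
import urllib.request

# Assumed endpoint and model name; verify against the current API documentation.
API_URL = "https://generativelanguage.googleapis.com/v1beta/models/gemini-pro:generateContent"


def build_request(prompt, api_key):
    """Build (but do not send) a text-only generateContent request."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        f"{API_URL}?key={api_key}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_request("Explain multimodal models in one sentence.", "YOUR_API_KEY")
print(request.get_method(), request.full_url)
# To send it, call urllib.request.urlopen(request) with a real key in place of the placeholder.
```

An API key can be created in Google AI Studio. Vertex AI uses Google Cloud authentication instead, which is not shown here.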

To experience the enhanced capabilities of Bard powered by Gemini Pro, users can take the following straightforward steps:

- Navigate to Bard: Open your preferred web browser and go to the Bard website.

- Secure Login: Sign in with your Google account to access the service securely.

- Interactive Chat: You can now chat with Bard, which uses Gemini Pro’s advanced features for text prompts.

Power of Multimodality

At its core, Gemini uses a transformer-based architecture, similar to those employed in successful NLP models like GPT-3. What sets Gemini apart is its ability to process and integrate information from multiple modalities, including text, images, audio, video, and code. This can be understood in terms of cross-modal attention, a mechanism that lets the model learn relationships and dependencies between different types of data.

Here’s a breakdown of Gemini’s key components:

- Multimodal Encoder: This module processes the input data from each modality (e.g., text, image) independently, extracting relevant features and generating individual representations.

- Cross-modal Attention Network: This network is the heart of Gemini. It allows the model to learn relationships and dependencies between the different representations, enabling them to “talk” to each other and enrich their understanding.

- Multimodal Decoder: This module utilizes the enriched representations generated by the cross-modal attention network to perform various tasks, such as image captioning, text-to-image generation, and code generation.
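
The cross-modal attention step in the list above can be sketched as ordinary scaled dot-product attention in which the queries come from one modality and the keys and values from another. This is a generic, toy-sized illustration in plain Python, not Gemini’s actual implementation, which the source does not describe at this level. The vectors and the function names are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(queries, keys, values):
    """Each query (e.g. a text token) attends over keys/values (e.g. image patches)."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors from the other modality.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


text_tokens = [[1.0, 0.0], [0.0, 1.0]]                # toy text representations
image_patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # toy image representations
enriched = cross_attention(text_tokens, image_patches, image_patches)
print(enriched)
```

Each text token ends up with a representation built from the image patches it matches best, which is the sense in which the modalities “talk” to each other.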

The Gemini model isn’t just about understanding text or images. It’s about integrating different kinds of information in a way that’s much closer to how we, as humans, perceive the world. For instance, Gemini can look at a sequence of images and determine the logical or spatial order of objects within them. It can also analyze the design features of objects to make judgments, such as which of two cars has a more aerodynamic shape.

But Gemini’s talents go beyond just visual understanding. It can turn a set of instructions into code, creating practical tools like a countdown timer that not only functions as directed but also includes creative elements, such as motivational emojis, to enhance user interaction. This indicates an ability to handle tasks that require a blend of creativity and functionality—skills that are often considered distinctly human.

Gemini’s capabilities: spatial reasoning

Gemini’s capabilities: executing programming tasks

Gemini’s sophisticated design is based on a rich history of neural network research and leverages Google’s cutting-edge TPU technology for training. Gemini Ultra, in particular, has set new benchmarks in various AI domains, showing notable performance gains in multimodal reasoning tasks.

With its ability to parse through and understand complex data, Gemini offers solutions for real-world applications, especially in education. It can analyze and correct solutions to problems, like in physics, by understanding handwritten notes and providing accurate mathematical typesetting. Such capabilities suggest a future where AI assists in educational settings, offering students and educators advanced tools for learning and problem-solving.

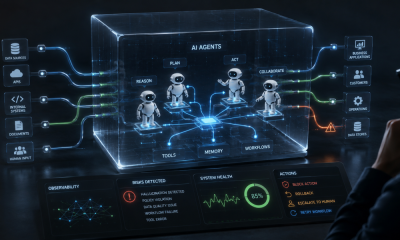

Gemini has been used to create agents like AlphaCode 2, which excels at competitive programming problems. This showcases Gemini’s potential to act as a generalist AI, capable of handling complex, multi-step problems.

Gemini Nano brings the power of AI to everyday devices, maintaining impressive abilities in tasks like summarization and reading comprehension, as well as coding and STEM-related challenges. These smaller models are fine-tuned to offer high-quality AI functionalities on lower-memory devices, making advanced AI more accessible than ever.

The development of Gemini involved innovations in training algorithms and infrastructure, using Google’s latest TPUs. This allowed for efficient scaling and robust training processes, ensuring that even the smallest models deliver exceptional performance.

The training dataset for Gemini is as diverse as its capabilities, including web documents, books, code, images, audio, and videos. This multimodal and multilingual dataset ensures that Gemini models can understand and process a wide variety of content types effectively.

Gemini and GPT-4

Despite the emergence of other models, the question on everyone’s mind is how Google’s Gemini stacks up against OpenAI’s GPT-4, the industry’s benchmark for new LLMs. Google’s data suggest that while GPT-4 may excel in commonsense reasoning tasks, Gemini Ultra has the upper hand in almost every other area.

Google’s published benchmark results show the impressive performance of Gemini across a variety of tasks. Notably, Gemini Ultra achieves 90.04% accuracy on the MMLU benchmark, which tests understanding through multiple-choice questions across 57 subjects.

On GSM8K, which assesses grade-school math questions, Gemini Ultra scores 94.4%, showcasing its advanced arithmetic processing skills. In coding benchmarks, Gemini Ultra attains a score of 74.4% on HumanEval for Python code generation, indicating strong programming language comprehension.
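
HumanEval results are conventionally reported as pass@k: the probability that at least one of k sampled solutions passes the unit tests for a problem. The sketch below implements the standard unbiased estimator for a single problem. The source does not say which k or sampling setup lies behind the 74.4% figure, so the values of `n`, `c`, and `k` here are purely illustrative.

```python
from math import comb


def pass_at_k(n, c, k):
    """Estimate pass@k from n generated samples, of which c passed the tests."""
    if n - c < k:
        return 1.0  # every possible draw of k samples contains a passing one
    # 1 minus the probability that all k drawn samples are failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Illustrative numbers only: 10 samples for one problem, 3 of them correct.
print(pass_at_k(n=10, c=3, k=1))
print(pass_at_k(n=10, c=3, k=5))
```

The benchmark score is the average of this per-problem value over all problems in the set.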

The DROP benchmark, which tests reading comprehension, sees Gemini Ultra again leading with an 82.4% score. Meanwhile, in a common-sense reasoning test, HellaSwag, Gemini Ultra performs admirably, though it does not surpass the extremely high benchmark set by GPT-4.

Conclusion

Gemini’s unique architecture, powered by Google’s cutting-edge technology, positions it as a formidable player in the AI arena, challenging existing benchmarks set by models like GPT-4. Its versions—Ultra, Pro, and Nano—each cater to specific needs, from complex reasoning tasks to efficient on-device applications, showcasing Google’s commitment to making advanced AI accessible across various platforms and devices.

The integration of Gemini into Google’s ecosystem, from Bard to Vertex AI on Google Cloud, highlights its potential to enhance user experiences across a spectrum of services. It promises not only to refine existing applications but also to open new avenues for AI-driven solutions, whether in personalized assistance, creative endeavors, or business analytics.

As we look ahead, the continuous advancements in AI models like Gemini underscore the importance of ongoing research and development. The challenges of training such sophisticated models and ensuring their ethical and responsible use remain at the forefront of discussion.