Anderson's Angle

Bringing Visual Analogies to AI

Current AI models fail to recognize ‘relational’ image similarities, such as how the Earth’s layers resemble those of a peach, missing a key aspect of how humans perceive images.

Though many computer vision models can compare images and find similarities between them, the current generation of comparative systems has little or no imaginative capacity. Consider some of the lyrics from the classic 1960s song, Windmills of Your Mind:

Like a carousel that’s turning, running rings around the moon

Like a clock whose hands are sweeping past the minutes of its face

And the world is like an apple whirling silently in space

Comparisons of this kind belong to a domain of poetic allusion whose meaning for humans goes far beyond artistic expression, because it is bound up with how we develop our perceptual systems. As we build up our ‘object’ domain, we develop a capacity for visual similarity, so that, for example, cross-sections of a peach and of the planet Earth, or fractal recursions such as coffee spirals and galaxy branches, register with us as analogous.

In this way we can deduce connections between apparently unconnected objects and types of objects, and infer systems (such as gravity, momentum and surface cohesion) that can apply to a variety of domains at a variety of scales.

Seeing Things

Even the latest image comparison AI systems, such as Learned Perceptual Image Patch Similarity (LPIPS), which is calibrated on human perceptual judgments, and the self-supervised DINO, perform only literal surface comparisons.

Their capacity to find faces where none exist – i.e., pareidolia – does not represent the kind of visual similarity mechanisms that humans develop, but rather occurs because face-seeking algorithms utilize low-level face structure features that sometimes accord with random objects:

Examples of false positives for face recognition in the “Faces with Things” dataset. Source

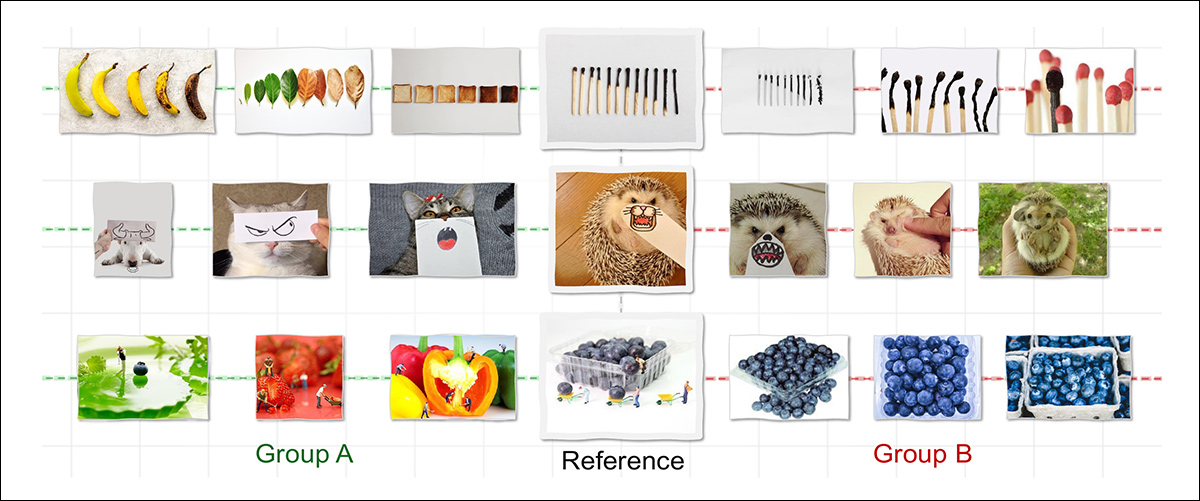

To determine whether machines can really develop our imaginative capacity to recognize visual similarity across domains, researchers in the US have conducted a study around Relational Visual Similarity, curating a new dataset, and training on it, with the aim of forcing a model to link different objects that are nonetheless bonded by an abstract relationship:

Most AI models only recognize similarity when images share surface traits such as shape or color, which is why they would link only Group B (above) to the reference. Humans, by contrast, also see Group A as similar – not because the images look alike, but because they follow the same underlying logic, such as showing a transformation over time. The new work attempts to reproduce this kind of structural or relational similarity, aiming to bring machine perception closer to human reasoning. Source: https://arxiv.org/pdf/2512.07833

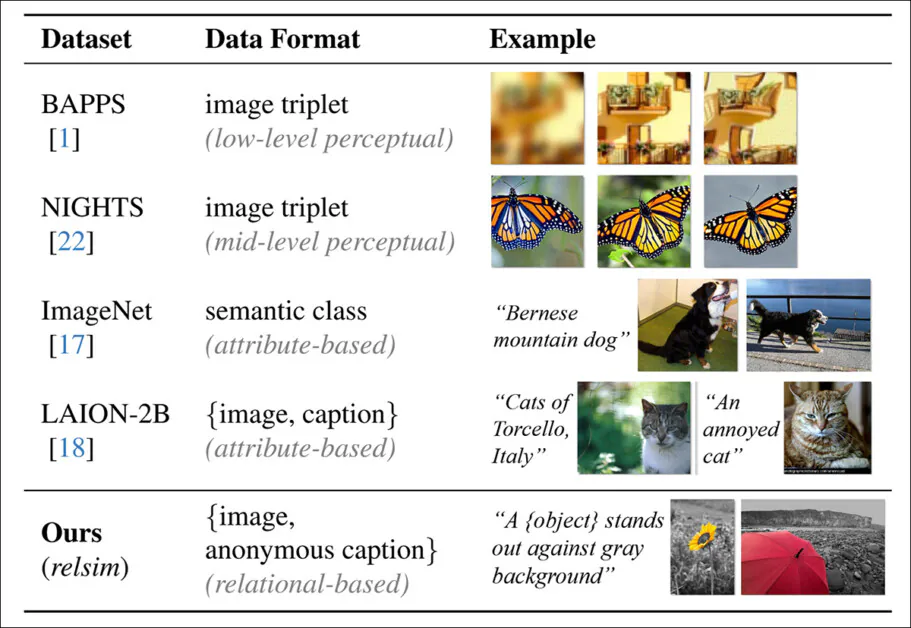
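To make the distinction concrete, here is a toy sketch in Python. It is not the authors' method: the feature vectors, the axis names and the `IMAGES` table are all hand-written assumptions, chosen only to show how the same reference image can have a different nearest neighbor depending on whether surface attributes or underlying relations are being compared.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical, hand-written features (not produced by any real model).
# "attribute" axes: [roundness, orange hue, blue hue, fuzzy texture]
# "relation" axes:  [concentric layers, change over time, part-whole]
IMAGES = {
    "peach_cross_section": {
        "attribute": [0.9, 0.9, 0.0, 0.8],
        "relation": [1.0, 0.0, 0.2],
    },
    "earth_cross_section": {
        "attribute": [0.9, 0.3, 0.8, 0.0],
        "relation": [1.0, 0.0, 0.2],
    },
    "whole_orange": {
        "attribute": [1.0, 1.0, 0.0, 0.3],
        "relation": [0.0, 0.0, 0.1],
    },
}


def most_similar(reference, kind):
    """Return the other image whose `kind` vector is closest to the reference."""
    ref = IMAGES[reference][kind]
    candidates = (name for name in IMAGES if name != reference)
    return max(candidates, key=lambda name: cosine(ref, IMAGES[name][kind]))


print(most_similar("peach_cross_section", "attribute"))  # whole_orange
print(most_similar("peach_cross_section", "relation"))   # earth_cross_section
```

On surface attributes the peach sits closest to the orange; on relational structure it sits closest to the Earth, which is the kind of match a purely attribute-driven metric would miss.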

The captioning system developed for the dataset facilitates unusually abstract annotations, designed to force AI systems to focus on base characteristics rather than specific local details:

The predicted ‘anonymous’ captions that contribute to the authors’ ‘relsim’ metric.
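The intuition behind an ‘anonymous’ caption can be shown with a few lines of Python. This is only an illustration of the idea, under loud assumptions: the `anonymize` helper, the `{Subject}` placeholder and the two example captions are hypothetical, and simple string substitution stands in for whatever captioning model the authors actually use.

```python
import re


def anonymize(caption, entities, placeholder="{Subject}"):
    """Replace each object-specific phrase with a generic placeholder.

    `entities` is a hand-supplied list of phrases to hide; a real system
    would have to work these out from the image itself.
    """
    # Replace longer phrases first so that a short phrase cannot clip a longer one.
    for entity in sorted(entities, key=len, reverse=True):
        pattern = rf"\b{re.escape(entity)}\b"
        caption = re.sub(pattern, placeholder, caption, flags=re.IGNORECASE)
    return caption


# Hypothetical captions for two images that look nothing alike.
peach = anonymize(
    "Cross-section of a peach showing its concentric layers", ["a peach"]
)
earth = anonymize(
    "Cross-section of the Earth showing its concentric layers", ["the Earth"]
)

print(peach)
print(earth)
print(peach == earth)
```

Once the object names are hidden, the two descriptions collapse into the same sentence, so whatever similarity remains has to come from the shared structure rather than from local detail.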

The curated collection and its uncommon captioning style fuel the authors’ newly proposed metric, relsim, which the authors have built by fine-tuning a vision-language model (VLM).

A comparison between the captioning style of typical datasets, which focuses on attribute similarity, and the relsim approach (bottom row), which emphasizes relational similarity.

The new approach draws on methodologies from cognitive science, in particular Dedre Gentner’s Structure-Mapping theory (a study of analogy) and Amos Tversky’s definition of relational similarity and attribute similarity.

From the associated project website, an example of relational similarity. Source