Anderson's Angle

AI

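If 2022 was the year generative AI captured the public's imagination, 2025 looks set to be the year of the new generative video frameworks coming out of China. Tencent's Hunyuan Video has made a major impact on the hobbyist AI community with its open-source release of a full-world video diffusion model that users can tailor to their needs.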

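Close on its heels is Alibaba's more recent Wan 2.1, one of the most powerful image-to-video FOSS solutions of this period, which now supports customization through Wan LoRAs.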

Besides the recent human-centric foundation model SkyReels, at the time of writing we also await the release of Alibaba's comprehensive VACE video creation and editing suite:

Alibaba's VACE video creation and editing suite. Source: https://ali-vilab.github.io/VACE-Page/

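The generative video AI research scene is no less explosive: a single day's submissions to Arxiv's Computer Vision section recently came to nearly 350 entries.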

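In the years since Stable Diffusion launched in 2022, and the subsequent arrival of the Dreambooth and LoRA customization methods, the field saw few further major developments until the last few weeks, when new releases began arriving at a breakneck pace.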

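Video diffusion models such as Hunyuan and Wan 2.1 have at last largely solved the long-standing problem of temporal consistency in the generation of humans, and to a great extent also of environments and objects.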

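VFX studios are likely already working to adapt these models to immediate challenges such as face-swapping, despite the current lack of ControlNet-style ancillary mechanisms for these systems.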

Of the problems that remain, however, one is far from insignificant, as this tumbling rock shows:

Wan 2.1's output for a prompt describing a small rock tumbling down a steep hillside. Source: https://videophy2.github.io/

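All currently available text-to-video and image-to-video systems have a tendency to produce physics bloopers such as the one above, where the rock rolls uphill instead of down.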

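One theory as to why this happens, proposed in a recent collaboration between Alibaba and UAE-based researchers, is that models in effect always train on single images, even when they are training on videos (which are written out to single-frame sequences for training purposes); as a result, they may not learn the correct temporal order of 'before' and 'after' states.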

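A more likely explanation, however, is that the models may have been trained with data augmentation routines that expose each source training clip to the model both forwards and backwards, effectively doubling the training data.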
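As a minimal illustration of the augmentation described above, the sketch below doubles a toy dataset by appending a time-reversed copy of every clip. It assumes clips are plain lists of frames, and the function name `augment_with_reversal` is hypothetical; the actual training pipelines of these models are not documented here.

```python
from typing import List, Sequence, TypeVar

Frame = TypeVar("Frame")


def augment_with_reversal(clips: Sequence[Sequence[Frame]]) -> List[List[Frame]]:
    """Return every clip forwards, followed by its time-reversed copy.

    Hypothetical sketch: the dataset doubles in size, but physically
    irreversible motion (a rock rolling downhill) gains a reversed twin
    in which the rock rolls uphill.
    """
    augmented: List[List[Frame]] = []
    for clip in clips:
        augmented.append(list(clip))            # original temporal order
        augmented.append(list(reversed(clip)))  # same frames, played backwards
    return augmented


# Toy "clip": the rock's height on the hillside at each frame.
rock_falling = [10, 7, 4, 1]
print(augment_with_reversal([rock_falling]))  # [[10, 7, 4, 1], [1, 4, 7, 10]]
```

Nothing in this naive routine checks whether the reversed clip is physically plausible, which is exactly how an uphill-rolling rock could enter the training data.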

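It has long been known that this should not be done arbitrarily, since some movements remain plausible in reverse but many do not. A 2019 study from the UK's University of Bristol sought to develop a method that could distinguish equivariant, invariant and irreversible source video clips co-existing in a single dataset, so that unsuitable clips could be filtered out of data augmentation routines.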

Examples of three types of movement, only one of which is freely reversible while maintaining plausible physical dynamics. Source: https://arxiv.org/abs/1909.09422
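The filtering idea can be sketched as follows, assuming each clip already carries one of the three labels. The behaviour of each label here is an assumption for illustration, not the Bristol authors' code: an invariant clip's reversal keeps its action label, an equivariant clip's reversal is relabelled with a counterpart action (for example 'open' becoming 'close'), and an irreversible clip is never reversed. The names `Reversal`, `Clip`, `COUNTERPART` and `reversal_augment` are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Reversal(Enum):
    INVARIANT = "invariant"        # reversed clip still shows the same action
    EQUIVARIANT = "equivariant"    # reversed clip shows a different, known action
    IRREVERSIBLE = "irreversible"  # reversed clip would break physics


@dataclass(frozen=True)
class Clip:
    frames: tuple
    action: str
    reversal: Reversal


# Hypothetical counterpart actions for equivariant clips.
COUNTERPART: Dict[str, str] = {"open": "close", "close": "open"}


def reversal_augment(clips: List[Clip]) -> List[Clip]:
    """Add reversed copies only where the reversal stays physically plausible."""
    out: List[Clip] = []
    for clip in clips:
        out.append(clip)
        backwards = tuple(reversed(clip.frames))
        if clip.reversal is Reversal.INVARIANT:
            out.append(Clip(backwards, clip.action, clip.reversal))
        elif clip.reversal is Reversal.EQUIVARIANT and clip.action in COUNTERPART:
            out.append(Clip(backwards, COUNTERPART[clip.action], clip.reversal))
        # IRREVERSIBLE clips (and equivariant ones without a known
        # counterpart) are kept in their original direction only.
    return out


data = [
    Clip((0, 1, 2), "open", Reversal.EQUIVARIANT),
    Clip((10, 7, 4, 1), "rock rolls downhill", Reversal.IRREVERSIBLE),
]
for c in reversal_augment(data):
    print(c.action, c.frames)
```

With this toy data the output contains the original 'open' clip, a reversed copy relabelled 'close', and the rock clip in its forward direction only.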


We don't have any evidence that systems such as Hunyuan Video and Wan 2.1 allowed arbitrarily 'reversed' clips to be exposed to the model during training.

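Yet the only reasonable alternative, in the face of so many reports (and my own practical experience), would seem to be that the hyperscale datasets powering these models contain clips that actually feature movements occurring in reverse.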

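The rock in the example video embedded above was generated using Wan 2.1, and features in a new study that examines how well video diffusion models handle physics.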

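In the tests for this project, Wan 2.1 achieved the best score of any system evaluated, ahead even of OpenAI's Sora; yet its results still suggest that physical plausibility may be the next major stumbling block for video AI: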

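The authors of the new work have developed a benchmarking system, now in its second iteration, called VideoPhy, with the code available at GitHub.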

Scores obtained by leading open and closed-source systems, with the output of the frameworks evaluated by human annotators. Source: https://arxiv.org/pdf/2503.06800

It is worth taking a general look at how VideoPhy-2 approaches the action-centric evaluation of physical commonsense in video generation.

The study, conducted by researchers from UCLA and Google Research, is titled VideoPhy-2: A Challenging Action-Centric Physical Commonsense Evaluation in Video Generation.

An accompanying project site is available, along with code at GitHub and a dataset viewer at Hugging Face.

Even OpenAI's Sora produces clips that fail to respect basic physical commonsense.

The authors describe VideoPhy-2 as a 'challenging commonsense evaluation dataset for real-world actions'.

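The collection covers a range of physical activities and object interactions. A large language model (LLM) is used to generate prompts from these seed actions, and the prompts are then used to synthesize videos with the frameworks under test.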

Above: A text prompt is generated from an action using an LLM and used to create a video with a text-to-video generator. A vision-language model captions the video, identifying possible physical rules at play. Below: Human annotators evaluate the video’s realism, confirm rule violations, add missing rules, and check whether the video matches the original prompt.
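The automated half of the pipeline in the figure above can be sketched as a chain of pluggable stages. This is a minimal sketch under stated assumptions: the stage functions are hypothetical stand-ins (stubbed with lambdas in the usage lines) for the LLM, the text-to-video generator and the vision-language model, and the human annotation step is left outside the code.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Sample:
    action: str
    prompt: str = ""
    video: str = ""  # placeholder for a generated video handle
    candidate_rules: List[str] = field(default_factory=list)


def run_pipeline(
    actions: List[str],
    write_prompt: Callable[[str], str],         # LLM: action -> text prompt
    generate_video: Callable[[str], str],       # text-to-video model: prompt -> video
    propose_rules: Callable[[str], List[str]],  # VLM: video -> candidate physical rules
) -> List[Sample]:
    """Run each action through prompt writing, generation and rule proposal."""
    samples: List[Sample] = []
    for action in actions:
        s = Sample(action=action)
        s.prompt = write_prompt(action)
        s.video = generate_video(s.prompt)
        s.candidate_rules = propose_rules(s.video)
        samples.append(s)
    # In the study, human annotators would now review these samples.
    return samples


# Hypothetical stubs standing in for real models.
samples = run_pipeline(
    ["playing tennis"],
    write_prompt=lambda a: f"A person is {a} on an outdoor court",
    generate_video=lambda p: f"video<{p}>",
    propose_rules=lambda v: ["The ball follows a parabolic trajectory under gravity"],
)
print(samples[0].prompt)  # A person is playing tennis on an outdoor court
```

Passing the stages in as callables keeps the sketch independent of any particular model API, which matches the fact that the study swaps several generators into the same pipeline.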

The researchers began by sourcing actions from the Kinetics, UCF-101 and SSv2 datasets, focusing on activities involving sports, object interactions and real-world physics.

STEM-trained student annotators (with a minimum undergraduate qualification) reviewed and filtered the list, selecting actions that tested principles such as gravity, momentum and elasticity.
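In the study this selection was done by human annotators; as a rough stand-in, the sketch below assumes each candidate action has already been tagged with the physical principles it exercises, and keeps only actions that touch at least one target principle. The tags, the example actions and the name `select_actions` are hypothetical.

```python
from typing import Dict, List, Set

# Principles named in the study's filtering criteria.
TARGET_PRINCIPLES: Set[str] = {"gravity", "momentum", "elasticity"}


def select_actions(candidates: Dict[str, Set[str]]) -> List[str]:
    """Keep actions whose (hypothetical) principle tags overlap the targets."""
    return sorted(a for a, tags in candidates.items() if tags & TARGET_PRINCIPLES)


candidates = {
    "discus throw": {"momentum", "gravity"},
    "bouncing a ball": {"elasticity", "gravity"},
    "typing": set(),  # low-motion task with no target principle
}
print(select_actions(candidates))  # ['bouncing a ball', 'discus throw']
```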

The researchers then used Gemini-2.0-Flash-Exp to generate prompts for each action in the dataset.

Samples from the distilled actions.

Sample prompts from VideoPhy-2, categorized by physical activities or object interactions. Each prompt is paired with its corresponding action and the relevant physical principle it tests.

Examples from the upsampled captions.

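The researchers then defined clear criteria for evaluating the videos, the main goal being to assess how well each video matched its input prompt and followed basic physical principles.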

Instead of simply ranking videos by preference, they used rating-based feedback to capture specific successes and failures.

Human annotators scored the videos on a five-point scale, allowing for more detailed judgments.
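To show how five-point ratings on the two criteria (prompt adherence and physical plausibility) might be aggregated, here is a minimal sketch. The pass threshold of 4 and the 'joint pass' metric, meaning a video counts only if it rates well on both axes at once, are assumptions for illustration; the function name `summarize` is hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple


def summarize(ratings: List[Tuple[int, int]], threshold: int = 4) -> Dict[str, float]:
    """ratings: (semantic_adherence, physical_commonsense) pairs on a 1-5 scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    for sa, pc in ratings:
        if not (1 <= sa <= 5 and 1 <= pc <= 5):
            raise ValueError("ratings must lie on a five-point (1-5) scale")
    n = len(ratings)
    return {
        "mean_sa": mean(sa for sa, _ in ratings),
        "mean_pc": mean(pc for _, pc in ratings),
        # Share of videos judged good on BOTH axes at the same time.
        "joint_pass": sum(sa >= threshold and pc >= threshold for sa, pc in ratings) / n,
    }


print(summarize([(5, 4), (4, 2), (3, 5), (5, 5)]))
```

Requiring both scores to clear the threshold means a visually faithful but physically implausible video (such as the uphill rock) cannot pass on prompt adherence alone.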

The interface presented to the AMT annotators.

With these tools in place, the authors tested a number of generative video systems: CogVideoX-5B, VideoCrafter2, HunyuanVideo-13B, Cosmos-Diffusion, Wan2.1-14B, OpenAI Sora and Luma Ray.

The models were prompted with the upsampled captions where possible.

The generated videos were kept to less than 6 seconds.
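Keeping a clip strictly under 6 seconds is a frame-count calculation: duration is frames / fps, so the largest admissible count is ceil(6 × fps) − 1. The sketch below works this out for a few frame rates; the rates themselves are assumptions, since the models' actual output settings are not given in this section, and the helper names are hypothetical.

```python
import math


def max_frames_under(fps: float, limit_s: float = 6.0) -> int:
    """Largest frame count whose duration (frames / fps) is strictly below limit_s."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    # ceil(...) - 1 handles both integer products (24 fps -> 144 - 1)
    # and fractional ones (23.976 fps -> ceil(143.856) - 1 = 143).
    return math.ceil(limit_s * fps) - 1


def duration_s(frames: int, fps: float) -> float:
    return frames / fps


for fps in (16, 24, 30):  # hypothetical output frame rates
    n = max_frames_under(fps)
    print(fps, n, round(duration_s(n, fps), 3))
```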

Results from the initial round.


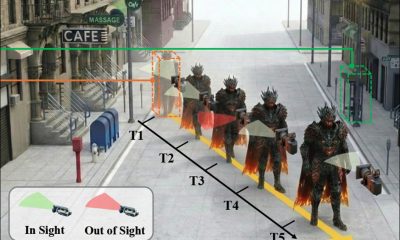

The top row shows videos generated by Wan2.1. (a) In Ray2, the jet-ski on the left lags behind before moving backward. (b) In Hunyuan-13B, the sledgehammer deforms mid-swing, and a broken wooden board appears unexpectedly. (c) In Cosmos-7B, the javelin expels sand before making contact with the ground.


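Further examples from the project site show generated videos diverging from their prompts in similarly implausible ways.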


First published March 13, 2025
